Report Summary

Summary of “Aptos Delphi Report V2”

Title: Aptos: Building Better Plumbing for the Internet Financial System

Theme: Aptos is positioned as a high-performance, next-generation Layer 1 (L1) blockchain aimed at addressing the blockchain trilemma—scalability, security, and decentralization—while delivering a superior developer and user experience. It merges the benefits of both monolithic and modular architectures.

Key Takeaways

1. Architectural Advantage: Monolithic + Modular Hybrid

- Pipelined Architecture: Decouples consensus, execution, storage, and certification but runs them efficiently within a single stack.

- False Dichotomy Solved: Aptos breaks the monolithic vs. modular binary by offering the performance of monoliths and the scalability of modular systems without fragmenting user or developer experience.

2. High Performance + Parallelism

- BlockSTM (Execution Layer): Enables automatic parallel processing with no manual conflict management. Scales up to 170K TPS on 32-core machines, and BlockSTM v2 will support 256-core machines.

- Zaptos: Optimizes latency by overlapping execution, commitment, and certification with consensus, achieving sub-second finality even under high load.

- Raptr (Upcoming): DAG + Leader-based hybrid consensus mechanism for optimal latency.

3. Scalability on Every Layer

- Consensus (Quorum Store): Based on Narwhal, it decouples transaction dissemination and ordering, enabling a 10x boost in throughput.

- Execution (Shardines): Uses real-time partitioning and pipelining to scale execution across 30+ shards, targeting 1M+ TPS.

- State Scalability: Splits global state across multiple RocksDB instances using Jellyfish Merkle Trees (JMT), enabling parallel and sharded state updates.

4. Developer-Centric Design

- Move Language: Safe by design. Prevents reentrancy and double-spending, and ensures asset-level correctness by treating digital assets as first-class resources.

- BlockSTM: Removes the burden of declaring read/write sets, enabling faster iteration and lower audit costs.

- Aptos Build & Tooling:

  - Aptos SDKs, Explorer, NFT Studio, Petra Wallet

  - Keyless login, X-chain accounts, and account abstraction out of the box.

5. UX-First Chain for Mainstream Adoption

- Keyless Onboarding: Google login support, with no seed phrase required.

- Account Abstraction: Multisig, recovery options, session keys, automation.

- Pre-execution Simulation: Prevents phishing attacks by letting users see human-readable transaction outcomes before signing.

- Scheduled Transactions: Allows automation without off-chain keepers.

6. Security + Compliance Built-in

- Formal Verification via Move Prover for provable contract behavior, ideal for regulated, high-value applications.

- Ledger Certification: Split into fast (diff-based) and slow (full snapshot) layers for trustless state proofs without sacrificing performance.

7. Future Roadmap & Vision

- Upcoming Tech Upgrades:

  - BlockSTM v2, Raptr, Shardines, Tiered Storage, Execution Pool

- Aims to become the foundational layer for internet-scale capital markets.

- Positioned to win based not just on speed, but on fairness, composability, and UX.

Conclusion

Aptos is more than a fast chain—it’s a composable, secure, developer-first platform that scales horizontally and delivers a Web2-quality user experience. By removing complexity at both infrastructure and application layers, it positions itself as the blockchain best suited to host next-gen DeFi, institutional products, AI agents, and real-world use cases.

If it maintains execution on its roadmap, Aptos has a strong shot at becoming a core financial infrastructure layer for the onchain economy.


Modular vs Monolithic: Mutually Exclusive?

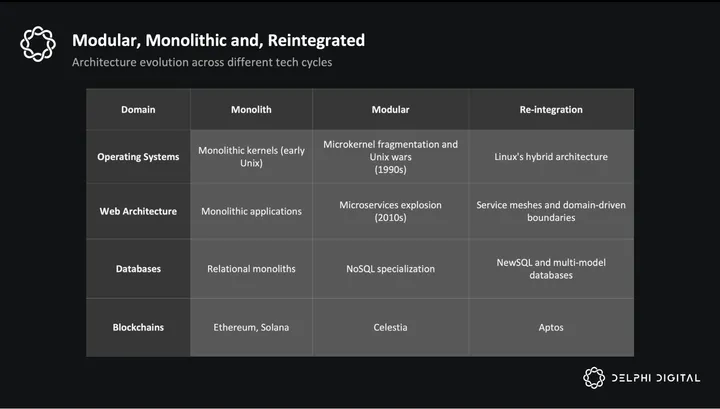

The question of how to scale a blockchain is often framed as a dichotomy: either a monolithic or a modular architecture. This tension is not new in systems design. Platform architecture has tended to swing from tight integration to modular decomposition, and then back towards reintegration.

Operating Systems (OS) offer a helpful analogy. Early kernels were monolithic – fast, but hard to extend. Microkernels brought clean separation, but added overhead. Eventually, systems like Linux found a middle ground: modular subsystems within a monolithic kernel, which balanced both architectures.

In the blockchain world, Solana and Celestia represent opposite poles of this dynamic. Solana has proven it can deliver strong performance using an integrated approach. The benefits, which come in the form of being a unified user hub and having composable liquidity, are massive. At the same time, there has also been a trend of successful applications (dYdX, Aevo) leaving their base chains in the pursuit of customization and greater revenues.

These cases highlight the modular thesis: once an app reaches scale, tailoring the stack becomes attractive. Still, each model has tradeoffs – monolithic chains risk centralization as hardware demands grow, while modular systems introduce fragmentation and coordination overhead.

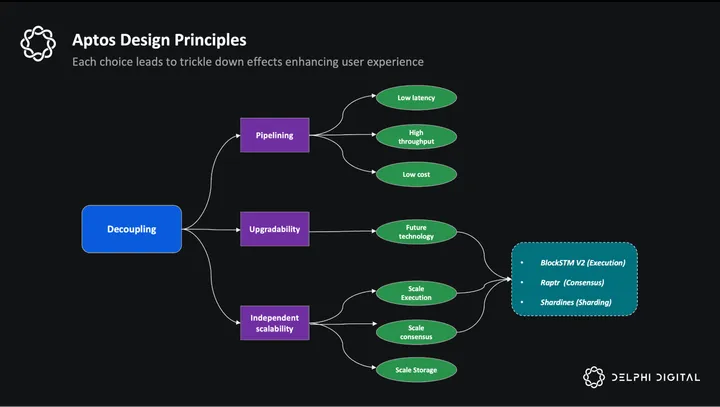

Aptos offers a unique solution. It decouples the key components of a blockchain system: dissemination of data, consensus, execution, and storage. But it keeps all of these functions within a single chain. Pipelining allows the system to process these stages in parallel while keeping them in the same software stack. This mitigates common issues with modular architectures, like fragmented liquidity and a generally degraded user experience. The result is a system that embraces the merits of both modular and monolithic architectures: composability, seamless UX and DevEx, scalability, and high performance.

The main insight from Aptos’ design is that monolithic and modular might be a false dichotomy. The real challenge is figuring out how to separate parts of the system without fragmenting it, and how to reintegrate the pieces that matter.

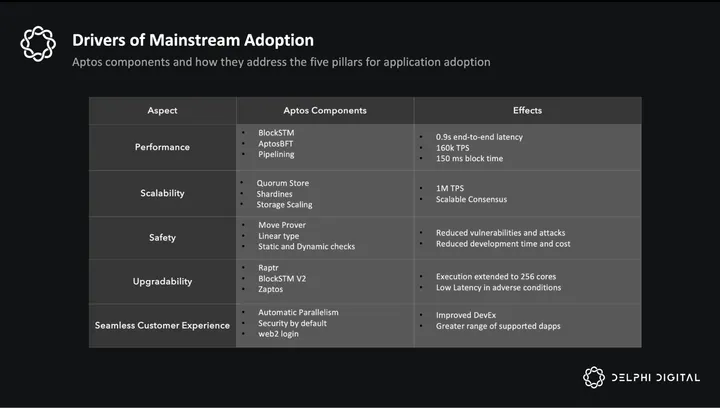

This approach addresses a long-standing debate – the blockchain trilemma of scaling, decentralization, and security. But Aptos goes further – its architecture is grounded in five pillars that address the growing demands of Web3 and lay the foundation for a global trading engine.

- Performance – To address today’s applications and tomorrow’s needs with minimal hiccups.

- Scalability – To support the growth of applications and the platform’s user base.

- Safety – So individuals, investment funds, and institutions can trust onchain applications with their capital.

- Upgradability – To push the limits of performance, scalability, and safety in a relatively seamless way.

- Seamless Customer Experience – Easier DevEx for builders and UX for end users.

Key Drivers of Mainstream Adoption

Blockchains are generally pitted against each other on outputs and raw performance. Transactions per second (TPS), block times, and latency are the usual metrics for comparison. But this line of thinking misses a piece: resource efficiency. A blockchain’s inputs, and how efficiently it uses them, are rarely discussed despite how much they matter to the vision of building a performant chain.

On most chains today, only transaction execution is optimized using a parallel processing engine. The SVM, MoveVM, and Parallel EVM are examples of this. But execution is only one part of the pipeline. Most blockchains still handle consensus, storage, and certification sequentially – one after the other. Each stage also leans on a different part of a validator’s hardware. This means that while one stage is in progress, one component works and the others sit idle. Most of the hardware is underused, and the whole system is inefficient.

Each stage causes a spike in different hardware components:

- Consensus uses network bandwidth

- Execution uses CPU cores

- Storage uses disk I/O

- Certification uses both CPU and disk

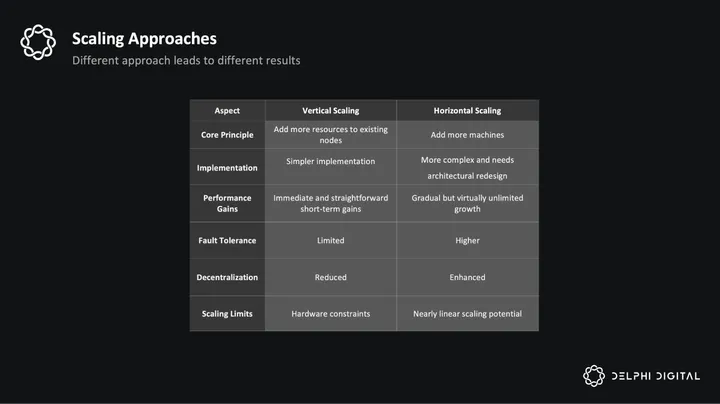

Aptos is hardware efficient, which means it can squeeze more performance out of the same hardware. The reason for this is its parallelization and optimization of all stages, not just execution. The Aptos approach is rooted in the notion that throughput and scalability are limited more by how blockchain components are combined than by raw hardware capabilities. By separating transaction stages and re-organizing them into pipelines, Aptos increases throughput because several blocks are processed in different stages at the same time. Better hardware utilization leads to lower transaction costs and reduced validator requirements.
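To make the utilization argument concrete, here is a minimal timing sketch. The stage durations are hypothetical placeholders rather than Aptos measurements, and the model assumes every block spends the same time in each stage.

```python
from typing import Sequence


def sequential_time(stage_ms: Sequence[float], num_blocks: int) -> float:
    """Every block passes through all stages before the next block starts."""
    return num_blocks * sum(stage_ms)


def pipelined_time(stage_ms: Sequence[float], num_blocks: int) -> float:
    """Stages overlap across blocks; the slowest stage sets the steady-state pace."""
    if num_blocks == 0:
        return 0.0
    return sum(stage_ms) + (num_blocks - 1) * max(stage_ms)


# Hypothetical per-block stage durations in milliseconds:
# consensus, execution, storage, certification.
STAGES_MS = [100.0, 80.0, 60.0, 40.0]

if __name__ == "__main__":
    blocks = 100
    seq = sequential_time(STAGES_MS, blocks)
    pipe = pipelined_time(STAGES_MS, blocks)
    print(f"sequential: {seq:.0f} ms, pipelined: {pipe:.0f} ms, speedup: {seq / pipe:.2f}x")
```

In this toy model the pipelined schedule is paced by the slowest stage alone, which is why shortening that stage matters more than speeding up the others.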

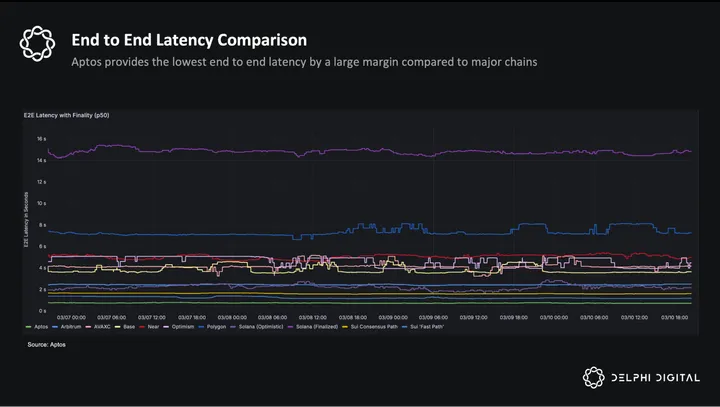

Aptos’s original pipeline design was already fairly efficient, and the recent Zaptos upgrade (unpacked later in this report) pushes performance closer to the theoretical limits of latency, even under high loads. Aptos has recently produced blocks in as little as 130-150 milliseconds, which is perhaps an industry-leading feat.

Decoupling and pipelining also lead to two important long-term benefits beyond immediate speed. First, Aptos can horizontally scale resources exactly at the bottlenecks (like execution or storage) independently. Second, each stage can also be upgraded separately, enabling faster innovation without a complete overhaul of the system.

This leads to an iterative and recursive process of discovering bottlenecks and fixing them. The most important downstream implication of this is that Aptos can avoid technical debt that compounds as the system gets more activity. This is evident in the upcoming Raptr consensus upgrade, which delivers nearly optimal latency even under challenging network conditions. Details on Raptr are not completely public yet, but one of its main feats is combining the high throughput of DAG-based approaches with the low latency of the leader-based approach.

In short, Aptos maximizes resource usage and clearly defines upgrade paths, achieving strong performance today and setting the stage for predictable scaling needs down the line. Such scalability is essential for mainstream adoption, as reliably managing unpredictable growth preserves network effects and keeps users and developers committed to the platform.

Having seen that platform growth depends on more than raw performance, we’ll now examine how Aptos addresses each of these dimensions through its design choices.

Upgradability

Aptos’s ability to upgrade rapidly is a key strength in a field driven by open-source innovation. In open-source tech, competitors can copy or fork code, so lasting dominance depends heavily on how quickly technical improvements are integrated. Typically, disruptive technologies start by introducing something new, at the cost of being worse on the metrics that incumbents optimize for. To catch up, they must improve rapidly. Just as computers rode Moore’s law, disruptive innovations close the gap and then surpass established technologies. But this requires rapid upgradability.

Bitcoin and Ethereum followed this exact pattern. They offered censorship resistance and decentralization, but faced limitations due to their tightly integrated designs. Ethereum’s acceptance of modular scaling outside of the core chain has fragmented ecosystem liquidity and diluted network effects. The problem with a monolithic system (like the Ethereum L1) is that it slows down the upgrade process. This is because the code, modules, and overall system are intertwined with dependencies.

Aptos gets the speed and ease of modular systems in its upgrade process. The upcoming Raptr consensus update and BlockSTM v2 are instances where Aptos independently swaps consensus and parallel processing engines with ease. This is why Aptos has been able to implement more than 100 Aptos Improvement Proposals (AIPs) in the span of two years.

What does this mean for prospective builders? Aptos’ ability to upgrade keeps the platform responsive to new technical breakthroughs and developer needs, with steady improvements that push the envelope on performance and security.

Performance

Performance for blockchains is rooted in two key goals: high throughput and low latency. Blockchains need to both widen the processing pipe to handle more transactions and speed up the flow through it. Think about an eight-lane highway that suddenly narrows to four lanes. Even if the highway has Formula One grade tarmac, this narrowing means all vehicles have to slow down. The only real solution is simple: widen the road at the bottleneck.

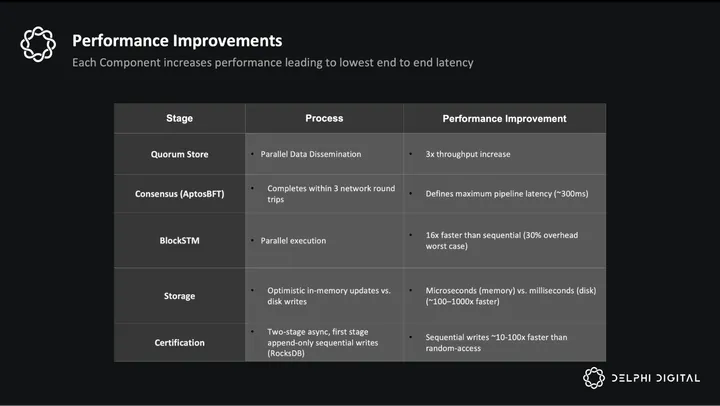

For throughput, all stages need equally wide capacity. Parallel execution can leverage multiple CPU cores, but if validators receive transactions through the network bandwidth of a single validator, that becomes the bottleneck. This also applies to storage, consensus, and certification, where each component must match the capacity of the others.
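A small sketch of the bottleneck rule described above: sustained throughput is capped by the narrowest stage. The stage names follow the text, but the capacities are made-up numbers used only for illustration.

```python
from typing import Dict, Tuple


def pipeline_throughput(capacity_tps: Dict[str, float]) -> Tuple[str, float]:
    """Return the limiting stage and the throughput the whole pipeline can sustain."""
    stage = min(capacity_tps, key=lambda name: capacity_tps[name])
    return stage, capacity_tps[stage]


# Hypothetical per-stage capacities in transactions per second.
CAPACITY_TPS = {
    "dissemination": 20_000.0,   # e.g. limited by one validator's bandwidth
    "consensus": 150_000.0,
    "execution": 170_000.0,
    "storage": 90_000.0,
    "certification": 120_000.0,
}

if __name__ == "__main__":
    stage, tps = pipeline_throughput(CAPACITY_TPS)
    print(f"bottleneck: {stage} at {tps:,.0f} TPS")
```

Raising the execution figure in this example changes nothing; only widening the dissemination stage moves the result, which is the point of the highway analogy.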

From the user’s point of view, what they experience is latency, i.e., how long it takes from submitting a transaction to having it confirmed. Even if each stage is fast, when the stages run one after the other, the latency adds up. Pipelining, which has been effective in speeding up CPUs for decades, addresses this by overlapping stages. But for that to work well, each stage must first be optimized individually.

AptosBFTv4

Aptos uses AptosBFTv4 for its consensus mechanism, which is an iteration of Jolteon. AptosBFTv4 needs fewer communication rounds than most other consensus protocols. As a result, latency is sharply reduced. This is possible because:

- Pipelined consensus reduces the time between consecutive blocks and also processes more blocks at the same time, increasing throughput.

- Consensus on metadata and proofs instead of full transactions. Each round carries less data, so more transactions can go through consensus.

- Reputation-based leader elections prioritize validators based on stake weight and performance for faster block confirmations.

- Direct peer-to-peer communication (PBFT-inspired) reduces delays and overhead by enabling validators to communicate directly without leader relay.

This makes the consensus both wider (because of pipelining and metadata use) and faster (because of fewer rounds).

Block-STM

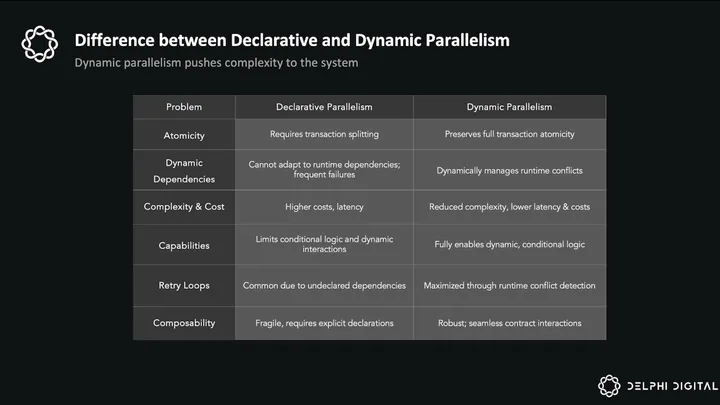

Block-STM is a fundamental shift in how parallel execution is done on blockchains. It makes parallelization completely automatic. Most execution engines like SVM rely on static analysis and up-front declarations to parallelize execution. Block-STM doesn’t need it. It automatically adapts to the runtime behavior of transactions without any additional inputs.

There are a few key innovations that make this possible. Block-STM is inspired by traditional Software Transactional Memory (STM), but has improved memory performance and more efficient conflict management. Counterintuitively, a fixed transaction order and batch-based execution work in its favor, even though these are generally seen as constraints that blockchains impose compared with traditional systems.

Block-STM’s main components are:

- Multi-Version Data Structure: Block-STM uses a data structure that stores multiple versions of a data blob for each memory location. When different transactions update the same data, they each write to their own version. This allows them to run in parallel without blocking each other. Later, the system checks for conflicts and, if needed, re-executes only the transactions that didn’t align with the expected order. These are caught during the validation process.

- Collaborative Scheduler: All of the tasks, including execution and validation, are organized inside a shared pool. Each CPU thread selects tasks based on their priority, giving preference to earlier (lower-numbered) transactions to maximize parallel processing.

- Dynamic Dependency Estimation: When a transaction fails its validation, its writes are marked as “estimations”. This alerts later transactions that its data might change. These subsequent transactions are then paused until re-validation completes, preventing a chain reaction of failures.
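The optimistic execute-then-validate idea behind these components can be sketched in a heavily simplified, single-threaded form. This is not the Block-STM implementation: it omits the collaborative scheduler and the estimation markers, it mimics racing threads by running transactions in reverse order, and all names (`MultiVersionStore`, `run_block`, `transfer`) are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

State = Dict[str, int]
ReadFn = Callable[[str], int]
Txn = Callable[[ReadFn], State]  # a transaction reads via ReadFn and returns its writes
ReadRecord = Tuple[str, Optional[int], int]  # (key, writer index or None, value seen)


class MultiVersionStore:
    """Holds one value per (key, transaction index) on top of the pre-block state."""

    def __init__(self, base: State) -> None:
        self.base = base
        self.versions: Dict[str, Dict[int, int]] = {}

    def read(self, key: str, txn_idx: int) -> Tuple[Optional[int], int]:
        """Resolve a read for txn_idx: the closest earlier writer wins, else the base state."""
        earlier = [i for i in self.versions.get(key, {}) if i < txn_idx]
        if not earlier:
            return None, self.base.get(key, 0)
        writer = max(earlier)
        return writer, self.versions[key][writer]

    def replace_writes(self, txn_idx: int, writes: State) -> None:
        """Discard whatever txn_idx wrote before, then record its new writes."""
        for per_key in self.versions.values():
            per_key.pop(txn_idx, None)
        for key, value in writes.items():
            self.versions.setdefault(key, {})[txn_idx] = value


def execute(store: MultiVersionStore, idx: int, txn: Txn) -> List[ReadRecord]:
    """Run one transaction against the store and return the reads it performed."""
    reads: List[ReadRecord] = []

    def read(key: str) -> int:
        writer, value = store.read(key, idx)
        reads.append((key, writer, value))
        return value

    store.replace_writes(idx, txn(read))
    return reads


def run_block(base: State, txns: List[Txn]) -> Tuple[State, int]:
    """Execute speculatively, validate in block order, re-execute stale transactions."""
    store = MultiVersionStore(base)
    # Speculative phase: reverse order stands in for threads racing ahead of earlier txns.
    read_sets = {i: execute(store, i, txns[i]) for i in reversed(range(len(txns)))}
    re_executions = 0
    for idx in range(len(txns)):
        # A read is stale if it would now resolve to a different writer or value.
        stale = any(store.read(k, idx) != (w, v) for k, w, v in read_sets[idx])
        if stale:
            read_sets[idx] = execute(store, idx, txns[idx])
            re_executions += 1
    final = dict(base)
    for key, per_key in store.versions.items():
        if per_key:
            final[key] = per_key[max(per_key)]  # the highest-index writer decides
    return final, re_executions


def transfer(src: str, dst: str, amount: int) -> Txn:
    """Build a transfer that only succeeds if the source balance is sufficient."""
    def txn(read: ReadFn) -> State:
        src_balance, dst_balance = read(src), read(dst)
        if src_balance < amount:
            return {}
        return {src: src_balance - amount, dst: dst_balance + amount}
    return txn


BASE = {"alice": 100, "bob": 0, "carol": 0, "dave": 50}
BLOCK = [transfer("alice", "bob", 30), transfer("bob", "carol", 20), transfer("dave", "alice", 10)]

if __name__ == "__main__":
    final_state, retries = run_block(BASE, BLOCK)
    print(final_state, "re-executions:", retries)
```

The result matches what running the three transfers one after another would produce, and none of the transfers had to declare which accounts it touches. Validating in block order is what keeps the fixed transaction order intact.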

The combination of these three components makes automatic parallel processing possible with high performance and low overhead. Block-STM achieves up to 17x higher performance compared to sequential execution, with an overhead of 30% in worst-case scenarios. It reaches 170k TPS in Aptos’ benchmarks with 32 threads. Block-STM v2 will scale this capacity to 256 cores, letting a single node handle more throughput. For users, this means near-instant feedback, even at web-scale usage. Swapping, gaming, and other latency-sensitive apps begin to feel indistinguishable from their web2 counterparts.

Storage

Even with Block-STM’s high throughput, overall performance can still get stuck at the slowest part of the pipeline, which is storage. Disk operations are very slow. They take milliseconds, while memory operations happen in microseconds. So if transactions are written to disk too often, execution becomes slow. The CPU threads sit idle even though the execution engine is capable of running faster.

Aptos changes how storage is handled to avoid this inefficiency. Instead of writing each transaction to disk immediately, it first commits those changes in memory. Then, it batches multiple transactions together and writes them to disk in larger chunks. This reduces the number of slow disk operations, which lowers latency.

When new transactions come in, they can read the recent state directly from memory instead of disk. This keeps execution threads active at all times. As a result, the system can fully take advantage of Block-STM’s parallelism without being slowed down by storage.
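The commit-in-memory, flush-in-batches pattern described above can be sketched as follows. The `BatchedStore` class and its fixed batch size are illustrative assumptions; the source does not describe how Aptos sizes or triggers its batches.

```python
from typing import Dict, Optional


class BatchedStore:
    """Commits writes to memory first and flushes them to 'disk' in batches."""

    def __init__(self, batch_size: int) -> None:
        self.batch_size = batch_size
        self.disk: Dict[str, int] = {}    # stands in for slow persistent storage
        self.memory: Dict[str, int] = {}  # committed but not yet flushed
        self.pending_txns = 0
        self.disk_writes = 0              # number of batched disk operations

    def commit(self, writes: Dict[str, int]) -> None:
        """Apply one transaction's writes in memory; flush once the batch is full."""
        self.memory.update(writes)
        self.pending_txns += 1
        if self.pending_txns >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        """Write all buffered changes to disk in a single operation."""
        if self.pending_txns == 0:
            return
        self.disk.update(self.memory)
        self.memory.clear()
        self.pending_txns = 0
        self.disk_writes += 1

    def read(self, key: str) -> Optional[int]:
        """Serve recent state from memory, falling back to disk."""
        if key in self.memory:
            return self.memory[key]
        return self.disk.get(key)


if __name__ == "__main__":
    store = BatchedStore(batch_size=4)
    for i in range(10):
        store.commit({f"account-{i}": i})
    print("disk writes:", store.disk_writes)
    print("latest value served from memory:", store.read("account-9"))
```

Ten commits with a batch size of four cost two disk operations instead of ten, and the most recent writes remain readable from memory before they are flushed.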

Ledger Certification

State certification is slow on most chains because it requires hashing the full global state and building the Merkle trees from scratch. Both of these need random memory or disk access. This slows down block production. It is a major bottleneck in Ethereum, and Solana is exploring a two-stage approach similar to what Aptos already has in production.

Certification is important because it enables trustless state access. Users can independently verify that the state is valid without relying on any intermediaries. But it also introduces latency and slows down the pipeline, leading to a degraded user experience. Therefore, it’s important to balance both trustlessness and the pursuit of low latency.

To solve this, certification on Aptos is split into two stages. The first stage is fast, while the second is slow but comprehensive.

In the first stage, ledger history certification is done on every block. Instead of hashing the entire state, it only hashes the state diffs and appends them to a sequential, append-only tree. This is faster than a Merkle tree, which requires random access. Validators then sign the result and produce a quorum certificate, which allows users and clients to independently reconstruct and verify the latest state changes if they want to.

In the second stage, Aptos creates full-state snapshots at the end of each epoch. These are hashed and stored. Light clients can verify any specific state by combining a snapshot with the diffs that followed it. This avoids rehashing the entire state for every block while still preserving the ability to reconstruct and prove any part of the global state.
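The first, per-block stage can be illustrated with a minimal append-only accumulator. This is a sketch under assumptions: the hashing scheme, the JSON serialization of diffs, and the names `AppendOnlyAccumulator` and `certify_block` are all hypothetical and do not reflect the on-chain format.

```python
import hashlib
import json
from typing import Dict, List, Optional


def _hash(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


class AppendOnlyAccumulator:
    """Append-only Merkle accumulator that keeps only the roots of full subtrees."""

    def __init__(self) -> None:
        # frontier[level] is the root of a full subtree with 2**level leaves, or None.
        self.frontier: List[Optional[bytes]] = []
        self.num_leaves = 0

    def append(self, leaf_data: bytes) -> None:
        """Add one leaf, merging equal-sized subtrees like carries in a binary counter."""
        node = _hash(b"leaf:" + leaf_data)
        level = 0
        while level < len(self.frontier) and self.frontier[level] is not None:
            node = _hash(b"node:" + self.frontier[level] + node)
            self.frontier[level] = None
            level += 1
        if level == len(self.frontier):
            self.frontier.append(node)
        else:
            self.frontier[level] = node
        self.num_leaves += 1

    def root(self) -> bytes:
        """Fold the remaining subtree roots into a single digest."""
        acc: Optional[bytes] = None
        for node in self.frontier:
            if node is not None:
                acc = node if acc is None else _hash(b"node:" + node + acc)
        return acc if acc is not None else _hash(b"empty")


def certify_block(accumulator: AppendOnlyAccumulator, state_diff: Dict[str, int]) -> bytes:
    """Hash only the block's diff, append it, and return the digest validators would sign."""
    accumulator.append(json.dumps(state_diff, sort_keys=True).encode())
    return accumulator.root()


if __name__ == "__main__":
    acc = AppendOnlyAccumulator()
    for diff in ({"alice": 70, "bob": 30}, {"bob": 10, "carol": 20}, {"dave": 40}):
        print(certify_block(acc, diff).hex()[:16])
```

Each append touches only a logarithmic number of nodes on the right edge of the tree and never revisits earlier leaves, which is what makes the write pattern sequential rather than random.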

This separation leads to low-latency and trustless access without compromising performance.

Zaptos

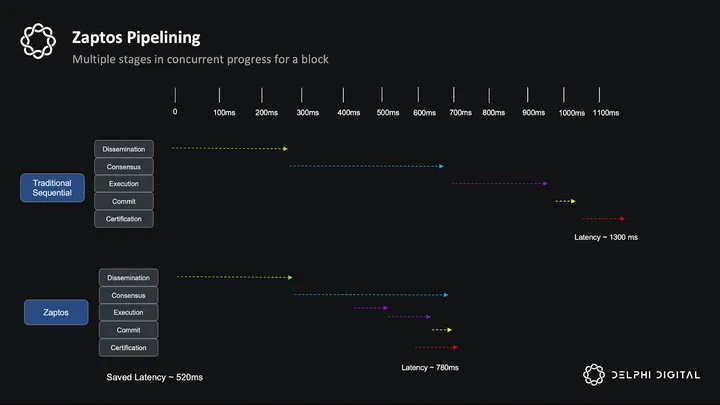

Now that we have seen how each stage of the Aptos pipeline – execution, storage, certification, and consensus – is individually optimized, we can explore how all of this is packaged together. When each of these stages is fast but is run one after another, it still builds up latency.

To bring down latency, Zaptos has been proposed as an improvement over Aptos’ previous pipelining method. The core idea is also used in traditional computing: when multiple stages have to run, overlapping them improves performance. If the execution, commitment, and certification stages run in parallel with consensus, overall latency drops. The goal is to make consensus latency the upper bound so that by the time a block is finalized, everything else is already done in parallel.

Zaptos introduces three more optimizations:

- Optimistic Execution: As soon as a block is disseminated to all validators, execution begins in parallel with consensus. This avoids idle CPU time while consensus is still in progress.

- Optimistic Commit: After execution finishes, state is written to storage immediately, even before certification is complete. If consensus fails to finalize the block, then this write is rolled back.

- Optimistic Certification: Certification normally starts after consensus. Zaptos moves it earlier, overlapping it with the last round of consensus. This keeps certification within the same time window.

The result of this is that execution, commitment, and certification happen during consensus and not after. These stages are “shadowed” by consensus rounds. So instead of stacking latency from each stage, total latency now depends only on how long consensus takes.

This leads to big improvements in end-to-end latency. On 64-core machines at 20,000 TPS, Zaptos’ latency is 0.78s, compared with a baseline of 1.32s for Aptos. This is a reduction of roughly 40%, leading to theoretical sub-second confirmations even at peak throughput. This means developers can build high-frequency, latency-sensitive applications – like real-time trading platforms, gaming engines, and payment systems – with confidence that user actions will be confirmed almost instantly, even under heavy network load.
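A toy latency model helps show why shadowing stages behind consensus pays off. The two reported figures (1.32s and 0.78s) come from the text above; the per-stage durations and the `max`-based overlap model are simplifying assumptions, not the Zaptos protocol itself.

```python
def stacked_latency(consensus_s: float, execution_s: float,
                    commit_s: float, certification_s: float) -> float:
    """Baseline: each stage waits for the previous one, so latencies add up."""
    return consensus_s + execution_s + commit_s + certification_s


def shadowed_latency(consensus_s: float, execution_s: float,
                     commit_s: float, certification_s: float) -> float:
    """Toy overlap model: the other stages run while consensus is in flight."""
    return max(consensus_s, execution_s + commit_s + certification_s)


def relative_reduction(before_s: float, after_s: float) -> float:
    """Fraction of latency removed when going from before_s to after_s."""
    return (before_s - after_s) / before_s


if __name__ == "__main__":
    # Hypothetical stage durations in seconds, for illustration only.
    stages = dict(consensus_s=0.6, execution_s=0.2, commit_s=0.1, certification_s=0.1)
    print(f"stacked:  {stacked_latency(**stages):.2f}s")
    print(f"shadowed: {shadowed_latency(**stages):.2f}s")
    # Reduction implied by the figures reported in the text.
    print(f"reported reduction: {relative_reduction(1.32, 0.78):.1%}")
```

With the reported figures the reduction works out to roughly 41%, in line with the 40% quoted above. In the toy model, latency stops improving once the overlapped stages together take longer than consensus, which is the situation the next paragraph describes for large blocks.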

As block sizes grow, execution becomes a bottleneck. But because it’s already overlapped with consensus, Zaptos still saves time. Under ideal conditions, when execution and commitment each take less than one network round, Zaptos reaches the theoretical latency floor.

Apart from this, two major components are expected to undergo upgrades in the near term. The consensus mechanism will transition to Raptr, which targets near-optimal latency under Byzantine and real-world conditions. Block-STM will evolve to v2, which will scale to 256-core machines. Aptos will later integrate Shardines, which would scale its execution capacity to 1M TPS.

Scalability

Most chains perform well early in their lifetimes when activity is not bursting. But almost every chain, from Bitcoin and Ethereum to Solana, has struggled when it mattered most – during periods of sudden virality and large user influxes. During these periods, latency increases massively, throughput stalls, and transactions become hard to land.

It’s in situations like this that a blockchain has the chance to show users and builders that it can handle surges of demand. But it’s a tall task because most chains do not scale elastically with demand. As a result, opportunities to build on momentum from high-demand scenarios are lost.

Aptos’ built-in scalability emerges from the decoupling of its core components. Each layer can add resources exactly where they’re needed. If an application takes off suddenly, Aptos can scale execution by adding more machines. If consensus throughput becomes a constraint, more validators can be added.

This fine-grained control over scale is a strategic advantage. When a chain can absorb these spikes seamlessly, it can convert short-term frenzies into long-term growth.

In the following sections, we’ll see how each of these layers is scalable, beyond just decoupling.

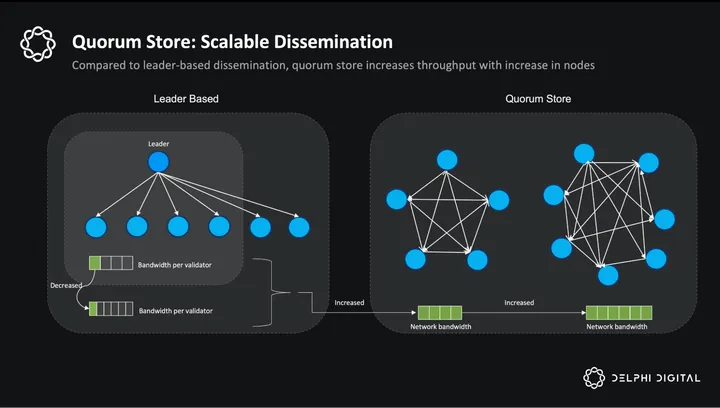

Consensus Scalability – Quorum Store

Most consensus mechanisms are monolithic. The transaction dissemination and ordering are tightly coupled. A single validator is responsible for broadcasting full transaction payloads to all validators. This leads to two bottlenecks. First, all validators receive the transactions through a single internet connection, which limits how many transactions can be shared. Second, every consensus message contains full transaction data. This slows down the rounds as more data has to be shared per round.

Counterintuitively, adding more validators makes things worse. The network still has to rely on a single node to push transactions to even more nodes. Most of the network capacity remains underutilized, and throughput decreases, even as the validator set grows.

Aptos instead uses the Quorum Store. It is built on Narwhal, which decouples dissemination from ordering, breaking the monolithic consensus that would’ve otherwise existed. Instead of a single leader, all validators independently collect transactions from users and form their own batches. These batches are continuously streamed to peers in parallel. Validators sign each batch they receive, and once the sender gathers enough signatures, it creates a proof of availability that shows the data has been propagated to the majority of nodes.

During block proposals, the leader no longer includes the full transaction payload. Instead, they include just the metadata and availability proofs. This reduces the amount of data that consensus has to process in each round. Rounds can progress faster since proofs and metadata are much lighter than full transactions. Rounds can also handle more transactions.

This makes the consensus layer scalable with the size of the validator set. As more validators join, total network bandwidth increases. The dissemination bottleneck is removed, leading to a 50x increase in raw consensus throughput and a 10x improvement in end-to-end throughput. Quorum Store makes consensus both faster and more scalable.
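The batch-and-proof flow can be sketched as follows. Real signatures are replaced by validator names, the acknowledgement threshold is an assumed parameter (3 of 4 here), and `Batch`, `AvailabilityTracker`, and `propose_block` are hypothetical names rather than Quorum Store APIs.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, List, Set, Tuple


@dataclass(frozen=True)
class Batch:
    author: str
    transactions: Tuple[bytes, ...]  # raw transaction payloads

    def digest(self) -> str:
        hasher = hashlib.sha256()
        for txn in self.transactions:
            hasher.update(len(txn).to_bytes(4, "big"))  # length prefix avoids ambiguity
            hasher.update(txn)
        return hasher.hexdigest()


@dataclass
class AvailabilityTracker:
    validators: FrozenSet[str]
    threshold: int  # acknowledgements needed; an assumption, not a protocol constant
    acks: Dict[str, Set[str]] = field(default_factory=dict)

    def acknowledge(self, digest: str, validator: str) -> bool:
        """Record that a validator stored the batch; True once enough have done so."""
        if validator not in self.validators:
            raise ValueError(f"unknown validator: {validator}")
        self.acks.setdefault(digest, set()).add(validator)
        return self.is_available(digest)

    def is_available(self, digest: str) -> bool:
        return len(self.acks.get(digest, set())) >= self.threshold


def propose_block(tracker: AvailabilityTracker, batches: List[Batch]) -> List[dict]:
    """Build a proposal that references available batches by digest, without payloads."""
    return [
        {
            "author": batch.author,
            "digest": batch.digest(),
            "txn_count": len(batch.transactions),
            "signers": sorted(tracker.acks[batch.digest()]),
        }
        for batch in batches
        if tracker.is_available(batch.digest())
    ]


if __name__ == "__main__":
    tracker = AvailabilityTracker(frozenset({"v1", "v2", "v3", "v4"}), threshold=3)
    batch = Batch("v1", (b"tx-a", b"tx-b"))
    for validator in ("v1", "v2", "v3"):
        tracker.acknowledge(batch.digest(), validator)
    print(propose_block(tracker, [batch]))
```

The proposal carries a digest, a count, and the list of signers, so its size does not grow with the size of the transactions themselves.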

Execution Scalability – Shardines

Block-STM performs great on a single machine. Shardines is the next evolution of the blockchain execution engine, which can add more machines and push throughput beyond 1M TPS. Shardines uses a cutting-edge dynamic partitioner and an innovative ‘micro-batching and pipelining’ strategy.

Shardines decouples execution from consensus and storage, allowing it to scale independently.

It’s important to divide the workload carefully, otherwise coordination challenges would outweigh the benefits. Instead of a random assignment, Shardines uses a dynamic partitioner that assigns transactions to shards in real time. It uses a fanout metric that measures how many shards each transaction touches. A lower fanout means fewer cross-shard dependencies. The fanout should be as close to 1 as possible so that more transactions are processed inside a single shard without added overhead.
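The fanout metric can be illustrated with a short sketch. It only scores a given key-to-shard assignment; it does not reproduce the Shardines partitioner, and reading fanout as "distinct shards touched, averaged over transactions" is an interpretation of the description above.

```python
from typing import Dict, Iterable, List, Set


def transaction_fanout(keys: Iterable[str], shard_of: Dict[str, int]) -> int:
    """Number of distinct shards a single transaction touches."""
    return len({shard_of[key] for key in keys})


def average_fanout(txns: List[Set[str]], shard_of: Dict[str, int]) -> float:
    """Mean fanout over a batch; 1.0 means every transaction stays inside one shard."""
    return sum(transaction_fanout(keys, shard_of) for keys in txns) / len(txns)


# Hypothetical workload: each transaction is the set of state keys it touches.
TXNS = [{"a", "b"}, {"b", "c"}, {"d", "e"}, {"e", "f"}]

# Two candidate assignments of keys to two shards.
ROUND_ROBIN = {"a": 0, "b": 1, "c": 0, "d": 1, "e": 0, "f": 1}
GROUPED = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}

if __name__ == "__main__":
    print("round-robin fanout:", average_fanout(TXNS, ROUND_ROBIN))
    print("grouped fanout:", average_fanout(TXNS, GROUPED))
```

The grouped assignment keeps keys that are used together on the same shard, so no transaction in this workload needs cross-shard coordination.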

After partitioning, the transactions are grouped into micro-batches and pipelined. Each shard independently executes these batches using Block-STM. The entire pipeline has multiple stages running at the same time in parallel:

- Sending transactions from coordinators to shards

- Fetching data from storage

- Executing transactions

- Communicating results between shards

- Returning results to the coordinator

Because these pipeline stages progress independently and simultaneously, delays from network hops and data dependencies are minimized, significantly improving throughput and latency.

In its current architecture, Shardines can scale out execution to 30 shards and achieve a throughput of 1M TPS for non-conflicting workloads and more than 500K TPS for conflicting workloads.

Surpassing 30 shards and achieving higher TPS is not primarily constrained by execution scaling through additional nodes. Instead, the key limitation lies in the storage capacity of a single node. Implementing multi-machine sharded storage could overcome this bottleneck and enable even greater TPS. Horizontal scalability in execution remains a difficult problem in blockchains. Shardines represents a significant step towards achieving it.

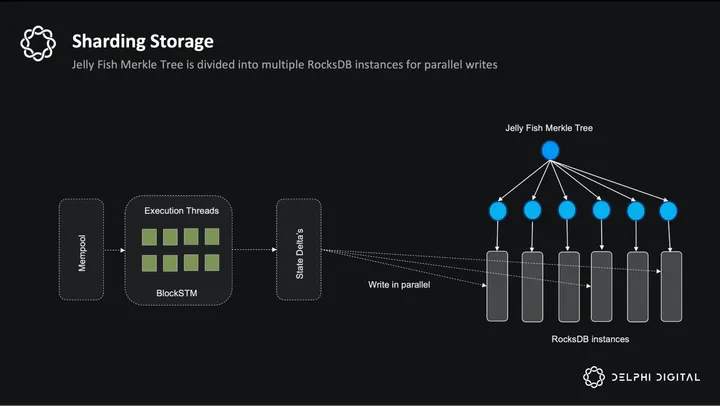

State Scalability

Even with scalable consensus and execution, the state itself can become a bottleneck under heavy load. A monolithic database can’t keep up with other layers because the access to it is too narrow and limited.

Aptos instead represents its global state using a Jellyfish Merkle Tree (JMT) for both efficient authentication and partitioning. Here, the key innovation is in how state is stored. Instead of writing the entire JMT to a single database, Aptos splits it across multiple RocksDB instances within each validator node. The tree is physically partitioned based on the leading bits of account addresses or state keys. This means different sections of the state can be updated independently and in parallel by different cores of the CPU.

During execution, each CPU core can handle a different partition of the state tree, allowing state updates to scale along with compute.
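Partitioning by leading bits can be sketched like this. The use of SHA-256 over the key and the choice of 4 bits (16 partitions) are assumptions made for the example; the text only states that the leading bits of account addresses or state keys decide the partition.

```python
import hashlib
from collections import defaultdict
from typing import Dict


def partition_index(key_hash: bytes, partition_bits: int) -> int:
    """Use the leading partition_bits bits of a key hash as the partition number."""
    if not 1 <= partition_bits <= 16 or len(key_hash) < 2:
        raise ValueError("expected 1-16 partition bits and a hash of at least 2 bytes")
    return int.from_bytes(key_hash[:2], "big") >> (16 - partition_bits)


def group_by_partition(updates: Dict[str, bytes],
                       partition_bits: int) -> Dict[int, Dict[str, bytes]]:
    """Split a batch of state updates so each partition can be written independently."""
    groups: Dict[int, Dict[str, bytes]] = defaultdict(dict)
    for key, value in updates.items():
        key_hash = hashlib.sha256(key.encode()).digest()
        groups[partition_index(key_hash, partition_bits)][key] = value
    return dict(groups)


if __name__ == "__main__":
    # Hypothetical batch of state updates keyed by account name.
    updates = {f"account-{i}": b"new-value" for i in range(1000)}
    groups = group_by_partition(updates, partition_bits=4)
    for partition in sorted(groups):
        print(f"partition {partition:2d}: {len(groups[partition])} updates")
```

Each group could then be handed to a separate worker and database instance, since no two groups share a key.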

Ongoing research aims to extend this to multi-machine sharded storage, where partitions are no longer confined to a single node. Instead, they’re distributed across physical machines in the network.

Crafting a Richer Developer Experience

The developer experience is one of the most powerful moats for a platform. Throughout the history of technology, platforms that attract a critical mass of developers become a standard, even if they are not the most performant. Aptos was among the top five fastest growing developer ecosystems in 2024, with more than 1800 new developers joining the network. Its 96% year-over-year growth suggests a strong demand driven by its performance and developer experience.

Developers need to ship fast, reach users, and iterate frequently. That’s only possible when platforms abstract away as much of the complexity as possible. They want reduced development times, deployment costs, audit costs, and better security. When safety is built in, builders can be confident in launching high value applications. All of this doesn’t just benefit the developers, but starts a feedback loop with downstream effects: better tooling, more applications, and a better overall user experience.

But today, even chains with parallel execution like Solana or Sui push that complexity back on the developer. Teams are expected to know in advance exactly which read/write sets their applications will touch, even when the logic is runtime-dependent and hard to predict. Developers also have to write manual safety checks which bloat the codebase, increase audit costs, and lead to longer development times. With limited tooling, many teams end up having to build their own infra.

Aptos’ approach towards the developer experience is to absorb all of the complexities into the chain, so that developers get everything they need out of the box.

It does this through a combination of:

- Block-STM’s Automatic Parallelism: no manual concurrency.

- The Move Language: safe, secure, and expressive.

- Tooling and Ecosystem: streamlined development workflow.

Block-STM – Automatic Parallelism

For application developers, using conditionals, branching logic, or nested data structures makes it difficult to track and declare read and write sets accurately. This gets even more difficult in dynamic, runtime-dependent applications like DEXs. Developers end up over-declaring access to avoid errors, which leads to false conflicts and forces transactions to run sequentially even when they could have run in parallel.

Block-STM absorbs all of this complexity. It automatically resolves dependencies at runtime, allowing applications to execute in parallel without requiring developers to manage conflict detection themselves.
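A small sketch of the false-conflict problem: two routed swaps declare every pool they might use, but at runtime each touches only one. The pool names, prices, and the simplification that every access counts as a read-write are assumptions for illustration.

```python
from typing import AbstractSet, Dict, Set


def conflicts(access_a: AbstractSet[str], access_b: AbstractSet[str]) -> bool:
    """Two transactions conflict if they touch any common state (treated as read-write)."""
    return bool(access_a & access_b)


def runtime_access(candidates: AbstractSet[str], prices: Dict[str, float]) -> Set[str]:
    """A routed swap only touches the pool with the best price, known only at runtime."""
    return {max(sorted(candidates), key=lambda pool: prices[pool])}


PRICES = {"pool_a": 1.02, "pool_b": 1.00, "pool_c": 1.01}  # hypothetical quotes
SWAP_1 = frozenset({"pool_a", "pool_b"})  # pools swap 1 must declare up front
SWAP_2 = frozenset({"pool_b", "pool_c"})  # pools swap 2 must declare up front

if __name__ == "__main__":
    declared = conflicts(SWAP_1, SWAP_2)
    observed = conflicts(runtime_access(SWAP_1, PRICES), runtime_access(SWAP_2, PRICES))
    print("conflict by declared sets:", declared)
    print("conflict by runtime access:", observed)
```

A scheduler that trusts the declared sets has to serialize the two swaps because both list `pool_b`, while a runtime view sees that they never meet.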

This unlocks a much wider range of applications, including those which were previously too complex to parallelize manually. It also reduces debugging cycles, lowers development costs, and shortens time-to-market.

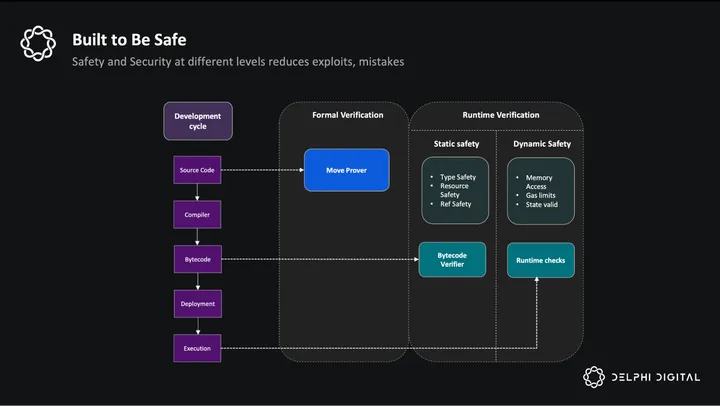

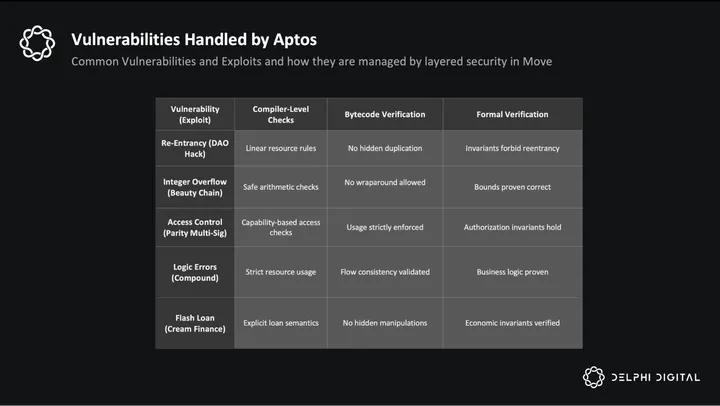

The Move Language: Safety by Default

Smart contract languages like Solidity and Rust leave safety up to the developer. Ownership checks, asset validations, and access controls have to be written manually, which can add up to 30-40% extra code. This hand-written code is more error-prone, harder to audit, and the root cause of many high-profile exploits.

Like Block-STM, Move handles all of this automatically. Originally developed for Diem, Move was purpose-built for digital assets. It treats assets as first-class “resources” that are governed by strict rules, much like physical assets: they can’t be duplicated, accidentally deleted, or reassigned without explicit logic.
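
As a rough analogy for resource semantics, the Python sketch below emulates an asset that cannot be copied and can only be moved once. Move enforces these rules statically, before code runs; Python can only imitate them with runtime errors, so this is an illustration of the rules rather than of Move itself. `Coin` and `deposit` are hypothetical names.

```python
import copy
from typing import Dict


class Coin:
    """A toy 'resource': copying is forbidden and it can be moved only once."""

    def __init__(self, value: int) -> None:
        self._value = value
        self._consumed = False

    def __copy__(self) -> "Coin":
        raise TypeError("resources cannot be copied")

    def __deepcopy__(self, memo: dict) -> "Coin":
        raise TypeError("resources cannot be copied")

    def consume(self) -> int:
        """Hand the value over exactly once; later attempts fail."""
        if self._consumed:
            raise RuntimeError("resource already moved")
        self._consumed = True
        return self._value


def deposit(balances: Dict[str, int], owner: str, coin: Coin) -> None:
    balances[owner] = balances.get(owner, 0) + coin.consume()


balances: Dict[str, int] = {}
coin = Coin(10)
deposit(balances, "alice", coin)

try:
    deposit(balances, "bob", coin)  # spending the same coin a second time
    double_spend_blocked = False
except RuntimeError:
    double_spend_blocked = True

try:
    copy.copy(Coin(5))  # duplicating an asset
    copy_blocked = False
except TypeError:
    copy_blocked = True
```

In this emulation the developer of `deposit` writes no ownership or duplication checks; the asset type carries the rules with it.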

This safety is enforced at multiple levels. The compiler and bytecode verifier catch bugs before deployment. Runtime checks ensure that safety rules hold during actual execution. And the Move Prover allows developers to formally verify that their contracts behave as expected before they go live.

This removes an entire class of vulnerabilities and also reduces code size, audit costs, and attack surfaces. Developers can spend more time building applications, while safety and security – in large part – are automatically a part of the chain’s features. For teams building high-value and regulated applications, Move’s formal verification capabilities make it possible to prove compliance-related guarantees before code is deployed.

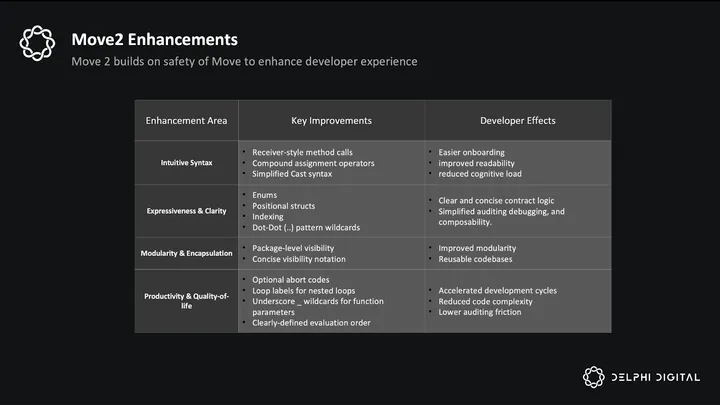

Collectively, Move v2’s improvements further reduce complexity and strengthen contract composability.

Aptos Build

Aptos Build, a product from Aptos Labs, offers everything developers need to go from prototype to production without managing backend infrastructure. It includes the following tools:

- API Access to interact with the chain without needing to run nodes or backend services

- NFT Studio for creating, managing, and embedding NFTs into applications

- Identity Solutions to simplify user onboarding using Web2 logins. Zero-knowledge proofs ensure accounts remain both secure and recoverable.

Tools and Frameworks

Beyond Aptos Build, a range of tools is available to enhance developer productivity and application potential:

- Aptos SDKs (TypeScript, Python, Rust): To query NFTs, submit transactions, and interact with Move contracts.

- Aptos Explorer: Transaction tracing, resource flow visualization, and detailed diagnostics for debugging.

- Petra Wallet: A user-friendly, self-custodial wallet supporting transaction simulation, hardware wallet integration, and easy dApp connectivity.

While this tooling makes development easier, creating an intuitive user experience requires more than just dev tooling. Apps often burden users with non-intuitive interfaces and security concerns. In most cases this is not the developer’s fault, but the result of a lack of integrated account management and security tools at the platform level.

To address this, Aptos includes built-in account primitives designed to improve UX from the ground up. These include multi-sig accounts, isolated “sandbox” accounts for risk management, delegated permissions to trusted applications, and conditional blind signing. These features seem abstract at first, but collectively they lead to a much better experience. Using these, developers can integrate recovery methods, automation, and other features into their applications to make life easier for users.

Enabling a Smooth Experience

UX is the biggest hurdle to crossing the chasm and getting mainstream adoption. Many argue that it’s unreasonable to expect an average user to manage unrecoverable keys, sign incomprehensible transactions, install wallets for each chain, or wait a minute for basic interactions. Users are used to smooth and safe online interactions in their existing apps.

This experience is their benchmark. Anything slower, riskier, or more confusing feels broken. They expect speed, simplicity, and safety, which current onchain experiences often lack.

Aptos is trying to bridge this gap. Instead of forcing users to adapt to new and difficult onchain interactions, it brings back a familiar Web2-like experience. These improvements extend beyond onboarding to include key management and automations, covering a spectrum of workflows across use cases.

User adoption hinges on minimizing friction, particularly at critical junctures. New users arrive either from other blockchains or from centralized exchanges. Both face different hurdles. Tasks like wallet creation, seed phrase management, and asset bridging are common drop-off points.

For first-time users, Aptos Keyless removes the need to manage seed phrases. Instead, they can log in using Google Sign-In, just like any Web2 app. On-chain key rotation and recovery mechanisms mitigate the risk of key loss. For users coming from other chains, X-Chain Accounts will soon allow them to connect their MetaMask or Phantom wallets to access Aptos apps. This bypasses the need for cumbersome bridges, on-ramps, or new wallet setups. Native USDC can be transferred using Circle’s CCTP, and session keys allow trusted dapps to take care of signing flows on behalf of users.

Users expect standard features like account recovery via trusted devices; account abstraction enables multisig accounts on Aptos to fulfill this. Other advanced intents like placing limit orders, automating portfolio rebalancing, or scheduling recurring deposits require automation. Relying on off-chain keepers, as many Web3 applications do, introduces latency, trust assumptions, and complexity. Aptos solves this by separating onchain automations into two layers: a policy layer that governs what the automation is allowed to do, and an execution engine that carries out those actions onchain.

At the policy layer, users can define rules for permitted actions using a permissioned signer, a feature that has been proposed for addition to the chain. Using Blind Signing, users can pre-authorize actions under specific conditions. Session Keys provide similar temporary, limited permissions.
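
A policy of this kind can be pictured as a small set of checks that every automated action must pass. The Python sketch below is a hypothetical model and not the Aptos permissioned-signer or session-key API; `SessionPolicy`, its fields, and the function names are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass
class SessionPolicy:
    """Temporary, limited permissions a user grants to an application."""

    allowed_functions: FrozenSet[str]  # which entry points may be called
    spend_limit: int                   # total amount the session may spend
    expires_at: int                    # unix time after which nothing is allowed
    spent: int = 0

    def authorize(self, function: str, amount: int, now: int) -> bool:
        """Return True and record the spend only if every rule holds."""
        if now >= self.expires_at:
            return False
        if function not in self.allowed_functions:
            return False
        if self.spent + amount > self.spend_limit:
            return False
        self.spent += amount
        return True


policy = SessionPolicy(frozenset({"dex::swap"}), spend_limit=100, expires_at=1_000)

first_swap = policy.authorize("dex::swap", 60, now=10)        # within all limits
over_limit = policy.authorize("dex::swap", 60, now=20)        # would exceed 100
wrong_call = policy.authorize("coin::transfer", 1, now=20)    # not whitelisted
after_expiry = policy.authorize("dex::swap", 10, now=1_000)   # session expired
```

The execution engine would then carry out only the actions for which `authorize` returns true, so the application never holds more authority than the user granted.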

On the execution side, scheduled transactions are being explored for precisely timed or condition-triggered execution directly on-chain. As designed, these transactions could not be censored and would be executed at the start of every block. This opens the door to smoother use of autonomous agents in Aptos DeFi.
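
One way to picture scheduled transactions is a time-ordered queue that is drained at the start of each block, before any user transactions run. The Python sketch below is a toy under that assumption; the feature is still being explored, and `Scheduler`, `schedule`, and `begin_block` are hypothetical names, not an Aptos interface.

```python
import heapq
from typing import Callable, Dict, List, Tuple

State = Dict[str, int]
Action = Callable[[State], None]


class Scheduler:
    """Keeps pending actions ordered by the time they become due."""

    def __init__(self) -> None:
        self._queue: List[Tuple[int, int, Action]] = []
        self._seq = 0  # tie-breaker so equal times run in submission order

    def schedule(self, run_at: int, action: Action) -> None:
        heapq.heappush(self._queue, (run_at, self._seq, action))
        self._seq += 1

    def begin_block(self, block_time: int, state: State) -> int:
        """Run every due action first thing in the block; return the count."""
        ran = 0
        while self._queue and self._queue[0][0] <= block_time:
            _, _, action = heapq.heappop(self._queue)
            action(state)
            ran += 1
        return ran


def recurring_deposit(amount: int) -> Action:
    def action(state: State) -> None:
        state["vault"] = state.get("vault", 0) + amount
    return action


state: State = {"vault": 0}
scheduler = Scheduler()
scheduler.schedule(10, recurring_deposit(5))
scheduler.schedule(20, recurring_deposit(7))

ran_at_5 = scheduler.begin_block(5, state)    # nothing is due yet
ran_at_10 = scheduler.begin_block(10, state)  # first deposit runs
ran_at_25 = scheduler.begin_block(25, state)  # second deposit runs
```

Because due actions run as part of block processing itself, no off-chain keeper has to notice the deadline and submit a transaction.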

A core part of UX isn’t just what users can do, but also how it feels. In today’s age of 120 Hz refresh rates and low attention spans, predictable sub-second finality ensures interactions feel nearly indistinguishable from Web2. Consistently low transaction fees also make usage feel nearly free. Users don’t have to trust any intermediaries for their account balances. They can instead get light state proofs inside their own wallets to independently verify information.

Transaction signing is where many users lose their funds, as the recent $1bn+ Bybit attack underscored. Attackers can compromise frontends and misrepresent what a user is approving. Aptos provides transaction pre-execution results: it simulates each transaction so that users get a clear, human-readable preview of the outcome before signing. This helps users spot potential threats and feel safe.
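
Conceptually, a pre-execution preview runs the transaction against a scratch copy of state and reports the differences. The Python sketch below illustrates that idea only; it is not the Aptos simulation API, and `preview`, `drain`, and the account keys are hypothetical.

```python
import copy
from typing import Callable, Dict, List

State = Dict[str, int]


def preview(state: State, txn: Callable[[State], None]) -> List[str]:
    """Run txn on a scratch copy and describe every balance that changes."""
    scratch = copy.deepcopy(state)  # the real state is never touched
    txn(scratch)
    lines = []
    for key in sorted(set(state) | set(scratch)):
        before, after = state.get(key, 0), scratch.get(key, 0)
        if before != after:
            lines.append(f"{key}: {before} -> {after} ({after - before:+d})")
    return lines


def drain(state: State) -> None:
    """A malicious transaction that a compromised frontend labels 'claim'."""
    state["mallory/USDC"] = state.get("mallory/USDC", 0) + state["alice/USDC"]
    state["alice/USDC"] = 0


wallet_state: State = {"alice/USDC": 100, "mallory/USDC": 0}
summary = preview(wallet_state, drain)
```

Whatever the frontend claims, the wallet shows the user the balance changes the transaction would actually cause, and `wallet_state` is left unchanged.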

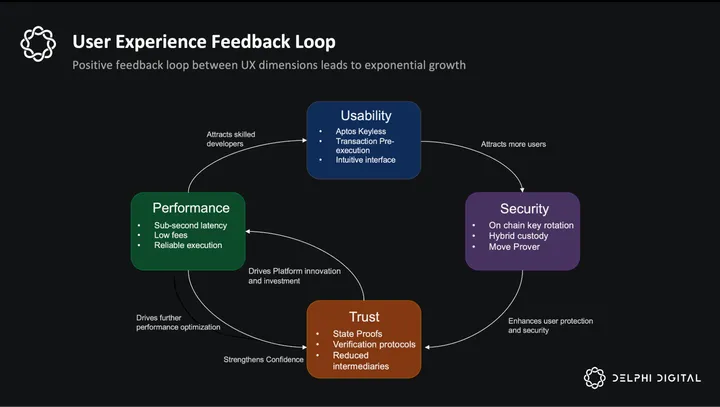

These improvements span the entire lifecycle of user interactions: easier onboarding, intuitive interactions, Web2-esque speed, and verifiable trust. Together they create a feedback loop that benefits Aptos-native applications. When users feel safe and the platform is easy to use, they use dapps more frequently and get comfortable transacting with larger amounts. This growing activity attracts more developers, who build richer applications and increase the overall value of the platform. This loop is important for developing a vibrant ecosystem.

Keyless authentication, transaction simulation, sub-second finality, and on-chain key management are all live and operational on Aptos mainnet today.

Future Vision

With more than 100 AIPs already integrated, Aptos has a number of technical innovations in the pipeline to push performance further. They are:

- Block-STM v2 (Execution upgrade) – scale the parallel processing engine to 256-core CPUs in a single node.

- Raptr (Consensus upgrade) – combines the benefits of DAG-based and single-leader approaches for optimal consensus latency under high load and real-world conditions.

- Zaptos (Pipeline upgrade) – end-to-end transaction pipeline producing the best known end-to-end latency.

- Tiered storage (Storage upgrade) – dividing I/O into hot and cold tiers to optimize performance even with a large number of accounts.

- Execution Pool – buffer between consensus and execution to extract maximum parallelization.

Additionally, there are proposed improvements to user experience and developer tooling, which we have covered in previous sections. These are:

- X-Chain Accounts

- Account Abstraction

- Permissioned Signers

- MultiSig Accounts

- Scheduled Transactions

Conclusion

With DeFi leading the charge for blockchain PMF, every chain is contending for a single goal: to be the platform of choice to host the most robust and liquid internet capital markets. There are trillions of dollars of TAM on the line with financial services – asset management, asset issuance, payments, and more.

Today’s financial markets are plagued with structural inefficiencies: long settlement times, fragmentation, high costs, and unequal access to assets. It is increasingly clear that blockchains can usher in a new era of finance given newfound regulatory clarity, institutional adoption, and stablecoin growth. The hunger for efficiency, transparency, and global access to financialized assets has never been higher.

For these contending blockchains, performance and fast confirmation times are not enough. Solana, Monad, Sui, and MegaETH all provide similar throughput and latency. Every chain is a fast chain. Incremental speed improvements are no longer a structural advantage; they are now table stakes.

Winners in this new era will be those who solve for the needs of the future of finance – not just throughput, but fairness, composability, compliance, developer productivity, and user experience. Winners of previous technology wars, like magnetic-core memory and DRAM, weren’t the fastest among their competitors; they were the ones that nailed all the needs of the market.

Aptos is well positioned to become a core hub for internet capital markets. And while the technology stack has been rapidly iterated on and improved over the past year, there are a few key markers to watch for. In order for Aptos to be a true contender in the race to win mindshare, the following must be true:

- Performance: Aptos consistently delivers low latency and high sustained throughput under load.

- Independent Scalability: Each component actually scales independently without degrading UX.

- Security and Compliance: Move’s resource model and formal verification help it become the tool of choice for aspiring product founders.

- Developer Productivity: Automatic parallelism and safety consistently reduce code size, audit surface, and development cycles, opening the floodgates to new, less capitalized product teams.

- Superior User Experience: Easy onboarding for all users, automations, lower costs, and high safety.

As performance becomes commoditized, these layers will matter more. Application teams won’t pick just the fastest chain but the one that’s fastest to build on, safest to deploy to, and gives their users the smoothest experience. Aptos is setting itself apart, not just as a high-performance blockchain but as the foundational rails for tomorrow’s capital markets.
