Bless Network: Transforming Edge Computing Through Decentralized Infrastructure

JUL 28, 2025 • 32 Min Read

Report Summary

Bless Network is a next-generation decentralized computing platform designed to transform edge computing by tapping into the vast, unused power of everyday devices — smartphones, laptops, gaming PCs — instead of relying solely on centralized data centers. The goal: build a global, resilient “shared computer” that can power demanding workloads like AI, gaming, DeFi, and smart infrastructure while keeping costs low and performance high.

Key Takeaways

1. Solving Centralized Cloud Weaknesses

- Traditional cloud services are expensive, monolithic, and increasingly outdated for emerging workloads like AI at the edge.

- Centralized infrastructure creates vendor lock-in, scaling costs, single points of failure, and inefficiencies.

- Bless tackles this by distributing workloads across billions of idle devices worldwide.

2. Why Old Decentralized Models Failed

- Too complex for normal users (technical setup, maintenance).

- Rigid, inefficient verification that slows performance.

- Static resource allocation leads to bottlenecks.

- High latency and low throughput make them impractical for serious applications.

3. Bless Network’s Practical Edge Computing

-

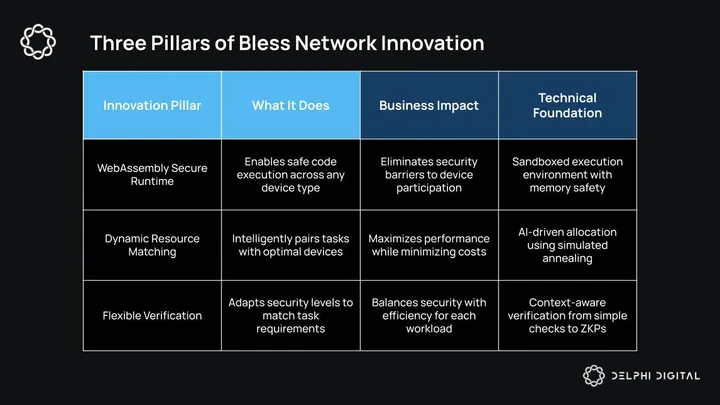

WebAssembly (WASM): Provides secure, language-agnostic execution on diverse devices.

-

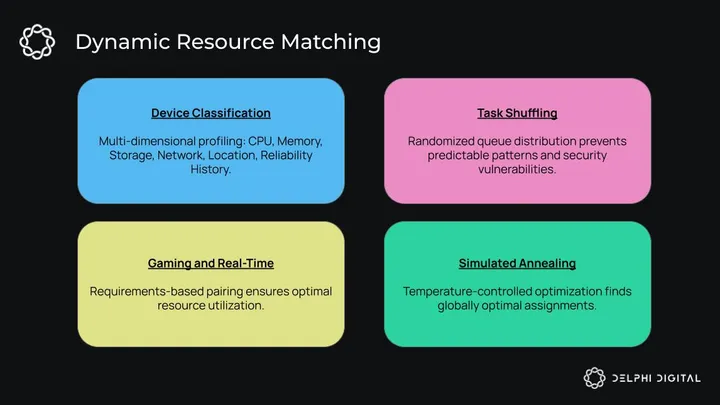

Dynamic Resource Matching: Tasks are matched to the best hardware in real-time using simulated annealing — a smart optimization approach.

-

Flexible Verification: Uses adaptive methods, including zkSNARKs, to prove computations without compromising speed or privacy.

-

Hybrid Architecture: Combines user devices with high-spec native nodes for enterprise-grade reliability.

4. Security & Trust

- Secure WASM sandboxes isolate tasks to prevent malicious code risks.

- zkSNARK-backed execution proofs (ZAWA) guarantee work is valid without revealing sensitive data.

- Greco-Latin square math keeps node assignment unpredictable, thwarting Sybil and targeted attacks.

- Sybil resistance uses multi-factor node profiling to detect fake nodes.

5. Real-World Performance

- Testnet processed over 14,000 workloads with an average failover response under 1 second.

- Over 5.4 million nodes registered across 210 countries, proving large-scale viability.

- Can upscale tasks like video rendering massively, e.g., 55,000 HD videos to 4K in one hour with 1M nodes.

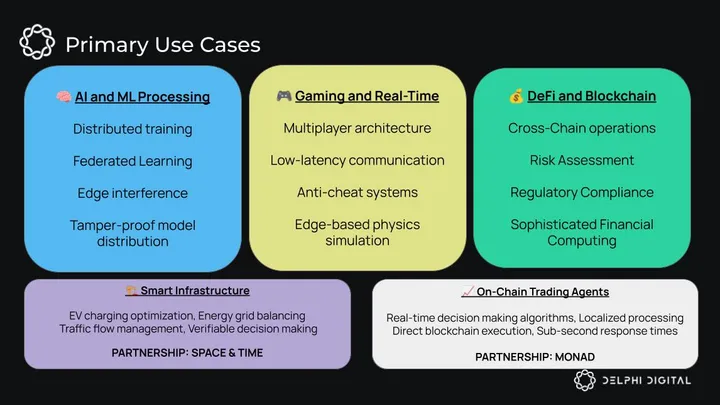

6. Diverse Use Cases

- AI & Machine Learning: Distributed model training, federated learning for privacy, and low-latency edge inference.

- Gaming: Edge-based physics, anti-cheat, real-time state sync for smoother multiplayer.

- DeFi: Cross-chain computation, risk assessment, on-chain algorithmic trading.

- Smart Infrastructure: ZK-secure AI agents manage smart grids, EV networks, and city traffic.

- Partnerships: Working with Space and Time for verifiable data delivery and Monad for ultra-low latency trading AI.

7. How Bless Stands Apart

- Compared to Celestia: Bless focuses on computation + storage vs. pure data availability.

- Compared to Polygon: Runs device-agnostic WASM on consumer hardware vs. dedicated validators.

- Compared to AWS/Google Cloud: Decentralized, no single-point failures, community-owned infra.

- Compared to Akash: Broader than pro-hosting: everyday users can participate with no heavy setup.

- Compared to Grass: Monetizes computing power, not just bandwidth.

Conclusion

Bless Network is building what other decentralized computing projects failed to deliver: a practical, secure, accessible edge computing network that anyone can join, that scales globally, and that solves real problems in AI, gaming, DeFi, and smart infrastructure.

If they execute the full mainnet launch and overcome global rollout challenges, Bless could break the monopoly of Big Cloud, democratizing compute the way Bitcoin democratized money.

Bottom line: Bless turns idle devices into a vast, verifiable “shared computer” — the missing piece for the AI and edge computing future.

Executive Summary

Introduction

The computational landscape is changing fast. AI is everywhere, edge computing is becoming essential, and we need way more processing power than ever before. But here’s the thing: traditional centralized cloud services, while enormously successful, are increasingly revealing their age. They’re expensive, monolithic, and frankly, not built for what’s coming next.

Computing power, once the exclusive domain of governments and large corporations housed in massive data centers, is now distributed across billions of devices worldwide. Think about it. You’ve probably got a powerful computer in your pocket and another one sitting on your desk. Yet, counterintuitively, our devices becoming more powerful hasn’t given us more independence. Our reliance on centralized cloud infrastructure has only increased. Despite all your computing power, you don’t have much choice but to remain a customer of a distant server farm owned by a tech giant. That’s weird, right?

The collective computational power of humanity’s devices goes largely untapped, while applications struggle with critical challenges that traditional infrastructure cannot efficiently solve. All the while, cloud monopolies consolidate their control.

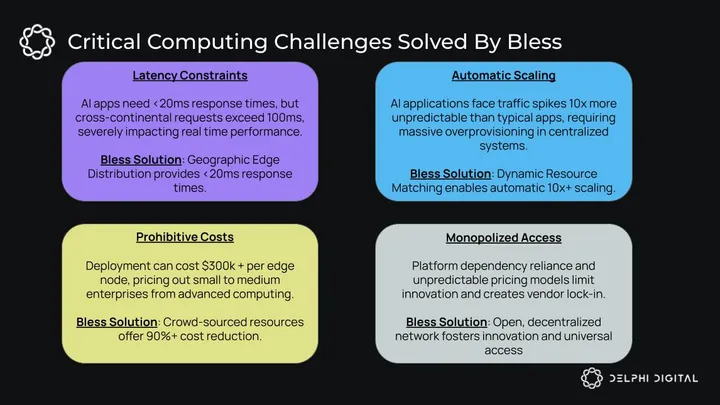

Critical Challenges in the Computing Landscape

Modern applications face four big problems that traditional infrastructure cannot handle well (shown in the image below). Meanwhile, the move from centralized to decentralized computing is happening, and current decentralized solutions face significant barriers that prevent mass adoption.

Centralized infrastructure gives you predictable performance and familiar development patterns. But it also creates limits. Scaling costs become too high and platform dependencies limit what you can build.

On the other hand, while existing decentralized computing networks sound great in theory, in practice they are too complex. Lack of flexibility adds to these woes. As a result, most consumers can’t and won’t use them.

All this leads to an inevitable question: how do we get the benefits of decentralized computing without the problems that have stopped people from adopting it?

The industry needs a solution that bridges the gap between centralized efficiency and decentralized resilience. It has to be both technically sophisticated and accessible to your average consumer.

Bless Network: A Practical Approach to Edge Computing

Bless Network rethinks the whole paradigm. Instead of building more data centers, Bless taps into the massive amounts of unused computing power already distributed across billions of devices. It turns them into one massive computing network. Your gaming PC when you’re not using it. Your laptop during lunch break. Even your phone while it’s charging overnight. All of it working together.

Previous attempts at decentralized computing failed due to complexity. Bless focuses on solving real problems for real applications. The network uses WebAssembly runtime environments, dynamic resource matching algorithms, and flexible verification mechanisms. This creates advantages over both traditional cloud providers and previous decentralized computing networks.

At its core, the Bless Network can be thought of as “the world’s first shared computer” – a global computational mesh where millions of everyday devices work together to power the next generation of applications. AI workloads, edge computing, and globally distributed applications all benefit.

And the network isn’t decentralized just for the sake of being decentralized. It provides tangible advantages: automatic scaling, reduced latency, simplified infrastructure management, and cost efficiency.

This practical approach extends to every aspect of the network design:

WebAssembly provides a secure runtime environment. Dynamic resource matching algorithms efficiently pair tasks with appropriate devices. Flexible verification mechanisms adapt to different computational requirements. The result combines the best aspects of centralized and decentralized approaches.

Developers get a simple, serverless interface while leveraging a globally distributed network that provides required computing resources on demand. Scaling matches demand and infrastructure management overhead drops significantly.

To understand how Bless Network delivers on its promise while solving today’s most pressing computational challenges, let’s explore its unique architecture, performance characteristics, and the transformative impact it could have on application development, user experience, and the broader technological landscape.

Why Traditional Decentralized Computing Has Failed to Achieve Mass Adoption

Before we explore Bless Network’s architecture in detail, it’s crucial to understand the real challenges facing traditional decentralized networks. Below are some fundamental design flaws that have prevented them from becoming viable alternatives to centralized infrastructure:

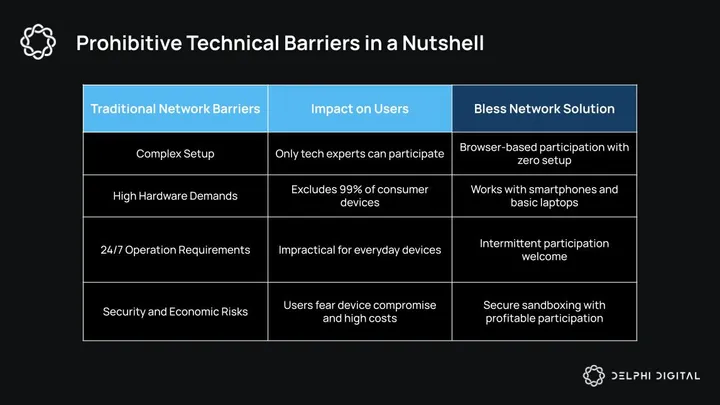

Prohibitive Technical Barriers Block User Participation

The biggest obstacle to widespread adoption has been complexity. Traditional networks create multiple layers of technical barriers that exclude most potential users and contributors.

These barriers show up in several ways. Complex installation procedures require technical expertise. Specialized hardware requirements exclude consumer devices. Network configuration challenges demand a deep understanding of distributed systems. Ongoing maintenance overhead makes participation impractical for casual users. Only technically sophisticated users with dedicated hardware can meaningfully participate. This drastically limits potential network size and diversity.

Security concerns create another barrier. Users worry about their devices being compromised, excessive resource consumption, and potential malicious code execution on their personal devices. These valid concerns, combined with complex setup procedures, create a participation threshold that excludes almost all potential contributors.

Bless Network addresses these barriers through its WebAssembly-based runtime. It enables secure participation across a wide range of devices without complex setup procedures or dedicated hardware requirements. We’ll dive deep into this later in the architecture section.

Rigid Verification Systems Fail to Accommodate Diverse Computation

Traditional decentralized networks implement rigid, one-size-fits-all verification and consensus mechanisms. These create inherent inefficiencies and fail to accommodate diverse computational tasks:

- Verification-Task Mismatch: Different computations need different verification. Deterministic calculations, non-deterministic processes, numerical outputs, and boolean results each demand tailored verification. Complex tasks like machine learning inference or gaming updates benefit from specialized verification approaches.

- Performance Bottlenecks: Heavy consensus protocols applied to simple tasks create unnecessary overhead, and time-sensitive applications suffer when forced through multi-node verification processes instead of using the fastest available responder.

- Verification Gaps: Fixed verification models struggle to support the entire application stack. This leaves portions of decentralized applications unverifiable or inefficiently verified.

Bless Network implements adaptive verification, selecting the optimal mechanism based on the specific computation type, security requirements, and performance needs. This enables fully verifiable applications while maintaining practical efficiency.

Static Resource Allocation Creates Systemic Inefficiencies

Conventional decentralized computing networks rely on static resource allocation methods that create fundamental inefficiencies:

- Resource Concentration: Computing power gravitates toward high-performance nodes, simultaneously leaving average machines underutilized while creating bottlenecks on powerful devices.

- Capability-Task Alignment Failure: Tasks are distributed without proper consideration of hardware requirements, geographic proximity to users, or current system load.

- Adaptation Deficiency: Fixed assignment mechanisms cannot respond to changing network conditions or evolving computational demands, and they cannot optimize for energy efficiency.

Bless Network’s dynamic resource matching ensures computational tasks are intelligently paired with appropriate devices across the entire hardware spectrum. This includes everything from enterprise servers to everyday machines, based on specific capabilities, geographic location, and real-time availability. Every node contributes meaningfully to the network. We’ll explore the importance of this participation in detail in the following architecture section.

Performance Limitations

Decentralized applications have historically suffered from significant performance limitations compared to their centralized counterparts:

- High Latency: The overhead of consensus mechanisms and distributed execution results in higher latency compared to centralized solutions.

- Limited Throughput: Many decentralized networks have limited throughput capacity, restricting their utility for high-demand applications.

- Inconsistent User Experience: Variable performance across different nodes and geographic regions frequently results in an unreliable experience for end users.

- Scaling Challenges: Traditional decentralized networks often struggle to scale effectively to meet growing demand.

Bless Network addresses these performance limitations through its pragmatic approach to decentralization. It optimizes for real-world performance while maintaining the benefits of a distributed architecture.

Bless’ Dynamic Architecture: Making Every Device Count

At the heart of the Bless Network lies a new approach to resource allocation that transforms how computational tasks are distributed. Unlike traditional computing models that rely on static resource allocation, Bless dynamically matches tasks with the most suitable nodes across its distributed network. This creates an adaptive ecosystem where computational power flows naturally to where it’s needed most. That’s not just efficient – it’s new.

How User Devices Are Categorized

When devices join the Bless Network, they undergo a sophisticated classification process. The system evaluates them across multiple dimensions. It examines processing power, memory, storage speed, network connection, location, and reliability. This creates a comprehensive digital fingerprint of each node.

This rich profiling enables the network to understand not just what a device can do, but how well it can do it within specific contexts.

Say you’ve got a gaming PC in Seoul with a great GPU but mediocre internet. The system knows your PC is perfect for graphics-heavy tasks, but maybe not the best for communication-intensive work. Meanwhile, a server in Frankfurt with blazing-fast internet gets matched with tasks that need lots of data transfer. This multi-dimensional understanding creates a vibrant marketplace of computational resources. Each device’s unique strengths are leveraged appropriately.
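To make the Seoul and Frankfurt example concrete, here is a minimal Python sketch of a node profile and a weighted suitability score. The field names, the 0-to-1 normalization, and the weight values are illustrative assumptions, not Bless Network's actual schema or scoring function.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeProfile:
    """Hypothetical profile; every score is assumed to be normalized to 0..1."""
    node_id: str
    region: str
    compute: float      # processing power, including GPU
    memory: float
    storage: float      # storage speed
    network: float      # connection quality
    reliability: float


def suitability(node, weights):
    """Weighted sum of the attributes a task cares about."""
    return sum(getattr(node, attr) * w for attr, w in weights.items())


def best_node(nodes, weights):
    """Return the node with the highest suitability for the given weights."""
    return max(nodes, key=lambda n: suitability(n, weights))


seoul = NodeProfile("seoul-gaming-pc", "Seoul", compute=0.9, memory=0.6,
                    storage=0.7, network=0.3, reliability=0.8)
frankfurt = NodeProfile("frankfurt-server", "Frankfurt", compute=0.5, memory=0.8,
                        storage=0.8, network=0.95, reliability=0.95)

# Assumed task profiles: graphics work leans on compute, transfers on network.
graphics_task = {"compute": 0.7, "memory": 0.2, "network": 0.1}
transfer_task = {"compute": 0.1, "storage": 0.2, "network": 0.7}

print(best_node([seoul, frankfurt], graphics_task).node_id)  # seoul-gaming-pc
print(best_node([seoul, frankfurt], transfer_task).node_id)  # frankfurt-server
```

The same device ranks first or last depending on which attributes the task weights most heavily, which is the point of profiling along several dimensions rather than a single "power" number.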

How Computational Tasks Are Distributed

Once the network understands its available resources, it employs a sophisticated distribution algorithm that transforms chaos into harmony. The process begins by creating clean slates – empty task arrays for each eligible computer. This is followed by purposeful shuffling of pending tasks to prevent predictable patterns and potential security vulnerabilities.

The system then methodically matches tasks to computers based on specific attribute requirements. This ensures each computational job finds its ideal home. Before finalizing any assignment, the network verifies that selected devices meet or exceed minimum requirements for their assigned tasks. This creates a quality assurance layer that maintains performance integrity across the ecosystem.

This intelligent distribution ensures that a complex machine learning task doesn’t end up running on a device with insufficient memory. Meanwhile, a simple calculation won’t unnecessarily consume resources on a high-performance node that could be handling more demanding work.
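The distribution steps described above (empty task arrays, shuffling, attribute matching, and a minimum-requirements check) can be sketched as follows. This is a simplified illustration under assumed data shapes; the `vcpu` and `memory_gb` keys and the "least spare capacity" tie-break are assumptions, not the network's documented rules.

```python
import random


def meets(capabilities, requires):
    """True if the node meets or exceeds every minimum requirement."""
    return all(capabilities.get(key, 0) >= need for key, need in requires.items())


def surplus(capabilities, requires):
    """How far the node exceeds the task's needs (smaller means a tighter fit)."""
    return sum(capabilities[key] - need for key, need in requires.items())


def distribute(tasks, nodes, seed=None):
    rng = random.Random(seed)
    assignments = {node_id: [] for node_id in nodes}  # clean slate per node
    pending = list(tasks)
    rng.shuffle(pending)                              # avoid predictable ordering
    unassigned = []
    for task in pending:
        eligible = [nid for nid, caps in nodes.items()
                    if meets(caps, task["requires"])]
        if not eligible:
            unassigned.append(task["name"])
            continue
        # Assumed policy: pick the tightest fit so small jobs do not occupy
        # high-performance nodes that could take more demanding work.
        chosen = min(eligible, key=lambda nid: surplus(nodes[nid], task["requires"]))
        assignments[chosen].append(task["name"])
    return assignments, unassigned


nodes = {
    "phone": {"vcpu": 2, "memory_gb": 4},
    "workstation": {"vcpu": 16, "memory_gb": 64},
}
tasks = [
    {"name": "ml-inference", "requires": {"vcpu": 8, "memory_gb": 32}},
    {"name": "checksum", "requires": {"vcpu": 1, "memory_gb": 1}},
    {"name": "oversized-job", "requires": {"vcpu": 64, "memory_gb": 256}},
]
assignments, unassigned = distribute(tasks, nodes, seed=7)
print(assignments)  # {'phone': ['checksum'], 'workstation': ['ml-inference']}
print(unassigned)   # ['oversized-job']
```

The memory-hungry job lands on the workstation, the small calculation stays on the phone, and a task no node can satisfy is reported rather than placed on an under-provisioned device.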

How Simulated Annealing-Based Evaluation Works

The true innovation in Bless Network’s resource matching appears in its evaluation methodology. To find near-optimal matches between tasks and resources, the network employs simulated annealing, an optimization technique named after the metallurgical process in which metal is heated and then cooled slowly to strengthen it.

The system begins with “high temperature.” It welcomes a wide range of potential node candidates and allows for exploratory matching. As the process progresses, this temperature gradually cools. It focuses increasingly on optimal pairings. Each potential match receives a comprehensive suitability score based on how well the device fulfills required attributes.

What makes this approach particularly powerful is its dynamic acceptance probability. Early in the process, even somewhat suboptimal matches might be accepted, which prevents the system from becoming trapped in local optima. As the temperature decreases, the acceptance criteria become increasingly stringent. By the end, you’ve got near-optimal pairings between tasks and devices.

This balance between exploration and exploitation is a substantial step beyond traditional node selection methods, creating a system that continuously learns and improves its matching capabilities over time.
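The annealing loop described in this section can be sketched in a few lines of Python. This is a generic textbook version applied to a toy task-to-node assignment; the cost matrix, the crowding penalty, and the geometric cooling schedule are assumptions chosen for illustration, not parameters published by Bless.

```python
import math
import random


def total_cost(assign, cost, overload_penalty):
    """Sum of per-task mismatch costs plus a penalty for crowded nodes."""
    load = {}
    for node in assign:
        load[node] = load.get(node, 0) + 1
    base = sum(cost[task][node] for task, node in enumerate(assign))
    crowding = sum(count - 1 for count in load.values() if count > 1)
    return base + overload_penalty * crowding


def anneal(cost, n_nodes, t_start=5.0, t_end=0.01, cooling=0.95,
           steps_per_temp=50, overload_penalty=2.0, seed=0):
    rng = random.Random(seed)
    current = [rng.randrange(n_nodes) for _ in cost]   # random initial matching
    current_cost = total_cost(current, cost, overload_penalty)
    best, best_cost = current[:], current_cost
    temp = t_start
    while temp > t_end:
        for _ in range(steps_per_temp):
            candidate = current[:]
            candidate[rng.randrange(len(cost))] = rng.randrange(n_nodes)
            delta = total_cost(candidate, cost, overload_penalty) - current_cost
            # Always accept improvements; accept worse matches with a
            # probability that shrinks as the temperature falls.
            if delta <= 0 or rng.random() < math.exp(-delta / temp):
                current, current_cost = candidate, current_cost + delta
                if current_cost < best_cost:
                    best, best_cost = current[:], current_cost
        temp *= cooling                                # gradual cooling
    return best, best_cost


# cost[task][node]: lower means the node is a better fit for the task.
cost = [[1, 5, 9],
        [6, 2, 7],
        [8, 6, 3]]
best, best_cost = anneal(cost, n_nodes=3)
print(best, best_cost)  # [0, 1, 2] 6.0
```

At high temperature almost any reassignment is accepted, which is the exploratory phase; near the end only improvements survive. In this toy instance each task has one clearly preferred node, so the search settles on the diagonal assignment.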

How Optimization for Various Forms of Hardware Works

In today’s computing landscape, diversity is reality. The Bless Network embraces this heterogeneity by designing an architecture that doesn’t just tolerate hardware differences – it thrives on them. The system achieves this flexibility through intelligent scaling of task complexity based on device capabilities. It implements architecture-specific optimizations for different CPU types. It also dynamically adjusts memory allocation according to available resources and prioritizes geographic proximity for applications where latency matters most.

This flexibility enables the network to effectively utilize everything from high-end servers to consumer-grade devices. It significantly expands the potential resource pool. A modest smartphone might excel at certain localized computational tasks, while a powerful workstation handles more demanding processes. By embracing this diversity, Bless creates a more resilient and capable network than would be possible with a homogeneous approach.

How WASM Creates an Unbreakable Security Foundation

Security and trustlessness form the foundation of Bless’ decentralized computing stack. The network’s WebAssembly (WASM) secure runtime environment enables computational workloads to be executed across untrusted devices without compromising security or integrity.

The network leverages the Blockless Runtime, a specialized WASM execution environment that provides rigorous process isolation. This security model capitalizes on WASM’s deterministic execution and memory safety features. Think of it as a secure sandbox where code can run in its own isolated environment. This comes with strict limits on CPU usage, memory access, and system interactions to prevent resource abuse. Constrained memory access ensures operations remain limited to allocated module space. And communication between execution nodes follows strict protocols that eliminate side-channel vulnerabilities.

Taking security a step further, Bless integrates ZAWA, a zkSNARK-backed WASM emulator. ZAWA generates cryptographic proofs that computations were performed correctly, without revealing sensitive information. These succinct zero-knowledge proofs (ZKPs) confirm that computational results follow specifications, that execution was performed honestly, and that no sensitive information leaked during processing.

This unique approach translates WASM semantics into arithmetic circuits. These are verified through frameworks like Halo2, ensuring execution traces are both provably correct and ZK compliant, which enhances both auditability and privacy simultaneously. Think of it as a mathematical guarantee that the work was done honestly.

Cross-Language Compatibility

The WASM runtime supports a language-agnostic interface, empowering developers to write smart modules in multiple languages:

- Rust

- C/C++

- Swift

- AssemblyScript

- Kotlin and more

These are compiled into portable WASM bytecode, reducing barriers to entry and expanding the developer ecosystem.

How High-Performance Execution is Achieved

Performance isn’t sacrificed for security, as the Bless Network’s runtime optimization focuses on throughput and scalability:

- Optimizing Code Generator: translates WASM bytecode into efficient machine-level instructions

- Low-latency Instantiation: minimizes module startup overhead for rapid job execution

- Concurrent Scalability: supports parallel execution of multiple isolated instances

These enhancements deliver performance comparable to traditional cloud infrastructure while maintaining decentralization benefits.

Developers and validators gain fine-grained control through configurable execution policies that set CPU and memory quotas, manage network and file system access permissions, and enforce dynamic WASM restrictions through stake-weighted governance. The network also supports the WebAssembly System Interface (WASI). WASI provides consistent syscall interfaces across hardware variations, controlled access to networking and file operations, and extensibility without modifying module logic. This implementation also enables future capabilities through dynamic interface extensions without breaking compatibility.
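As a rough illustration of what a configurable execution policy might look like, the sketch below checks a module's resource request against CPU, memory, network, and file system limits. The `ExecutionPolicy` and `ModuleRequest` types and all of their fields are hypothetical; the report does not describe the actual policy format used by the Blockless Runtime.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExecutionPolicy:
    """Hypothetical per-module limits set by a developer or validator."""
    cpu_quota: float = 0.5        # fraction of a vCPU
    memory_mb: int = 256
    allow_network: bool = False
    readable_paths: tuple = ()    # directory prefixes the module may read


@dataclass(frozen=True)
class ModuleRequest:
    """Hypothetical description of what a module asks for at startup."""
    cpu: float
    memory_mb: int
    needs_network: bool = False
    paths: tuple = ()


def path_allowed(path, allowed_prefixes):
    """A path is allowed if it equals a prefix or sits underneath it."""
    return any(path == prefix or path.startswith(prefix.rstrip("/") + "/")
               for prefix in allowed_prefixes)


def violations(policy, request):
    """Return human-readable reasons the request breaks the policy."""
    problems = []
    if request.cpu > policy.cpu_quota:
        problems.append("cpu quota exceeded")
    if request.memory_mb > policy.memory_mb:
        problems.append("memory quota exceeded")
    if request.needs_network and not policy.allow_network:
        problems.append("network access denied")
    for path in request.paths:
        if not path_allowed(path, policy.readable_paths):
            problems.append(f"file access denied: {path}")
    return problems


policy = ExecutionPolicy(cpu_quota=0.5, memory_mb=256, readable_paths=("/data",))
request = ModuleRequest(cpu=0.25, memory_mb=512, needs_network=True,
                        paths=("/data/model.bin", "/etc/passwd"))
print(violations(policy, request))
```

A runtime following this pattern would refuse to instantiate the module unless the list comes back empty.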

Bless Network’s WASM runtime represents a trustless, high-performance execution layer designed for decentralized computing. By combining WebAssembly’s security model with ZAWA’s zkSNARK-backed verification system, Bless provides a foundation for running verifiable, private, and secure user-defined code across diverse applications from decentralized AI to ZK service orchestration.

Bless’ Deployment and Execution Flow

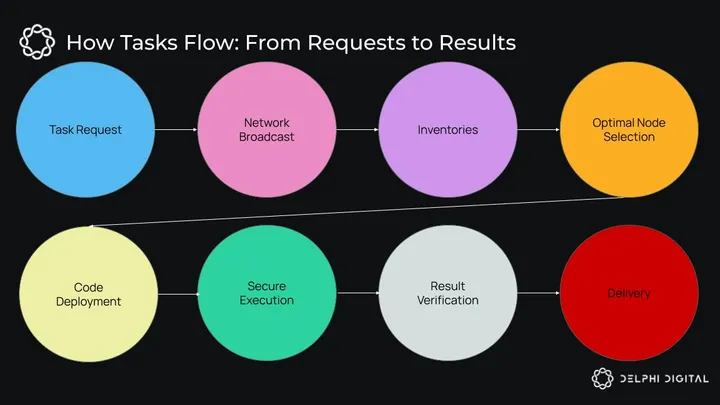

The journey of a computational task through the Bless Network is carefully orchestrated to maximize efficiency and security. When a request for computational work enters the system – whether from a blockchain or API – it initiates a cascade of coordinated actions.

The orchestrator network broadcasts deployment requests. This creates network-wide visibility, allowing all potential nodes to evaluate their capability to handle the incoming work. Network machines respond with detailed inventories of their installed functions and available capacity. This provides a real-time snapshot of resources across the distributed system.

Armed with this comprehensive resource map, the head node selects the most appropriate machine based on inventory responses and the task’s specific requirements. Selected machines either deploy new functions as needed or utilize cached versions from Filecoin gateways. This optimizes for both speed and network efficiency. Once properly equipped, the machine executes the requested work and returns results to the head node, which ultimately delivers them to the original requestor.
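The selection step in this flow can be sketched as a small function over the inventory responses. The dictionary keys (`installed`, `free_capacity`) and the rule of preferring a machine that already has the function cached are assumptions made for the example; the report only states that the head node chooses based on inventories and task requirements.

```python
def select_worker(function_id, required_capacity, inventories):
    """Pick a machine from inventory responses, or None if none qualifies."""
    eligible = [inv for inv in inventories
                if inv["free_capacity"] >= required_capacity]
    if not eligible:
        return None
    # Assumed preference: a machine with the function already installed wins,
    # then the one with the most free capacity.
    return max(eligible,
               key=lambda inv: (function_id in inv["installed"],
                                inv["free_capacity"]))


def handle_request(function_id, payload, required_capacity, inventories, registry):
    """Head-node view: select, deploy if missing, execute, return the result."""
    worker = select_worker(function_id, required_capacity, inventories)
    if worker is None:
        raise RuntimeError("no machine can serve this request")
    if function_id not in worker["installed"]:
        worker["installed"].add(function_id)      # stands in for deployment
    result = registry[function_id](payload)       # stands in for remote execution
    return worker["node_id"], result


inventories = [
    {"node_id": "a", "installed": {"resize"}, "free_capacity": 2},
    {"node_id": "b", "installed": set(), "free_capacity": 8},
    {"node_id": "c", "installed": {"resize"}, "free_capacity": 0},
]
registry = {"resize": lambda pixels: pixels * 2}  # hypothetical function table

print(select_worker("resize", 1, inventories)["node_id"])   # a
print(handle_request("resize", 21, 4, inventories, registry))  # ('b', 42)
```

With a light request the cached copy on machine `a` is reused; with a heavier one only `b` has capacity, so the function is deployed there first.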

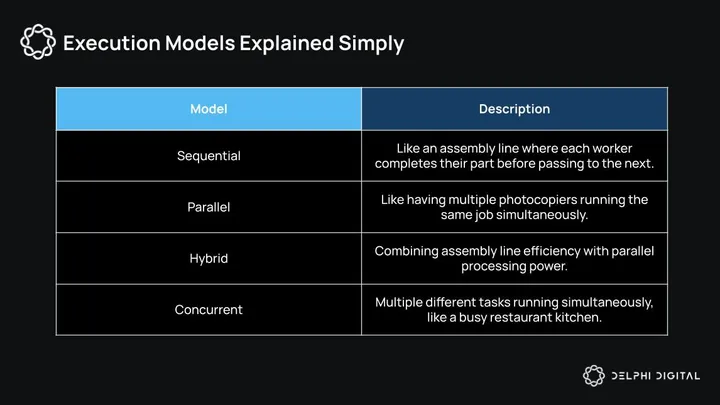

This system supports multiple execution models tailored to different computational needs. Sequential execution creates pipelines where methods run in order on different workers. Each step depends on the completion of previous operations. For compute-intensive tasks, parallel execution deploys the same method across multiple workers simultaneously – this enables horizontal scaling limited only by available network resources.

More complex workloads benefit from hybrid approaches like sequential + parallel execution. Hybrid approaches combine the sequential execution of different methods with the parallel execution of specific resource-intensive steps. The concurrent model takes flexibility even further by allowing different methods to execute on different machines simultaneously. It optimizes for overall execution time rather than sequential dependencies.

This sounds complex, but is pretty straightforward in practice. Here’s a simplified overview:
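The four execution models can be imitated on a single machine with a thread pool standing in for network workers. The function names below are made up for the illustration and are not part of any Bless SDK.

```python
from concurrent.futures import ThreadPoolExecutor


def run_sequential(steps, value):
    """Pipeline: each method consumes the previous method's output."""
    for step in steps:
        value = step(value)
    return value


def run_parallel(method, chunks, workers=4):
    """Same method on many workers at once, one chunk each."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(method, chunks))


def run_hybrid(prepare, heavy, combine, data, workers=4):
    """Sequential stages around one parallel, resource-intensive stage."""
    chunks = prepare(data)
    partials = run_parallel(heavy, chunks, workers)
    return combine(partials)


def run_concurrent(methods, value, workers=4):
    """Different methods on different workers at the same time."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(method, value) for method in methods]
        return [future.result() for future in futures]


print(run_sequential([lambda x: x + 1, lambda x: x * 2], 3))          # 8
print(run_parallel(lambda x: x * x, [1, 2, 3]))                       # [1, 4, 9]
print(run_hybrid(lambda d: [d[:2], d[2:]], sum, sum, [1, 2, 3, 4]))   # 10
print(run_concurrent([min, max], [3, 1, 2]))                          # [1, 3]
```

The difference between the models is only in how dependencies are expressed: a chain of outputs, a fan-out of identical work, a mix of both, or independent methods with no ordering between them.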

For storage and distribution of WebAssembly files and associated assets, the network employs the IPFS Container Archive (CAR) format. It uses content-based identifiers to ensure integrity and tamper resistance while simplifying application management across the network. This reduces centralization and enhances security when loading and executing code.
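The integrity property of content-based identifiers can be shown with a plain SHA-256 digest. This is a simplification of the idea only: real IPFS CIDs wrap the hash in multihash and multibase encodings, and the CAR format is a container for many such blocks, neither of which is reproduced here.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Simplified stand-in for a CID: the hex SHA-256 digest of the content."""
    return hashlib.sha256(data).hexdigest()


class ContentStore:
    """Toy store that addresses blobs by the hash of their bytes."""

    def __init__(self):
        self._blobs = {}

    def put(self, data: bytes) -> str:
        cid = content_id(data)
        self._blobs[cid] = data
        return cid

    def get(self, cid: str) -> bytes:
        data = self._blobs[cid]
        # Re-hash on read: altered bytes no longer match their identifier.
        if content_id(data) != cid:
            raise ValueError("content does not match its identifier")
        return data


store = ContentStore()
cid = store.put(b"(module)")           # placeholder bytes for a WASM file
assert store.get(cid) == b"(module)"

store._blobs[cid] = b"(tampered)"      # simulate a modified copy
try:
    store.get(cid)
except ValueError as err:
    print("rejected:", err)
```

Because the identifier is derived from the bytes themselves, any node can verify a downloaded module without trusting whoever served it.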

The network’s extension system adds another layer of flexibility. Network Agents download CGI Extensions, launch applications using the WASM runtime, and manage a verification process that scans and validates all extensions before storing information in a SQLite database. The structured approach enables the runtime environment to expand its capabilities while maintaining security and reliability.

Through this complex yet elegantly designed flow, Bless transforms abstract computational requests into tangible results while preserving the security, efficiency, and decentralized nature that define its architecture.

How Bless’ Testnet Performance Stacks Up: Proving Real-World Capabilities

The Bless Network testnet has demonstrated impressive real-world performance metrics that validate its architectural approach:

- 14,211 workloads processed during testnet operations

- 900ms average failover response time, ensuring network resilience

- 5,481,371 registered nodes across the testing period

- 378,236 users have successfully referred others to join

- 1,131,503 weekly active nodes operating from 210 countries and regions

Based on current participation data, the network estimates that with 1 million active nodes (representing roughly 250 PFLOPS of computing power), the system could upscale 55,000 videos (30 minutes each) from 1080p to 4K in just one hour if running at 100% utilization.
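It helps to unpack what that estimate implies per node. The short calculation below uses only the figures quoted above; the derived quantities (per-node throughput, footage per node, and compute budget per second of video) are back-of-the-envelope consequences of those figures, not numbers published by Bless.

```python
nodes = 1_000_000
total_flops = 250e15            # 250 PFLOPS across the network
videos = 55_000
minutes_per_video = 30
window_seconds = 3600           # one hour at 100% utilization

per_node_gflops = total_flops / nodes / 1e9
video_seconds = videos * minutes_per_video * 60
footage_per_node_s = video_seconds / nodes
flop_per_video_second = total_flops * window_seconds / video_seconds

print(f"average per node: {per_node_gflops:.0f} GFLOPS")
print(f"total footage: {video_seconds:,} seconds")
print(f"footage per node: {footage_per_node_s:.0f} seconds")
print(f"budget per second of video: {flop_per_video_second:.2e} FLOP")
```

In other words, the estimate assumes an average node delivers about 250 GFLOPS and processes roughly 99 seconds of footage in the hour, with a budget of about 9 trillion floating-point operations per second of output video.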

How Bless Outsmarts Attackers: Advanced Security Design

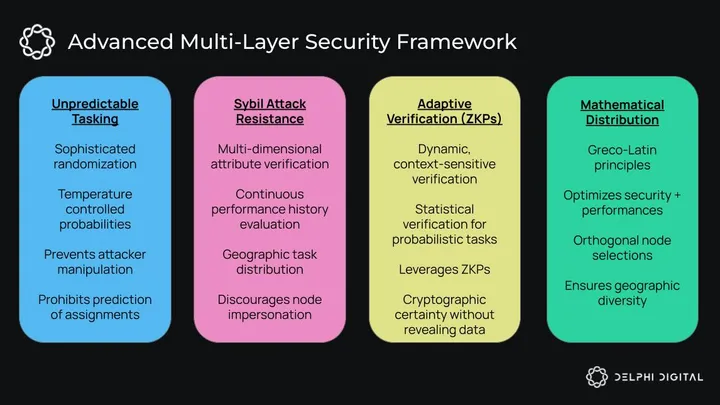

Bless’ security framework represents a fundamental evolution beyond traditional approaches. It implements multiple layers of protection that make attacks prohibitively expensive while maintaining optimal performance:

- Generating Unpredictability to Thwart Attackers

Bless prevents attacks by making task assignment unpredictable. Traditional networks suffer from predictability since attackers can anticipate which nodes are to receive specific tasks. Bless eliminates this through sophisticated randomization that maintains efficiency while preventing exploitation. The system shuffles task queues, randomly selects candidates, and uses temperature-controlled probabilities to ensure no attacker can predict or manipulate assignments.

What truly distinguishes the Bless approach is its use of temperature-controlled acceptance probabilities. As in simulated annealing for optimization problems, this technique introduces controlled variability that prevents deterministic prediction while still ensuring tasks are appropriately matched to capable nodes. The suitability score comparisons create a system where even the most sophisticated observer cannot reliably predict which node will receive a specific task next.

This carefully calibrated randomization strikes a delicate balance. It maintains optimal performance and appropriate resource matching while generating sufficient unpredictability to thwart attackers. The result is a system where attackers face prohibitive costs when attempting to manipulate node assignment.

- The Work That Goes Into Resisting Sybil Attacks

What are Sybil attacks? They happen when bad actors flood a given network with fake nodes they control to gain malicious influence.

Bless Network defends against these attacks by examining nodes across multiple dimensions, including performance history, geographic distribution, and hardware characteristics. Monitoring these patterns makes it extremely difficult to create convincing fake nodes at scale: doing so would require enormous resources, making the attack prohibitively expensive.

- Adaptive Task Verification

Unlike traditional networks that apply one-size-fits-all verification approaches, Bless reimagines verification as a dynamic, context-sensitive process. This adaptive approach recognizes that different computational tasks have fundamentally different security requirements and verification needs.

For straightforward deterministic computations, the system might employ simple result comparison across multiple nodes. When dealing with probabilistic algorithms, statistical verification methods may be employed instead. These look for consensus within acceptable margins rather than exact matches.

For particularly complex calculations, the network can leverage ZKPs. This allows for cryptographic certainty without the computational expense of redundant execution.
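The first two verification styles can be sketched as follows. The two-thirds quorum, the use of the median as the reference value, and the function names are assumptions for illustration; the zero-knowledge path is not modeled because it requires a proving system rather than result comparison.

```python
from collections import Counter
from statistics import median


def verify_deterministic(results, quorum=2 / 3):
    """Exact-match comparison: accept the majority value if enough nodes agree."""
    value, count = Counter(results).most_common(1)[0]
    return count / len(results) >= quorum, value


def verify_statistical(results, tolerance, quorum=2 / 3):
    """Margin-based comparison: accept if enough results sit near the median."""
    center = median(results)
    close = [r for r in results if abs(r - center) <= tolerance]
    return len(close) / len(results) >= quorum, center


VERIFIERS = {
    "deterministic": verify_deterministic,
    "probabilistic": verify_statistical,
}


def verify(kind, results, **options):
    """Choose the verification method from the task's declared kind."""
    return VERIFIERS[kind](results, **options)


print(verify("deterministic", ["0xabc", "0xabc", "0xdef"]))
print(verify("probabilistic", [0.70, 0.71, 0.69, 0.95], tolerance=0.02))
```

In the second call one node reports an outlier, but three of four results fall within the margin, so the batch is accepted with the median as the agreed value.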

- Forward-Thinking Security via ZKPs

Bless’s sophisticated integration of ZKP systems for appropriate computational tasks demonstrates its forward-thinking security architecture. These cryptographic techniques allow the network to verify computation correctness without revealing sensitive inputs – a crucial capability for privacy-preserving applications.

Beyond privacy benefits, ZKPs can dramatically reduce verification overhead for complex calculations. Rather than requiring multiple complete executions of a complex task, the network can achieve cryptographic certainty through simple mathematical proof validation.

- Mathematical Distribution: The Greco-Latin Square Advantage

Perhaps the most innovative aspect of Bless’s security architecture is its application of Greco-Latin square principles to node selection. This mathematical approach creates a balanced system that optimizes security, performance, and resource utilization simultaneously.

Drawing inspiration from combinatorial mathematics, this method creates orthogonal sets of node selections. This ensures no single node becomes a central point of failure. Tasks are systematically distributed across diverse geographic regions, preventing both accidental clustering and targeted attacks on specific network segments.

Simply put, the mathematical structure ensures hardware capabilities are appropriately matched to requirements without creating obvious patterns that could be exploited.
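For readers unfamiliar with the term, a Greco-Latin square overlays two Latin squares so that every ordered pair of symbols appears exactly once. The sketch below builds one with the standard construction for odd orders. Reading the two symbols as "region" and "hardware class", and rows as scheduling rounds, is an interpretation added here for illustration; the report does not specify how Bless maps the square onto node selection.

```python
def greco_latin_square(n):
    """Return an n x n grid of (a, b) pairs forming a Greco-Latin square.

    Uses a = (i + j) mod n and b = (2i + j) mod n, which is valid for odd n.
    """
    if n < 3 or n % 2 == 0:
        raise ValueError("this construction needs an odd order of at least 3")
    return [[((i + j) % n, (2 * i + j) % n) for j in range(n)]
            for i in range(n)]


def is_greco_latin(square):
    """Check the Latin property of both layers and the uniqueness of pairs."""
    n = len(square)
    symbols = set(range(n))
    for layer in (0, 1):
        rows_ok = all({cell[layer] for cell in row} == symbols for row in square)
        cols_ok = all({square[i][j][layer] for i in range(n)} == symbols
                      for j in range(n))
        if not (rows_ok and cols_ok):
            return False
    pairs = {cell for row in square for cell in row}
    return len(pairs) == n * n


# Hypothetical reading: rows are rounds, columns are task slots,
# each cell is (region index, hardware-class index).
for row in greco_latin_square(3):
    print(row)
# [(0, 0), (1, 1), (2, 2)]
# [(1, 2), (2, 0), (0, 1)]
# [(2, 1), (0, 2), (1, 0)]
```

Under that reading, every round touches each region and each hardware class exactly once, and no region and hardware combination is reused across the schedule, which is the balancing property the section attributes to the approach.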

Breaking the Data Center Monopoly: Bless’ Tiered Computational Model

Transforming Idle Devices into Productive Infrastructure

The Bless Network fundamentally reimagines how computational power is sourced and distributed. Rather than relying on massive, centralized data centers that consume enormous amounts of electricity and require substantial capital investment, the network taps into the vast ocean of computing resources that already exist in our pockets, on our desks, and throughout our homes.

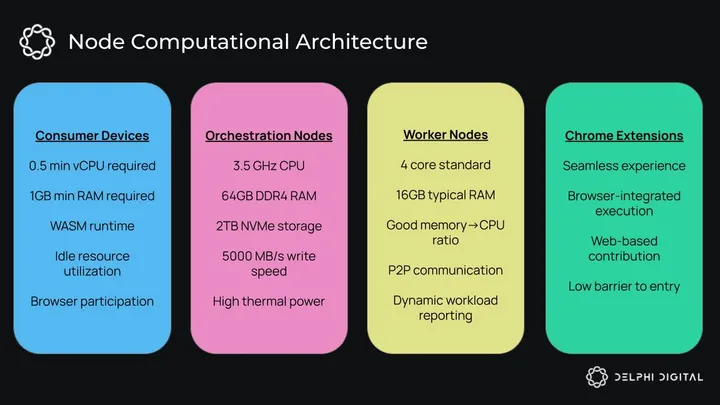

This democratized approach transforms everyday devices into valuable contributors to a global computational grid. Your smartphone charging overnight, your gaming PC sitting idle between sessions, or even your laptop during lunch breaks can all become productive participants in this revolutionary network.

The barriers to entry are intentionally minimal. Worker nodes can operate effectively with just 0.5 vCPU and 1GB of RAM. Standard 4-core processors with 16GB of RAM can handle substantial workloads that would traditionally require enterprise-grade infrastructure.

Hybrid Architecture: Best of Both Worlds

While user devices form the vibrant foundation of the network, Bless strategically incorporates dedicated infrastructure to ensure enterprise-grade reliability and performance consistency. This hybrid approach creates a robust backbone that can weather the natural fluctuations inherent in crowd-sourced computing.

Native Nodes represent the network’s premium tier: purpose-built machines optimized for peak performance. Blockchain Orchestration Nodes run on high-performance specifications, including 3.5GHz CPUs with substantial thermal design power, 64GB DDR4 RAM, and 2TB NVMe storage capable of 5000 MB/s write speeds.

Chrome Extension Nodes represent perhaps the most innovative aspect of the support system. They enable low-friction participation through browser-based computing, so users can contribute computational resources without installing or configuring standalone software. This integrates network participation with everyday web browsing. By eliminating the technical barriers that have traditionally kept casual users out of distributed networks, Bless dramatically expands the potential node operator base.

Use Cases and Applications

The Bless Network unlocks a new spectrum of possibilities for decentralized applications. Bless moves beyond theoretical concepts to address real-world computational needs. Its unique architecture, combining distributed edge computing with verifiable execution, positions it as a foundational layer for the next generation of internet services.

Bless’ capabilities extend into multiple domains, where partnerships are already in place, to deliver immediate value when the mainnet launches. The network powers advanced AI agents for smart infrastructure, enables real-time trading agents that operate on-chain, and redefines how internet economics works through verifiable compute and data access protocols.

Machine Learning and AI Processing

The democratization of artificial intelligence has long been hindered by the astronomical costs and technical barriers of traditional centralized infrastructure. Bless changes this equation by creating a distributed AI ecosystem, making machine learning accessible to developers and organizations regardless of their resource constraints.

Revolution of Distributed Training

Traditional machine learning training requires expensive GPU clusters that often remain idle or underutilized. The Bless Network transforms this landscape by enabling model sharding across multiple devices – different parts of neural networks can be trained simultaneously across geographically distributed nodes. This approach not only reduces costs but can actually improve training times through massive parallelization. Gradients are computed in parallel and synchronized through sophisticated parameter server architectures.

The network’s federated learning capabilities are particularly compelling for organizations concerned about data privacy. Rather than centralizing sensitive datasets, companies can contribute to model training while keeping their data local. Only gradient updates are shared across the network. This approach addresses one of the most significant barriers to AI adoption in regulated industries.
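
The gradient-sharing idea can be illustrated with a toy federated round. This is a minimal sketch under stated assumptions: a one-parameter linear model, three hypothetical clients, and a plain weighted average of gradients. It is not a description of Bless’s actual training protocol.

```python
def local_gradient(weight: float, data: list[tuple[float, float]]) -> float:
    """Gradient of mean squared error for the model y = weight * x."""
    return sum(2 * (weight * x - y) * x for x, y in data) / len(data)


def federated_round(weight: float, clients: list[list[tuple[float, float]]], lr: float) -> float:
    """One round: each client sends only a gradient; the coordinator averages them."""
    total = sum(len(data) for data in clients)
    averaged = sum(local_gradient(weight, data) * len(data) for data in clients) / total
    return weight - lr * averaged


# Hypothetical private datasets; the raw (x, y) pairs never leave each client.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0)],
    [(0.5, 1.5), (4.0, 12.0)],
]

weight = 0.0
for _ in range(200):
    weight = federated_round(weight, clients, lr=0.01)
```

The coordinator only ever sees one number per client per round, yet the shared weight still converges toward the slope that fits all three datasets.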

Optimization of Edge AI

The geographic distribution of Bless nodes creates an ideal foundation for AI inference at the edge. This brings computation closer to end-users and dramatically reduces latency. Such a proximity advantage is crucial for real-time applications like autonomous vehicles, augmented reality, and interactive AI assistants, where milliseconds matter. The network’s hardware-aware assignment system ensures that computer vision tasks run on GPU-enabled nodes, while natural language processing workloads are matched to CPU-optimized workers.
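
As a rough illustration of hardware-aware assignment, the sketch below picks the lowest-latency node whose hardware matches the workload type. The `Node` fields, the `PREFERS_GPU` table, and the greedy rule are all assumptions made for the example; the network’s real matcher is described elsewhere in this report as using simulated annealing.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    has_gpu: bool
    latency_ms: float


# Hypothetical mapping from workload type to whether a GPU is preferred.
PREFERS_GPU = {"vision": True, "nlp": False}


def assign(workload: str, nodes: list[Node]) -> Node:
    """Pick the lowest-latency node whose hardware matches the workload."""
    wants_gpu = PREFERS_GPU[workload]
    matching = [n for n in nodes if n.has_gpu == wants_gpu]
    candidates = matching or nodes  # fall back to any node if none match
    return min(candidates, key=lambda n: n.latency_ms)


nodes = [
    Node("gaming-pc", has_gpu=True, latency_ms=18.0),
    Node("laptop", has_gpu=False, latency_ms=9.0),
    Node("workstation", has_gpu=True, latency_ms=12.0),
]
```

Here a vision task would land on the workstation rather than the closer laptop, because hardware fit is checked before latency.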

Tamper-Proof Model Distribution

The CAR (Content Addressable Archive) format provides an elegant solution to one of AI’s most pressing challenges: secure and efficient model distribution. Through content-addressed storage, the network ensures model integrity while enabling efficient caching that reduces redundant downloads. Each model version receives an immutable identifier. This creates a tamper-proof distribution system that eliminates the need for centralized repositories while providing cryptographic guarantees of model authenticity.
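
The core property of content addressing can be shown in a few lines. This is a simplified sketch: it uses a bare hex SHA-256 digest as the identifier and an in-memory dictionary as the store, whereas real CAR archives use structured content identifiers (CIDs). `ModelStore` and `content_id` are hypothetical names.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Simplified content identifier: hex SHA-256 of the bytes."""
    return hashlib.sha256(data).hexdigest()


class ModelStore:
    """Toy content-addressed store keyed by the hash of each blob."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        cid = content_id(data)
        self._blobs.setdefault(cid, data)  # identical content is stored once
        return cid

    def get(self, cid: str) -> bytes:
        data = self._blobs[cid]
        if content_id(data) != cid:  # re-hash on read to detect tampering
            raise ValueError("stored bytes do not match their identifier")
        return data


store = ModelStore()
v1 = store.put(b"model weights v1")
v2 = store.put(b"model weights v2")
```

Because the identifier is derived from the bytes themselves, any change to a model produces a different identifier, and any node can check a download without trusting the sender.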

Gaming and Real-Time Applications

The gaming industry has always pushed the boundaries of what’s possible with distributed systems. The Bless Network offers solutions to challenges that have plagued multiplayer gaming for decades. From latency issues that ruin competitive experiences to the massive infrastructure costs that limit indie developers, the network provides a comprehensive platform for next-generation gaming experiences.

Transforming Multiplayer Architecture

Traditional multiplayer games struggle with the fundamental tension between global consistency and regional performance. Players on different continents often experience vastly different gameplay quality. Some regions suffer from high latency, which makes competitive play impossible. Bless addresses this through hierarchical state synchronization. For example, regional game states are maintained locally for responsive gameplay while being synchronized across regions to maintain global consistency.

This distributed approach enables edge-based physics simulation: game physics calculations happen close to players rather than in distant data centers. The result is responsive gameplay that feels consistent regardless of geographic location, while redundant state management keeps the game available even if individual nodes fail.

Real-Time Communication Renaissance

The network’s low-latency execution capabilities make it ideal for real-time communication systems that demand consistent quality worldwide. Voice and video processing can happen at edge locations, reducing the delay that makes international calls feel awkward and unnatural. Distributed relay systems improve connectivity by finding optimal routing paths, while real-time translation services can process conversations with minimal latency.

These capabilities extend beyond traditional communication to new forms of interactive experience. Imagine, for example, virtual conferences where real-time language translation happens locally, preserving privacy while enabling seamless global collaboration.

Anti-Cheat Systems That Scale

Gaming security has always been an arms race between developers and cheaters. The Bless Network’s distributed verification mechanisms provide a new approach to this challenge.

By distributing verification across multiple validation points, the network makes it substantially more difficult for cheaters to compromise game integrity, because a cheater would have to subvert several independent validators rather than one. The WASM sandboxing environment provides tamper-resistant execution, and reputation systems can identify suspicious behavior patterns across the entire network.
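
One simple way to picture multi-point verification is a quorum vote over independently computed results. The sketch below is an assumption-laden illustration: the validator names, the two-thirds threshold, and the string "state hashes" are invented for the example and are not taken from Bless’s design.

```python
from collections import Counter
from typing import Optional


def verify_by_quorum(results: dict[str, str], quorum: float = 2 / 3) -> tuple[Optional[str], list[str]]:
    """Accept a result only if a quorum of validators report the same value.

    Returns (accepted value or None, validators that disagreed with it).
    Expects at least one reported result.
    """
    counts = Counter(results.values())
    value, votes = counts.most_common(1)[0]
    if votes / len(results) < quorum:
        return None, sorted(results)  # no agreement: treat every report as suspect
    dissenters = sorted(v for v, r in results.items() if r != value)
    return value, dissenters


reports = {
    "validator-a": "state-hash-1",
    "validator-b": "state-hash-1",
    "validator-c": "state-hash-9",  # hypothetical tampered result
}
accepted, flagged = verify_by_quorum(reports)
```

The list of dissenting validators is the kind of signal a reputation system could accumulate over time.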

DeFi and On-Chain Applications

Decentralized finance represents one of the most transformative applications of blockchain technology. Yet, many DeFi protocols still rely on centralized infrastructure for computation-heavy operations.

This contradiction undermines the philosophical foundations of decentralization while creating single points of failure. In contrast, the Bless Network offers a path toward truly decentralized financial infrastructure that aligns with DeFi’s core principles.

Breaking Down Chain Barriers

The multi-chain ecosystem has created a fragmented landscape where assets and liquidity are siloed across different blockchains. Here, Bless can serve as a neutral execution layer, enabling seamless cross-chain operations without requiring trust in any single blockchain.

Sophisticated Financial Computing

DeFi protocols often require complex calculations that strain blockchain computational limits and drive up transaction costs. Risk assessment algorithms that incorporate real-time market data, yield optimization across multiple protocols, and algorithmic trading strategies with low-latency execution all become feasible when distributed across the Bless Network.

Regulatory Compliance Through Transparency

As DeFi protocols face increasing regulatory scrutiny, the network’s transparent computation and immutable execution logs provide audit trails that can support regulatory requirements without compromising decentralization. Geographic node selection enables regional compliance, and zero-knowledge techniques can preserve privacy where necessary.

Bless’ combination of transparency and privacy protection positions DeFi protocols to meet regulatory requirements while maintaining their decentralized nature.

Partnership with Space and Time

Smart cities and infrastructure networks need autonomous systems that can make split-second decisions. Energy grids require constant balancing. EV charging networks must optimize based on demand patterns. Traffic systems need real-time route optimization.

The Bless Network enables AI agents deployed on edge devices to handle these critical infrastructure decisions autonomously.

Autonomous Infrastructure Management

Space and Time, a ZK-focused blockchain and Bless partner, provides sub-second ZK coprocessor capabilities for indexed blockchain data, creating a strong foundation for smart infrastructure applications. AI agents deployed through Bless can access cryptographically verifiable inputs, including weather forecasts, grid load projections, and energy prices. Each decision made by these agents becomes auditable: the data used and the compute path followed remain transparently verifiable.

This architecture works particularly well for EV charging networks. Agents can analyze real-time grid capacity, electricity prices, and user demand patterns, then optimize charging schedules to prevent grid overload while minimizing costs for users. The same approach applies to public transit routing, where agents process traffic data, weather conditions, and passenger flows to optimize routes in real time.
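
The charging-schedule trade-off can be sketched as a small greedy allocation: fill the cheapest hours first, never exceeding the spare grid capacity in any hour. The prices, capacities, and the greedy rule itself are illustrative assumptions, not the agents’ actual optimization logic.

```python
def schedule_charging(demand_kwh: float, prices: list[float], capacity_kwh: list[float]) -> list[float]:
    """Fill the cheapest hours first without exceeding spare grid capacity."""
    plan = [0.0] * len(prices)
    remaining = demand_kwh
    for hour in sorted(range(len(prices)), key=lambda h: prices[h]):
        if remaining <= 0:
            break
        plan[hour] = min(remaining, capacity_kwh[hour])
        remaining -= plan[hour]
    if remaining > 1e-9:
        raise ValueError("demand exceeds available grid capacity")
    return plan


prices = [0.30, 0.12, 0.10, 0.25]    # hypothetical price per kWh for four hours
capacity = [20.0, 15.0, 10.0, 20.0]  # hypothetical spare grid capacity per hour (kWh)
plan = schedule_charging(30.0, prices, capacity)
```

With these inputs the most expensive hour is left unused, and no hour draws more than its spare capacity.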

Verifiable Data Integration

The integration between Bless and Space and Time enables ZK-proven data delivery to smart contracts powering data-driven crypto applications. Infrastructure agents need reliable, tamper-proof data sources to make critical decisions. Weather data affects energy production forecasts, market prices influence grid balancing decisions, and traffic patterns determine optimal routing algorithms.

ZK-proof-enabled SQL queries ensure that data inputs remain cryptographically verifiable. This creates a transparent and accountable AI system where every infrastructure decision can be audited and verified. The same infrastructure generalizes to other proof-generating data providers, enabling a new design space for AI systems that provide verifiable proof of their activities.

Redefining Internet Economics

The current web runs on extractive models in which crawlers collect data without consent. AI agents powered by Bless could negotiate access to web data using on-chain protocols like x402, with sites receiving compensation in exchange for structured content. With verifiable data access and decentralized execution, agents can respect digital property rights while maintaining open access for verified human users. This lays the foundation for a more balanced, accountable web.

Partnership with Monad

Financial markets operate in milliseconds, but traditional trading infrastructure relies on centralized systems that create single points of failure and introduce unnecessary latency. The integration between Bless and Monad enables deployment of real-time, autonomous AI trading agents that run entirely on user devices while interacting directly with high-throughput blockchain infrastructure.

Edge-Based Trading Intelligence

Monad delivers 10,000 transactions per second with 1-second block times and single-slot finality, creating a strong environment for high-frequency trading applications. Bless provides the decentralized compute layer, where agents perform low-latency inference tasks locally. Trend detection algorithms analyze market patterns in real time, and order book analysis happens on user devices rather than remote servers.

This architecture supports a new class of market agents capable of sub-second response times. Local compute eliminates network latency for inference tasks, and trust-minimized on-chain execution ensures transparency without sacrificing speed. Once a trading decision is made, agents use integrated RPC access to Monad to submit transactions or read on-chain state.
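
As an illustration of the kind of lightweight inference an agent could run locally before submitting a transaction, here is a minimal moving-average crossover signal. The window sizes and the buy/sell/hold labels are assumptions for the sketch; it is a textbook indicator, not a strategy attributed to Bless or Monad, and it omits the on-chain submission step entirely.

```python
def moving_average(prices: list[float], window: int) -> float:
    """Mean of the most recent `window` prices."""
    return sum(prices[-window:]) / window


def trend_signal(prices: list[float], fast: int = 3, slow: int = 6) -> str:
    """Return 'buy', 'sell', or 'hold' from a moving-average crossover."""
    if len(prices) < slow:
        return "hold"  # not enough history yet
    fast_avg = moving_average(prices, fast)
    slow_avg = moving_average(prices, slow)
    if fast_avg > slow_avg:
        return "buy"
    if fast_avg < slow_avg:
        return "sell"
    return "hold"
```

Because the computation needs only a short price history, it can run on the user’s device with no round trip to a remote server; only the resulting order would need to reach the chain.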

Democratized Trading Infrastructure

Traditional high-frequency trading requires expensive co-location services and specialized hardware. This creates barriers that favor large institutional players over individual traders. The Bless/Monad integration democratizes access to high-performance trading infrastructure, allowing anyone to deploy sophisticated trading agents on their own devices.

By removing centralized intermediaries and minimizing latency, the approach enables fairer and more composable DeFi applications – trading logic can be both shared and personalized. Open-source trading strategies can be deployed by multiple users while maintaining individual customization. The process remains transparent, but users’ personal data stays private where necessary.

Scalable Market Operations

Monad’s parallel execution and superscalar pipelining enable parallelized performance in the EVM. This high-throughput environment supports complex trading strategies that would be impractical on traditional, general-purpose blockchains. Multi-asset arbitrage strategies can be executed simultaneously across different markets, and algorithms can monitor positions in real time, adjusting them automatically as conditions change.

How Bless Stands Out from Other Networks

The distributed computing landscape features numerous projects attempting to democratize computational resources. Each takes a fundamentally different approach that reveals distinct strengths and limitations. Understanding how Bless Network differentiates itself requires examining not only technical specifications but also the philosophical and practical approaches that drive its architectural decisions.

Modular blockchain architectures aim to specialize different layers of the blockchain stack, such as data availability, execution, and consensus. While these solutions bring significant advancements, Bless Network takes a complementary yet distinct approach. It focuses on decentralized computation and execution across a wide range of devices.

Celestia and Data Availability

While Celestia has carved out a strong position in the data availability space, Bless Network takes a fundamentally different approach. It provides computational execution rather than just data storage and availability. Instead of separating concerns like traditional modular architectures, Bless combines storage and processing in a unified system. This leverages user devices for both storage and computation, eliminating the need for dedicated validators. Bless Network’s focus on WASM execution environments opens up possibilities beyond blockchain-specific data structures. It addresses the broader challenge of distributed computation and execution.

Polygon and Execution Environments

Bless Network’s execution model represents a paradigm shift from Polygon’s validator-centric approach. Rather than requiring high-performance validators, Bless operates across device-agnostic consumer hardware, making its network accessible to everyday users. Bless’ language-flexible WASM runtime breaks free from EVM-specific limitations. Its geographic distribution focus prioritizes real-world accessibility over purely blockchain-centric scaling. Most notably, Bless transforms idle resource utilization into productive computation. This contrasts sharply with Polygon’s dedicated staking infrastructure model.

Amazon Web Services (AWS)

The centralized nature of AWS creates inherent vulnerabilities that the Bless Network elegantly sidesteps through distributed execution. This eliminates single points of failure and regional outages that have plagued traditional cloud services. While AWS requires additional CDN services to achieve edge computing capabilities, Bless delivers edge computing by default through its distributed architecture. The network’s dynamic resource allocation adapts to real-time needs rather than forcing users into fixed instance types and sizes – all while operating on community-owned infrastructure that challenges the corporate-controlled data center model.

Google Cloud Platform

Google Cloud Platform’s substantial competitive moat, achieved through large capital investments, stands in stark contrast to Bless’s democratized participation model. Bless welcomes anyone to contribute resources to the network. The flexibility extends to hardware utilization, supporting diverse device types rather than standardized server configurations that limit participation. Decentralized governance replaces corporate policy control. Native Web3 integration feels natural rather than retrofitted like traditional cloud providers’ blockchain services.

Web3 Competitors

The Web3 space has seen the emergence of various decentralized computing projects – each attempting to leverage blockchain technology for distributed computational power. While these networks share a common goal of decentralization, Bless Network applies a different framework.

Bless’s biggest product differentiators boil down to its approach to resource utilization, execution environment, and economic model, with a focus on inclusivity and real-world performance.

Akash Network

Akash Network’s focus on professional hosting providers creates a different ecosystem than Bless’s consumer device utilization strategy. While Akash operates through containerized applications in a marketplace-based resource allocation system, Bless leverages WASM-based execution with dynamic task distribution that responds to real-time network conditions. The built-in verification mechanisms provide an additional layer of trust compared to systems that rely primarily on economic incentives. Together, these choices make Bless more accessible to everyday users than approaches focusing on professional hosting providers.

Grass Network

Grass Network focuses on monetizing unused internet bandwidth. It essentially allows users to sell their idle network resources. While this aligns with the broader concept of utilizing spare device capabilities, Bless Network takes a different approach. It focuses on monetizing computational power rather than just bandwidth. Bless provides a robust framework for executing complex computational tasks, particularly AI and machine learning workloads, directly on a global network of distributed devices. This distinguishes Bless from Grass by offering a more comprehensive and versatile platform for general-purpose decentralized computing. It moves beyond simple data routing to active processing and verifiable execution.

Conclusion

The Bless Network has built something genuinely different. The platform has taken the theoretical benefits of decentralized computing and made them practical. The testnet numbers show this isn’t just sophisticated academic research; it’s working at scale.

The combination of accessible participation (anyone can join), powerful capabilities (serious AI workloads), and economic incentives (monetize idle resources) creates a compelling platform for new consumers. No complex setups or hardware. No maintenance headaches. Just install and contribute. Undoubtedly, the potential for real traction is here.

But let’s be realistic: Bless is currently in the testnet phase. The real test comes when the project scales to full production and faces the messy realities of global deployment. Regulatory challenges, technical edge cases, and user adoption at scale are all unknowns.

That said, the foundation looks solid. The impressive metrics achieved during testing, including over 14,000 processed workloads and more than 5.4 million registered nodes across 210 countries, demonstrate significant progress. The technical architecture addresses real problems in smart ways, and the economic model aligns incentives properly. Clearly, the Bless team understands both the theoretical computer science and the practical engineering challenges.

If they can execute on this vision, we might be looking at the beginning of a real shift toward decentralized internet infrastructure. Computing power would become a shared resource instead of a corporate-controlled commodity. Innovation would accelerate because the barriers to accessing computational resources could drop dramatically.

Bottom line: Bless has laid a solid technical foundation and is well-positioned to translate its vision into reality. We’re excited to see where it goes from here.
