Introduction

Delphi Labs is a blockchain protocol R&D arm, with a team of ~50 dedicated to building new Web3 primitives. Previously, the team was focused on researching & developing protocols on Terra. In the wake of the Terra collapse, Delphi Labs was faced with a big decision regarding where to focus our builder efforts going forward.

With Terra’s collapse demonstrating the potential downsides of building on the wrong platform, we wanted to make sure we took our time, learned our lessons, and made the right choice regarding where to focus our efforts moving forward. Our goal was to research every major L1/L2, both live and upcoming, understand their tradeoffs, and figure out where the next most exciting DeFi frontier lies.

Before we begin, it’s important to highlight that this post should not be taken as an absolute judgment about which ecosystem is best, but rather a subjective analysis of which ecosystem best suits our specific context, vision, and values. In Part I, we outline these design constraints and the resulting platform requirements we looked to optimise for. In Part II, we analyse each of the platforms based on these requirements and explain why we ultimately decided on the Cosmos ecosystem.

This was an enlightening process, and this post is our attempt to open-source our findings from this research in the hopes that they may be helpful to others in the space. We welcome feedback and criticism from the community to stress test our thinking and ensure there’s nothing we’ve missed.

Part I – Labs Design Constraints

While we tried as much as possible to approach this exercise from a blank slate, Labs has existing context, vision, and values which constrain our decision space. This includes our focus on DeFi and our vision for how it should be built, our views on multi-chain and where the space is headed, and the resulting emphasis on cross-chain.

Building for DeFi

There are many different kinds of Web3 protocols and products and each will face different design constraints when selecting the appropriate platform to build on. Delphi Labs has focused its research & development efforts primarily on DeFi protocols, since this is the vertical we’re most interested in and best suits our team’s existing background and skill set. It’s also one that we’ve been thinking about deeply for a long time, having begun covering DeFi for the research business in 2018, and investing in it via Ventures in 2019. Before Labs was officially launched as a separate Delphi unit, we also had the privilege of spending years consulting for world-class DeFi pioneers. This is the area that we feel we understand best and we therefore approached this entire exercise from that point of view.

DeFi Rebundled

We don’t believe the end-game DeFi UX involves people using separate protocols for each of their DeFi jobs-to-be-done (spot trading, lending & borrowing, leveraged trading, yield farming, derivatives, etc.). We believe this will be rebundled into a single, vertically integrated UX which looks and feels more like a CEX.

Specifically, DeFi credit lines such as those made possible by Mars can facilitate the creation of a “Universal DeFi credit account”, which users could use to perform leveraged interactions with whitelisted DeFi apps with a single account-level LTV. This recreates the “sub-account”-like experience on centralised exchanges while maintaining the advantages of decentralisation such as being non-custodial, censorship-resistant and integrating key DeFi primitives. This requires speed and synchronous composability (we don’t believe an experience based on asynchronous cross-chain contract calls can ever compete with a CEX), as well as a vibrant ecosystem to facilitate integrations and liquidity.
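To make "a single account-level LTV" more concrete, here is a minimal sketch of how such an account might be checked. The names `Position`, `borrowing_power`, `health_factor` and `can_borrow`, and the per-asset LTV weights, are hypothetical illustrations. They are not Mars's actual contracts or risk parameters.

```python
from dataclasses import dataclass


@dataclass
class Position:
    """One collateral position inside a hypothetical credit account."""
    name: str
    value_usd: float
    max_ltv: float  # illustrative per-asset borrowing weight, not a real parameter


def borrowing_power(collateral: list[Position]) -> float:
    # Every position contributes its value weighted by its own max LTV.
    return sum(p.value_usd * p.max_ltv for p in collateral)


def health_factor(collateral: list[Position], debt_usd: float) -> float:
    # Above 1.0 the account as a whole is sufficiently collateralised.
    if debt_usd == 0:
        return float("inf")
    return borrowing_power(collateral) / debt_usd


def can_borrow(collateral: list[Position], debt_usd: float, extra_usd: float) -> bool:
    # A new borrow is checked against the whole account, not a single protocol.
    return health_factor(collateral, debt_usd + extra_usd) >= 1.0


account = [
    Position("ETH spot", 10_000, 0.8),
    Position("AMM LP token", 5_000, 0.5),
    Position("vault share", 2_000, 0.6),
]
print(health_factor(account, 9_000))
```

The point of the design is that spot holdings, LP tokens and vault shares all count towards one shared borrowing limit, much like cross-margined sub-accounts on a CEX, rather than each protocol enforcing its own isolated LTV.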

This is our best guess on what the DeFi end-game experience might look like and so we wanted to make sure we selected an ecosystem that would facilitate this vision.

Where We See the Space Going

There are two extreme views of how crypto will end up. The first is that all activity will converge onto a single general purpose execution environment (i.e. ‘Monolithic’ view). The second is that there will be a large number of specialised execution environments, each with their own designs and tradeoffs (i.e. ‘Multi-chain’ view). Obviously, there is an entire spectrum of views in between these two extremes. (For more on this, you can read our previous Pro report on it here.)

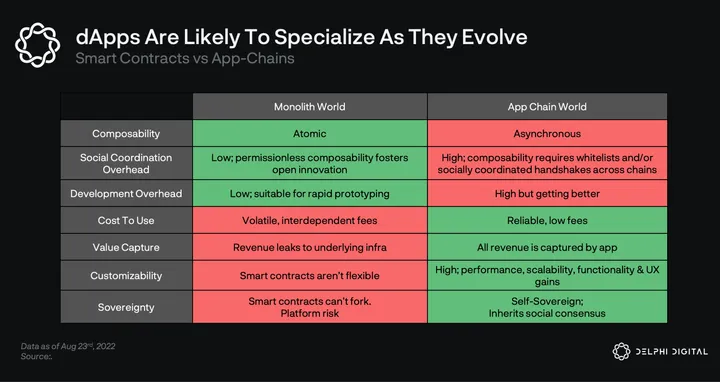

Ultimately, we believe the key tradeoff here is between the synchronous composability provided by monoliths versus the benefits of specialisation. Our view is that projects will increasingly opt for specialisation, with crypto becoming more and more multi-chain as a result. In this section, we explain why we believe this to be the case.

We believe there are three key benefits to specialisation: lower/more predictable resource costs, customizability and sovereignty.

Lower/More Predictable Resource Costs

Our base assumption is that demand for block space, similar to demand for computation, is elastic; the cheaper the block space, the more different kinds of computation are able to move on-chain. This means that no matter how fast a monolithic chain is, demand for blockspace is likely to outstrip supply, with costs rising over time.

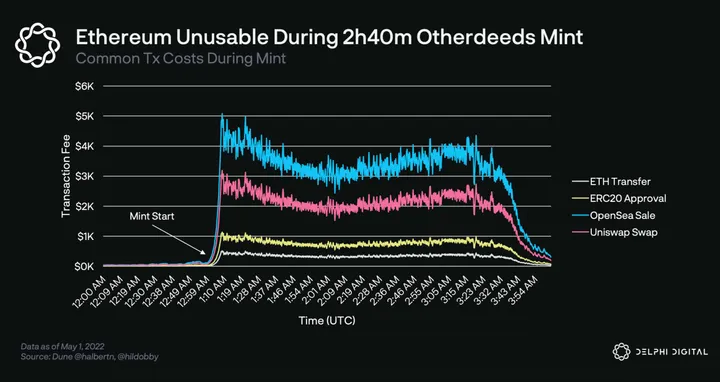

In addition to this, an application on a monolithic chain constantly competes for blockspace with all other applications on that chain. This leads to network congestion, which disrupts normal UX through either extraordinarily high fees or chain halts.

ETH transfers exceeding 500 USD/tx during Otherdeeds mint

Collectively, this means dApps on monolithic chains will:

a) Face increasing costs over time, as more activity moves on-chain

b) Face greater uncertainty regarding resource costs, since this depends on demand for blockspace from other dApps
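A toy illustration of point (b). It uses Ethereum's EIP-1559 base-fee update rule as one concrete example of a fee market that reacts to demand. The gas target, the starting fee and the "NFT mint" traffic pattern are assumed values, and app-chains need not use EIP-1559 at all. The comparison is between a game sharing a chain with a mint and the same game on a chain that carries only its own steady traffic.

```python
TARGET_GAS = 15_000_000  # assumed per-block gas target
MAX_CHANGE_DENOMINATOR = 8  # EIP-1559 caps the per-block change at 1/8


def next_base_fee(base_fee: int, gas_used: int, target: int = TARGET_GAS) -> int:
    """EIP-1559-style update: the fee rises after blocks fuller than target."""
    if gas_used == target:
        return base_fee
    delta = base_fee * abs(gas_used - target) // target // MAX_CHANGE_DENOMINATOR
    if gas_used > target:
        return base_fee + max(delta, 1)
    return base_fee - delta


def simulate(start_fee: int, gas_per_block: list[int]) -> list[int]:
    # Returns the starting fee followed by the fee after each block.
    fees = [start_fee]
    for used in gas_per_block:
        fees.append(next_base_fee(fees[-1], used))
    return fees


GWEI = 10**9
# Shared chain: an NFT mint fills ten consecutive blocks to twice the target,
# so a game on the same chain pays whatever the mint pushes the fee to.
shared = simulate(10 * GWEI, [2 * TARGET_GAS] * 10)
# App-chain: the game only competes with its own steady, on-target traffic.
app_chain = simulate(10 * GWEI, [TARGET_GAS] * 10)
```

Under these assumptions the shared-chain fee more than triples within ten blocks (each full block adds 12.5%), while the app-chain fee stays flat. None of this depends on the game's own behaviour.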

While some dApps may be willing to accept these tradeoffs in exchange for the benefit of rapid prototyping, synchronous composability and ecosystem network effects, we believe there are many applications for which this tradeoff doesn’t make sense.

An example of this is gaming, a sector we’re particularly excited about. As games place more and more of their economies, and eventually game logic itself on-chain, certainty around resource costs will become more important. If a popular NFT mint causes tx costs to spike or the chain to halt, the game may become unplayable for its users. This is a high cost, especially considering games are largely isolated ecosystems and receive minimal benefits from composability.

While monolithic chains can continue to scale block space vertically, this doesn't actually solve the problem, because demand for block space will keep increasing and applications still compete with each other for it. Specialised app-chains offer a free-market solution, allowing block space to be partitioned horizontally by application, which also ensures a high degree of data locality.

Customisability

All applications launching on a monolithic blockchain inherit and must accept a series of design decisions, including the platform’s consensus model, security, runtime, mempool, VM, etc. In contrast, an application-specific chain can be customised across all components of its stack to optimise for that particular application (or the relevant class of applications). As Dan Robinson and Charlie Noyes of Paradigm tell us:

“Blockchain protocol design is nebulous. There is no “correct” level of scalability or security. Qualities like credible neutrality cannot be exhaustively defined. Today, application platforms enshrine static setpoints on those design decisions.”

To see how customisability might be useful, we can look at a few examples.

- Optimise trade-off space: Rather than accepting the decentralisation-security-scalability choices of a given platform, an application-specific chain can tune its position on the scalability trilemma to the needs of that application. A game might care less about decentralisation/security and can therefore be run by a smaller and/or permissioned validator set with higher hardware requirements to increase performance. For example, Crabada started as a smart contract dApp on Avalanche C-Chain and eventually moved to its own subnet, sacrificing security for cheaper gas.

- State machine customisation: Platforms can customise all aspects of their state machine including mempools, transaction propagation, ordering of txs in a block, staking reward distribution, execution models, precompiles, fees, etc. A few examples:

  - Custom fee models:

    - Osmosis allows users to pay tx fees in any token trading on its DEX. It also allows this to be bundled into swap fees, further simplifying UX.

    - dYdX charges fees on trades rather than gas fees on txs.

  - Custom MEV solutions:

    - Swap ordering on THORChain is determined by the swap queue logic baked into the state machine. The highest-fee-generating swaps always go to the front of the queue, and THORChain nodes cannot re-order swaps.

    - Injective's orderbook can be settled via batch auctions that run automatically every block to minimise MEV.

    - Osmosis will be adding threshold encryption to mitigate "bad MEV" (e.g. sandwich attacks) while also internalising "good MEV": the protocol will be able to arbitrage its own pools, with the profits flowing to OSMO stakers.

  - Performance/scalability optimisations: Solana, Sui, Aptos, Fuel, Injective, Osmosis, Sei, and others leverage parallel execution to process transactions that don't touch the same state (i.e. separate trading pairs / pools), greatly increasing scalability.

  - Validators taking on additional services:

    - NFT-focused chain Stargaze has validators supporting IPFS pinning services to make it easier to upload NFT data on IPFS.

    - Injective includes a validator-secured bridge to Ethereum, ensuring the economic security of the bridge is the same as that of the chain.

  - Mempool/consensus customisation:

    - Sommelier is experimenting with a novel DAG-based mempool design capable of providing availability and causality guarantees and reducing the work that the consensus algorithm needs to do. This design was first adopted by fast monoliths like Aptos and Sui.

    - dYdX is making its nodes do off-chain computation by running an orderbook matching engine, with transactions settling on-chain. This achieves much greater scalability.

    - ABCI++ is a tool that adds programmability to every step of the Tendermint consensus process. Celestia uses ABCI++ to make erasure coding part of its block production process.

  - Privacy:

    - Secret Network is a general-purpose, private-by-default smart contract platform that uses Intel SGX enclaves, a form of Trusted Execution Environment (TEE), to keep data secure and private.

    - Penumbra is another private-by-default blockchain, but with more of a focus on DeFi and governance, and it relies on cryptography rather than on hardware (Intel SGX) as Secret Network does. Penumbra uses Tendermint and connects over IBC but replaces the Cosmos SDK with its own custom Rust implementation. It is integrating threshold encryption directly into consensus, which will allow it to offer features such as shielded swaps.

- Value capture: In any blockchain, apps leak value in the form of fees & MEV to the underlying protocol layer, or more accurately to the underlying gas/fee token. In the long term, we believe the biggest dApps may be larger than any single L1, straddling several L1s/rollups and compounding liquidity/brand/UX network effects. They will also own the user relationship, enabling them to eventually vertically integrate into their own specialised L1s and internalise fee revenue/MEV leakage (e.g. dYdX). This level of specialisation aligns the interests of the application and the underlying layer(s) (execution, settlement, consensus) under a unified token.
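Parallel execution, listed among the performance optimisations above, works because a chain can tell in advance which transactions touch disjoint state. The sketch below is a simplified greedy scheduler. It assumes every tx declares the state keys it reads or writes up front (similar in spirit to Solana's account lists, but not any chain's actual scheduler), and `Tx` and `schedule` are hypothetical names.

```python
from typing import NamedTuple


class Tx(NamedTuple):
    tx_id: str
    touches: frozenset[str]  # state keys (e.g. pool IDs) the tx reads or writes


def schedule(txs: list[Tx]) -> list[list[str]]:
    """Greedily pack txs into batches whose members touch disjoint state.

    A tx is placed in the first batch after the last batch holding a
    conflicting tx, so conflicting txs keep their original relative order.
    """
    batches: list[list[str]] = []
    batch_keys: list[set[str]] = []
    for tx in txs:
        earliest = 0
        for i, keys in enumerate(batch_keys):
            if keys & tx.touches:
                earliest = i + 1
        if earliest == len(batches):
            batches.append([])
            batch_keys.append(set())
        batches[earliest].append(tx.tx_id)
        batch_keys[earliest] |= tx.touches
    return batches


block = [
    Tx("swap-1", frozenset({"pool:ATOM/OSMO"})),
    Tx("swap-2", frozenset({"pool:ETH/USDC"})),
    Tx("swap-3", frozenset({"pool:ATOM/OSMO"})),
    Tx("swap-4", frozenset({"pool:BTC/USDC"})),
]
print(schedule(block))  # [['swap-1', 'swap-2', 'swap-4'], ['swap-3']]
```

Transactions within a batch can run concurrently, and batches run in sequence. Only the second swap on the ATOM/OSMO pool has to wait. Treating every shared key as a conflict (even read-only access) is a deliberately conservative simplification.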

Sovereignty

A key difference between smart contracts and app-chains is that the latter are self-sovereign while the former are not. The governance of smart contracts ultimately relies on the governance of the blockchain. This introduces platform risk, where new features/upgrades on the underlying blockchain can potentially harm the UX of smart contracts, and in some cases even break them. The importance of sovereignty also becomes obvious during software bugs: an exploited dApp can't recover by forking without convincing the underlying chain to fork, which is practically impossible outside exceptional cases.

Drawbacks of Specialisation

There are also a few key disadvantages to specialisation:

- Cost – launching a standalone chain is more time consuming/expensive than simply deploying smart contracts on an existing chain, requiring more niche developer skill-sets, bootstrapping validator sets, as well as imposing additional infrastructure complexities (indexing, wallets, explorers etc.).

- Lack of synchronous composability – on a monolithic chain, all applications run on a shared state machine and thus benefit from synchronous, atomic composability. Interchain infrastructure is currently unable to facilitate this and in any case introduces additional trust assumptions.
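The difference between atomic and asynchronous composability can be shown with a toy in-memory model. `Ledger`, `run_atomic`, `run_async`, `borrow` and `deposit` are hypothetical names. Real interchain protocols typically add acknowledgements, timeouts and refund paths, which this sketch ignores.

```python
class Ledger:
    """Toy single-chain state: account name -> token balance."""

    def __init__(self, balances: dict[str, int]):
        self.balances = dict(balances)

    def transfer(self, src: str, dst: str, amount: int) -> None:
        if self.balances.get(src, 0) < amount:
            raise ValueError(f"insufficient balance in {src}")
        self.balances[src] -= amount
        self.balances[dst] = self.balances.get(dst, 0) + amount


def run_atomic(chain: Ledger, steps) -> bool:
    """Synchronous composability: every step commits, or none do."""
    snapshot = dict(chain.balances)
    try:
        for step in steps:
            step(chain)
        return True
    except ValueError:
        chain.balances = snapshot  # revert the whole transaction
        return False


def run_async(chain_a: Ledger, step_a, chain_b: Ledger, step_b) -> bool:
    """Asynchronous cross-chain call: chain A commits before chain B runs."""
    step_a(chain_a)  # final on chain A; chain B cannot roll it back
    try:
        step_b(chain_b)  # runs later, after the message is relayed
        return True
    except ValueError:
        return False  # chain A's leg stays committed and needs explicit handling


def borrow(chain: Ledger) -> None:
    chain.transfer("pool", "user", 100)


def deposit(chain: Ledger) -> None:
    chain.transfer("user", "vault", 200)  # fails: the user cannot cover it
```

On one chain, a failed deposit unwinds the borrow automatically. Across two chains, the borrow on chain A persists even though the strategy it was meant to fund failed on chain B, so the application has to handle that partial state itself.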

On cost, while a specialised chain has never been as easy to deploy as smart contracts on an existing chain, we believe the gap has narrowed significantly and is likely to continue to narrow over time as the technology matures and developments like Interchain Security come online. The real drawback is the loss of synchronous composability. We’ve already seen the benefits of this with the growth of DeFi powered by token rehypothecation on Ethereum, and there may still be a long tail of yet undiscovered use cases for permissionless composability.

While this is significant, there are two important counter-points here. Firstly, we would argue there are only a few kinds of applications that truly benefit from synchronous composability. These are mainly DeFi use cases for which rehypothecation of tokens is arguably crucial (e.g. yield farming). That said, even for DeFi, it is debatable whether synchronous composability is truly necessary, as evidenced by the success of dYdX. For most other dApps, we would argue asynchronous composability is fine as long as there's strong cross-chain tooling to port assets over and make the UX of interacting with different dApps seamless.

Secondly, specialisation doesn’t necessarily mean deploying a chain with a single application on it, but can instead mean a cluster of applications that synergise well together or facilitate a certain use case. For instance, while Osmosis is often seen as an AMM-chain, it is evolving to become more of a DeFi chain with many different dApps deploying on it (money markets, stablecoins, vaults, etc.). We believe applications that benefit from composability will naturally tend to cluster around specialised chains, effectively allowing for “opt-in” composability for dApps that require it.

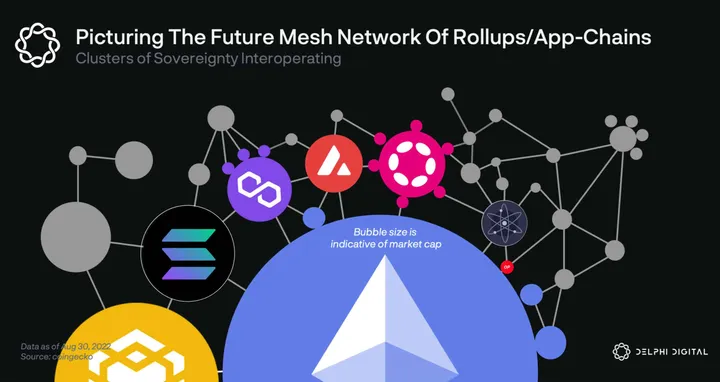

For these reasons, we expect that rather than all activity coalescing on a single monolithic chain, the space will instead evolve into a mesh network of interconnected specialised chains/rollups organised into clusters around specific use cases.

Cross-Chain Architecture

To summarise the above, we believe that while the DeFi application layer is likely to be rebundled, the blockchain layer will further fragment, with dApp teams/communities increasingly choosing to deploy their own specialised app-chains. However, we think it's unlikely that each of these chains will spin up its own DeFi ecosystem, since a) it would force each chain to rebuild an entire DeFi ecosystem, which is a difficult task, and b) it would result in liquidity fragmentation and sub-optimal UX.

Instead, we believe there will be a few DeFi-focused hubs, with app-specific chains deploying their tokens/economies on one or more of these DeFi hubs. One metaphor we use to visualise this is that of specialised app-chains as suburbs, with bridges providing the transport layer that links these suburbs to the city center financial hubs (i.e. DeFi hub chains).

Given that composability is critical for the rebundled UX mentioned earlier and betting on one chain is undesirable, we expect winning DeFi dApps to deploy on several of the winning DeFi hubs, compounding liquidity/brand/UX network effects across chains. As such, we wanted to make sure we spent some time exploring architectures and what ecosystem(s) would most easily facilitate this.

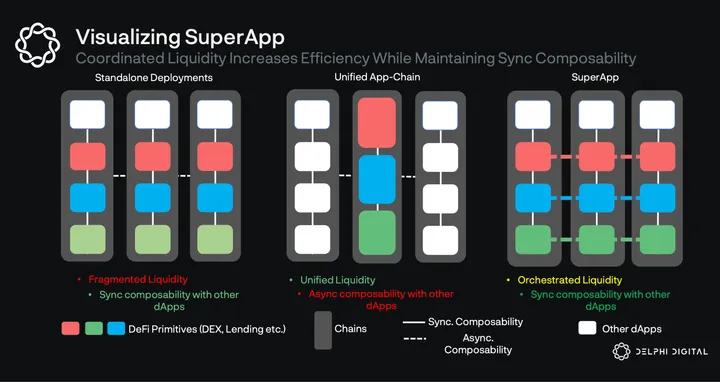

As of right now, cross-chain applications follow two main approaches:

- Standalone deployments which do not communicate with each other (e.g. Aave, Uniswap, Sushi, Curve). This means the dApp exists natively on each chain it’s deployed on and can synchronously compose with all native primitives. However, it also leads to liquidity fragmentation and poor UX as traders/borrowers receive suboptimal execution and LPs must manually move capital to optimise utilisation.

- A single unified app-chain on which all liquidity sits (e.g. THORChain, Osmosis). This is more capital efficient but means no synchronous composability with dApps on other chains.
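The execution cost of fragmentation is easy to quantify for a simple AMM. This sketch assumes a fee-less constant-product (x * y = k) pool and compares one pool with the same liquidity split evenly across two standalone deployments, where the trader can reach only one of them. The reserve and trade sizes are arbitrary.

```python
def swap_out(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Output of a fee-less constant-product (x * y = k) swap."""
    return reserve_out * amount_in / (reserve_in + amount_in)


TRADE = 100_000
# Unified: all liquidity sits in one pool on one chain.
unified = swap_out(2_000_000, 2_000_000, TRADE)
# Fragmented: the same liquidity split evenly across two standalone
# deployments, and the trader can only reach one of them.
fragmented = swap_out(1_000_000, 1_000_000, TRADE)

print(f"unified:    {unified:,.0f} out ({1 - unified / TRADE:.1%} impact)")
print(f"fragmented: {fragmented:,.0f} out ({1 - fragmented / TRADE:.1%} impact)")
```

With identical total liquidity, the trader loses roughly 4.8% to price impact in the unified pool and roughly 9.1% in one of the fragmented pools, which is the "suboptimal execution" cost of standalone deployments.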

Delphi Labs is currently exploring a third way, in which application instances would be deployed on multiple chains (outposts) but connected by leveraging a coordination layer which facilitates communication and liquidity allocation between outposts. You can read more about how we think this third strategy could play out for Mars here. If successful, this would improve performance for LPs (deposit once and earn fees across all integrated chains), execution for traders/borrowers, as well as allowing both primitives to be synchronously composable with other dApps on the chain. This is especially important for the super-app vision which relies on synchronous composability both for integrations and speed (cross-chain contract calls are too slow to provide a good advanced trader UX).
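As a rough illustration of "deposit once and earn fees across all integrated chains", here is one plausible allocation policy a coordination layer could apply. This is purely a hypothetical sketch: `allocate` and the outpost names are invented, splitting in proportion to borrow demand is an assumption, and the actual Mars design may work quite differently.

```python
def allocate(deposit: float, demand_by_outpost: dict[str, float]) -> dict[str, float]:
    """Split one LP deposit across outposts in proportion to borrow demand.

    Hypothetical policy for illustration; falls back to an even split when
    no outpost currently has demand.
    """
    if not demand_by_outpost:
        raise ValueError("need at least one outpost")
    total = sum(demand_by_outpost.values())
    if total == 0:
        share = deposit / len(demand_by_outpost)
        return {name: share for name in demand_by_outpost}
    return {name: deposit * d / total for name, d in demand_by_outpost.items()}


# One deposit is spread across outposts with demand rather than parked on one chain.
print(allocate(1_000, {"outpost_a": 3_000, "outpost_b": 1_000, "outpost_c": 0}))
```

A real system would also need to account for bridging costs and latency when moving liquidity, and for caps per outpost, none of which this sketch models.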

Platform Requirements

To summarise, our constraints are: a) we’re focused on DeFi applications b) we believe DeFi will be rebundled into an integrated experience c) we believe the world will be increasingly multi-chain, and DeFi applications should architect themselves such that they can deploy natively on multiple chains.

Based on these constraints, there are a few key platform requirements:

- Speed: While an on-chain venue will never be as fast as a CEX, it should ideally be as close as possible. Block times will determine how much worse the experience is compared to a CEX. Faster block times improve capital efficiency by allowing quicker oracle updates, liquidations, and consequently higher leverage. While this isn't essential, faster block times combined with high throughput also enable on-chain order-books, providing a better trading UX for users.

- Ecosystem: In addition to being non-custodial and permissionless, the big advantage of the DeFi super-app over a CEX is composability and the number of integrations it can provide. While a CEX is limited to its own products, the app can integrate with any DeFi primitive, allowing users to cross-margin LP positions, vaults, farms, staking positions, NFTs, etc. As part of this, liquidity on the chain is also important since it will directly affect the trading experience.
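The link between block time and leverage can be sketched with a deliberately simple model. It assumes prices follow Brownian motion, a liquidation lands a fixed number of blocks after a price move (here two, one for the oracle update and one for the liquidation), and a position's equity must absorb a z-sigma move over that window. Every parameter is an assumption, and the function names are hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def worst_case_move(block_time_s: float, blocks_to_liquidate: int,
                    annual_vol: float, z: float = 3.0) -> float:
    """z-sigma price move over the time it takes to liquidate, assuming
    Brownian-motion prices (no jumps, no congestion)."""
    horizon_s = block_time_s * blocks_to_liquidate
    return z * annual_vol * math.sqrt(horizon_s / SECONDS_PER_YEAR)


def max_leverage(block_time_s: float, blocks_to_liquidate: int,
                 annual_vol: float, z: float = 3.0) -> float:
    # Equity of 1/L must absorb the worst-case move before liquidation lands.
    return 1.0 / worst_case_move(block_time_s, blocks_to_liquidate, annual_vol, z)


# Assumed: two blocks (oracle update, then liquidation) and 80% annual volatility.
ratio = max_leverage(0.5, 2, 0.8) / max_leverage(12, 2, 0.8)
print(f"{ratio:.2f}x more leverage with 0.5 s blocks than 12 s blocks")
```

The absolute leverage figures from such a model are far too optimistic, because it ignores price jumps, oracle delays and congestion. The useful part is the scaling: tolerable leverage grows with 1/sqrt(block time), so moving from ~12 s blocks to 0.5 s blocks raises it by about sqrt(24), or roughly 4.9x.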

While speed and ecosystem are the primary requirements, there are also several other factors that matter in selecting the right platform:

- Decentralisation: The primary differentiator of the super-app compared to a CEX is decentralisation, i.e. being non-custodial, permissionless, and censorship-resistant. Decentralisation is a loaded term, but ultimately whatever chain we deploy on needs to have strong security and liveness guarantees. Many rollups and chains achieve low latency, but it often comes at the expense of one or both of these. Our assessment of decentralisation is subjective but ultimately takes into account factors such as centralised points of failure, resiliency to regulatory attack, governance/stake concentration, node count, number of independent entities contributing to development, etc.

- Cross-chain interoperability: In order to achieve the cross-chain architecture vision described earlier on, chains will need mature, reliable and trust-minimised cross-chain messaging and asset bridge infrastructure. Without this, the instances will not be able to communicate with one another, or would only be able to do so while exposing the overall system to additional risk.

- Technical maturity: As we’ve seen with Solana and other chains, especially those based on completely new and experimental innovations, an immature technology can lead to a bumpy development process and risks such as downtime for early adopters. Downtime is very problematic for an application featuring leverage (since liquidations need to occur in a timely manner), and added technical risk is generally undesirable when building already complex protocols.

- Portability of code: While this wasn’t a primary factor in our analysis, we also considered the portability of code written for the specific platform. Ecosystems with niche languages or VMs represent higher costs since code cannot be ported elsewhere if that ecosystem fails.

Part II – Choosing an Ecosystem

Ecosystem Comparison

When looking at the blockchain space we considered a variety of different ecosystems, where an ecosystem might comprise a cluster of multiple domains, such as Cosmos zones, Avalanche subnets or Ethereum rollups, or standalone chains such as Near or Solana. While this might seem like comparing apples and oranges, it seemed the natural approach when narrowing down the options.

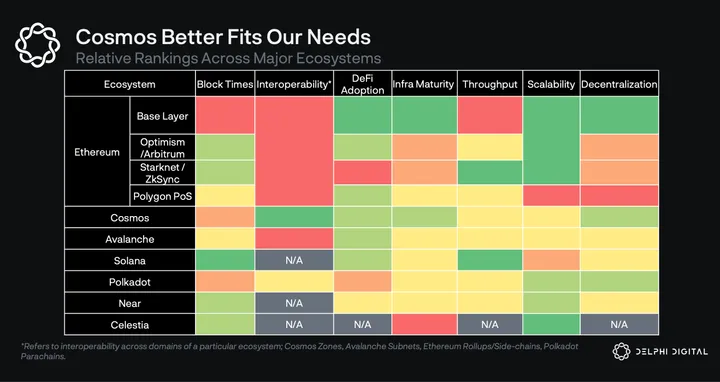

We then compared each option based on the factors given in Part I. A summary of our comparison is given in the table below.

We expand on some of the motivations behind our rankings as we take a closer look at each ecosystem.

Ethereum L1

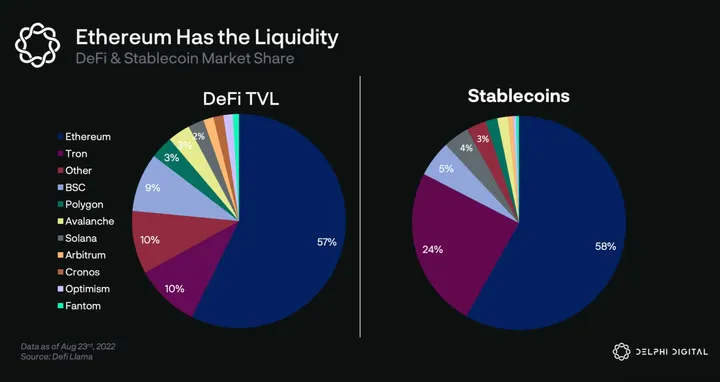

Let’s start with the Ethereum base layer. Today, the Ethereum base layer captures the largest demand for blockspace and liquidity. As Ethereum moves to a rollup-centric world, more rollup activity will settle on Ethereum, further solidifying Ethereum’s position as the liquidity hub.

From our perspective, the biggest advantages of moving to Ethereum L1 would be:

- Ecosystem – Ethereum L1 has the largest and most developed dApp ecosystem as well as highest liquidity, allowing for a large number of integrations for potential credit account functionality.

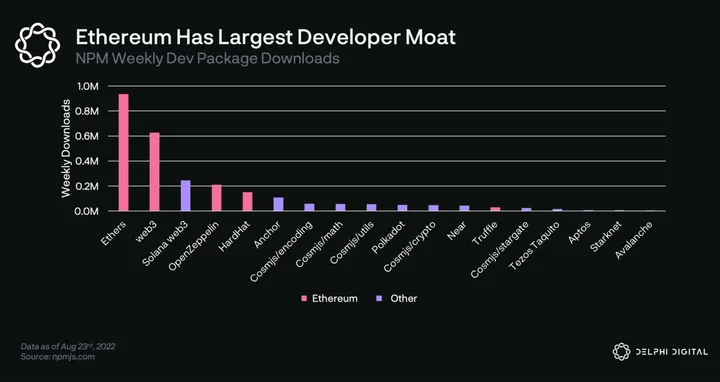

- EVM network effects – Ethereum has the largest developer community which may solidify its ecosystem moat by ensuring the ecosystem continues to grow faster than alternatives.

- Decentralisation – Ethereum is arguably the most decentralised L1 on all major vectors. Ethereum has multiple clients developed by independent teams, with the most diverse set of clients among L1s. It also has the highest economic security, it’s the most battle-tested and has a social consensus which favors minimal changes at the base layer.

The biggest drawback is speed/cost. ~12 second blocks make it extremely difficult to provide high leverage and generally hurt the trading experience, especially when inclusion in those blocks is often competitive. High gas costs drive inefficient liquidation models which are punitive to users. All of this results in an inferior trading UX.

Although EVM currently dominates the smart contract development market, we’ve noticed increasing competition in the VM space with the likes of SeaLevel, CosmWasm, MoveVM and FuelVM. We expect this competition to put EVM’s network effects to the test.

Rollups

As a base layer, Ethereum sacrifices speed for resilience, and instead aims to offer fast UX through its rollups.

Rollups promise Ethereum-level security with lower costs and faster UX, but as always this comes with tradeoffs. Unlike an L1 where no one knows the final order of txs until consensus is achieved, rollups can have a single privileged actor (known as the sequencer) with full discretion over tx ordering. This allows rollups to strike a balance between UX and decentralisation, offering instant confirmations while relying on L1 for censorship resistance and finality.

While this balance is better suited to the trading experience we are looking to offer, relying on centralised sequencers isn't ideal, as potential sequencer outages can interrupt the UX. In the case of Mars, outages present a critical risk, as the protocol can potentially accumulate bad debt during downtime. While rollups plan to decentralise these sequencers, (i) almost all teams have pushed this back in their roadmaps, and (ii) decentralising sequencers will increase the confirmation latency that can be offered to users, since reaching quorum among a group of sequencers inherently takes longer than a confirmation from a single sequencer.
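Point (ii) can be illustrated with order statistics: a quorum confirmation is only as fast as the slowest member of the fastest quorum. This sketch uses made-up response times for five sequencers and a BFT-style "more than two thirds" quorum; the function names are hypothetical. It also ignores the multiple message rounds real BFT protocols need, which make the gap larger.

```python
def quorum_latency(response_ms: list[float], quorum: int) -> float:
    """Time until `quorum` sequencers have responded: the quorum-th fastest."""
    if not 1 <= quorum <= len(response_ms):
        raise ValueError("quorum must be between 1 and the number of sequencers")
    return sorted(response_ms)[quorum - 1]


def bft_quorum(n: int) -> int:
    # Smallest count strictly greater than two thirds of n.
    return (2 * n) // 3 + 1


# Hypothetical response times of five geographically spread sequencers.
responses = [40.0, 55.0, 70.0, 120.0, 300.0]
single = quorum_latency(responses, 1)
shared = quorum_latency(responses, bft_quorum(len(responses)))
```

With these numbers, a single sequencer can confirm in 40 ms, whereas a 4-of-5 quorum has to wait 120 ms for its fourth-fastest member.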

Interoperability is also problematic. Rollups have withdrawal delays, which add complexity to any low-latency bridge (which would need to assess and cover the risk of a challenge). Generally, rollup cross-chain infrastructure lags behind that of alternatives, so we currently consider rollups unsuitable for what we'd view as an optimal cross-chain architecture.
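One way to see why withdrawal delays burden a low-latency bridge is to price what a liquidity provider would charge to pay out a withdrawal immediately and wait out the challenge window itself. This is a hypothetical sketch: the roughly one-week window is the figure commonly cited for optimistic rollups, while the 5% cost of capital and 5 bps risk premium are arbitrary assumptions.

```python
def fast_withdrawal_fee(amount: float, challenge_days: float = 7.0,
                        annual_rate: float = 0.05,
                        risk_premium_bps: float = 5.0) -> float:
    """Fee a hypothetical liquidity provider charges to pay out an optimistic
    rollup withdrawal immediately and wait out the challenge window itself."""
    # Opportunity cost of capital locked for the whole challenge window.
    capital_cost = amount * annual_rate * challenge_days / 365
    # Compensation for the chance the withdrawn state is successfully challenged.
    risk_cost = amount * risk_premium_bps / 10_000
    return capital_cost + risk_cost


print(f"{fast_withdrawal_fee(1_000_000):,.2f}")
```

Under these assumptions, fronting a $1M withdrawal costs about $1,459, and the capital component grows linearly with the challenge window. This is why such bridges are expensive for generic cross-chain messaging.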

EVM ORUs

Scaling the execution capacity of a shared state depends largely on the choice of VM and execution model. The first generation of ORUs on Ethereum, like Optimism and Arbitrum, value EVM compatibility/equivalence. While they can leverage existing Ethereum tooling, they also inherit the limitations of geth. Due to this, we're unlikely to see them achieve an order of magnitude higher tps than L1 geth forks like Polygon or BSC (≈50 tps).

Indeed, this partially explains why Arbitrum is pursuing a multi-rollup vision, with Arbitrum One and Arbitrum Nova being the first ones. In a multi-rollup world, bridges play a key role. Unfortunately, the design space of rollup bridging is still immature. Existing bridges' functionality doesn't go beyond simple token transfers, L1 call data remains expensive (though future Ethereum developments such as EIP-4488 could mitigate this), and latency on ORUs will continue to be a challenge for generic cross-chain apps. Again, this is problematic for us given our view of optimal cross-chain architectures.
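To put the call data point in numbers: under EIP-2028, calldata costs 16 gas per non-zero byte and 4 gas per zero byte, while EIP-4488 proposed a flat 3 gas per byte together with a per-block calldata cap (which this sketch ignores). The batch size and bytes per compressed transaction below are assumptions.

```python
CURRENT_NONZERO_GAS = 16  # per non-zero calldata byte (EIP-2028)
CURRENT_ZERO_GAS = 4      # per zero calldata byte
EIP_4488_GAS = 3          # proposed flat per-byte cost


def calldata_gas(data: bytes, eip4488: bool = False) -> int:
    """Calldata gas for a batch posted to L1 (excludes the base tx cost)."""
    if eip4488:
        return EIP_4488_GAS * len(data)
    zeros = data.count(0)
    return CURRENT_ZERO_GAS * zeros + CURRENT_NONZERO_GAS * (len(data) - zeros)


# Assumed batch: 1,000 compressed txs at ~12 non-zero bytes each.
batch = bytes([1]) * 12_000
print(calldata_gas(batch), calldata_gas(batch, eip4488=True))
```

For this assumed batch, the calldata cost per rollup transaction would fall from 192 gas to 36 gas, which is roughly a 5x reduction in the dominant L1 cost of posting rollup data.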

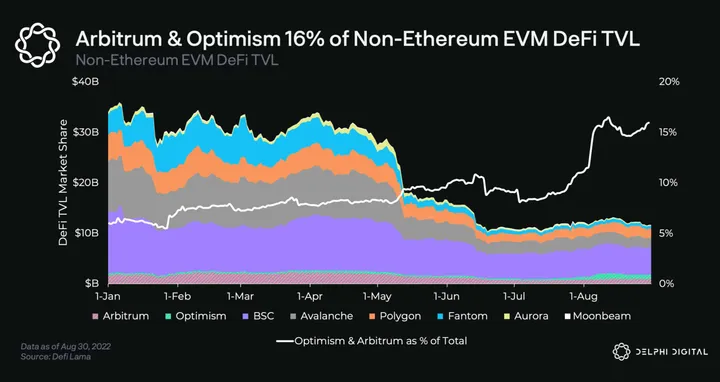

On a positive note, a major advantage of EVM ORUs is that they easily leverage Ethereum’s liquidity and community to bootstrap their DeFi ecosystems. Optimism and Arbitrum already have billions of $s in TVL with bluechip protocols like Aave, Uniswap, Curve, Synthetix, and GMX driving user adoption.

On the other hand, infra for rollups is still immature. While ORUs have large TVL, almost none of them (including Optimism and Arbitrum) have permissionless fraud proofs in production and therefore aren’t trust minimised. While we are fully confident rollups will get there, it’ll take significant engineering effort and time.

While EVM compatibility made sense for ORUs given ease of portability for the existing Ethereum ecosystem, it does not benefit all existing protocols that may wish to pursue cross-chain strategies. We also see a risk that as alternative rollup tech evolves and new builders without EVM experience enter the space, alt L1s and ZK rollups may draw usage away from ORUs. So while relatively high scalability, TVL and volume are attractive, poor connectivity, centralisation risks and an uncertain future combine to discourage adopting this option.

ZK Rollups

Like many, we think of ZK proofs as a core pillar of blockchains' end-game tech. At the heart of every scaling solution lies cost-effective verification. ZK proofs allow anyone to prove full integrity of execution (without additional assumptions), making them extremely useful as secure, efficient bridges between discrete systems. Today we observe this in the form of ZK rollups.

In recent years, the incentives to push the boundaries of ZK tech have increased dramatically. That said, it’s still hard to predict how soon ZK rollups can capture substantial market share. The ZK space is still in its infancy with a few players at varying stages of readiness – the main ones being Starkware, zkSync, and Polygon. (For more on ZKRs, you can read our previous Pro report on it here.)

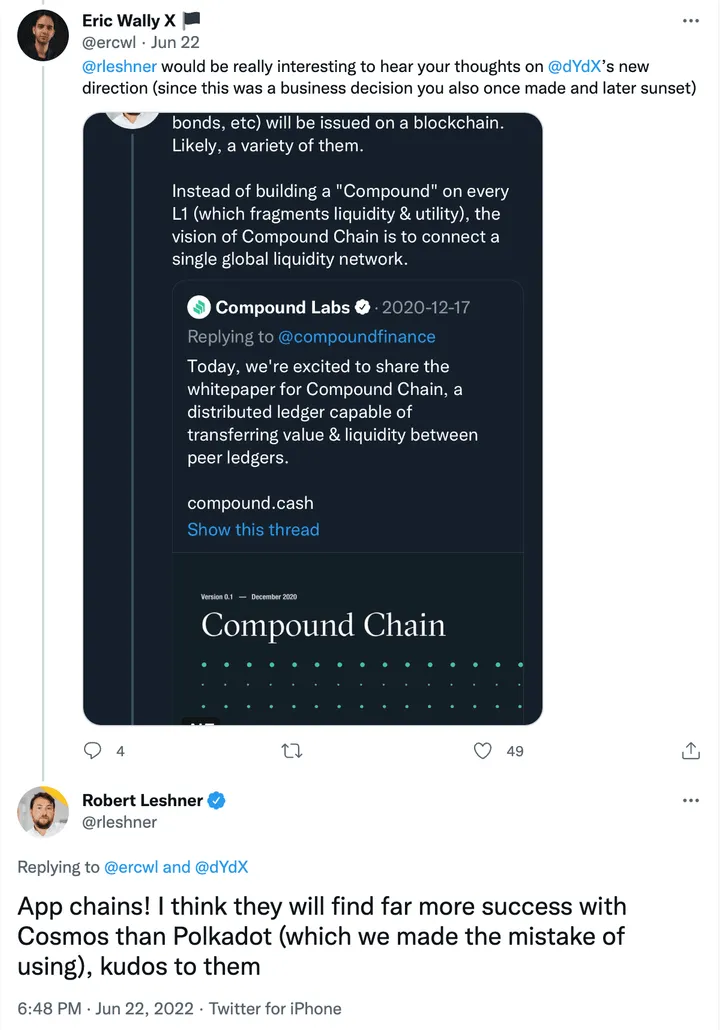

Starkware has been ready with ZK tech for some time, but in the form of a software vendor for their StarkEx offering. StarkEx was not an open general purpose platform as we have come to expect in this space, but the technology itself was excellent as evidenced by its adoption by some of the most used dApps such as dYdX, Immutable, and others. dYdX however recently announced they would move away from StarkEx to a Cosmos app-chain, due primarily to concerns regarding decentralisation.

StarkNet is a recently launched permissionless open platform based on Starkware's tech. It is production-ready and offers synchronous composability with other StarkNet dApps. However, having just launched, its community, infrastructure and DeFi ecosystem are immature. For example, the canonical Ethereum<>StarkNet bridge known as StarkGate (built by the Starkware team) has deposit limitations in place, and liquidity in StarkNet is negligible (~650 ETH). Like other rollups, StarkNet also relies on centralised sequencers, with plans to decentralise over time.

This nascency presents adoption risk given that applications built in Cairo (Starkware’s language) offer limited portability should that turn out to be a failed bet. That said, the Warp team at Nethermind is developing a Solidity to Cairo transpiler, so Solidity could potentially be used in place of Cairo which of course offers much greater optionality and tooling.

Many ZK-EVMs such as Polygon Hermez, Scroll, and zkSync 2.0 make different trade-offs on the speed<>EVM compatibility spectrum. While it’s exciting to witness their progress, they are pre-launch and timing regarding their future roadmap tends to be unreliable.

Finally, we note that all ZK rollups rely on a highly complex, nascent technology that only a very limited number of subject experts truly understand. We think this increases the chance of software implementation bugs and other unforeseen circumstances that can negatively affect sophisticated DeFi dApps and also makes it harder for us to reason about their progress.

While we appreciate the potential of ZK technology to become end-game tech and will be watching its progress closely, the above concerns, plus the general issues with rollups led us to decide against building there at this time.

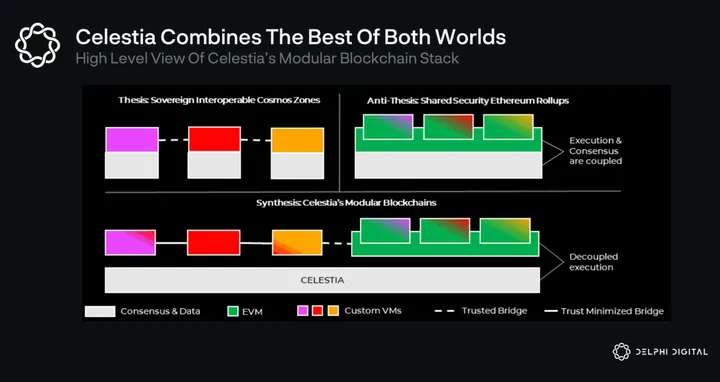

Modular (Celestia Rollups)

As we outlined in Part I, we see the future as multichain, with a number of app-chains, general purpose chains and hybrid chains, each making different tradeoffs and customisations. This lends itself to modular blockchain development stacks such as Tendermint from Cosmos and Substrate from Polkadot. These provide a collection of reusable and customisable components via an SDK for the creation of new blockchains.

Celestia approaches the modular blockchain thesis from a different perspective, decoupling execution from data and providing just the base layer of data availability and ordering. This allows Celestia to offer security for rollups/app-chains in a highly scalable manner. It also means that Celestia is focused on just one element of the blockchain stack, which is perhaps more effective than the jack-of-all-trades approach. (For more on Celestia, you can read our report on it here.)

This solves one of the main issues with Cosmos: the requirement for each chain to source its own validator set, which takes significant time and effort and is infeasible for many dApps. It also fragments consensus security across chains, resulting in low security budgets at the tail end. Interchain Security from Cosmos is another alternative here; however, it isn't permissionless (it requires the mutual consent of the hub and the consumer chain), and it isn't a scaling solution, since validators of the hub take on additional resource requirements to validate consumer chains. While it could be a good interim solution, it's unlikely to be the end-game.
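The "low security budgets at the tail end" point can be made concrete. Tendermint needs more than two thirds of voting power to commit blocks, so an attacker controlling just over one third of the stake can halt a chain. Under that model, a rough lower bound on the cost of attack is a third of the staked value. The stake figures below are hypothetical, and the function names are invented for illustration.

```python
def halt_cost_usd(stake_usd: float) -> float:
    # Tendermint needs more than 2/3 of voting power to commit a block, so an
    # attacker holding just over 1/3 can stop it. Ignores slashing and the
    # price impact of actually buying that much stake.
    return stake_usd / 3


def weakest_chain(stakes_usd: dict[str, float]) -> tuple[str, float]:
    """Return the chain that is cheapest to halt and what halting it costs."""
    name = min(stakes_usd, key=stakes_usd.get)
    return name, halt_cost_usd(stakes_usd[name])


# Hypothetical stake values for a hub and two zones with their own validator sets.
print(weakest_chain({"hub": 3e9, "zone_a": 3e8, "zone_b": 1.5e7}))
```

However well secured the hub is, a small zone with its own validator set can be halted for a fraction of the hub's cost (about $5M against about $1B here), and any application or bridge on that zone inherits this weaker bound.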

The user-facing side of the Celestia network is its execution layers; notable projects in the works include Cevmos, Sovereign Labs, and Fuel V2. Fuel V2 appears closest to the finish line, but it’s still very early. It uses a UTXO data model and a brand new VM, aiming to deliver fast, scalable execution. While we like its design choices and will be monitoring it closely, it presents a technical risk that we feel is too great for the applications we might work on.
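
For readers less familiar with the UTXO model, the toy sketch below (our own simplification, not Fuel’s implementation) shows why it suits parallel execution: a transaction consumes specific previous outputs and creates new ones, so transactions spending disjoint outputs touch disjoint state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutPoint:
    tx_id: str
    index: int


@dataclass(frozen=True)
class Tx:
    tx_id: str
    inputs: tuple[OutPoint, ...]   # previous outputs being spent
    outputs: tuple[int, ...]       # amounts of the newly created outputs


class UtxoSet:
    def __init__(self) -> None:
        self.unspent: dict[OutPoint, int] = {}

    def apply(self, tx: Tx) -> None:
        """Consume the inputs and create the outputs; reject double spends and overspends."""
        if any(op not in self.unspent for op in tx.inputs):
            raise ValueError(f"{tx.tx_id}: input missing or already spent")
        if sum(self.unspent[op] for op in tx.inputs) < sum(tx.outputs):
            raise ValueError(f"{tx.tx_id}: outputs exceed inputs")
        for op in tx.inputs:
            del self.unspent[op]
        for i, amount in enumerate(tx.outputs):
            self.unspent[OutPoint(tx.tx_id, i)] = amount


def conflict_free(txs: list[Tx]) -> bool:
    """True if no two txs spend the same input, so they could be validated in parallel.
    (Simplification: ignores txs that spend outputs created by another tx in the batch.)"""
    seen: set[OutPoint] = set()
    for tx in txs:
        if seen.intersection(tx.inputs):
            return False
        seen.update(tx.inputs)
    return True
```

An account-based chain, by contrast, has to infer which transactions touch the same balances before it can safely run them in parallel; with UTXOs, the conflicts are explicit in the inputs.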

As with other new ecosystems, the cost of migrating to a new and largely untested language, plus an entirely new paradigm in the case of UTXOs, is considerable. We’d also become path dependent on a specific execution environment, with the risk that another Celestia rollup proves more successful at attracting adoption. There’s also the risk that future Ethereum developments such as EIP-4844 make Celestia’s main data availability use case less necessary.

While this sounds pessimistic, we are actually very optimistic about the future of modular blockchain networks. We see sovereign rollups on Celestia as a potential successor to, or perhaps the end-game scaling path for, Cosmos-based chains. While these technologies are not ready now, they represent a promising mid-term solution and are well positioned for the modular future. Celestia is certainly an ecosystem we’ll be keeping an eye on.

Polkadot

Polkadot’s mission has always been to offer heterogeneous execution environments (parachains) with shared security. Although this goal was unique when Polkadot embarked on it, that is no longer the case given the emergence of rollups.

That said, a unique advantage of Polkadot is the years of effort and mindshare that have gone into developing its cross-chain message passing protocol, XCMP (akin to IBC in Cosmos). XCMP is not yet fully functional, but once live it will play a key role in interoperability and thus in the creation of cross-chain applications.

Substrate and Cumulus are SDKs provided by the Polkadot team for building parachain-compatible blockchains. A parachain does not have to be built with Substrate/Cumulus, nor does a Substrate-based chain have to become a parachain. However, only parachains can interoperate with one another. Since the number of parachain slots is capped, a chain can only become a parachain by winning a lease in a slot auction. The cost of interoperability is therefore a sacrifice of sovereignty to Polkadot governance, which could in theory revoke a chain’s parachain status at any time. By contrast, Cosmos provides opt-in interoperability modules that require no connection to the hub chain, an approach we prefer.

Polkadot scores highly on decentralisation, with 996 validators, a diverse and thriving developer community, as well as multiple independent clients.

Despite the tech and large dev community, user adoption on Polkadot hasn’t been impressive. Currently, the top 3 parachains (Acala, Moonbeam, and Parallel) have a combined liquidity of $150M, well behind competing ecosystems. This figure also recently took a hit when aUSD, the ecosystem’s largest stablecoin, lost its peg following a bug on the Acala parachain that allowed aUSD to be minted for free.

In terms of scalability, we’ve ranked Polkadot ahead of many ecosystems yet behind Ethereum and Celestia. While Polkadot adopts scaling technologies such as data availability sampling and dispute protocols, its validators must still execute parachain (or parathread) state transitions, which limits scalability. The same reasoning applies to our scalability ranking for Near.

Overall, despite the positives, we do not see Polkadot as offering any significant advantages compared to Cosmos or rollups for DeFi dApps, and it does have some comparative disadvantages.

Fast Monoliths (e.g. Solana and now Sui, Aptos, etc.)

The everything-in-one-place approach taken by monolithic chains is certainly attractive to developers. It is much easier on multiple levels to build a dApp as a collection of smart contracts than to create a new app-chain: writing smart contracts costs much less than writing application logic at the chain level; smart contracts on an existing chain don’t require bootstrapping new validators; existing wallets and infrastructure can be used; and it’s generally easier to attract users by tapping into a pre-existing chain’s community. Composing with other applications on the same chain is also much easier than attempting to do so asynchronously across bridges. So although fast monoliths run counter to our multichain vision, we considered them carefully.

Solana

Ethereum is the original monolith, but it quickly became congested. Solana became the first credible high-throughput chain, achieving sub-second block times, the lowest of any chain in production. It managed this while maintaining decentralisation, with 1972 validators and a strong Nakamoto Coefficient relative to other PoS chains. That said, it seems many of these validators would be unprofitable if not for Solana Foundation subsidies, so it remains to be seen what the validator set will look like once the subsidies end. Jump also recently announced it will develop a separate Solana client called Firedancer, an important first step in improving client diversity.

Solana has attracted a strong ecosystem of projects and $1.5B in TVL, placing it first among non-EVM-compatible chains by TVL. The developer experience, initially perceived as difficult, has meaningfully improved since the introduction of Anchor, a framework for Solana’s Sealevel runtime. Solana has also grown a meaningful and differentiated developer ecosystem, which we believe is arguably top 3 in the space alongside Ethereum and Cosmos. This has also resulted in a differentiated culture, reflected in developers with more traditional/financial backgrounds and a thriving NFT ecosystem.

Solana’s low costs and high speed make it an attractive place to build DeFi apps, and as a result it has a rich DeFi ecosystem, with many AMMs, money markets, perps and other DeFi products (including excellent ones like Mango). While this opens up many potential integrations, the flipside is that the space is quite crowded, with a large number of competing DeFi dApps relative to the number of users and TVL. There’s also some risk of further fragmentation given the launch of challenger fast monoliths like Sui and Aptos.

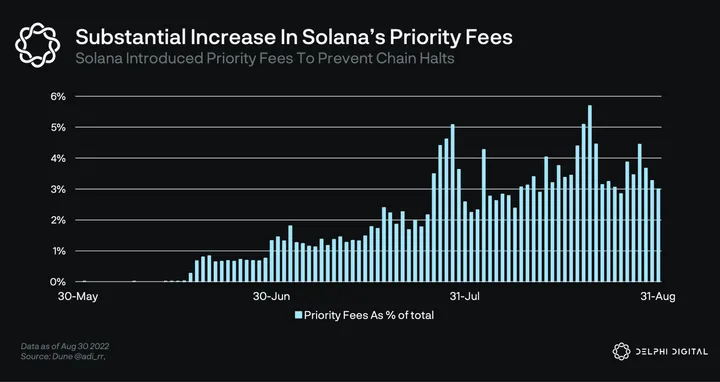

Lately, Solana’s biggest challenge has been network halts. As mentioned before, downtime is risky for some DeFi projects because liquidations can’t take effect while the chain is down, so protocols can accumulate bad debt. The root cause of the halts has been underpriced operations, which exposed the network to spam. As a workaround, Solana has implemented priority fees. These issues, and the uncertainty around when they will be fully solved, highlight the risk of adopting new technologies for DeFi apps such as those we contribute to.
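
A worked example of how downtime turns into bad debt, using hypothetical numbers (10 collateral tokens, $700 of debt, and an assumed 80% liquidation threshold); the names are illustrative and the model ignores fees and slippage.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float   # units of a volatile collateral token
    debt_usd: float           # stablecoin debt


def health(pos: Position, price: float, liq_threshold: float = 0.8) -> float:
    """Health factor; below 1.0 the position can be liquidated."""
    return pos.collateral_units * price * liq_threshold / pos.debt_usd


def bad_debt(pos: Position, sale_price: float) -> float:
    """Debt left uncovered if all collateral is sold at sale_price (ignoring fees and slippage)."""
    return max(0.0, pos.debt_usd - pos.collateral_units * sale_price)


pos = Position(collateral_units=10, debt_usd=700)

# Chain live: the position becomes liquidatable below $87.50 and is sold around $85
assert health(pos, 85.0) < 1.0
print(bad_debt(pos, 85.0))    # fully covered

# Chain halted while the price falls to $60: no liquidation happens until it restarts
print(bad_debt(pos, 60.0))    # $100 of bad debt for the protocol
```

The protocol’s solvency depends not on the price itself but on being able to act while the price moves, which is why liveness matters so much for leveraged DeFi.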

Accurately pricing resources remains a challenge for all permissionless blockchains. Today, blockchains have a single fee market, in which all resources (I/O, storage, computation, bandwidth, etc.) are metered in the same abstract unit, known as gas. This makes it difficult to price operations accurately relative to each other. Eventually, Solana (and others) will implement localised fee markets so that congestion on one dApp doesn’t degrade UX for all others. We’re keeping a close eye on this progress and its implications for Solana.
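
To illustrate the idea behind localised fee markets (this is a toy model of our own, not Solana’s actual mechanism), the sketch below raises fees only for transactions that write to congested accounts. All constants are hypothetical.

```python
from collections import Counter

BASE_FEE = 5_000          # hypothetical flat fee per tx (arbitrary units)
PER_ACCOUNT_TARGET = 4    # hypothetical writes per account per block before surcharges apply
SURCHARGE_STEP = 2_000    # hypothetical extra fee per write above the target


def local_fees(block_txs: list[set[str]]) -> list[int]:
    """Each tx is represented by the set of accounts it write-locks. Fees rise with
    demand for the specific accounts a tx touches, not with overall block demand."""
    demand = Counter(acct for accts in block_txs for acct in accts)
    fees = []
    for accts in block_txs:
        excess = max((demand[a] - PER_ACCOUNT_TARGET for a in accts), default=0)
        fees.append(BASE_FEE + SURCHARGE_STEP * max(0, excess))
    return fees


# Ten txs hammer one hot AMM pool while one unrelated user makes a transfer
txs = [{"hot_amm"}] * 10 + [{"alice"}]
print(local_fees(txs))
```

With a single global fee market, the unrelated transfer would pay the congestion price set by the AMM traffic; here it pays only the base fee.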

Overall, while Solana has faced much (often unfair) criticism for being unstable, centralised, hard to build on, etc., we are impressed by the ability of the Solana Labs team and ecosystem to improve in those areas. Solana is now credibly decentralised by most measures, and the dev experience has also improved. We’re also confident that instability will soon be a thing of the past.

However, given upcoming competition from challenger fast monoliths like Sui and Aptos, bridges that require additional trust assumptions, and the monolith thesis generally not fitting our multichain vision, we decided that Solana wouldn’t make sense to build on at this stage.

Aptos and Sui

Both Aptos and Sui aim to maximise network throughput at every node, similar to Solana but with a very different technical approach. A core idea of their designs is to optimise the mempool dissemination layer by distributing transactions as a DAG with guaranteed data availability.
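
A simplified sketch of a DAG-based mempool with availability certificates, loosely in the style of the Narwhal design. The structure and names here are our own assumptions about the general shape of such systems, not either chain’s code: each vertex carries a batch digest, becomes certified once a quorum of validators acknowledges storing it, and later-round vertices must reference a quorum of certified vertices from the previous round.

```python
from dataclasses import dataclass, field


@dataclass
class Vertex:
    author: str
    round_no: int
    digest: str                      # hash of this vertex's transaction batch
    parents: frozenset[str]          # digests of certified vertices from round_no - 1
    acks: set[str] = field(default_factory=set)


class DagMempool:
    def __init__(self, validators: list[str]) -> None:
        self.validators = set(validators)
        f = (len(validators) - 1) // 3           # max faulty validators tolerated (n >= 3f + 1)
        self.quorum = 2 * f + 1
        self.certified: dict[str, Vertex] = {}   # digest -> certified vertex

    def ack(self, v: Vertex, validator: str) -> bool:
        """A validator acks once it has stored the batch. A quorum of acks forms an
        availability certificate: at least f + 1 honest nodes hold the data."""
        if validator in self.validators:
            v.acks.add(validator)
        if len(v.acks) >= self.quorum:
            self.certified[v.digest] = v
        return v.digest in self.certified

    def has_valid_parents(self, v: Vertex) -> bool:
        """Vertices after round 0 must reference a quorum of certified previous-round vertices."""
        if v.round_no == 0:
            return True
        good = [p for p in v.parents
                if p in self.certified and self.certified[p].round_no == v.round_no - 1]
        return len(good) >= self.quorum


mempool = DagMempool(["A", "B", "C", "D"])   # n = 4 tolerates f = 1, so quorum = 3
for author in "ABCD":
    v = Vertex(author, 0, f"r0-{author}", frozenset())
    for signer in "ABC":
        mempool.ack(v, signer)
```

Because data availability is settled in the mempool, the consensus layer only needs to order small certificates rather than full batches, which is where much of the throughput gain is meant to come from.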

Both also have the potential to scale beyond the performance of an individual validator, via internal sharding within a validator and homogeneous state sharding. Internal sharding means that a validator doesn’t need to scale vertically, upgrading its specs to match the network’s load; instead, it can spawn additional machines behind a load balancer and shard its state across them while behaving as a single node. This essentially addresses a concern with Solana, namely that validator specs will bottleneck performance, and does so elegantly.
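
Internal sharding can be pictured as follows. This is a minimal sketch assuming simple hash-based partitioning of object IDs across worker machines; the class name is hypothetical and the chains’ actual partitioning schemes may differ.

```python
import hashlib
from typing import Optional


class ShardedValidator:
    """One logical validator whose state is split across several worker machines."""

    def __init__(self, num_workers: int) -> None:
        # Each dict stands in for the storage of one worker machine
        self.workers: list[dict[str, int]] = [{} for _ in range(num_workers)]

    def _worker_for(self, key: str) -> dict[str, int]:
        # Stable hash, so every request for a given object lands on the same worker
        digest = hashlib.sha256(key.encode()).digest()
        return self.workers[int.from_bytes(digest[:8], "big") % len(self.workers)]

    def write(self, key: str, value: int) -> None:
        self._worker_for(key)[key] = value

    def read(self, key: str) -> Optional[int]:
        return self._worker_for(key).get(key)


validator = ShardedValidator(num_workers=4)
for i in range(1_000):
    validator.write(f"obj{i}", i)
print([len(w) for w in validator.workers])   # load spread over four machines
```

Adding capacity then means adding workers rather than buying a bigger machine. One design caveat: with plain modulo hashing, changing the worker count remaps most keys, so a real system would more likely use consistent hashing or explicit range assignments.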

These ideas are promising and seem likely to deliver some of the lowest latency and highest throughput in the L1 landscape. This is interesting to us because the more performant and scalable a monolith turns out to be, the less pressing the need for new chains. However, given our general optimism about Web3, we still expect demand from a wide range of use cases to spill over into new specialised chains, leading to the multichain architecture we’ve outlined.

Another advantage of these chains is their use of the Move language (or a Move variant, in the case of Sui). Our initial experiments with Move on these chains were very promising, with parallelisation exposed elegantly. We’d therefore expect the smart contract development experience not to be a significant drawback compared with other ecosystems with new languages, especially once development patterns have had time to emerge. This is partly because Move has been in development since Facebook’s Libra project, where many of the teams’ developers came from.

Ultimately, these chains share the downsides of the other new technologies we’ve considered: immature DeFi ecosystems, communities and connectivity, plus substantial technical risk, which make them unsuitable for migrating projects whose communities and technical choices are rooted in other ecosystems. As a counterpoint, MoveVM and its variants are likely to be used across multiple chains, which provides some optionality should there be a need to migrate down the line. As these chains evolve and bridges are developed, they may become suitable, and we’ll definitely be keeping a close eye on them.

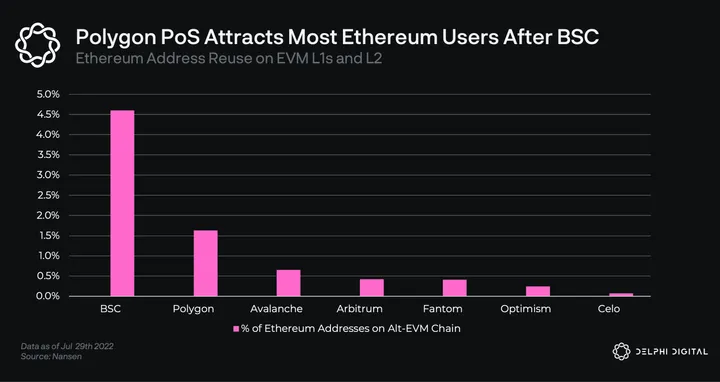

Polygon

As Ethereum’s rollup-centric roadmap was late to market, Polygon PoS filled a much-needed gap by becoming the go-to sidechain for Ethereum. Polygon is quite fast, with block times averaging around two seconds. That, combined with its very strong ecosystem, satisfies our two main requirements for building a good DeFi experience.

These factors, greatly helped by the Polygon team’s effectiveness at business development and treasury deployment, led to it gaining significant market share while solidifying the EVM’s legacy. In the EVM space, Polygon is second only to BSC in capturing Ethereum users, and it has a very rich DeFi and gaming/NFT ecosystem with $2B in TVL.

As usual, these advantages come with downsides. Polygon has repeatedly experienced deep reorgs in the past, resulting in bad UX. Furthermore, governance decisions on Polygon have at times struck us as opaque and centralised. One example was the core team’s decision to raise gas prices by a drastic 30x, which was seemingly made without much involvement from the community.

Notably, becoming a validator on Polygon isn’t currently a permissionless process. Polygon’s intention is to hold periodic auctions in which anyone can replace an existing validator by staking a higher amount. However, no auctions have been held since the cap of 100 validators was reached, so currently the only way to become a validator is for one or more existing validators to unstake. The latest community proposal addresses this issue by outlining a mechanism for the network to self-regulate, an important step in the network’s gradual decentralisation plan.
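
The intended replacement auction can be sketched as below. The rules are a simplified, hypothetical reading of the description above (an immediate outbid of the lowest stake rather than periodic auctions), not Polygon’s staking contracts; `try_join` and `MAX_VALIDATORS` are our own names.

```python
from dataclasses import dataclass
from typing import Optional

MAX_VALIDATORS = 100   # the validator cap mentioned above


@dataclass
class Validator:
    name: str
    stake: int


def try_join(validators: list[Validator], newcomer: Validator) -> Optional[str]:
    """Join freely while below the cap; at the cap, a newcomer must outbid the
    smallest stake and replaces that validator. Returns the evicted name, if any."""
    if len(validators) < MAX_VALIDATORS:
        validators.append(newcomer)
        return None
    weakest = min(validators, key=lambda v: v.stake)
    if newcomer.stake > weakest.stake:
        validators.remove(weakest)
        validators.append(newcomer)
        return weakest.name
    raise ValueError("stake too low to displace any validator")


# A full set of 100 validators; a newcomer with a larger stake displaces the smallest
validator_set = [Validator(f"v{i}", 1_000 + i) for i in range(MAX_VALIDATORS)]
print(try_join(validator_set, Validator("newcomer", 5_000)))
```

Without some mechanism like this actually running, a capped set becomes closed to newcomers, which is the permissionlessness concern raised above.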

Last but not least, the safety of the ecosystem hinges on a small committee controlling billions of dollars through the canonical Ethereum<>Polygon PoS bridge.

On the flip side, we see Polygon as an ecosystem rather than a single chain. The core team has been putting significant resources into building new scaling solutions, including the ZK rollups Hermez, Miden and Zero, the data availability layer Polygon Avail, and others. We spent some time diligencing the ZK technology in particular and were extremely impressed by what we found. Polygon Edge is also promising and aligns very closely with our specialised app-chain vision, although the tech is still nascent and connectivity between supernets is an unsolved problem. Overall, given its existing adoption, BD excellence, and the technical strength of its upcoming ecosystem, Polygon scored second highest in our ranking, and we would likely view it as a competitive option for DeFi dApps built on the EVM.

However, the trust assumptions of the current bridge and PoS chain were the main factors behind our decision that it is not the best place to build right now.

Near

The primary differentiator of Near is its dynamic sharding architecture. The goal of this design is that users and developers need not be aware of which shard they are on. Instead, validators dynamically determine (every 12 hours or so) which txs will be grouped together based on statistical analysis, effectively adding and removing shards seamlessly. Sounds like science fiction? Maybe, but it’s a uniquely ambitious, and therefore commendable, vision.
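
One way such load-based resharding could work is sketched below. It assumes shards are contiguous ranges of account IDs and that an overloaded shard is split at the median account in its observed traffic; both are our simplifying assumptions, and Near’s actual resharding logic differs in detail.

```python
import bisect


def shard_of(account: str, boundaries: list[str]) -> int:
    """Shard i holds account IDs in [boundaries[i-1], boundaries[i]); boundaries are sorted."""
    return bisect.bisect_right(boundaries, account)


def reshard(boundaries: list[str], tx_accounts: list[str], max_load: int) -> list[str]:
    """Split every shard whose observed tx count exceeds max_load at its median account."""
    load: dict[int, list[str]] = {}
    for acct in tx_accounts:
        load.setdefault(shard_of(acct, boundaries), []).append(acct)
    new_boundaries = list(boundaries)
    for accts in load.values():
        if len(accts) > max_load:
            split_at = sorted(accts)[len(accts) // 2]
            if split_at not in new_boundaries:
                bisect.insort(new_boundaries, split_at)
    return new_boundaries


# Hypothetical: two shards split at "m", with traffic concentrated before "m"
layout = ["m"]
traffic = ["alice"] * 5 + ["bob"] * 5 + ["carol"] * 5 + ["zoe"]
layout = reshard(layout, traffic, max_load=10)
print(layout)
```

Users keep addressing accounts by name throughout; only the mapping from accounts to shards changes, which is the “not cognizant of which shard” property described above.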

This architecture can achieve highly competitive scalability with 1.3 second block times. It manages to do this while maintaining acceptable decentralisation, with ~100 nodes currently and plans to make validation more inclusive via “chunk-only producers”, which should significantly reduce hardware requirements.

However, the architecture also has some drawbacks: smart contracts must communicate using asynchronous patterns, since they are not guaranteed to be on the same shard. In fact, even today, with Near operating on a single shard, app developers have to make async smart contract calls. DeFi applications typically require precise coordination among many contracts, and asynchrony can introduce additional complexity, failure modes, and time to finality. For example, a liquidation involves at least 3 different smart contracts working together (the money market, an oracle, and an exchange). Asynchrony between these components introduces additional latency and safety assumptions that could affect the market’s solvency.
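
A toy simulation of the liquidation example, assuming (for illustration only) that each asynchronous cross-contract hop lands one block later and that the collateral price falls each block. Prices, positions and function names are hypothetical; real Near receipts and protocol designs (e.g. callbacks and retries) are more involved.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float
    debt_usd: float


def liquidate(pos: Position, prices: list[float], start_block: int,
              call_chain: list[str], async_calls: bool) -> tuple[int, float]:
    """Walk a liquidation through its call chain; return (blocks elapsed, shortfall).

    Synchronous: every contract in the chain runs in the same block.
    Asynchronous (assumption): each cross-contract hop lands one block later.
    """
    for hop, contract in enumerate(call_chain):
        block = start_block + (hop if async_calls else 0)
        print(f"block {block}: {contract} sees price {prices[block]}")
    sale_price = prices[block]                  # the exchange is the last hop
    shortfall = max(0.0, pos.debt_usd - pos.collateral_units * sale_price)
    return block - start_block, shortfall


prices = [90.0, 80.0, 70.0, 60.0]               # hypothetical falling price, one entry per block
chain = ["money_market", "oracle", "exchange"]
pos = Position(collateral_units=10, debt_usd=750)

print(liquidate(pos, prices, 0, chain, async_calls=False))   # sells at the trigger price
print(liquidate(pos, prices, 0, chain, async_calls=True))    # sells two blocks later
```

In the synchronous case the price used to trigger the liquidation is the price the collateral is sold at; in the asynchronous case the market has moved by the time the exchange executes, and the gap becomes the protocol’s problem.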

The Near community and ecosystem are not as robust as others, with very little in the way of a DeFi ecosystem to integrate with right now. That said, the Near team has a strong vision and has been executing on it aggressively, raising an $800M ecosystem fund and investing heavily in BD, through which it has attracted some strong applications.

Near has built an EVM compatibility layer called Aurora, which accounts for roughly half of the network’s activity. This increases portability for EVM-based dApps. Near also has a trustless Ethereum bridge called Rainbow, providing strong connectivity with Ethereum, although connectivity with other ecosystems is currently lacking.

Overall, the complexity introduced by asynchronous calls, especially for highly composable DeFi applications, combined with the small existing ecosystem, led us to discount Near as a near-term option, although we’ll be keeping a close eye on the ecosystem’s progress going forward.

Avalanche

Architecturally, Avalanche is quite similar to Cosmos, with multiple domains (subnets instead of zones) interoperating with each other. Just like Cosmos zones, subnets are sovereign networks that bring their own security. This architecture gives dApps a smoother path: start off as smart contracts, build a community, and, once mature enough, become a subnet for further customisability. As smart contracts migrate to subnets, they also offload congestion from the primary network, which reduces fees. We saw an example of this when the P2E game DeFi Kingdoms launched its own subnet. This strikes us as a healthy way to grow an ecosystem.

The downside of Avalanche is that it’s a relatively new ecosystem. As such, its cross-subnet functionality, infrastructure tooling and dev community remain immature.

The competitive edge of Avalanche is its novel consensus. Unlike most others, Avalanche’s consensus can tolerate a large number of validators without degrading consensus performance. Today, Avalanche’s primary network is run by 1000+ consensus nodes while maintaining an impressive 1-2 second finality.
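
The reason node count doesn’t slow things down is repeated random subsampling: each node queries a small, fixed number of peers per round, however large the network is. Below is a minimal simulation of Snowflake, the simplest member of the Snow family of protocols from the Avalanche paper, on a binary choice. The parameters k, alpha and beta follow the paper’s naming, but the values are illustrative, and the sketch samples peers uniformly for simplicity rather than by stake.

```python
import random


def snowflake(n: int, red_share: float, k: int = 10, alpha: int = 7,
              beta: int = 15, seed: int = 0, max_rounds: int = 1_000) -> list[str]:
    """Simulate Snowflake-style repeated sampling on a binary choice.

    Each round, every undecided node queries k random peers, independent of n.
    If at least alpha of the k answers agree on a colour, the node adopts it and
    extends its streak (restarting at 1 if the colour changed); otherwise the
    streak resets to 0. A node decides once its streak reaches beta.
    """
    rng = random.Random(seed)
    prefs = ["red" if i < int(n * red_share) else "blue" for i in range(n)]
    streak = [0] * n
    decided = [False] * n
    for _ in range(max_rounds):
        if all(decided):
            break
        snapshot = list(prefs)               # everyone answers with last round's preference
        for i in range(n):
            if decided[i]:
                continue
            # Sample k distinct peers other than node i itself
            peers = [j + (j >= i) for j in rng.sample(range(n - 1), k)]
            votes = [snapshot[j] for j in peers]
            winner = next((c for c in ("red", "blue") if votes.count(c) >= alpha), None)
            if winner is None:
                streak[i] = 0
            elif winner == prefs[i]:
                streak[i] += 1
            else:
                prefs[i], streak[i] = winner, 1
            decided[i] = streak[i] >= beta
    return prefs


# 1,000 nodes, 70% initially preferring red; each query still touches only k = 10 peers
final = snowflake(n=1_000, red_share=0.7)
print(set(final))
```

Per-round messaging per node stays at k whether there are 100 or 10,000 nodes, which is what lets the validator count grow without degrading finality.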

However, it’s debatable how meaningful the consensus node count is for censorship resistance and decentralisation unless it is accompanied by a high Nakamoto Coefficient. Ultimately, each validator’s influence is determined by its stake weight, and in permissionless setups stake typically becomes concentrated in the hands of a few due to centralising tendencies. In the absence of careful measures around MEV and accountability, we don’t find the number of consensus nodes a very useful measure of decentralisation.

Cosmos

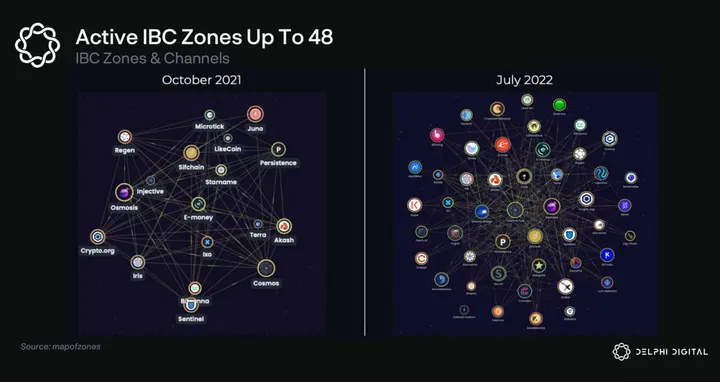

Cosmos is best described as an ecosystem of interoperable blockchains connected via the IBC protocol.

As stated in Part I, we expect to see a shift towards specialised or application-specific chains due to the benefits of customisability. However, this would be infeasible if every chain had to design and implement consensus, storage, networking and so on from scratch. The Cosmos SDK is a collection of customisable modules that together act as a template for creating new blockchains, bringing the effort within reach of many dApps.

Substrate from Polkadot provides a similar toolkit, but anecdotally, the consensus from our conversations is that it is harder to work with than the Cosmos SDK, and far fewer app-chains built with it are in production. And as mentioned earlier in the Polkadot section, interoperability is only available to parachains, whereas Cosmos chains can interoperate directly with one another without requiring the involvement or approval of the Cosmos Hub.

IBC (Inter-Blockchain Communication) is the interoperability protocol for communicating arbitrary data between arbitrary state machines (blockchains). While in the future any blockchain with finality can implement IBC and join the Cosmos network, the only production-ready implementation is currently a set of Cosmos SDK modules. IBC is trust-minimised: although two IBC-enabled chains need a third-party relayer, the relayer only has to pass along 1) signatures of the source chain’s validators attesting to a block header, and 2) a Merkle proof that, together with that block header, proves a certain tx exists in the source chain’s block. The relayer cannot forge either of these. (For more on Cosmos, you can read our previous Pro report on it here.)
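
The two checks described above can be sketched as follows. This is a deliberately simplified model: signature verification is abstracted away (we assume `signers` already contains only validators whose signatures were verified), the Merkle tree is a plain binary SHA-256 tree that duplicates the last node on odd levels, and real IBC light clients use different tree constructions (ICS-23 proofs over application state) and also handle validator-set changes and trusting periods. All names are our own.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])          # duplicate the last node on odd levels
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the bool says whether the sibling is on the right."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling > index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return proof


def verify_inclusion(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the root from the leaf; a relayer cannot fake this without breaking SHA-256."""
    node = h(leaf)
    for sibling, sibling_on_right in proof:
        node = h(node + sibling) if sibling_on_right else h(sibling + node)
    return node == root


def header_trusted(signers: set[str], voting_power: dict[str, int]) -> bool:
    """Accept a header (and the root it commits to) only if validators holding
    more than 2/3 of total voting power signed it."""
    signed = sum(voting_power.get(v, 0) for v in signers)
    return 3 * signed > 2 * sum(voting_power.values())


txs = [b"tx0", b"tx1", b"tx2", b"tx3", b"tx4"]
root = merkle_root(txs)                      # committed to in the signed block header
power = {"v1": 40, "v2": 30, "v3": 20, "v4": 10}
print(header_trusted({"v1", "v2"}, power) and verify_inclusion(b"tx2", merkle_proof(txs, 2), root))
```

The receiving chain only has to trust the source chain’s validator set; the relayer merely carries data that either verifies or doesn’t.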

We see IBC’s trust assumptions as a huge advantage. Most bridges work by introducing one or multiple different stakeholder groups sitting between two chains and relaying messages, creating additional trust assumptions and attack vectors. IBC only requires trust in the chains being connected. Given cross-chain messaging is at the heart of the cross-chain architecture we’re exploring, ensuring the bridge is trust-minimised is a key consideration for us.

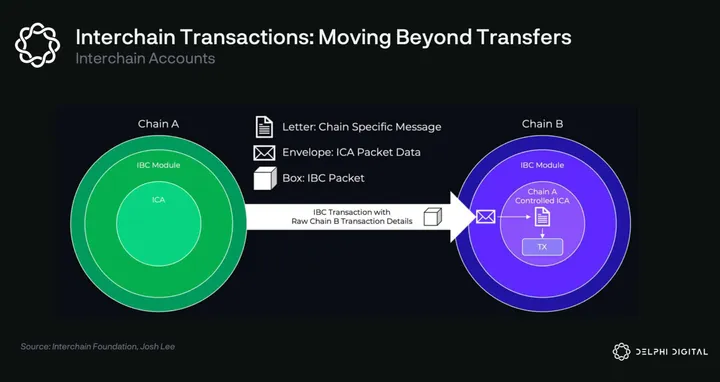

IBC provides more functionality than just message passing. Interchain Accounts (ICA) is a new feature that allows a blockchain to control an account on another chain via IBC. With ICA, multi-chain UX is drastically simplified: instead of opening many accounts across chains, moving tokens between them and paying fees in different denominations, users will be able to use dApps across different chains from a single account. For a cross-chain project, this feature would, for example, allow governance on a central chain to control smart contracts on connected chains. There are also Interchain Queries, which allow one chain to query the state of another. These features are still immature and not yet ready for production use, but once ready they will significantly broaden the design space for cross-chain applications.

Despite its technical advantages, the Cosmos ecosystem is still small, with under $1B in TVL, most of which sits on chains without CosmWasm or IBC support. The DeFi space on Cosmos is going through a big shift at the moment. As noted in the introduction, Cosmos’ largest chain, Terra, recently collapsed, which led to most of the assets in Cosmos being destroyed or fleeing. Terra was a blessing and a curse for Cosmos: while the implosion hit Cosmos harder than any other ecosystem, Terra also brought a large, passionate community of users and developers, many of whom are now deciding to stay. We’ve already seen Terra projects such as Kujira and Apollo commit to launching app-chains, and others relaunching as smart contracts on existing chains, such as Levana on Juno and the upcoming Vortex (formerly Retrograde) on Sei Network. Beyond ex-Terra projects, other large projects are also seeing the benefits of Cosmos, dYdX being the most notable. Nonetheless, liquidity in the ecosystem is still developing, so committing to build in Cosmos is a bet on future growth.

As for speed, block times vary from chain to chain and depend on a set of tradeoffs, such as the number and geographic distribution of validators. Historically, a sufficiently decentralised Cosmos chain would typically have block times of around 6 seconds, which is mediocre. Newer chains are improving on that, however: Evmos and Injective, for example, achieve ~2 second block times with a globally distributed validator set, and Sei achieves ~1 second block times on testnet. From our conversations with the Tendermint team, there appears to be significant headroom for improvement via storage and consensus optimisations, with sub-second block times appearing feasible in the near future for some applications. In addition, Cosmos’ modularity is an advantage here, as independent teams can work on their own consensus improvements. One example is Optimint, being developed by the Celestia team, which would enable ABCI-compliant rollups on Celestia, giving Cosmos chains a potential future path to scalability.

As for decentralisation, validator counts on Cosmos chains are much lower than on the Solana and Ethereum PoS networks. However, we feel that validator counts are not actually a good measure of decentralisation. Instead, we prefer to look at the Nakamoto Coefficient (the number of colluding entities it takes to censor transactions), which is 7-10 for most Cosmos chains, 2 for Ethereum 2.0 and 31 for Solana. A big positive for Cosmos is its highly decentralised core development, with multiple independently funded teams contributing. Informal, Strangelove, Interchain GmbH, All in Bits, Confio, Regen Network, and others all contribute parts of the core Cosmos codebase, while others such as Quicksilver, P2P, and SimplyVC contribute peripheral components such as ICQ. Having many unaffiliated, independently motivated contributors to an ecosystem, as with Ethereum and Bitcoin, is the only model proven to achieve ‘sufficient decentralisation’ from a legal/regulatory perspective.
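
A minimal sketch of how a Nakamoto Coefficient can be computed from a stake distribution, assuming the one-third threshold relevant to BFT chains (entities controlling more than a third of stake can halt or censor). The stake figures are hypothetical and chosen to echo the Avalanche point above: 1,000 validators, yet three of them are enough.

```python
def nakamoto_coefficient(stakes: list[float]) -> int:
    """Smallest number of entities whose combined stake exceeds one third of the total."""
    total = sum(stakes)
    running = 0.0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if 3 * running > total:           # strictly more than one third
            return count
    raise ValueError("empty stake distribution")


# Hypothetical: 1,000 validators, but three large holders dominate the stake
concentrated = [200] * 3 + [1] * 997
print(len(concentrated), nakamoto_coefficient(concentrated))

# Hypothetical: 9 validators with equal stake need 4 colluders to exceed a third
print(nakamoto_coefficient([1] * 9))
```

A network with fewer validators but a flatter stake distribution can therefore score higher than one with many validators and concentrated stake, which is why we weight this metric over raw validator counts.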

Conclusion: Cosmos

After considering the options we summarised above, we decided that our best path was to focus our research & development efforts on the Cosmos ecosystem.

As mentioned in Part I, we believe that the space will increasingly fragment into a mesh network of generalised smart contract chains and specialised app-chains connected via trust-minimised bridges. In such a world, multiple DeFi hubs will emerge, each with their own set of tradeoffs, ecosystems, and communities. DeFi dApps that are deployed on multiple platforms, with well-designed architectures, will benefit from liquidity and other network effects that will make it difficult for local DeFi dApps to compete. We feel Cosmos is best positioned to both benefit from the increasing number of app-chains and enable the most advanced cross-chain architectures.

Cosmos is also fast enough to enable a seamlessly integrated DeFi UX with order books, high leverage, and fast trade execution. At the same time, we feel it’s sufficiently decentralised to provide strong security, liveness and censorship-resistance guarantees, especially compared to many of the alternatives we considered, which are more nascent and thus have more centralisation vectors.

Its biggest weakness is its ecosystem, with current TVL lower than that of individual Ethereum L2s. Relatedly, Cosmos also suffers from a lack of hype, funding, and memeability, perhaps due to the absence of a Schelling point L1 token to rally around. While we believe that the inflow of projects following the Terra crash and a growing number of specialised app-chains will remedy this somewhat, it’s undeniable that we’re betting on future ecosystem growth. This remains the biggest risk to Cosmos and to our thesis.

For these reasons, we’ll be focusing on the Cosmos ecosystem for the foreseeable future. That said, we’re not Cosmos maximalists (except Larry), and so we’ll continue actively researching and monitoring other ecosystems. The following are a few of the areas we’ll be watching closely as a sign that our thesis may need revising.

Growth of monolith chains relative to app-chains: If most interesting dApps are deployed on monoliths and never move to their own execution environments, this would be an invalidation of our thesis. After all, right now the costs of deploying app-chains are much higher than deploying smart contracts on monoliths, while the composability, brand and UX advantages of popular general purpose chains are also strong. In addition, something like isolated state auctions could make monoliths more competitive in terms of resource cost and predictability. We’ll be monitoring the fastest monoliths closely and be ready to reconsider our thesis if we see signs of this playing out.

Emergence of trustless bridges: Throughout our analysis we discounted many chains due to weak connectivity, meaning they either lack bridging infrastructure altogether or the existing infra is immature and/or has undesirable trust assumptions. That said, there are multiple extremely well-funded, brilliant teams working on bridge solutions, and we’re confident this will improve over time. We’ll be monitoring this closely to see whether anything comparable to IBC in terms of trust assumptions emerges, or whether IBC (which is chain-agnostic) propagates outside of Cosmos. We’ll also be paying particularly close attention to connectivity between specialised execution environments such as Avalanche subnets and Polygon supernets, since these fit most closely with our multichain thesis.

Maturity of high-potential but currently high-risk tech: Throughout this process, we researched several nascent technologies that have some chance of becoming best-in-class end-game tech, particularly in the rollup space, such as ZK rollups and Celestia rollups like Fuel V2. We’ll be monitoring these closely and will look to deploy once we think the risks have been minimised.

To conclude, this report has taken us deep down a variety of different rabbit holes and we’ve emerged more bullish than ever on the future of the space. There are multiple well-funded, highly talented teams working on making order of magnitude improvements to scalability, connectivity, and DX/UX. Collectively, these improvements will enable entirely new kinds of cross-chain DeFi applications, which we believe will serve to pull more activity on-chain.

While we’ve put considerable effort into researching each ecosystem and ensuring the accuracy of our analysis, given the sheer breadth of material covered we’re bound to have missed something. If you think we have, we welcome you to reach out.
