Data Will Get Us to AGI

We’re getting closer to AGI, but we’re not there yet.

Some leading voices in AI – like OpenAI’s Sam Altman – believe we’re on the cusp of achieving it, while others argue we’re still years away from systems that can truly generalize like humans.

The best AI models now outperform most humans at many cognitive tasks, whether it be writing code, solving complex math problems, reasoning through multi-step scenarios, or even passing medical and law exams. But at the same time, these same models struggle with tasks humans find trivial. The path forward isn’t just about bigger models and more compute.

It’s about data.

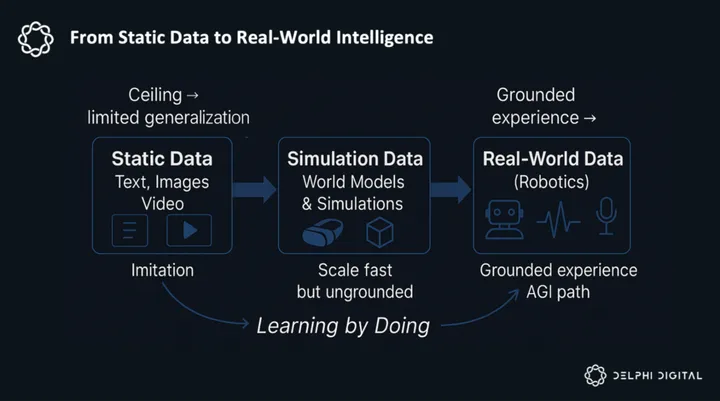

Current AI systems hit a ceiling because they’re trained on human knowledge – text, images, video demonstrations of tasks, etc. – which works for mimicking humans, but leaves the real world untapped. You can’t reach true AGI by only learning from static data. Like humans, AI needs to learn through experience.

David Silver of Google DeepMind and reinforcement learning pioneer Richard Sutton call this the transition to the “Era of Experience,” where AI breakthroughs require continuous streams of real-world or simulated interaction.

Consider the following breakthroughs by Google DeepMind:

- AlphaGo – learned from a large set of human-played Go games, then played millions of games against itself, experimenting with moves no human had ever tried. With this approach, it beat the world champion by devising strategies that humans had never used.

- AlphaProof – trained on millions of math problems, then used trial and error to test novel approaches to proofs. As a result, it generated roughly 100M new proofs of its own through interaction with a formal proving system and became the first AI system to reach medal-level performance at the International Mathematical Olympiad.

These examples show what’s possible when AI moves beyond limited human data, and this experience-driven approach is how I envision us eventually reaching ASI (artificial superintelligence). Physical embodiment allows AI to persist in the real world, interact with it, and learn from the effects of its actions, potentially accelerating discovery and pushing beyond human cognitive limits.

Most AI models learn by merely studying what humans have already done or imagined, but DeepMind has shown that models can surpass human capabilities by doing. Imagine what could be accomplished if these techniques could be applied to the physical world.

The issue today is that gathering the type of experiential training data needed to simulate the physical world is vastly more complex. AlphaGo and AlphaProof succeeded because they had access to training data that taught them the rules of their environments before they were let loose to experiment with their own approaches. In the physical world, however, the countless data modalities that need to be captured (vision, touch, force, audio, etc.), as well as the logistical challenges of organizing consistent data collection at scale, make this nearly impossible to accomplish.

Genie 3 is here – it can generate an entire world simulation that you can interact with in real-time, just from a text prompt! It’s pretty mind-blowing really when you stop to think about it, and it’s rapidly improving – one day we will be able to build the Holodeck for real! pic.twitter.com/wJbXfEFJm2

— Demis Hassabis (@demishassabis) August 5, 2025

World models like DeepMind’s Genie 3 (see above) should help speed this process up by creating interactive environments that agents can train in far faster and at far larger scale than physical trials allow, but even state-of-the-art simulators continue to struggle with basic physics, multi-agent interactions, long-horizon logic, and the complex action spaces found in real-world environments.

The reason for this is that they lack grounding in real-world data. Simulations can accelerate learning at scale, but without anchoring these environments to the physics and variability of the real world, agents risk learning flawed or incomplete causal rules. To put this into perspective, AlphaGo wouldn’t have been able to beat the world’s best Go player if it had initially been trained on games full of illegal moves.
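To make the grounding problem concrete, here is a toy sketch of how a rule learned in a slightly wrong simulator fails in reality. Everything in it is an assumption for illustration: the drag-free 45-degree projectile, the function names, and the deliberately wrong simulator gravity of 8.0 m/s². It does not describe how Genie 3 or any real simulator works.

```python
import math

SIM_GRAVITY = 8.0    # hypothetical simulator constant, deliberately wrong
REAL_GRAVITY = 9.81  # approximate gravitational acceleration on Earth, m/s^2


def launch_speed_for(distance_m: float, gravity: float) -> float:
    """Speed needed to land at distance_m when launching at 45 degrees, no drag."""
    # Range at 45 degrees is d = v**2 / g, so v = sqrt(d * g).
    return math.sqrt(distance_m * gravity)


def landing_distance(speed: float, gravity: float) -> float:
    """Where a 45-degree launch at the given speed actually lands."""
    return speed ** 2 / gravity


def sim_to_real_miss(target_m: float,
                     sim_gravity: float = SIM_GRAVITY,
                     real_gravity: float = REAL_GRAVITY) -> float:
    """How far short of the target the throw lands in the real world."""
    # The agent "learns" its launch speed inside the simulator...
    speed = launch_speed_for(target_m, sim_gravity)
    # ...but the throw is carried out under real physics.
    return target_m - landing_distance(speed, real_gravity)


if __name__ == "__main__":
    print(f"Miss on a 10 m throw: {sim_to_real_miss(10.0):.2f} m")
```

With these made-up numbers, a throw tuned perfectly in simulation lands roughly 1.8 m short of a 10 m target in the real world. When the simulator's constant matches reality, the miss disappears, which is the sense in which real-world data anchors a simulation.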

My thesis: robotics is the critical missing ingredient for true AGI. It’s the only path to giving AI real, grounded understanding of the world through continuous, real-world data.

In the near future, robots will be able to act in physical environments, test hypotheses, and learn from the consequences of their actions to generate the continuous streams of data that simulations can’t provide – I’m talking about unlocking capabilities that could transform every industry. Imagine AI systems that can conduct scientific experiments autonomously, develop new manufacturing processes through physical iteration, or solve complex logistics problems by actually testing solutions in the real world.

Whoever consistently produces this high-quality, real-world data at scale will be able to sell it at a premium.

So What’s Holding Robotics Back?

I’ll start with some background.

Robotics companies today collect training data through a labor-intensive process called teleoperation, where human operators remotely control robots to demonstrate how tasks should be performed. Whether it’s folding laundry, sorting packages, or cleaning surfaces, these demonstrations are recorded as datasets that capture the robot’s movements, sensor readings, and environmental conditions.

Here’s how it works: a human operator uses controllers (joysticks, VR rigs, or motion capture equipment) to guide a robot through a task while cameras and sensors record every action. This creates “action-planning” data that shows the robot the sequence of movements needed to complete specific tasks.
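As a rough illustration of what one recorded demonstration might look like, here is a minimal sketch of an episode log. The schema is hypothetical: the class names, field names, and the `fold_towel` task label are assumptions for illustration, not the format any particular robotics company uses.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Timestep:
    """One recorded instant of a teleoperated demonstration (hypothetical schema)."""
    t: float                       # seconds since the episode started
    operator_command: List[float]  # what the human sent, e.g. joystick axes
    joint_positions: List[float]   # robot state read back from its encoders
    sensors: Dict[str, float]      # other readings, e.g. gripper force


@dataclass
class Episode:
    """A full demonstration of one task, stored as an ordered list of timesteps."""
    task: str
    steps: List[Timestep] = field(default_factory=list)

    def record(self, step: Timestep) -> None:
        # Timestamps must increase so the order of movements is preserved.
        if self.steps and step.t <= self.steps[-1].t:
            raise ValueError("timesteps must be recorded in increasing time order")
        self.steps.append(step)

    def action_sequence(self) -> List[List[float]]:
        # The "action-planning" view: only the ordered operator commands.
        return [s.operator_command for s in self.steps]


def demo_episode() -> Episode:
    """Build a tiny three-step episode with made-up values."""
    episode = Episode(task="fold_towel")
    episode.record(Timestep(0.0, [0.0, 0.0], [0.10, 0.20], {"grip_force_n": 0.0}))
    episode.record(Timestep(0.1, [0.5, 0.0], [0.12, 0.20], {"grip_force_n": 0.4}))
    episode.record(Timestep(0.2, [0.5, -0.2], [0.15, 0.18], {"grip_force_n": 1.1}))
    return episode
```

Calling `demo_episode().action_sequence()` returns the ordered list of commands the operator issued, which is the part of the record a model would learn to imitate. The joint positions and sensor readings give the context each command was issued in.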

This tele-op setup runs on
