As an ecosystem of chains, Superchain has the responsibility of satisfying not just the users who are looking for an ecosystem of dApps, but also the dApp builders yearning for easy access to liquidity and the chain builders who want to be closer to an existing user base and liquidity. Interoperability affects all three of these stakeholders. With liquidity fragmented across chains, all of them are aligned on one goal: interoperability.

In this report,

- We will first understand the expectations of stakeholders from an ecosystem of chains.

- We will dive into how different ecosystems of chains have solved the interoperability problem, the trade-offs they’ve made, and what we can learn from them.

- We will study the EVM-based ecosystem through the lens of laissez-faire development and attempt to understand what interoperability might look like without any preset standards.

- We will then look into the main topic of the report, shared sequencing. It is the solution that will unite not just Superchain, but the whole of Ethereum.

- Finally, we’ll compare different solutions and propose different ways the Optimism Foundation can potentially achieve seamless interoperability inside the Superchain ecosystem. This may include adopting certain interop standards, modifying Superchain sequencers to enable certain features, or just backing off and letting the market solve the problem itself.

Interoperability, although a niche space inside Crypto, is broad enough to have many concepts that might be new for many people reading this report. So we would like to define a few concepts that are necessary before we dive into the existing solutions.

Essential Definitions of Interoperability

Interoperability of blockchains first became prominent when Bitcoin bridged to Ethereum through the WBTC token, minted and custodied by BitGo in 2019. WBTC is a simple token transfer bridge. This is the most widely used form of interoperability that exists today. But there is another kind of interoperability that is growing in demand: secure data transfer between smart contract chains. These two are the foundations of interoperability.

Token Bridges

Since blockchains are primarily financial, it should come as no surprise that the most prominent use case for interoperable solutions is moving financial assets across chains.

There are two methods for transferring tokens across chains: Liquidity Networks, which work like a DEX for swapping, and Lock and Mint or Burn and Mint Bridges, where tokens are locked (Wormhole) or burned (Circle’s CCTP) on the source chain and representations of them are minted on the destination chain. For both token transfer methods, some entity needs to ensure that the tokens are locked or burned on the source chain before minting on the destination chain. In other words, the state change on the source chain should be verified on the destination chain.

In the case of WBTC, BitGo ensures that BTC is locked in its custody before minting WBTC on Ethereum. Wormhole uses a set of guardians to ensure that the tokens are locked/burned before minting/unlocking them. It can also be a single server passing data and verifying the state change on the source chain (Hyphen) or an entire PoS chain with validators doing the same (Axelar). It can even be an Optimistic Oracle (UMA).
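The lock, verify, then mint flow described above can be sketched as follows. This is a minimal toy model under stated assumptions: `SourceChain`, `DestinationChain`, and the `verifier` callable are hypothetical names for illustration, not the API of any bridge mentioned here. The verifier stands in for whichever entity (custodian, guardian set, PoS chain, optimistic oracle) attests to the source-chain state change.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set


@dataclass
class SourceChain:
    """Toy source chain that records lock events by id (hypothetical model)."""
    balances: Dict[str, int]
    locked: Dict[str, int] = field(default_factory=dict)  # event_id -> amount

    def lock(self, user: str, amount: int, event_id: str) -> None:
        if self.balances.get(user, 0) < amount:
            raise ValueError("insufficient balance to lock")
        self.balances[user] -= amount
        self.locked[event_id] = amount


@dataclass
class DestinationChain:
    """Toy destination chain that mints only after the lock is verified."""
    verifier: Callable[[str, int], bool]  # stand-in for guardians, oracle, etc.
    minted: Dict[str, int] = field(default_factory=dict)
    processed: Set[str] = field(default_factory=set)  # replay protection

    def mint(self, user: str, amount: int, event_id: str) -> None:
        if event_id in self.processed:
            raise ValueError("event already processed")
        if not self.verifier(event_id, amount):
            raise PermissionError("lock event not verified on source chain")
        self.processed.add(event_id)
        self.minted[user] = self.minted.get(user, 0) + amount


source = SourceChain(balances={"alice": 100})
# Assumption: the verifier can read the source chain's lock records directly.
dest = DestinationChain(verifier=lambda eid, amt: source.locked.get(eid) == amt)

source.lock("alice", 40, event_id="lock-1")
dest.mint("alice", 40, event_id="lock-1")
```

The security of the whole bridge reduces to how trustworthy `verifier` is, which is why the choice between a custodian, a guardian set, or a PoS chain matters so much.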

Token bridges are an important concept to understand for Superchain because…

Messaging Bridges

Protocols that pass arbitrary data from one chain to another chain in a secure manner are called Messaging Bridges.

Token bridges are a subset of messaging bridges. That’s because there are other use cases for messaging bridges, like cross-chain governance, multi-chain identities, etc., although token transfer across chains remains the biggest use case for messaging bridges.

In general, messaging bridges allow for any piece of arbitrary data to be passed between chains. This can be value, instructions to execute a transaction on the destination chain, or just a proof that something happened on the source chain. Each messaging bridge creates a unique system for sending, verifying, and executing cross-chain data transfers, and these protocols compete against each other on ease of integration, chain support, speed of verification, cost of verification, extensibility, and a few other factors. As with token bridges, the security of the underlying messaging bridge can vary: zk proofs, PoA, optimistic oracles, centralized oracles, or some combination thereof can be used to prove that some event X happened on chain A.

Messaging bridges are an important construct for Superchain because if Superchain can facilitate message passing in a global, secure manner from any OP stack chain to another OP stack chain, it will allow for universally accepted tokens, contracts, and developer tooling.

Now, just as token transfers are a subset of message transfers, message transfers are just one subset of the total interop stack. Let’s fully zoom out and look at the entire interop stack.

The Interoperability Stack

Just like how many underlying layers work in tandem to make the Internet possible, we believe it is beneficial to divide the Internet of blockchains to allow layer-level optimizations.

The interoperability stack can be categorized into 3 layers.

- The Application Layer: The layer where developers build cross-chain applications like token transfers, intent protocols (CowSwap, UniswapX), cross-chain governance, etc. This is the user-facing layer; everything below it is something the user does not need to know about.

- The Transport Layer: This layer consists of the messaging interfaces that developers interact with to build cross-chain applications. It includes components like encoding/decoding logic, a message ordering system, replay resistance logic, timeouts, relaying, and retries. These are the features a transport layer needs to ensure that messages get delivered under the right settings.

- The Verification Layer: This layer consists of entities tracking the source chain events to create a proof or an attestation that a source chain event has occurred and is secure to be transmitted to the destination chain(s). These entities can be a single server, a multi-sig, a PoS chain with validators, a zk-proof, an optimistic oracle, or the source chain validators themselves (the best-case scenario).
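To make the layer boundaries concrete, here is a minimal sketch of how a transport layer might delegate trust to a pluggable verification layer. All class and method names (`Message`, `VerificationLayer`, `TrustedServer`, `TransportLayer`) are hypothetical and chosen for illustration; real protocols define far richer interfaces.

```python
from dataclasses import dataclass
from typing import List, Protocol, Set, Tuple


@dataclass(frozen=True)
class Message:
    src_chain: str
    dst_chain: str
    nonce: int
    payload: bytes


class VerificationLayer(Protocol):
    """Anything that can attest that a message was emitted on the source chain."""
    def attest(self, msg: Message) -> bool: ...


class TrustedServer:
    """Simplest possible verifier: a single server vouching for what it saw."""
    def __init__(self) -> None:
        self.seen: Set[Message] = set()

    def observe(self, msg: Message) -> None:
        self.seen.add(msg)

    def attest(self, msg: Message) -> bool:
        return msg in self.seen


class TransportLayer:
    """Handles replay resistance and delivery; trust is left to the verifier."""
    def __init__(self, verifier: VerificationLayer) -> None:
        self.verifier = verifier
        self.delivered: Set[Tuple[str, int]] = set()
        self.inbox: List[Message] = []  # what the application layer consumes

    def deliver(self, msg: Message) -> bool:
        key = (msg.src_chain, msg.nonce)
        if key in self.delivered or not self.verifier.attest(msg):
            return False
        self.delivered.add(key)
        self.inbox.append(msg)
        return True


verifier = TrustedServer()
transport = TransportLayer(verifier)
msg = Message("chain-a", "chain-b", nonce=1, payload=b"set_fee=30")
verifier.observe(msg)
first = transport.deliver(msg)   # verified and new, so it is delivered
replay = transport.deliver(msg)  # same nonce again, so it is rejected
```

Because `TransportLayer` only depends on the `attest` method, the single server could be swapped for a multi-sig or a light client without touching application code, which is the practical benefit of the separation.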

The separation of these layers is important for the interoperability space because it allows experts to emerge in each of these layers. These layers are complicated enough alone, but if we expect just one team to work on all three of these layers, they are bound to make mistakes. That is probably why we saw a lot of hacks in the interoperability space.

Modularization of these layers compels us to dive into how different solutions in the market have focused on specific layers and the trade-offs they’ve made. But just before that, let us look at the goals we have in the interoperability space along with some more definitions. What do we aim to achieve with all this?

The Monolithic Experience – Synchronous Atomic Composability

Over the past few years, the standards for user experience in terms of interoperability have increased multi-fold. Bear market users saw bridge aggregators like Jumper and Bungee support bridging from any chain to any other chain out there with a good UX. We are used to this.

However, the new entrants of the bull market will not be satisfied by this experience. Cross-chain swaps often fail due to slippage, users may not have gas on the destination chain, bridge transfers take anywhere from 10 seconds to 15 minutes depending on the chains and amounts involved, and an unbatched cross-chain swap can require 4-5 approvals. These are just half of the interoperability issues.

The new entrants seem to be happy with the Solana experience. Now that the EVM ecosystem has a competitor with similar fees (after blobs) but a much simpler UX, there arises a dire need to compete with these high-performance chains on UX. And for that, we need:

Synchronous Atomic Composability

Let’s dissect this phrase. First, what is composability?

Any smart contract can interact with open functions inside a chain. This allows for a lot of innovation to be built on top of other protocols inside a chain. This applies to token contracts as well. A token can move inside a chain, be lent on a lending market, borrowed, and be used for some yield strategies. All these things become extremely hard without composability.

However, it is possible to retain composability across chains. It requires an ecosystem of chains like Superchain to take a step forward in search of such solutions. That is what we will discuss here.

Asynchronous Composability: This is the most common method of composability right now. It is possible to asynchronously bridge tokens from one chain to another by simply watching a burn/lock event and proving that it happened. Similarly, it is possible to call another smart contract, change contract parameters through governance, or manage a yield strategy across chains through existing interop solutions. In this method, the destination chain action is executed in a few seconds to a few minutes after the source chain event.

The problem with asynchronous composability is that it is fairly easy for anyone to figure out what will happen on the destination chain by just watching the source chain as the intent protocol requires the intent to be posted on-chain. Plus, this method is much more vulnerable as a hacker can provide fake proof that the source chain event occurred either due to software bugs or due to security assumptions not holding true.

Synchronous Composability: In this case, both the source chain event and the destination chain action are added to their corresponding blocks at the same time by the validators or the sequencers of the chains. The users are trusting the validators/sequencers of both chains/rollups to behave here. Or maybe, they can just trust the cryptography if such a system can be built.

All batched actions in a block on the same chain happen synchronously.

It is also possible to batch multiple transactions in a single block and write the smart contract logic in such a way that either all those transactions are included in the block successfully or none of them are included. This concept is called Atomicity. It is helpful to make sure to have atomicity to avoid getting stuck with an IOU token in the middle. We can achieve this in cross-chain as well.

Synchronous Atomic Composability: In simple terms, this is a way of ensuring either both the source chain and the destination chain transactions are included or none are included in the blocks. There are two more important definitions required here. Atomic Inclusion and Atomic Execution.

- Atomic Inclusion: This is a feature where the sequencers of two rollups ensure the inclusion of two transactions in the rollup blocks before executing them. If one of the transactions doesn’t end up being included, the other transaction should also revert. Inclusion does not guarantee execution because the state of one of the chains could make it impossible to execute that transaction and update the state. This is very common in DeFi, especially during DEX swaps due to slippage or while borrowing when there are no funds left to borrow. Atomic Inclusion is still a good enough feature for infallible transactions, like a simple token burn and mint. Unless there is a burn/mint cap, these transactions are most likely to succeed. There are many use cases for just Atomic Inclusion.

- Atomic Execution: The users and developers get an execution guarantee in atomic execution. That is, if all the transactions aren’t successfully executed in an atomic bundle, none of those transactions will be included in the blocks.
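The difference between the two guarantees can be illustrated with a toy model. This sketch assumes one transaction per chain, models each chain's state as a dictionary, and uses hypothetical helpers (`burn`, `mint`, `atomic_inclusion`, `atomic_execution`); it is not how any sequencer actually implements these guarantees.

```python
import copy
from typing import Callable, Dict, List, Tuple

State = Dict[str, int]
Tx = Callable[[State], None]        # mutates state, raises ValueError on failure
Bundle = List[Tuple[State, Tx]]     # one (chain state, transaction) pair per chain


def burn(amount: int) -> Tx:
    def tx(state: State) -> None:
        if state["supply"] < amount:
            raise ValueError("nothing left to burn")
        state["supply"] -= amount
    return tx


def mint(amount: int, cap: int) -> Tx:
    def tx(state: State) -> None:
        if state["supply"] + amount > cap:
            raise ValueError("mint cap exceeded")
        state["supply"] += amount
    return tx


def atomic_inclusion(bundle: Bundle) -> List[bool]:
    """Every tx is included; each one then succeeds or reverts on its own."""
    results = []
    for state, tx in bundle:
        snapshot = copy.deepcopy(state)
        try:
            tx(state)
            results.append(True)
        except ValueError:
            state.clear()
            state.update(snapshot)  # this tx reverts, the others are unaffected
            results.append(False)
    return results


def atomic_execution(bundle: Bundle) -> bool:
    """Commit the bundle only if every tx executes successfully."""
    trial = [(copy.deepcopy(state), tx) for state, tx in bundle]
    try:
        for state_copy, tx in trial:
            tx(state_copy)
    except ValueError:
        return False  # nothing is included, original states are untouched
    for (state, _), (state_copy, _) in zip(bundle, trial):
        state.clear()
        state.update(state_copy)
    return True


chain_a: State = {"supply": 100}
chain_b: State = {"supply": 95}
bundle: Bundle = [(chain_a, burn(10)), (chain_b, mint(10, cap=100))]

executed = atomic_execution(bundle)  # mint would exceed the cap, so nothing commits
after_execution = (chain_a["supply"], chain_b["supply"])
included = atomic_inclusion(bundle)  # burn succeeds, mint reverts
after_inclusion = (chain_a["supply"], chain_b["supply"])
```

With only atomic inclusion, the burn goes through while the mint reverts, which is exactly the stuck-in-the-middle outcome that a mint cap can cause. Atomic execution leaves both chains untouched.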

As an industry, we are still working towards improving the security of Asynchronous Composability. The absence of certain advanced features in the interoperability space can be attributed to the increasing complexity of implementation as we progress from Synchronous Composability to Atomic Execution. Each step along this path presents significant technical challenges that must be overcome to ensure secure and seamless communication between disparate blockchain systems.

Let us first look into the stakeholders of Superchain that are demanding these solutions and understand the need for such solutions better.

Users, dApp Builders, and Chain Builders

Users

All of us here are building for end users. We are all providing value to end users in one way or another. Users can earn yield, deposit collateral to borrow cash, trade tokens or real estate prices, pay for an NFT using the OP token, and do many more things on-chain if they have assets on that chain. But if that requires them to find a bridge, swap to the bridgeable asset, sign a transaction on that chain, and wait for 2-5 mins before they can interact with the protocol they initially wanted to use, users might give up somewhere in the middle.

There are many factors involved in a user’s expectations of a dApp. In this section, we will go through some aspects of using a chain that matter the most to them in terms of User Experience, Costs, and Security.

User Experience

- Speed: Users expect transaction confirmation and finality to be fast.

- Transaction Failures: Users expect successful execution of transactions with very few failures. A pre-confirmation is good enough despite the trust involved, as long as it does not revert too often.

- Ease of Onboarding: A user expects onboarding to be fast, simple, and smooth. Whether they have fiat in their bank, Crypto on a CEX, Crypto on a different chain, or Crypto inside Superchain but on a different rollup, they should be able to use any dApp on-chain.

- Wallet Infrastructure: A user expects simple transaction confirmation and probably delegation of control to a third party for repeated actions. This can be things like a simple EOA wallet that requires them to sign transactions but asks for chain switches, smart accounts that allow them to delegate part of the control to a third party, or embedded wallets that abstract away everything.

Costs

Users expect all these costs to be very cheap, of course.

- Onboarding Costs: Since some chains like OP Mainnet and Base are very well connected to outside chains, onramps, centralized exchanges, etc., onboarding should be easy. One factor that matters here is whether the target chain (the one the user is trying to access) is well connected to OP Mainnet through the shared sequencer. What if OP Mainnet does not want to sequence with this target chain?

- Interoperability Costs: This involves moving assets inside the Superchain. If we make interoperability very expensive, moving inside Superchain will be a hurdle for users.

- Transaction Costs: Transaction costs are localized on Superchain and ideally only inter-rollup transaction costs should be higher. But what if the obligation to join the shared sequencer increases transaction costs inside a Superchain rollup?

- MEV Costs: Swaps happening on Superchain could be exploited by the shared sequencers or builders, if there is a sequencer and builder separation. In that case, the MEV mitigation would have to happen on the application level.

Security

- Security of Assets: Users expect their bridged assets secured by the underlying interoperability protocol to be as secure as the native assets. Plus, bridged assets are especially tricky on Optimistic rollups as rollbacks might lead to a significant loss in certain designs (or gain, if lucky).

- Censorship Resistance: Users expect enough decentralization to not be censored by the shared sequencer or any other entity (builders or proposers).

- Trustless Exit: Users expect to be able to exit to Mainnet or other chains if there is an upgrade to the core protocol they don’t agree with or they are being censored by the sequencers.

- Finality: Although not thought about much, a user does not expect the chain to reorg or roll back under any circumstances.

Let us use this framework to compare user expectations and the reality between Solana and the OP Stack Chain ecosystem.

| Expectations | Solana | OP Stack Chains in the current stage |
|---|---|---|
| Speed | Instant | Instant |
| Transaction Failures | Highly Likely | Comparatively Low |
| Ease of Onboarding | Easy | Good to very bad UX depending on where users have their assets |
| Wallet Infrastructure | Simple | Complicated |
| Onboarding Costs | Low | Relatively higher |
| Interoperability Costs | – | Relatively higher |
| Transaction Costs | Very low | Relatively higher |
| Security of Assets | Secure | Third-party bridge security. Low. |
| Censorship Resistance | Relatively less prone to censorship | Highly prone to censorship |
| Trustless Exit | Sovereign Chain | Not live yet |
| Finality | Fast Finality | 7-day delay |

dApp Builders

Builders, like users, have several expectations from the chain they are deploying on. One can say that dApp builders have all the expectations in the above section plus more of their own. Whether they need composability to build on top of an existing protocol on a different rollup or easy access to millions of users from well-known chains like Base or OP Mainnet, it is a rollup’s responsibility to be attractive for talented developers to build on. Developers and dApp builders will be used interchangeably going forward. Let’s define some expectations devs would have from our interoperability stack.

Composability

- Ease of Token Bridging: The ease of bridging yield-bearing assets like yETH to various Superchain rollups enables builders to build a lending market for those assets. It would be ideal to have tokens fluidly move across all Superchain rollups to avoid path dependencies using burn/mint token standards like xERC20, OFTs, ITS, NTTs, etc.

- Cross-Chain State Verification: Builders expect to be able to read and verify the state of a different chain without having to initiate a message from that chain, for instance, if there is a collateral locked on a chain that needs to be verified on another chain to allow borrowing against that collateral.

- Atomic Inclusion: Builders would expect to have the ability to include burn/mint-like transaction pairs through shared sequencers to have secure cross-chain bridging.

- Atomic Execution: Atomic Execution is a big unlock for dApp builders who are building applications that depend on multiple Superchain rollups. It enables flashloans across chains and the matching of a coincidence of wants between chains, something unthinkable in the current state of rollups. Many loans on Ethereum are stuck there despite lower interest rates on L2s because moving loans is not possible without cross-chain flashloans.

Standards

- Messaging Standards: Developers would benefit a lot from cross-chain messaging standards. IBC helped developers a lot in the Cosmos ecosystem as it was widely adopted. Transport layer standards like LayerZero V2 and Polymer’s vIBC help developers use battle-tested code rather than building these transport layers from scratch.

- Freedom to Choose: The other side of it is not forcing developers to abide by any of these standards. Plus, competition among the messaging standards is healthy. It forces them to build better modules for their transport layers. Even an endorsement by Superchain might create an uneven playing field.

Misc. Tools

- Native Account Abstraction: Native AA enables developers to create a gas-abstracted dApp experience for their users. It is also possible to fetch any user assets across chains using account abstraction to offer a seamless cross-rollup onboarding experience.

- Inter-chain Accounts: This is a feature available in the Cosmos/IBC land. Inter-chain accounts allow users to control a dApp on a different rollup by just interacting with the rollup that the user is connected to. This will be a game changer especially if a rollup is non-EVM, thus would have a different wallet address than the Base or OP Mainnet Address.

- RPC Management: Developers would be happy to have a system where they are interacting with a single RPC endpoint for Superchain instead of a hundred different endpoints.

| Expectations | Solana | OP Stack Chains in the current stage |
|---|---|---|
| Ease of bridging tokens | Single chain | Only available through trusted third-party bridges |
| Cross-Chain State Verification | The on-chain state is accessible | Not accessible right now. |
| Atomic Inclusion | Native | Not available |
| Atomic Execution | Native | Not available |
| Messaging Standards | – | Fragmented Standards |
| Freedom to Innovate | – | Free to innovate |
| Native Account Abstraction | Available | Available |
| Inter-chain Accounts | – | Not available |
| RPC Management | Single RPC | Multiple RPCs |

Chain Builders

Chain builders would have some demands from Superchain as well along with all the above demands from dApp Builders and Users, as they are their customers. Chain builders building on Superchain would have several reasons to build a rollup they operate. To attract top-notch chain builders, Superchain would have to offer many things like interoperability without sacrificing sovereignty and the ease of running a chain. Let’s look at what these chains care about in terms of interoperability. So far all other stakeholders only had positive effects of interoperability. But the rollup builders are the only ones that might have some negative consequences.

- Sovereignty: Chain builders expect full sovereignty over the sequencer to allow and disallow certain actors from interacting with their rollups. They want to control the spectrum of censorship. They also expect the sovereignty to join and exit Superchain.

- Block-Building: Rollups might prefer to build their own blocks with their own fair-ordering rather than having their blocks built by third-party block-builders. These block-builders are likely to maximize MEV extraction.

- Dependency Sets: Rollups should be able to choose which chains they are sequenced with. This is crucial because if a chain that a rollup is sequenced with rolls back, the rollup has no choice but to roll back as well, because the states are interdependent.

- Liveness and Decentralization: Chain builders expect to have the shared sequencer system be live at all costs. Chain builders, if they are to trust a shared sequencer, would prefer it to be a decentralized, permissionless system with a robust consensus algorithm.
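The concern behind dependency sets can be sketched as a graph traversal: if one chain rolls back, every chain whose state depends on it, directly or transitively, must roll back too. The function name `rollback_set`, the `dependents` mapping, and the rollup names below are hypothetical and used only for illustration.

```python
from collections import deque
from typing import Dict, Set


def rollback_set(dependents: Dict[str, Set[str]], origin: str) -> Set[str]:
    """Return every rollup that must roll back if `origin` rolls back.

    `dependents[x]` lists the rollups whose state depends on rollup `x`.
    """
    affected = {origin}
    queue = deque([origin])
    while queue:
        chain = queue.popleft()
        for dep in dependents.get(chain, set()):
            if dep not in affected:
                affected.add(dep)
                queue.append(dep)
    return affected


# Hypothetical topology: B depends on A, C depends on B, D is independent.
dependents = {"A": {"B"}, "B": {"C"}, "D": set()}
must_roll_back = rollback_set(dependents, "A")
```

In this toy topology a rollback on A drags B and C along with it, while D, which chose not to share state with A, is unaffected. This is why chain builders want control over their dependency set.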

Let’s look at how these things compare to the existing systems.

| Expectations | Solana | OP Stack Chains in their current form |
|---|---|---|
| Sovereignty | – | Fully sovereign right now |
| Block-Building | Decentralized | Rollups have the autonomy |
| Dependency Sets | – | Freedom to choose |
| Liveness and Decentralization | Relatively lower liveness guarantee due to the high-performant nature of the chain | Rollups are responsible themselves right now |

Interoperability So Far – The Era of Asynchrony

Now that we know the expectations of Superchain’s stakeholders, let us look into different ecosystems of chains to see how they have met their stakeholder expectations. We have to mention that there is not a single ecosystem of L2s that has solved interoperability in production at the time of writing.

Cosmos and IBC

IBC is an asynchronous and trustless messaging protocol widely used in the Cosmos ecosystem. IBC is said to have the most robust messaging system in the market. Although popular in the Cosmos ecosystem, it can be connected to any blockchain through an IBC implementation.

By standardizing the transport layer, IBC pushes builders to innovate on the verification and application layers of the Interoperability Stack. A chain can interoperate with another chain by adding IBC modules to its implementation and then plugging light clients of the counterparty chains into IBC. A light client helps one chain verify the state and consensus of another chain before processing a message from it. Light client-enabled IBC connections rely on the security of the underlying chains themselves, which is why IBC between Cosmos chains is considered to be the most secure messaging system. IBC has not seen adoption outside the Tendermint ecosystem because it benefits from the homogeneity of Cosmos chains, which all use Tendermint/Comet consensus. It is much easier for a Tendermint chain to implement a light client of another Tendermint chain and verify its state than to do the same for chains with other consensus mechanisms.

Light clients here work as the verification layer of the stack. It is possible to switch this to any other verification system as well, be it a multisig or a PoS chain. These different verification systems are referred to as “Clients” in the IBC stack. “Connections” are created between two chains to create awareness of the link between them. Once Connections are live, any dApp or protocol can create “Channels” between the two chains to pass any arbitrary data (e.g., token transfers, smart contract calls, or data feeds) in a standard manner. All of these features make up the transport layer of the stack. This is why IBC-like protocols are so powerful.

As an ecosystem, IBC/Cosmos has nailed the standards not just in the transport layer, but also in the application layer; although they might not be as widely adopted as the standards in the transport layer. Application layer standards include the ICS-20 token standard that is similar to omni-chain token standards like OFTs, xERC20, etc. There is also IBC Middleware, which is IBC modules in the application layer that provide some features like acknowledgments, timeouts, etc.

Stakeholder Expectations Met by IBC

Users: The user experience is not ideal, because of the need to bridge across chains that have different addresses with significant bridge delays and interoperability costs. The tokens on IBC are path dependent, which means, tokens bridged from chain A → B → C are not fungible with a token bridged from chain A → D → C. Security of the tokens could be compromised if a chain’s validator set is compromised. But the relatively cheap and secure bridging is fantastic for users, better than anywhere else. On-chain costs are relatively low compared to other ecosystems due to the fragmented nature of demand for blockspace. Users get instant finality as a cherry on top.

dApp Builders: This is the ideal stack for dApp builders as they benefit from the standards put in place by the community. Plus, the IBC Transport Layer has versioning of the verification methods that allow updating all the instances of IBC on all the chains in tandem. Most tokens are bridged to Osmosis for trading and the dApp developers are not concerned about security like in other ecosystems. But there is no atomic inclusion (or execution), thus limiting cross-chain innovation while fragmenting liquidity and users.

Chain Builders: Maximum amount of sovereignty. Low-cost infrastructure to support secure interoperability. Autonomy to choose the chains to interoperate with, plus, to not be contingent on the state of another chain. However, the lack of liquidity and the difficulty of attracting users due to the interop UX has been an issue for chain builders.
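The path dependence mentioned for users above follows from how ICS-20 names bridged tokens: the voucher denomination is derived from a hash of the full trace path, so the same base asset arriving over different routes gets different denominations. The sketch below follows that style; the channel identifiers are made up for illustration and the helper `ibc_denom` is a hypothetical name, not part of any IBC library.

```python
import hashlib
from typing import List


def ibc_denom(hops: List[str], base_denom: str) -> str:
    """Derive a voucher denom from a trace path, in the style of ICS-20.

    `hops` lists the receiving channel of each hop in travel order.
    """
    # The most recent hop is prefixed first,
    # e.g. "transfer/channel-7/transfer/channel-0/uatom".
    prefixes = [f"transfer/{channel}" for channel in reversed(hops)]
    trace = "/".join(prefixes + [base_denom])
    return "ibc/" + hashlib.sha256(trace.encode()).hexdigest().upper()


# Hypothetical routes for the same base asset arriving on chain C.
via_b = ibc_denom(["channel-0", "channel-7"], "uatom")  # A -> B -> C
via_d = ibc_denom(["channel-3", "channel-9"], "uatom")  # A -> D -> C
fungible = via_b == via_d
```

Since the two routes hash different trace paths, `fungible` is false: chain C treats the two vouchers as distinct assets even though both are backed by the same token on chain A.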

One thing to take away from the Cosmos ecosystem is that a secure, native interoperability layer makes it a no-brainer for dApp builders to bridge their tokens. Polkadot’s XCM and Avalanche’s AWM are similar to IBC in this respect. So our takeaway is that base layer security could be game-changing for Superchain.

Fragmentation of Ethereum/EVM Interoperability

The one thing that fascinates me and frustrates me about the Ethereum and EVM ecosystem is that there are no standards that are widely adopted by any sub-section of the community when it comes to interoperability. We have competing standards and aggregation of competing standards, which is a new standard by itself.

Since the shift in Ethereum’s roadmap to a rollup-centric future, we have seen the number of rollups explode. Even before that, EVM-based alt L1s like BNB Chain, Polygon, Avalanche, and Fantom have led to a lot of demand for interoperability in the EVM space. It is futile to learn about the history of EVM Interoperability. Let’s dive into the status quo of EVM Interoperability instead.

As we discussed in the Token Bridges and Messaging Bridges sections, messaging bridges have fully taken over the space. There are very few bridges that are pure token bridges; most are built on messaging bridges. These are the different kinds of messaging bridges, ordered from the most trusted to the most trust-minimized, that is, from the least secure to the most secure:

- A single validator bridge

- A multi-sig

- Proof of Authority bridges

- Proof of Stake bridges

- Optimistic Bridges

- zk-light client bridges

- Light Client Bridges

- L1 ↔ L2 Native Bridges

With bridges, security is a spectrum, or rather trust is a spectrum. From fully trusted, to trust maximized, to trust minimized, to fully trustless, we have all kinds of bridges, each reasonably priced. Extreme security is expensive, and not everyone needs it. A user transferring $10 between BNB Chain and Polygon does not need a light client bridge to secure that transaction; a simple single validator bridge is sufficient. That is another reason to use bridge aggregators, which route a user transaction through the right bridge based on its size.
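As a rough illustration of size-based routing, an aggregator could require a minimum security tier for a given transfer amount and then pick the cheapest bridge that meets it. Everything in this sketch is an assumption made for illustration: the tier scale, the dollar thresholds, the fees, and the names `Bridge`, `required_tier`, and `pick_bridge` do not describe how Jumper, Bungee, or any other aggregator works.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Bridge:
    name: str
    security_tier: int   # higher means more trust-minimized (hypothetical scale)
    flat_fee_usd: float  # hypothetical cost per transfer


def required_tier(amount_usd: float) -> int:
    """Map transfer size to a minimum security tier (thresholds are made up)."""
    if amount_usd < 1_000:
        return 1
    if amount_usd < 100_000:
        return 3
    return 5


def pick_bridge(bridges: List[Bridge], amount_usd: float) -> Bridge:
    """Choose the cheapest bridge that is secure enough for this amount."""
    minimum = required_tier(amount_usd)
    eligible = [b for b in bridges if b.security_tier >= minimum]
    if not eligible:
        raise LookupError("no bridge meets the required security tier")
    return min(eligible, key=lambda b: b.flat_fee_usd)


bridges = [
    Bridge("single-validator", security_tier=1, flat_fee_usd=0.10),
    Bridge("pos-bridge", security_tier=3, flat_fee_usd=1.00),
    Bridge("light-client", security_tier=5, flat_fee_usd=8.00),
]
small = pick_bridge(bridges, 10).name
medium = pick_bridge(bridges, 50_000).name
large = pick_bridge(bridges, 1_000_000).name
```

The $10 transfer lands on the cheap single validator bridge, while the largest transfer is forced onto the light client bridge despite its cost.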

LayerZero, Hyperlane, Axelar, Wormhole, Socket, Polymer and more

The EVM interoperability space has taken inspiration from IBC and is moving towards a modular interoperability stack as well. All of the above protocols have many similarities to IBC. They essentially standardize the transport layer and let dApp builders choose their own verification layers. The transport layer exposes on-chain interfaces to initiate cross-chain messages, which are verified by the verification methods of choice and relayed to the other chain by the relaying infrastructure. By standardizing it, all these protocols are pushing innovation onto the verification and application layers.

With these standard interfaces to interact with, dApp builders can choose a transport layer they are comfortable with and switch to more secure and cheaper verification layers as they come out, hoping that the transport layer implements state-of-the-art verification layers as they become available. Even if it does not, dApp developers can technically plug in any verification layer themselves with some effort and auditing costs, although this is undesirable. It is helpful to think of transport layer implementations as training wheels for dApps at this moment. As more secure light client or zk-light client verification layers come out, dApps can switch over to them.

There are a lot of differences between these transport layers and their features as well. Polymer offers versioning of all the verification methods set at a time and lets developers maintain these versions across all chains. LayerZero offers Pre-crime, a feature that allows detecting malicious transactions before they happen; Axelar and Wormhole have similar features too. While most of these transport layers require permission to deploy on a new chain, Hyperlane is one of the only messaging protocols that allow permissionless deployment of the interfaces on any chain out there. Comparing these transport layers individually is out of scope for this report, but it is ideal to let developers choose their own transport layers.

The difference between all these protocols and IBC+Cosmos is that IBC has native light client verification between all the Tendermint Consensus chains. It is not viable to build light-client bridges across all chains because each direction of bridging requires a unique light-client implementation. Ethereum → Cosmos would need a light client on Cosmos that can verify Ethereum blocks, and Cosmos → Ethereum requires a different light client implementation. So, we will need n(n-1) light clients for n connected chains.
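A quick calculation shows how fast the n(n-1) figure grows. This is a minimal sketch of the arithmetic only; the function name is hypothetical and it assumes every chain needs its own light client of every other chain.

```python
def light_clients_needed(n: int) -> int:
    """Count directed chain pairs: each of n chains verifies the other n - 1."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1)


counts = {n: light_clients_needed(n) for n in (2, 5, 10, 50)}
# counts == {2: 2, 5: 20, 10: 90, 50: 2450}
```

Going from 10 to 50 connected chains multiplies the number of chains by 5 but the number of light clients by more than 27, which is why pairwise light-client bridging does not scale across heterogeneous chains.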

Since most verification methods under these transport layer protocols sit somewhere along the trust spectrum, it is a no-brainer to combine them to offer better security.

Multi-Message Aggregation (MMA) and Messaging Standards

The Uniswap Foundation reported on the state of bridges in August 2023, when Uniswap needed a cross-chain governance solution to control its deployment on the BNB chain. The report concluded that there is a dire need to build the Multi Message Aggregator (MMA), an aggregation layer on top of the existing bridges in the market where a quorum of m out of n bridges is required to validate a cross-chain message. This ensures that if one of the bridges gets hacked, the system is still secure and Uniswap can swap that bridge out for another one.

LayerZero V2 has a similar architecture to MMA with its DVNs (Decentralized Verifier Networks), where a quorum of up to 31 DVNs can be used to validate a cross-chain message. DVNs are essentially different verification methods integrated by LayerZero and others. Some of these include LayerZero’s competitors, like Axelar. We can expect more and more of these m/n quorum interoperability solutions to come up in the future. Hyperlane supports this as well.
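The m-of-n quorum idea can be sketched in a few lines. This is an illustrative model only, not the MMA or LayerZero implementation; the `Attestation` type, the bridge names, and `quorum_reached` are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Set


@dataclass(frozen=True)
class Attestation:
    bridge: str        # hypothetical bridge / verifier name
    message_hash: str  # hash of the message this bridge claims was sent


def quorum_reached(message_hash: str,
                   attestations: Iterable[Attestation],
                   allowed_bridges: Set[str],
                   threshold: int) -> bool:
    """Accept a message only if at least `threshold` distinct allowed bridges attest to it."""
    approving = {
        a.bridge
        for a in attestations
        if a.bridge in allowed_bridges and a.message_hash == message_hash
    }
    return len(approving) >= threshold


if __name__ == "__main__":
    bridges = {"bridge-a", "bridge-b", "bridge-c"}
    seen = [Attestation("bridge-a", "0xabc"), Attestation("bridge-b", "0xabc")]
    print(quorum_reached("0xabc", seen, bridges, threshold=2))
```

With a 2-of-3 quorum, a single compromised bridge attesting to a forged message cannot get it accepted on its own, and a bridge can be swapped out by changing the allowed set.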

To be clear, there have been messaging standards in the space to standardize the messaging interfaces. In fact, Uniswap Foundation chose to build MMA using the ERC-5164 standard, which is created for the EVM chains. And we also have ERC-6170 that supports non-EVM chains along with EVM. However, these standards have not seen wide-scale adoption. Uniswap Foundation’s MMA is not live for Uniswap Governance at the time of this writing either.

Many of the above protocols are not just competing on the transport layer, but also on the application layer with competing token standards as it allows lock-in benefits with the transport layer.

OFTs, xERC20, NTT, ITS, Warp Routes, and more

OFTs from LayerZero, xERC20 from Connext (although open source and agnostic), NTT from Wormhole, ITS from Axelar, and Warp Routes from Hyperlane are all omni-chain token standards that fluidly move across the supported chains through a burn and mint mechanism. This solves the path dependency problem present in the Cosmos ecosystem (i.e., A → B → C ≠ A → D → C). These token standards are helpful for dApp builders because they are battle-tested, and they are easier for users to understand because there won’t be multiple versions of the same token on a given chain.
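The path independence of burn-and-mint bridging can be illustrated with a toy ledger. This is a simplified sketch that ignores verification entirely; `OmnichainToken` and `route` are hypothetical names, not part of any of the standards above.

```python
from typing import Dict, List


class OmnichainToken:
    """Toy burn-and-mint token: one canonical balance ledger per chain."""

    def __init__(self, chains: List[str]) -> None:
        self.balances: Dict[str, Dict[str, int]] = {chain: {} for chain in chains}

    def mint(self, chain: str, holder: str, amount: int) -> None:
        ledger = self.balances[chain]
        ledger[holder] = ledger.get(holder, 0) + amount

    def burn(self, chain: str, holder: str, amount: int) -> None:
        ledger = self.balances[chain]
        if ledger.get(holder, 0) < amount:
            raise ValueError("insufficient balance to burn")
        ledger[holder] -= amount

    def bridge(self, src: str, dst: str, holder: str, amount: int) -> None:
        # Burn on the source chain, mint the same amount on the destination chain.
        self.burn(src, holder, amount)
        self.mint(dst, holder, amount)

    def total_supply(self) -> int:
        return sum(sum(ledger.values()) for ledger in self.balances.values())


def route(path: List[str], amount: int = 100) -> OmnichainToken:
    """Mint on the first chain of `path`, then bridge hop by hop to the last one."""
    token = OmnichainToken(["A", "B", "C", "D"])
    token.mint(path[0], "alice", amount)
    for src, dst in zip(path, path[1:]):
        token.bridge(src, dst, "alice", amount)
    return token
```

Whether the tokens travel A → B → C or A → D → C, the holder ends up with the same canonical token on C, and total supply across chains stays constant.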

There are differences between these token standards. Some token standards like OFT support m/n quorum security, xERC20 has a way to limit the number of tokens bridged per verification method per day, etc. Warp Routes and xERC20 are agnostic to the chain dApp builders wish to bridge to, whereas OFTs cannot be bridged to a chain LayerZero is not deployed on.

Superchain does not need to choose one or the other token or messaging standard here. The dApp developers should have the autonomy to choose the token standard that works for their applications.

Stakeholder Expectations Met by Ethereum’s Fragmented Interoperability

Users: The Ethereum ecosystem faces a lot of challenges in terms of user experience and onboarding, especially onto a new rollup. Users have had to trust third-party bridges between rollups in the past, but this is getting better with better transport layer standards. Bridging between rollups has become faster (around 10 seconds), down from 2-10 minutes on average in the past, a wait that adds up to decades across all users. The wallet infrastructure is also improving. In the context of burn/mint bridges, there is a possibility of double spending due to rollup rollbacks, but it has not happened so far.

dApp Buidlers: The dApp builders are puzzled by the tens of bridging standards in the market. Bridging their tokens is not simple, as they have to weigh the pros and cons of each. Atomic inclusion and execution are not possible. It is not even possible to verify the state of a different rollup, even though they all share the same data availability and consensus layer. But research is being done in this direction at the moment.

Chain Builders: Rollup builders right now are the happiest in terms of the available liquidity and millions of existing users in the Ethereum ecosystem. Sovereignty is celebrated in the ecosystem as well.

Even with all the issues, Ethereum is still the most vibrant ecosystem in the market. There is so much innovation happening here that many other ecosystems can learn from it, and already have.

One major takeaway Superchain can have from this section would be that transport layers are key to interoperability. dApp developers can use any of the existing transport layers between Superchain rollups to bridge their tokens via third-party verification systems for the time being and when Superchain comes out with a more secure, native verification layer via shared sequencing, they can switch over to that. Superchain can have the best of both the above systems, Ethereum’s transport layer competition and Cosmos’ secure and native verification layer.

Even without atomic inclusion and execution between rollups, the Ethereum ecosystem has been innovating to mimic the monolithic experience on the application layer via intents. There are some things we can learn from this new paradigm and help accelerate it through base-layer improvements.

Intents and the Application Layer Abstractions

Intent-based cross-chain protocols, in a nutshell, let users express their intent to swap to a specific token on any supported chain and allow solvers or third parties to fill these intents on another chain. The solvers compete with each other on price or speed to be the ones to fill an intent. Once filled, this event is then proved on the source chain to unlock the user funds for the solver. Intent-based protocols are a version of liquidity bridges.

What is interesting here is the proving part. To prove a filled intent on the source chain, these protocols can use a messaging bridge. So, essentially, the user is trusting the messaging bridge to not steal their funds without fulfilling their intents. It is also possible to use storage proofs to verify an intent fill, maybe once storage proofs between rollups are live.
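The lock, fill, prove, and unlock flow can be sketched as follows. This is a hypothetical model, not any specific protocol: `SourceEscrow`, `Intent`, and `FillReport` are made-up names, and `verify_fill` stands in for whatever verification layer (messaging bridge or storage proof) reports the fill back to the source chain.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Intent:
    user: str
    amount_in: int        # funds locked on the source chain
    min_amount_out: int   # minimum the user wants on the destination chain
    settled: bool = False


@dataclass(frozen=True)
class FillReport:
    intent_id: int
    solver: str
    amount_out: int       # what the solver delivered on the destination chain


class SourceEscrow:
    def __init__(self, verify_fill: Callable[[FillReport], bool]) -> None:
        self.verify_fill = verify_fill  # assumption: backed by a messaging bridge or proof
        self.intents: Dict[int, Intent] = {}
        self.solver_balances: Dict[str, int] = {}

    def open_intent(self, intent_id: int, intent: Intent) -> None:
        self.intents[intent_id] = intent

    def claim(self, report: FillReport) -> bool:
        """Release the user's locked funds to the solver once the fill is proven."""
        intent = self.intents.get(report.intent_id)
        if intent is None or intent.settled:
            return False
        if not self.verify_fill(report):
            return False  # the verification layer did not confirm this fill
        if report.amount_out < intent.min_amount_out:
            return False
        intent.settled = True
        self.solver_balances[report.solver] = (
            self.solver_balances.get(report.solver, 0) + intent.amount_in
        )
        return True
```

Everything hinges on `verify_fill`: if it can be made to return true for a fill that never happened, the locked funds can be taken, which is exactly the trust the user places in the messaging bridge.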

It all comes down to the verification layer. Some intent-based protocols in the market are Across, DLN, Router, SynapseX, UniswapX, etc. Across uses its optimistic oracle bridge, DLN uses the deBridge messaging protocol, and Router and SynapseX use their own PoS chains for messaging. UniswapX is not live for cross-chain yet, so we don’t know what it will use.

Superchain can offer instant cross-chain intent execution by offering message passing with Atomic Execution. With that, in theory, a user can go from any token on any rollup to another token or directly into a DeFi protocol on any other rollup inside Superchain. Everything in between is abstracted away from the user. This is the monolithic experience Superchain can offer to its users.

Challenges with the Current Intent-based Protocols

We have not discussed MEV until now because so far we have looked into message passing and simple token transfers. It is important to note that the ordering of messages is up to the transport layer protocols and this might lead to some MEV extraction. IBC employs a fair ordering system, while other EVM transport layer protocols have their own systems for ordering messages.

Simple token transfers are not usually prone to cross-chain MEV extraction as the user is minting the exact number of tokens they burned. But cross-chain intent protocols that involve complex actions emit some MEV in the form of EV_Signal as the user is expressing their intent on-chain for the public to see. Without cross-chain atomic execution, a user’s intent needs to be public on the source chain. If the intent involves a cross-chain swap of a substantial amount, it is a signal to the whole world that a solver might be swapping on the destination chain in a few seconds. This problem is discussed in detail in the CAKE Framework Research piece by Frontier.Tech.

Atomic execution solves this as the solver will be performing the swap in the same transaction as the claim transaction on the source chain. But the biggest unlock would be CoWs (Coincidence of Wants) across chains. CoWs are not possible without Atomic Execution.

With so much weight behind this, Superchain must make changes to its base layer to enable atomic execution in a secure and trustless manner. We believe it is possible to have synchronous atomic composability between not just L2s but also with L1. Hint: Based Sequencing. Just need to read through a few more sections.

Before we look into how atomic execution can be achieved, let us look into Storage Proofs, a unique solution that solves for n→1 connections that are crucial for governance and lending inside Superchain.

Storage Proofs with Moon-Math

Storage proofs are a combination of inclusion proofs, proofs of computation, and zero-knowledge proofs that enable smart contracts to securely and efficiently access and verify a wide range of on-chain data. They enhance data integrity, security, efficiency, scalability, and flexibility within the Ethereum ecosystem. In the context of cross-chain, the receiving chain can independently verify the storage proof against the provided block hash or accumulator root, without relying on any intermediary or trusted third party.

The costs of generating proofs and verifying them are unknown as these solutions for rollups are yet to be launched. There is one bridge from Ethereum to Gnosis that verifies Ethereum’s consensus on the Gnosis Chain using zk-proofs. Here is some more information on how it works.

Storage Proofs offer a unique solution to some of the problems faced in cross-chain applications. There is no need to initiate a message on the source chain in this case. This is the most efficient way for cross-chain applications that are looking to verify states of multiple chains at once, the n→1 connections.

Take governance for example. Many tokens in the market right now are omni-chain tokens. But most of these protocols with omni-chain tokens would like to have governance on a single chain. A user would have to send messages from all the chains where they hold tokens to the governance chain in order to vote on proposals. This is inefficient and expensive. Instead, by using Storage Proofs, it is possible to create a proof of ownership on all those chains and prove them all at once on the governance chain. This is a lot more efficient as the proofs are generated off-chain.
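The governance example can be sketched with plain Merkle inclusion proofs. This is a deliberate simplification: real storage proofs target Ethereum's Merkle-Patricia tries and are often wrapped in zk proofs, whereas the sketch below uses a binary SHA-256 tree. All names (`leaf_for`, `root_and_proof`, `voting_power`) are hypothetical, and the governance chain is assumed to already trust one state root per source chain.

```python
import hashlib
from typing import Dict, List, Tuple

Proof = List[Tuple[bytes, bool]]  # (sibling hash, sibling_is_on_right)


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_for(account: str, balance: int) -> bytes:
    return f"{account}:{balance}".encode()


def root_and_proof(leaves: List[bytes], index: int) -> Tuple[bytes, Proof]:
    """Built off-chain: Merkle root of `leaves` plus an inclusion proof for leaves[index]."""
    level = [_h(leaf) for leaf in leaves]
    proof: Proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        proof.append((level[index ^ 1], index % 2 == 0))
        level = [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify(leaf: bytes, proof: Proof, root: bytes) -> bool:
    node = _h(leaf)
    for sibling, sibling_on_right in proof:
        node = _h(node + sibling) if sibling_on_right else _h(sibling + node)
    return node == root


def voting_power(account: str,
                 claims: List[Tuple[str, int, Proof]],
                 trusted_roots: Dict[str, bytes]) -> int:
    """Checked on the governance chain: sum balances proven against each chain's root."""
    total, seen = 0, set()
    for chain, balance, proof in claims:
        if chain in seen:
            raise ValueError(f"duplicate claim for {chain}")
        if not verify(leaf_for(account, balance), proof, trusted_roots[chain]):
            raise ValueError(f"invalid proof for {chain}")
        seen.add(chain)
        total += balance
    return total
```

The voter sends no message from any source chain; they only submit proofs, generated off-chain, to the governance chain, which checks them all in one place.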

Ideally, these proofs require consensus validation from all those chains, but this might not be required for rollups as they all operate under Ethereum consensus. This could be an important solution for Superchain as we will probably see a lot of tokens that move around inside the ecosystem for various reasons.

Everything we have explored so far offers asynchronous composability. No solution in the market offers synchronous atomic composability at the time of writing. Let us talk about an application layer cross-chain dApp that benefits tremendously from synchronous atomic composability.

On-going Research for Synchrony

Shared Sequencers

If there is a way for rollups to offer the monolithic experience, it has to involve shared sequencers or some version of sharing a sequencer set across rollups. With SSF (Single Slot Finality) on the horizon, we can optimistically build towards a shared sequencer network that can offer us atomic execution of transactions with near-instant finality (36 seconds for full finality).

There are two main reasons for rollups to start sequencing together.

- One of them would be if they wanted to share resources and outsource collection, ordering, decentralization, and consensus of the sequencer layer to focus on the VM innovation and governance of the rollups.

- But the main reason we are interested in it is because it can support interoperability between rollups and achieve atomic inclusion and/or execution.

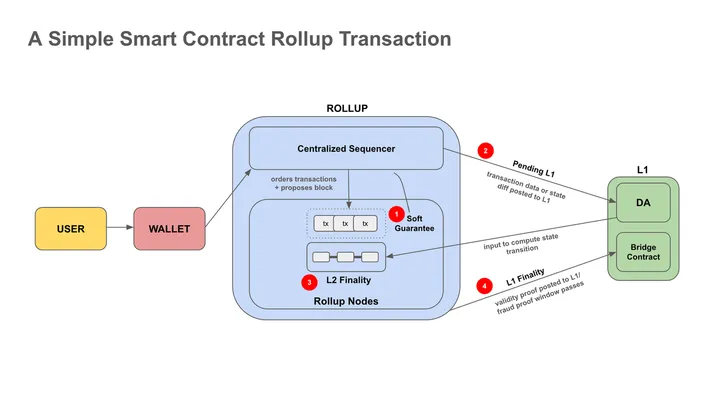

Briefly, an Optimistic Rollup’s transaction life cycle works like this.

Source: Archetype

- The user signs a transaction on an L2 and sends it to the sequencer

- A sequencer aggregates multiple transactions, orders them, and posts them on the DA layer.

- A rollup node picks up these transactions, executes them, and submits the resulting state transition to the rollup contract on Ethereum. At the same time, a fraud-proof window opens up for verifiers to challenge invalid state transitions.

- If no one challenges the state transition in the 7-day time period, the state transition is considered final.

Note: A sequencer also assumes the role of a block builder in the current system, although it is not required to.

In this system, a sequencer can be replaced by a shared sequencer that aggregates transactions from multiple rollups instead of just one. This is important because a shared sequencer can then include cross-chain transactions for multiple rollups on concurrent blocks.

A shared sequencer is like any other sequencer, except that it orders transactions for multiple rollups instead of a single rollup.

Here are the features a shared sequencer can offer:

Message Transfers: If a cross-chain transaction is initiated on Rollup A and is meant for Rollup B, the shared sequencer can give an assurance that they will include a corresponding message on Rollup B with the right callData to execute the subsequent cross-chain transaction. This is how a shared sequencer can offer cross-chain messaging. The sequencer, in essence, becomes a messaging bridge.

Atomic Inclusion: A shared sequencer can also offer another assurance that either both of the transactions are included or none of the transactions are included. Useful for simple burn/mint bridging.

Atomic Execution: An additional assurance that ensures execution of both or none. Sequencers cannot guarantee atomic execution because they often don’t run full nodes of a rollup and cannot predict the state transition after some transactions. Useful for DeFi actions like cross-chain swaps, cross-chain deposits, etc.

Synchronous Atomic Composability: A shared sequencer can also offer to include two atomic transactions into two blocks of different rollups at the exact same time as they would have the ability to produce blocks for both rollups. Useful for complex DeFi Actions like arbitrage, flashloans, etc.

But what is the guarantee that the shared sequencer provides all these services in a trustless manner? In theory, a shared sequencer could maliciously do the following:

- Submit a malicious transaction and call the destination rollup cross-chain contract.

- Not include one of the atomic inclusion transactions thus double spending their tokens.

- Ensure that one of the cross-chain transactions fails to extract money in a cross-chain swap.

- Break synchronous composability to perform arbitrage themselves.

In IBC, a cross-chain message is validated by the consensus of a chain. A user is trusting the decentralization and the economic security of a Cosmos SDK chain itself.

But in this case of Superchain, if the shared sequencer is a single entity, then the rollups are trusting them to act in good faith. If there is a decentralized set of shared sequencers, the rollups are trusting the consensus of this network. Are there better ways to achieve this? Can we use the fraud-proof system and penalization of malicious behaviors to minimize the trust in the shared sequencer?

Several proposed solutions try to solve this. Let’s look at them one by one.

Shared Validity Sequencing – Umbra

This design, proposed by Umbra Research, achieves synchronous atomic execution with minor changes to the rollup contracts and the fraud-proof system.

The solution involves having copies of a system contract called GeneralSystemContract on all rollups. Each copy contains two MerkleTree variables. One MerkleTree, called triggerTree, stores all the transactions sent from a rollup. The other, called actionTree, contains all the transactions received by a rollup.

A user can send a message from one rollup to another by adding a transaction hash with the destination rollup ID to the triggerTree. A shared sequencer is responsible for watching this smart contract to execute a smart contract call on the destination chain along with adding the same transaction hash to the actionTree.

With this setup, the Merkle roots of triggerTree and actionTree should match, thus ensuring secure cross-chain messaging. This clause is added to the fraud-proof mechanism of both the rollups and can be challenged by anyone if the Merkle Trees don’t match. If a mismatch is proved, the sequencer is penalized and both chains are rolled back.
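The root-matching check can be sketched as below. This is a minimal illustration assuming a simple binary SHA-256 Merkle tree that also commits to the leaf count; the actual tree construction in the Umbra design may differ, and `merkle_root` and `is_challengeable` are hypothetical names.

```python
import hashlib
from typing import List


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: List[bytes]) -> bytes:
    """Binary Merkle root that also commits to the number of leaves."""
    level = [_h(leaf) for leaf in leaves] or [_h(b"")]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    # Mixing in the leaf count keeps [a, b, c] and [a, b, c, c] from colliding.
    return _h(len(leaves).to_bytes(8, "big") + level[0])


def is_challengeable(trigger_leaves: List[bytes], action_leaves: List[bytes]) -> bool:
    """A verifier can raise a fraud proof when the two roots disagree."""
    return merkle_root(trigger_leaves) != merkle_root(action_leaves)


if __name__ == "__main__":
    sent = [b"tx1->rollupB", b"tx2->rollupB"]
    print(is_challengeable(sent, list(sent)))   # honest sequencer
    print(is_challengeable(sent, sent[:1]))     # sequencer dropped a message
```

A sequencer that drops, reorders, or forges a cross-chain message produces an actionTree root that no longer matches the triggerTree root, which is the condition the fraud-proof system is extended to detect.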

A rollup can have many one-to-one connections with different rollups by having a pair of Merkle Trees for each. One-to-many message broadcasting is also possible by batching multiple send transactions.

One caveat with this design is that it requires the shared sequencer to run full nodes of all the rollups it sequences, which might become harder and harder as more rollups are sequenced together. Rollup nodes are also required to run nodes for all the other rollups to verify cross-rollup transactions. Additionally, if a rollup is rolled back due to an invalid state, all the rollups that are sequenced with that rollup are rolled back as well. Thus, it is important to choose the rollups carefully.

Stakeholder Expectations Met by Umbra’s Shared Validity Sequencing

Users: By offering synchronous atomic execution, this design gives a monolithic experience to users. The onboarding experience depends on all the rollups that opt in and their connections to on-ramps and CEXes. The transaction costs might be higher due to the high hardware costs borne by the sequencers. Plus, MEV costs could be high as well, since the sequencer will likely be a single entity or a centralized set. On a more positive note, this design ensures maximum security for cross-chain assets. But the finality of a user transaction would be frailer than ever before.

dApp Buidlers: With synchronous composability on the table, dApp developers can build some of the best cross-chain applications out there. This system also enables FlashLoans and easy cross-chain arbitraging. The dApp developers are free to build any standards on this model. This is truly a monolithic experience. However, there is a liveness concern for DeFi applications if the single sequencer goes down.

Chain Builders: Chain builders are the most affected by this design. They would be giving away part of their sovereignty for the above benefits. They also get to choose the chains they are sequenced with to mitigate finality issues, but they should choose with care. Liveness and decentralization must also be a concern due to the heavy computational demands of the shared sequencer.

Although this design offers the sought-after monolithic experience, it makes significant tradeoffs. It is better to look at other designs that do not trade off decentralization for secure atomicity.

Optimism Interop Draft Specs

The Optimism Foundation has also been working on a solution to solve synchronous interoperability inside Superchain. This solution is similar to the Shared Validity Sequencing in terms of how the system is secured.

Optimism’s approach to solving interoperability involves a dependency set that a chain inside Superchain opts in to interoperate with. It is the list of chains that a destination chain accepts as source chains. When it comes to actual messaging, the source chains emit logs that contain cross-chain messages targeting a specific contract on another chain; these are called Initiating Messages, and each one is referenced by an Identifier. On the other hand, the sequencers on the destination chains have to listen to these events on all the rollups in their dependency sets to execute them through a system contract called CrossL2Inbox; these are called Executing Messages.

In this system, instead of a Merkle Tree where both the messages are posted like in the Shared Validity Sequencing (SVS) design above, a shared sequencer or the cooperating sequencers work in tandem to ensure all the cross-chain messages are received and executed. Similar to the SVS design, if there is a mismatch between the Initiating and Executing messages, a verifier can challenge the state via fraud proofs.
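The validity rule for an Executing Message can be sketched as follows. This is a simplified model based only on the description above, not the draft spec itself: the `Identifier` fields are reduced to a hypothetical minimum, and `emitted_logs` stands in for whatever view of the source chains the verifier has.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass(frozen=True)
class Identifier:
    # Simplified, hypothetical fields that point at one log on a source chain.
    chain_id: int
    block_number: int
    log_index: int


def executing_message_is_valid(identifier: Identifier,
                               message_hash: str,
                               dependency_set: Set[int],
                               emitted_logs: Dict[Identifier, str]) -> bool:
    """An Executing Message is valid only if a matching Initiating Message exists
    on a chain that the destination chain has opted in to depend on."""
    if identifier.chain_id not in dependency_set:
        return False
    return identifier in emitted_logs and emitted_logs[identifier] == message_hash


if __name__ == "__main__":
    logs = {Identifier(10, 100, 0): "0xabc"}
    print(executing_message_is_valid(Identifier(10, 100, 0), "0xabc", {10, 8453}, logs))
```

A block containing an Executing Message for which this check fails is the kind of mismatch a verifier would challenge through the fraud-proof system.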

This system is modular enough for anyone to build a shared sequencer on top. By default this system allows secure inclusion of transactions. And with crypto-economic guarantees, it is possible for shared sequencers to offer atomic execution as well.

Similar to the SVS design, it is crucial for a rollup to choose the right set of rollups as its dependency set. If another rollup behaves faultily, both rollups would have to be rolled back. It would also be challenging for a rollup to watch all the rollups in its dependency set for Initiating Messages. The Optimism team aims to solve this with a P2P gossip network between the sequencers and rollup nodes to ensure easy access to the messages.

This design has very similar trade-offs to the Shared Validity Sequencing design above for all its stakeholders.

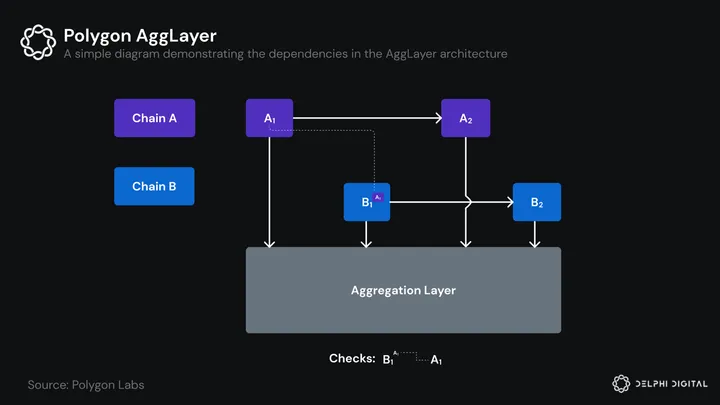

Polygon’s AggLayer

Polygon’s new zkEVM ecosystem is the closest relative to Superchain among the various ecosystems of chains we have discussed. It is also the only ecosystem of chains that promises synchronous atomic execution.

Polygon’s Aggregation Layer (AggLayer) takes a new approach to sequencing and proposing blocks for all the chains that join it. If Chain A’s block is dependent on a transaction on Chain B’s block, A’s block will not be finalised on Ethereum until Chain B’s block is confirmed as valid and vice versa. If two chains are dependent on each other, there needs to be an additional zk proof to prove that the dependencies are met. If one of the chains fails to create a zk validity proof and submit it to the mainnet contract, both of the blocks are discarded and the chains are halted. This ensures synchronous atomic inclusion of cross-chain transactions.
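The dependency rule can be sketched as a fixed-point computation. This is only an illustration of the finalisation logic described above, with a hypothetical data shape (`proof_valid`, `depends_on`) and no actual proof verification.

```python
from typing import Dict, Set


def finalizable(blocks: Dict[str, dict]) -> Set[str]:
    """Return the blocks that can be finalised: each must have a valid proof and
    every block it depends on must be finalisable too."""
    ok = {name for name, block in blocks.items() if block["proof_valid"]}
    changed = True
    while changed:
        changed = False
        for name in list(ok):
            if any(dep not in ok for dep in blocks[name]["depends_on"]):
                ok.remove(name)  # a dependency failed, so this block is discarded too
                changed = True
    return ok


if __name__ == "__main__":
    blocks = {
        "A": {"proof_valid": True, "depends_on": ["B"]},
        "B": {"proof_valid": False, "depends_on": ["A"]},
        "C": {"proof_valid": True, "depends_on": []},
    }
    print(finalizable(blocks))
```

In the example, Chain B fails to produce a valid proof, so Chain A's dependent block is discarded with it, while the independent Chain C is unaffected.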

Of course, this system adds some latency to the finality of blocks and transactions. Along with the 2-epoch delay (roughly 13 mins) for Ethereum’s finality, there is an additional delay of 3 mins or more for the creation of zk validity proofs for a block. But when (or if) Ethereum achieves single-slot finality and as the time for the creation of zk proofs keeps plummeting, we will see near-instant confirmations for cross-chain transactions.

Atomic Execution works similarly to inclusion. The zk proof created also proves that the dependent transactions were successfully executed and the state transition is valid. This solution is a version of Shared Validity Sequencing that utilizes the convenience of zk-rollups.

There are trade-offs in this model. If a chain chooses to be sequenced with other chains in the zkEVM ecosystem and one of those chains halts for whatever reason, all the dependent chains will also halt temporarily. That chain can be penalized for affecting other chains after a certain time threshold. This allows a chain to be late in submitting proofs and thus it might create some latency for all the chains it is sequenced with.

Avail recently announced a solution that is very similar to Polygon’s AggLayer.

This approach is best suited for zk-rollups. The optimistic rollup version of this design looks similar to the Shared Validity Sequencing design we discussed earlier. If all rollups end up becoming zk-rollups, Optimism can choose this design over others.

It is difficult to gauge if there are some drawbacks in this system as it is still being researched. One thing is for sure, it offers a fantastic user experience promising the prophesied monolithic experience while being a modular stack. The developers will also have the ability to build applications utilizing atomic inclusion and execution. The chain builders, however, will have to agree to the rules of the system.

Stakeholder Expectations Met by Polygon’s AggLayer

Users: It offers a monolithic experience. With account abstraction, it is possible to abstract everything away for the users in a secure manner while providing instant finality as the system evolves.

dApp Buidlers: Builders enjoy the atomic inclusion and execution features. Plus the Polygon chain already has significant liquidity for all zkEVM chains to pull from.

Chain Builders: Chain builders lose some of the sovereignty to the rules of the system. But this innovation is compelling enough to build on the Polygon Ecosystem.

This system cannot be implemented in Superchain unless it switches over to becoming a zk-rollup ecosystem. Although there have been discussions around this, in the short term, Superchain needs a solution that works while it remains an ecosystem of Optimistic Rollups. There are some proposed solutions for Optimistic Rollups to achieve the monolithic experience using shared sequencers.

Astria & Espresso – Shared Sequencing as a Service

Astria and Espresso are decentralized shared sequencer networks offering synchronous atomic inclusion with potential atomic execution. These two protocols have taken a different approach by starting from a decentralized design.

In the rollup transaction cycle, the centralized sequencers that are live right now on Optimism and Base, run by their respective teams, give instant preconfirmations to the users, even though the L1 finality is 15 mins away. The user is essentially trusting the sequencer to stick to its promises here, i.e., if L1 reorgs, the sequencer should not discard the previous blocks and should keep the L2 blocks the same as before the reorg. Here, we are trusting the centralized sequencer. Astria and Espresso offer a solution where we don’t have to trust the sequencer. They use their own consensus layer to offer more secure finality guarantees than a single sequencer.

Although there are many differences between Astria and Espresso, in the context of cross-chain there are very few. Espresso, which works very similarly to Astria, uses its own HotShot consensus algorithm, which promises fast finality and security through a 2/3 majority consensus. The shared sequencers here are required to restake ETH. Ideally, if a significant portion of L1 validators join Espresso, its finality guarantees would be as secure as Ethereum itself.

With these consensus mechanisms by Astria and Espresso, bridging between rollups can be as instant as pre-confirmations. Across is using this. There is no need for the liquidity providers or mint/burn bridges to wait longer for the secure L1 finality. All rollup transactions achieve finality after the majority of the shared sequencers attest to the blocks, and they are rolled back together if necessary to avoid double spends.

Espresso and Astria promise atomic inclusion to the users with assurances from the shared sequencers while being cryptographically secure. However, since shared sequencers do not run full nodes of all rollups (it would be expensive in a decentralized shared sequencer network), they cannot guarantee atomic execution. So even if a shared sequencer promises atomic inclusion, one of the transactions may fail during execution by the full nodes of a rollup.
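The gap between atomic inclusion and atomic execution can be made concrete with a toy model. The sketch below is hypothetical (`Tx`, `include_atomically`, and the two example transactions are made up): the sequencer only reasons about block space, while the rollup full nodes later execute against state the sequencer never looked at.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Tx:
    rollup: str
    run: Callable[[dict], bool]  # applies the tx to rollup state; False means it reverted


def include_atomically(bundle: List[Tx], blocks: Dict[str, List[Tx]], capacity: int) -> bool:
    """All-or-nothing inclusion: the sequencer checks block space only, never state."""
    needed: Dict[str, int] = {}
    for tx in bundle:
        needed[tx.rollup] = needed.get(tx.rollup, 0) + 1
    if any(len(blocks[rollup]) + count > capacity for rollup, count in needed.items()):
        return False
    for tx in bundle:
        blocks[tx.rollup].append(tx)
    return True


def burn_on_a(state: dict) -> bool:
    if state["alice"] < 10:
        return False
    state["alice"] -= 10
    return True


def swap_on_b(state: dict) -> bool:
    if state["pool_price"] > 100:  # the user's price limit was exceeded
        return False
    state["swaps"] += 1
    return True


def demo() -> Tuple[bool, List[bool]]:
    blocks: Dict[str, List[Tx]] = {"A": [], "B": []}
    states = {"A": {"alice": 50}, "B": {"pool_price": 120, "swaps": 0}}
    bundle = [Tx("A", burn_on_a), Tx("B", swap_on_b)]
    included = include_atomically(bundle, blocks, capacity=100)
    # Full nodes of each rollup execute their own block afterwards.
    results = [tx.run(states[tx.rollup]) for rollup in ("A", "B") for tx in blocks[rollup]]
    return included, results
```

In `demo()`, both transactions are included together, yet the swap on rollup B reverts because the pool price moved past the limit. The burn on rollup A still went through, which is exactly the failure mode atomic execution is meant to rule out.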

Espresso’s design for atomic inclusion is described here. Briefly, it involves adding a string with a bundle public key to the transaction callData of all the bundled transactions and signing them. The sequencer signs this bundle and ensures that none of the transactions in the bundle are excluded. If any are excluded, the whole bundle becomes invalid, as the transactions are signed together. The transactions with invalid signatures are filtered out from the rollup derivation pipeline, thus making partly included bundles invalid.

While atomic inclusion is figured out, the eyes are on atomic execution. We do not have a clear answer for this yet. Espresso and Astria propose a solution where the users are crypto-economically trusting the common super block builder (or a decentralized block-building protocol like SUAVE) to include an atomic bundle on the top of the block. Since the block builders know the latest state of all the rollups, they can be certain that a state transition in the order they have provided will not fail when executed.

In this system, the users are trusting the block builders, but it can be set up in such a way that the builder is slashed if they don’t abide by their promises. But the block builder is also trusting the shared sequencer to not reorder the bundle. Maybe Espresso/Astria can auction off the top of the block spot to builders. This topic is wide open for innovation.

Stakeholder Expectations Met by Astria and Espresso

Users: There are rumors that multiple rollups will opt into these shared sequencer networks. With a lot of rollups, the onboarding experience and interoperability will improve. It might lead to higher transaction fees as someone has to pay for the shared sequencers. The cross-chain MEV extraction is likely to be high as well because someone needs to pay the builders’ heavy bills. Atomic execution is trusted. Finality is more reliable now.

dApp Buidlers: With synchronous composability on the table, dApp developers can build cross-chain lending, cross-chain DEXs, and many more innovative applications to unite the fragmented liquidity and users. This system also enables FlashLoans and easy cross-chain arbitraging. The dApp developers are free to build any standards on this model. This is truly a monolithic experience.

Chain Builders: Chain builders would be giving away a significant portion of their sovereignty for the above benefits. MEV will be fully out of their hands. Although there will be MEV redistribution mechanisms, it would be hard to distribute cross-chain MEV in a fair manner. Liveness is now fully dependent on the decentralized network of shared sequencers.

This could be the solution we all have to go with. Further breakthroughs are needed to push the boundaries of cross-rollup interoperability.

Based Espresso

While it is beneficial for a new rollup deployed on Superchain to interoperate with Base and Optimism Mainnet as they have billions in TVL, it is a lot more beneficial for a new rollup to interoperate with Ethereum Mainnet itself. While all rollups have a combined TVL of around $50B, Ethereum alone has a TVL of around $500B (including native ETH).

Based Rollups, a concept introduced by Justin Drake almost a year ago, have come far in terms of practical implementation. Based Rollups are normal rollups with L1 proposers proposing rollup blocks. This allows for the rollups to seamlessly interoperate with Ethereum while having synchronous atomic inclusion and execution guarantees provided by the L1 proposer. Having all these benefits in mind, Espresso has designed a shared sequencing model that enables any rollup opting in to be a based rollup.

Based Espresso inherits many design principles from the Espresso Shared Sequencer protocol we discussed earlier. Any rollup can join Based Espresso by giving the reins of their block proposal rights away to the HotShot consensus protocol. In this updated model, Espresso operates with two different entities that work together to offer interoperability and finality guarantees. These are proposers and attesters.

Proposers are block producers who win the rights to propose blocks for a bundle of rollups in any given HotShot slot.

Let’s unpack that sentence.

- A HotShot slot is a period of time for which a proposer gets to propose blocks for a bundle of rollups.

- Bundles are formed dynamically based on the demand for interoperability between subsets of rollups. A bundle can also have a single rollup.

- A proposer deposits collateral to bid in a combinatorial auction of a HotShot slot 32 L1 blocks before the slot.

- Rollups have the sovereignty to demand a reserve price to propose for them and decide who gets to propose for them as well.

- When the time comes, the winner can propose the blocks or sell the rights to anyone else.

- The corresponding L1 proposer gets a free option to propose by paying the same amount as the highest bid for that slot. This ensures guaranteed interoperability with L1 if the incentives are big enough.
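The slot auction described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Espresso's actual mechanism: it settles a single, already-formed bundle rather than running a full combinatorial auction, it treats the bundle's floor as the sum of the rollups' reserve prices, and the names `Bid` and `settle_slot` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bid:
    proposer: str       # hypothetical proposer identifier
    bundle: frozenset   # set of rollups the bid covers
    amount: int         # bid amount in an arbitrary integer unit


def settle_slot(bids, reserve_prices, bundle,
                l1_proposer=None, l1_exercises_option=False):
    """Pick who proposes for one bundle in one HotShot slot.

    Returns (proposer, price) or None if no bid clears the reserve.
    Simplification: a real combinatorial auction compares bids across
    many overlapping bundles; here the bundle is fixed in advance.
    """
    # Assumption: the bundle's floor is the sum of each rollup's reserve price.
    floor = sum(reserve_prices[rollup] for rollup in bundle)
    eligible = [b for b in bids if b.bundle == bundle and b.amount >= floor]
    if not eligible:
        return None  # the fallback in this case is left unspecified here
    best = max(eligible, key=lambda b: b.amount)
    # The slot's L1 proposer may take the rights by matching the highest bid.
    if l1_proposer is not None and l1_exercises_option:
        return (l1_proposer, best.amount)
    return (best.proposer, best.amount)
```

With reserves of 200 and 300 for two rollups, a bid of 400 on the pair is ignored, the highest bid at or above 500 wins, and the L1 proposer can displace that winner only by paying the same amount.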

It is worth noting that some L1 proposers might not be part of the Espresso protocol, even though they are well incentivized to be.

Since atomic execution is not guaranteed by the protocol, the proposer can offer that guarantee itself in exchange for a tip, backed by insurance. Until Single Slot Finality is live on Ethereum, the reorg risk in the roughly 15-minute finality window will need to be priced in.

Attesters are L1 validators that have chosen to restake their ETH to become finality guarantors in the HotShot consensus protocol. If more than 1/3 of the attesters collude or go offline, 1/3 of their restaked ETH will be at risk.

Cross-chain applications can have 2 kinds of preconfirmations with different security assumptions.

- Proposer Preconfirmation, which suits entities that do not need a hard guarantee that a transaction is included in the block. It is usually issued in under a second and is useful for intent solvers that are competing on speed.

- Attester Preconfirmation, which is issued once 2/3 of the attesters have attested to the block, so it carries the reorg resistance of HotShot consensus. It is useful for large token transfers that require stronger guarantees. Attester preconfirmation is said to arrive only about 2 seconds after the proposer preconfirmation.
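The difference between the two preconfirmation levels can be expressed as a simple threshold check. The sketch below is illustrative only: the function name and return labels are hypothetical, and it counts attesters by head count as the text does, whereas a real protocol would more likely weight them by restaked ETH.

```python
from fractions import Fraction

# Share of attesters that must attest before the stronger guarantee applies.
ATTESTER_QUORUM = Fraction(2, 3)


def preconfirmation_level(proposer_promised, attested, total_attesters):
    """Return the strongest preconfirmation a transaction currently holds.

    proposer_promised: the slot's proposer has promised inclusion.
    attested:          number of attesters that attested to the block.
    total_attesters:   size of the attester set (must be positive).
    """
    if total_attesters <= 0:
        raise ValueError("total_attesters must be positive")
    # Exact rational comparison avoids floating-point edge cases at 2/3.
    if Fraction(attested, total_attesters) >= ATTESTER_QUORUM:
        return "attester"
    if proposer_promised:
        return "proposer"
    return "none"
```

An intent solver might act as soon as the result is `"proposer"`, while a bridge moving a large transfer would wait for `"attester"`.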

Based Espresso seems to be the most promising design for rollups and rollup ecosystems. Superchain can join this system by choosing its own reserve price for the bundle of Superchain rollups while allowing bundle formation with other rollup ecosystems based on demand.

Stakeholder Expectations Met by Based Espresso

Users: There are not many differences between this and the previous design by Espresso. The users get to enjoy the benefits of atomic execution with Ethereum. The UX of the entire system should mirror that of a monolithic chain while having local fee markets. With so much competition between the rollups, interop costs should be reasonably priced and the onboarding experience should be excellent.

dApp Builders: dApp builders can finally help their users move loans from L1 to L2 using flash loans.

Chain Builders: Chain builders should be extremely happy with based sequencing. With this design, new rollups now have easy access to $500B of TVL on Ethereum. Liquidity can finally move freely to chains with the best opportunities for users. If the rollups wish to retain the rights of block proposals to have fair ordering, they can choose to allow external block proposals for every other HotShot slot.

There are many directions Superchain can go in. It all comes down to the tradeoffs Superchain is comfortable with. Now that we have explored the latest solutions for verification systems, let us dive into how Superchain can proceed from here.

Superchain’s Future

There are different ways Superchain can tackle the interoperability problem. The goal for Superchain should be to make changes to its base verification layer that enable interoperability to flourish between the rollups. This often means not interfering with the transport or application layer standards. The reason is that anyone can permissionlessly build these standards and innovate on top, whereas nobody except Superchain can make changes to the base layer.

In fact, people who do not want to use Superchain’s base verification layer can use third-party verification layers even between Superchain rollups, but if there are no changes in the base layer, it is impossible to enjoy the benefits of synchronous atomic execution between rollups.

Let us go through a few ideas.

Verification Layer Improvements

Superchain can choose to go in any of these directions to offer a secure verification layer that is feature-rich enough to deliver the monolithic experience.

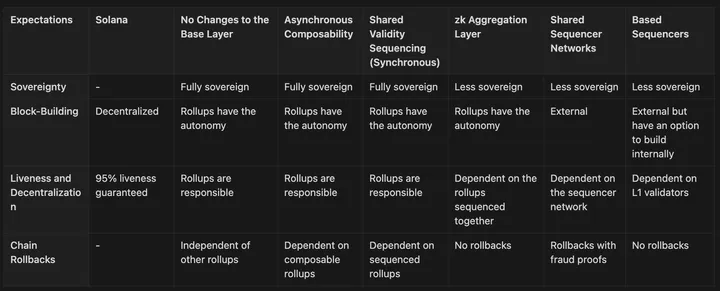

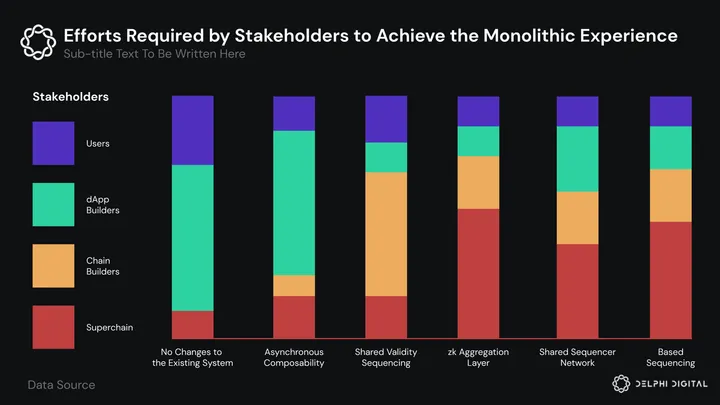

No Changes to the Base Layer

In this approach, we let the market figure things out. This future looks similar to the Ethereum/EVM interoperability space right now. Although it is a subpar experience for the users, with intent protocols and smart wallets on the horizon, we will be able to enjoy the monolithic experience with an abstraction layer on top of the dApps. The user experience, costs, and security will all improve over time with competition and innovation. dApp Developers can use MMA-like solutions for trust-minimized bridging of assets across chains.

But still, in the short term, users and dApp builders will suffer the most in this scenario. The UX will be subpar, interoperability will be expensive, security will be trusted, MEV will be more centralized, and users will be stuck in the corners of Superchain and might switch to a monolithic chain after getting tired of bridging. Additionally, intent-centric applications will always bleed MEV without atomic execution guarantees, so they might lose hope.

Chain builders might be fine with these tradeoffs. App rollups often do not need interoperability with all the chains out there. Chain builders get to enjoy sovereignty over the extraction of MEV from their users. Plus, they would have the freedom to innovate in sequencer decentralization, fault-proof systems, sequencer-builder separation, etc.

There are reasons other than interoperability to join Superchain: shared security, scalability, community, ecosystem support, unified interfaces, a unified social front, etc.

Despite some benefits, we do not recommend inaction from Superchain when it can enable so much innovation and improvements to the current systems by offering better interoperability.

Also, when discussing Superchain connecting with the crypto ecosystem writ large, third-party bridging is easiest to extend to non-EVM, Orbit, Polygon, Solana, and Cosmos chains.

Asynchronous Composability

Superchain can choose to go the Cosmos/IBC route and offer trustless interoperability between the Superchain rollups. It is worth mentioning this is not an easy endeavor as it took 2 years and a lot of effort for the Avalanche ecosystem to build AWM (Avalanche Warp Messaging) and Teleporter, offering a system similar to Cosmos/IBC. The improvement in this option compared to the previous one is that it offers secure bridging of assets without MMA and third-party bridges. This will lead to cheaper bridging for users and a standard way to bridge assets between chains. Asynchronous composability combined with intents can offer a near-monolithic experience as well. One caveat is that interdependent rollups will likely lead to combined rollbacks if one of the chains is rolled back. Plus, cross-chain MEV will be a problem in this case similar to our previous option.

Chain builders will still retain a good amount of sovereignty in this design, although their nodes will now have to validate transactions from other rollups.

Asynchronous composability can be achieved by making sure that a block is confirmed only if all the blocks of other rollups it depends on are confirmed as well. We believe Optimism’s approach described in the specs leads to asynchronous composability if nothing else is built on that system. It offers a way for rollup nodes to verify cross-chain message origins and mark blocks as safe only if the source chain transactions were confirmed on the corresponding Superchain rollup. It is also possible to build a shared sequencer system on top of this and offer features like atomic inclusion and execution.
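The confirmation rule above, and the combined-rollback caveat that comes with interdependent rollups, can both be sketched over a dependency map between blocks. This is an illustration only and not the logic in Optimism's specs: blocks are identified by hypothetical `(chain, height)` pairs, `deps` maps a block to the blocks on other rollups whose messages it consumes, and same-chain parent links are omitted (they could be added as ordinary dependencies).

```python
from collections import deque


def safe_blocks(deps, confirmed):
    """Blocks that are confirmed and whose dependencies are all safe too.

    deps:      dict mapping block -> iterable of blocks it depends on.
    confirmed: set of blocks confirmed on their own chain.
    """
    safe = set()
    changed = True
    # Iterate to a fixed point: a block becomes safe once all its deps are safe.
    while changed:
        changed = False
        for block in confirmed:
            if block not in safe and all(d in safe for d in deps.get(block, ())):
                safe.add(block)
                changed = True
    return safe


def rollback_set(deps, reverted):
    """All blocks that must be rolled back if `reverted` is rolled back."""
    # Invert the map so we can walk from a block to the blocks that rely on it.
    dependents = {}
    for block, needs in deps.items():
        for d in needs:
            dependents.setdefault(d, set()).add(block)
    out, queue = {reverted}, deque([reverted])
    while queue:
        for child in dependents.get(queue.popleft(), ()):
            if child not in out:
                out.add(child)
                queue.append(child)
    return out
```

If block 1 on chain C consumes a message from block 1 on chain B, which in turn consumes one from block 1 on chain A, then C's block is not safe until B's and A's blocks are, and rolling back A's block rolls back all three.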

Shared Validity Sequencing – Umbra

This approach offers the most secure way to support synchronous atomic execution between the chains sequenced together.

Users and dApp developers get to enjoy the monolithic experience with minimal cross-chain MEV costs, and everything will be securely abstracted away. But there are trade-offs.

- High operational costs will lead to centralization.

- The liveness risks will be high due to centralization.

- The operational expenses of sequencers and full nodes will be passed on to the users eventually.

- These heavy infrastructure costs almost make it similar to high-performance monolithic chains like Solana while retaining very few benefits of modularity.

Chain builders will be tasked with running full nodes for their chains to execute blocks before posting them onto the mainnet. This will also further complicate the fraud proofs and the watcher systems as they are now tracking the Merkle trees on all chains.

If Superchain chooses to go in this direction, it would be betting on the advancement in semiconductor technologies, through which decentralization could also be achieved despite having high infrastructure requirements. This could also be a step towards becoming zk-rollups if that is still the goal for the OP stack.

zk Aggregation Layer

This solution requires the Superchain stack to switch over to being zk-rollups. Since the ecosystem was pessimistic about the pace of zk advancement, Optimistic rollups were thought of as a step towards becoming zk-rollups with zkEVM. Now that zk-rollups are in production in many stacks, that vision can be achieved sooner rather than later.

If Superchain and the OP stack choose to become zk-rollups, the synchronous atomic composability problem becomes much simpler as verification of inclusion and execution can be trustlessly done via zk-proofs. Or, maybe it is a wiser decision to choose Shared Validity Sequencing with the centralization tradeoffs while the zk tech fully evolves to offer fast finality with super fast zk-proof generation.

Users and dApp developers can enjoy all the benefits of a monolithic experience at a slight increase in costs due to the aggregation layer between rollups and the settlement layer. It is yet to be seen how significant this increase will be as the system is not live yet. Since being part of the AggLayer is expected to be permissionless, we might see some rollups failing to meet the expectations and causing liveness issues for the rollups dependent on them, but this is a rare scenario as they will be slashed or banned from the AggLayer.

Chain builders might have to put up collateral to join AggLayer due to the liveness trust assumptions. Apart from that, Chain builders still enjoy quite a bit of sovereignty and block-building autonomy.

Overall, the zk-aggregation layer is a very promising solution for the zk-rollup ecosystems, but for now it is not necessarily feasible for Superchain.

Shared Sequencer Networks

This design seems to tick the most boxes among our stakeholder expectations with minimal trade-offs. It offers secure atomic inclusion and atomic execution via crypto-economic guarantees, while still being decentralized on the shared sequencer layer.

Superchain can choose to build its own decentralized sequencer network or opt into networks like Astria and Radius that might allow interoperability with other rollups outside Superchain. Although the atomic execution guarantee in this design is not as strong as under Shared Validity Sequencing, one can argue that the overall liveness and security of the rollups are better.

In this system, the users enjoy atomic inclusion by default but will pay extra to the block builders for atomic execution guarantees. The shared sequencer network offers fast finality through preconfirmations. Restaked ETH-backed finality and liveness guarantees are great, but restaking comes with its own risks: if the AVS that is protecting Superchain gets slashed while protecting something else, the security of the Superchain network will be affected significantly. It is also possible for the block builder to censor a user transaction if block building is not decentralized via systems like SUAVE.
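To make the distinction concrete, atomic inclusion means a cross-rollup bundle lands in all of its target blocks or in none of them. It says nothing about whether each transaction then executes successfully, which is why execution guarantees are sold separately. The sketch below is a toy model under stated assumptions: `include_atomically` is a hypothetical name, block space is reduced to a per-rollup transaction count, and bundles are processed in the order given.

```python
def include_atomically(bundles, capacity):
    """Build one block per rollup with all-or-nothing bundle inclusion.

    bundles:  list of dicts mapping rollup -> list of transaction ids.
    capacity: dict mapping rollup -> max transactions in its block.
    Returns a dict mapping rollup -> ordered list of included transaction ids.
    """
    blocks = {rollup: [] for rollup in capacity}
    for bundle in bundles:
        # A bundle fits only if every leg fits in its rollup's remaining space.
        fits = all(
            rollup in capacity
            and len(blocks[rollup]) + len(txs) <= capacity[rollup]
            for rollup, txs in bundle.items()
        )
        if not fits:
            continue  # skip the whole bundle: no partial inclusion
        for rollup, txs in bundle.items():
            blocks[rollup].extend(txs)
    return blocks
```

A bundle whose leg on one rollup does not fit is dropped entirely, even if its other legs would have fit, so no rollup ever sees half of a cross-chain action.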

Chain builders would be giving up block-building rights in this setup. Each chain still gets to choose which chains it is comfortable depending on.

Overall, this is a good solution for Superchain as it fulfills many expectations of its stakeholders. But this next solution is much better than all other solutions presented so far.

Based Sequencing

With the emergence of Based Sequencing as a solution, it seems increasingly likely that not just one ecosystem of chains can operate as a monolithic layer with atomic guarantees, but that the entire Ethereum ecosystem can seamlessly interoperate.

There are very few downsides to this approach. All of Superchain’s users get to interoperate with each other, with other L2 ecosystems, and with Ethereum mainnet itself. It is difficult to predict the changes in the various costs users will have to bear, but interoperability costs will vary based on the layers a user is touching. The costs should increase as one goes from staying inside a rollup, to another rollup inside Superchain, to a rollup outside Superchain, and finally to Ethereum Mainnet. The users enjoy fast finality and finality guarantees as well.

One additional benefit users get in this approach compared to the previous one is the inheritance of liveness and censorship resistance from L1, as an L1 proposer can also be the Based Sequencing layer proposer. Chain builders have more autonomy than under Shared Sequencer Networks: in the design proposed by Espresso, the rollup gets to decide who may bid on its block-building rights and the minimum they must bid. Rollups also have the option to retain the building rights.

Since this solution is still in a nascent form, Superchain’s approach to reaching this stage involves collaborating with other players in the ecosystem and reevaluating the next steps it needs to take to move towards the end goal of Based Sequencing.