The Delegated Authorization Network: Trust Minimization in the Age of AI

JUL 02, 2024 • 21 Min Read

The Notorious UX Problem

If there’s one thing that’s been consistent over the past two crypto market cycles, it’s the deafening scream of how convoluted it can be to use on-chain applications. The crypto UX problem has been front and center for years now. There have been a significant number of ideas thrown at the issue alongside venture funding and talent. Yet, we haven’t managed to meaningfully move from the status quo established in 2020.

At least for EVM ecosystems, the predominant way to interact with blockchains still involves hopping from frontend to frontend accompanied by a trusty MetaMask browser extension that may or may not be supplemented by a hardware wallet. Other ecosystems have their own version of this, but it’s the same flavor at a high level.

While on-chain UX is not horrendous, most people in the industry will agree that we need to revamp the on-chain experience to make it more welcoming to newer users. We’re still at the stage where new users can be onboarded to a CEX rather easily, but the jump to a self-custodied wallet and smart contracts is still steep.

Of course, where there’s a will there’s a way. And those who are moving from CEXs to their own wallets are people willing to put in the effort to learn and experiment with how this stuff works. But the simple argument against allowing this to remain cemented as the status quo is that it significantly filters out prospective users and makes the funnel much narrower.

After all, you don’t need to understand how the NYSE works to use Robinhood; you don’t have to understand how Robinhood’s trading engine submits transactions under the hood to use the platform.

Abstraction tech, at large, is a fundamental part of re-shaping the UX of on-chain products. From account abstraction to the recent buzz around chain abstraction, builders en masse have recognized the need to present alternate UX options. The most recent and notable example of the desire for revamped UX is the Telegram bot meta.

The rise of Unibot, Banana Gun, and others has birthed an extremely lucrative business model as people search for easier ways to have their on-chain interactions facilitated. Even with a relatively poorer custodial model, these trading bots have seen a massive surge in usage over the last year.

It’s clear that even the most seasoned on-chain veteran is looking for ways to simplify this convoluted experience. In the case of Telegram bots, they are even willing to compromise on security to achieve this. This trend cannot be ignored, and justifies the search for newer UX models that make good on existing compromises.

The on-chain interaction layer is ripe for disruption; it has been for a long time now. But there are new puzzle pieces that help point us in the direction of what the future may hold.

Agents of Innovation

The latest idea in the bid to supercharge the on-chain experience is AI agents. Agents, or bots, have existed for a long time, even in the context of crypto. Liquidation bots, arbitrageurs, MEV searchers, and keepers are some examples of existing agents in crypto. These agents, however, are not consumer-oriented. They are developed by professional searcher operations and hedge funds.

Given the complexity in spinning up this software and the financial edge in keeping their core design & functionality a secret, there has been no incentive for developers to help non-technical users launch their own liquidation bot, for instance.

Been thinking a lot about agents recently – what the use cases are and what the impact will be to crypto.

I see a lot of people saying they don’t understand or don’t agree with this narrative.

So here are my thoughts.

— Luke Saunders (@lukedelphi) May 7, 2024

Telegram sniping bots and copy trading bots are examples of more primitive agents that offer no significant edge other than simplifying UX and perhaps reducing the amount of time taken to construct, submit, and finalize a transaction. But there are only certain things you can do with these retail bots that exist today – mainly token swaps.

It’s not simple to configure an agent that can help manage all of your positions, from rebalancing your Uniswap v4 LP positions, to managing liquidation risk on your Aave loans, all the way to adding more USDC liquidity to Gearbox’s lending market when yields cross a certain threshold.

With all of this in mind, AI agents promise to be an agent of innovation on the interaction layer.

At a Glance: How They Work

AI agents, at large, are a way to tap into an LLM’s knowledge base to create comprehensive answers or solutions to a problem. In the specific context of on-chain agents, they are essentially a medium that enables users to construct blockchain transactions from a natural language prompt through an LLM.

Simply put, AI agents are a way to convert a user intent (I want to swap 10 ETH to USDC on Ethereum at the best execution price and supply the USDC to Aave on Arbitrum) into a multi-step set of transactions, each correctly encoded for the contracts and chains involved. The entire process can be automated, allowing the agent to submit transactions on behalf of users.
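
To make the idea of a "multi-step" intent concrete, here is a minimal sketch of how the example above might be represented once it has been broken into steps. The `Step` type, the venue labels, and the `is_connected` check are all hypothetical and hand-written for illustration; a real agent would produce something like this from an LLM and then encode each step as an actual transaction.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    action: str               # "swap", "bridge" or "supply"
    chain: str                # chain the step executes on
    token_in: str
    token_out: Optional[str]  # None when the step only deposits a token
    venue: str                # illustrative label, not a real router or contract name


# Hand-written stand-in for what a planner might emit for the example intent.
EXAMPLE_PLAN = [
    Step("swap", "ethereum", "ETH", "USDC", "best-execution aggregator"),
    Step("bridge", "ethereum", "USDC", "USDC", "bridge to arbitrum"),
    Step("supply", "arbitrum", "USDC", None, "Aave"),
]


def is_connected(plan: list[Step]) -> bool:
    """Check that each step consumes the token the previous step produced."""
    return all(
        prev.token_out == nxt.token_in for prev, nxt in zip(plan, plan[1:])
    )
```

A sanity check like `is_connected` is the kind of cheap structural validation that can catch a malformed plan before anything is signed.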

Without getting into the gory details, an agent for on-chain interactions would require an LLM that has been trained on the various opcodes that exist for its supported networks. It needs to be able to take a user’s input in English (or any other natural language) and translate it into Solidity, Rust, or Move code in a way that is accepted by a network’s virtual machine. This LLM could also be supplemented by Retrieval-Augmented Generation (RAG), which helps the agent search either the internet or some internal troubleshooting documentation to confirm the answer it is generating is in line with what the user wants.

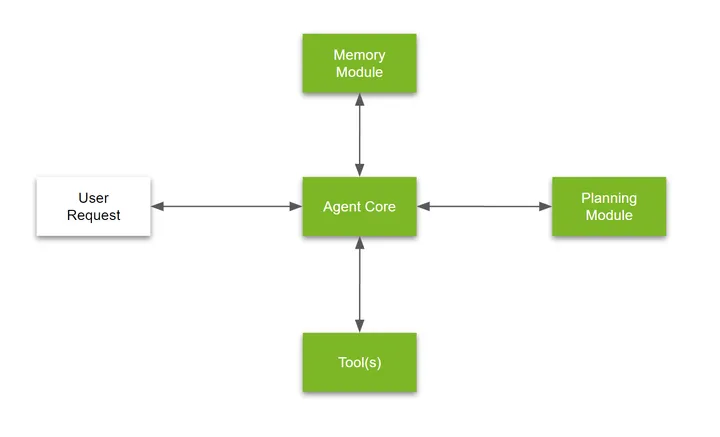

AI agents typically comprise several components: the core software, a memory module, planning module, and other tools required to fulfill their purpose. In a nutshell:

- Agent core is the software that makes a final decision after receiving inputs from all of the other modules. You can think of this as akin to the ECU in a vehicle.

- The memory module stores both short-term information based on data generation from a user prompt, and also long-term information based on queries the agent has historically received and the answers that it served.

- The planning module breaks down the user’s prompt into several parts and assembles them into a pipeline of smaller questions to answer in order to serve the user the correct answer.

- Tools are outside resources that the agent can use to help facilitate the user’s intended outcome. In the context of on-chain AI agents, an Infura RPC node or Flashbots Protect could be a tool that helps the agent submit user transactions for execution.

(Source: NVIDIA)
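
The four components listed above can be sketched in a few lines. This is a toy under stated assumptions: the planner simply splits a prompt on the word "and", tools are plain functions keyed by the first word of a sub-task, and all class and function names are hypothetical rather than taken from any real agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Memory:
    short_term: list[str] = field(default_factory=list)             # sub-tasks of the current prompt
    long_term: list[tuple[str, str]] = field(default_factory=list)  # (prompt, answer) history


def plan(prompt: str) -> list[str]:
    """Toy planning module: split a prompt into sub-tasks on the word 'and'."""
    return [part.strip() for part in prompt.split(" and ") if part.strip()]


@dataclass
class AgentCore:
    memory: Memory
    tools: dict[str, Callable[[str], str]]

    def run(self, prompt: str) -> str:
        subtasks = plan(prompt)
        self.memory.short_term = subtasks
        results = []
        for task in subtasks:
            # Pick a tool by the first word of the sub-task (an assumption of this toy).
            tool = self.tools.get(task.split()[0].lower())
            results.append(tool(task) if tool else f"no tool for: {task}")
        answer = "; ".join(results)
        self.memory.long_term.append((prompt, answer))
        return answer
```

In a real agent the planner and the core's decision step would be driven by an LLM, and a tool would be something like an RPC endpoint rather than a local function.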

The Path to Transforming Crypto’s UX

The intents meta is largely an exercise of going from natural languages like English to blockchain VM friendly code. However, looking at existing implementations, these systems developed by protocols tend to be specific. Across Protocol’s intents are asset bridging centric; CoW Protocol’s intents are swap centric.

On-chain AI agents promise the ability to be a more evergreen solution to intent translation. These agents are likely to piggyback off existing intent infrastructure to help their users get their desired end result without recreating everything from scratch. How it’s packaged up and works on the backend is not a concern for users, so long as these agents are constructing correct transactions in accordance with a user’s intent and optimizing for best-in-class execution.

Even if agents piggyback off multiple intent frameworks that already exist, their value-add is in aggregating this and acting as a unified user interface for on-chain environments. Delphi Research has previously highlighted the need for unified interfaces in crypto that abstract away the need to hop from frontend to frontend. Agents are yet another solution with this end-state in mind – arguably a more comprehensive one.

Like any other solution, however, there are potential pitfalls here too – other than the fact that we are probably a few years away from this tech being fully functional in the intended way.

On-chain agents, as the name suggests, require a high degree of agency. That is, in order to act on a user’s behalf to carry out their mandate, they need the ability to autonomously take actions, whether that is fixing your health ratio to minimize liquidation risk on Aave, selling a token when it hits a certain level of drawdown, or moving funds from one farm to another as yields change. The autonomous component is crucial to the AI agent thesis.

LLMs, and thus agents, have a tendency to hallucinate. In short, they can spit out a totally random and unrelated output to a user’s prompt. It looks completely coherent, but is unrelated to what the user is trying to do. This can happen for a variety of reasons like vague prompting, sections of poor data in the training process, in-built bias, etc. While a RAG pipeline can minimize these occurrences, they cannot be eliminated.

And so arises the question of how you can trust an agent to make unilateral decisions with your capital if they have a tendency to hallucinate and potentially make huge mistakes. Ideally, you would like some safeguards to limit these instances if not eliminate them entirely.

A lot of agent frameworks being developed will have the ability to set limits for what the agent can do. However, you don’t want to unilaterally give the agent the ability to sign transactions for you in all situations. Off-chain authorization frameworks that allow users to tap into the potential of AI agents without slipping up on security may become integral in allowing this tech to reach a large mass of users.

The Delegated Authorization Network: Empowering Humans in the Age of AI

Architecture

First, let’s start with the technical layout.

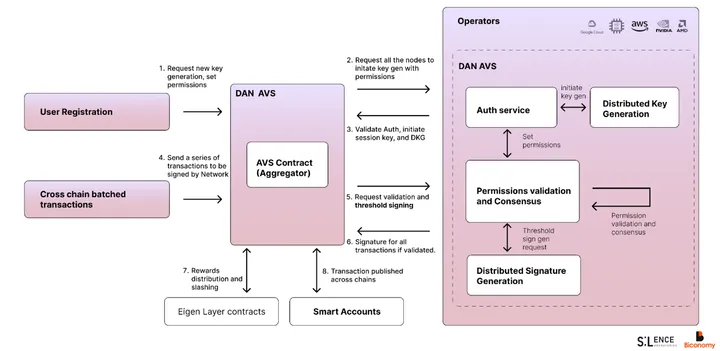

Biconomy, in conjunction with Silence Labs, has built what they call the Delegated Authorization Network (DAN). The DAN is an off-chain network built to enable users to configure how they authorize transactions. If you’re aware of what session keys are in the account abstraction context, you can think of this as an extension of that concept with greater security guarantees.

The DAN is set up as an EigenLayer AVS since it’s a simple P2P network with no block building component. While there is no need for block validation per se, there is an aspect of the network that requires economic security or bonding – node operations.

Node operators are entities running infra on behalf of those who have restaked ETH to EigenLayer. They are the central participants in the Biconomy AVS. The network uses a Threshold Signature Scheme (TSS) to offer MPC-grade security.

The previous iteration of session keys developed by Biconomy required user keys to be stored locally in their browsers in order to eliminate the need to sign every transaction inside of a particular session. Key storage handled in this way obviously raises security concerns.

With the DAN, user keys are not visible to the users themselves and are instead stored by the AVS’ node operators as fragments or shards. A user’s key is broken into TSS shards and encrypted. When the prescribed threshold of nodes signing off is reached, a valid signature is generated. The key shards are encrypted in a Trusted Execution Environment (TEE) and stored as cipher text (unreadable without decryption key) by each node.

When a new user registers on the DAN, a distributed key generation process is initiated to split the key up into shards, each of which is held by a single node operator. Every time the user requests a transaction, it is passed through the DAN where all nodes take part in a signing protocol to generate a valid signature for the user without revealing their key fragment (shard). Producing a valid signature is also contingent on the constructed transaction meeting the policy criteria set by the user – a concept covered in the next section.
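
The threshold property described here (any sufficiently large subset of nodes can act, no single node can) is easiest to see with Shamir secret sharing. The sketch below is a toy illustration only: it is not the DAN's protocol, it uses a trusted dealer rather than distributed key generation, and it reconstructs the secret, which a threshold signing protocol specifically avoids. The function names are hypothetical.

```python
import secrets

# Order of the secp256k1 group, used here only as a convenient large prime modulus.
Q = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141


def split(secret: int, threshold: int, n: int) -> list[tuple[int, int]]:
    """Split `secret` into n shares such that any `threshold` of them recover it."""
    # Random polynomial of degree threshold-1 whose constant term is the secret.
    coeffs = [secret % Q] + [secrets.randbelow(Q) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):  # Horner evaluation of the polynomial at x
            y = (y * x + c) % Q
        shares.append((x, y))
    return shares


def recover(shares: list[tuple[int, int]]) -> int:
    """Lagrange-interpolate the polynomial at x = 0 to get the secret back."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % Q
                den = (den * (xi - xj)) % Q
        secret = (secret + yi * num * pow(den, -1, Q)) % Q
    return secret
```

With `split(key, 3, 5)`, any three of the five shares recover `key`, while fewer than three reveal nothing about it. In a threshold signature scheme the nodes use their shares to jointly compute a signature instead of ever calling something like `recover`.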

Users can authenticate themselves using options like passkeys, email, and sign in with Ethereum (SIWE), which deviates from the previous norms of controlling authentication via Externally Owned Accounts (EOAs) only.

The MPC cryptography employed by the network is also designed to facilitate fast and efficient signature generation to ensure transactions don’t get held up by the DAN.

Virtually all of crypto runs on Elliptic Curve Cryptography. Bitcoin and Ethereum, for instance, use ECDSA over the secp256k1 curve. Chains like Solana, however, use EdDSA over Curve25519 (Ed25519), which does key generation much faster. Both of these tend to be effective with single-party computation, i.e. a user generating their own signature in MetaMask or Phantom.

When trying to layer on multi-party computation (MPC), the traditional method revolves around Paillier encryption. However, threshold ECDSA requires the parties to securely multiply secret values modulo the curve’s group order, while Paillier encryption works over a large composite modulus whose security rests on integer factoring. Bridging these two different kinds of arithmetic makes the protocol more expensive to compute, and thus introduces latency in the key generation and signing process.

Silence Labs built a DKLs23 implementation that uses Oblivious Transfer instead of Paillier encryption. Oblivious Transfer lets the parties perform the secure multiplication directly in the arithmetic that ECDSA uses, resulting in much faster key generation. For a network that is intermediating transactions – receiving constructed payloads and simply authorizing them with the user’s signature – latency reduction is crucial in ensuring this process does not hold up transaction finality. According to Silence Labs, this implementation reduces the computation time for keys from somewhere between hundreds of milliseconds and one second down to tens of milliseconds.
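
For readers who want to see where the multiplication comes from, the textbook ECDSA signing equations are shown below, with $d$ the private key, $k$ the per-signature nonce, $z$ the message hash, $G$ the curve generator, and $n$ the group order. The two-party additive sharing that follows is a simplification for intuition and is not a description of how DKLs23 works internally.

$$
r = (kG)_x \bmod n, \qquad s = k^{-1}\,(z + r\,d) \bmod n
$$

Computing $s$ involves a product of two secrets. If two parties hold additive shares $a = a_1 + a_2$ and $b = b_1 + b_2$ of any two secret values, then

$$
ab = (a_1 + a_2)(b_1 + b_2) = a_1 b_1 + a_2 b_2 + a_1 b_2 + a_2 b_1 \pmod{n}
$$

The first two terms can be computed locally, but the cross terms $a_1 b_2$ and $a_2 b_1$ mix secrets held by different parties. Evaluating them without revealing either input is the "secure multiplication" step, and it is this step that can be built from either Paillier encryption or Oblivious Transfer.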

To sum it all up:

- Nodes on the network post a bond in restaked ETH. This economic bond incentivizes them to act honestly or risk slashing.

- Nodes hold a fragment of every registered user’s key. They come together to participate in a distributed signing process when a user requests authentication for a transaction.

- Silence Labs has implemented a recent threshold signing protocol, DKLs23, that helps the network perform multi-party computation in a less complex, latency-minimized manner.

- Older versions of this concept, often referred to as session keys, used to store user keys locally in their browser. In the DAN, user keys are broken up into pieces and stored as cipher text by node operators who are economically bonded for higher security guarantees.

That’s a lot of technical meat, but the bottom line is that the AVS’ cryptographic setup is primed for low latency MPC signing and the ability for users to securely delegate their authority to execute transactions.

And with policy or permission management features, this opens up the door to users being able to set a mandate for what an AI agent can and cannot do.

Permission Management on the DAN

So far we’ve covered how the core tech works on a network level. But the really interesting bit is in permission management and how it can let users utilize third-party applications to automate their workflows.

We’ve established that LLMs, and thus agents, are prone to hallucinations which opens the door to possible “mismanagement” of capital in certain situations. Imagine a scenario where you use a consumer-grade AI agent for on-chain interactions to maximize yield on your stablecoin stack. The mandate you’ve set is fairly simple:

- Find the best risk-adjusted stablecoin yields across EVM chains and deploy stablecoins in an equal weighted manner across the top 5 venues.

- Money market pools your capital can be deployed to should have a utilization ratio lower than 75%. If utilization crosses 90% in a pool with your capital, withdraw funds immediately.
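
The utilization rule in this mandate is simple enough to write down directly. The sketch below is one possible reading of it, with hypothetical function names and return labels: a pool is eligible for new capital only below 75% utilization, and an existing position is withdrawn once utilization exceeds 90%.

```python
from decimal import Decimal

MAX_ENTRY_UTILIZATION = Decimal("0.75")  # only enter pools below this
EXIT_UTILIZATION = Decimal("0.90")       # withdraw once a pool crosses this


def utilization(total_borrowed: Decimal, total_supplied: Decimal) -> Decimal:
    """Share of supplied capital that is currently borrowed."""
    if total_supplied == 0:
        return Decimal("0")
    return total_borrowed / total_supplied


def decide(total_borrowed: Decimal, total_supplied: Decimal, has_position: bool) -> str:
    """Return 'withdraw', 'hold', 'eligible' or 'skip' for a single pool."""
    u = utilization(total_borrowed, total_supplied)
    if has_position:
        return "withdraw" if u > EXIT_UTILIZATION else "hold"
    return "eligible" if u < MAX_ENTRY_UTILIZATION else "skip"
```

For example, a pool with 80 borrowed against 100 supplied is skipped for new deposits but held if capital is already there, which matches the gap between the 75% entry and 90% exit thresholds.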

You set this up as a sub-account with partial control given to an AI agent. The obvious goal is to automate yield opportunity selection and generate an above-average net yield on your stablecoin holdings. In the most primitive scenario, the agent has the keys to this specific sub-account.

What happens if the LLM hallucinates and the agent decides to swap your stablecoins to ETH because the yield on Aave’s Arbitrum deployment is now 25%? What if it decides the highest risk-adjusted yield is to borrow USDC on Gearbox and deploy it all into Ethena? Or what if the LLM makes a mistake and the agent structures the transaction wrong to instead lend the lowest yield stablecoin and borrow the highest? What if the core methodology the LLM prescribes is wrong and it considers any token with a market price of $1.00 to be a stablecoin?

This is where permission management comes in – the ability to not just spell out a mandate (what to do) in natural language, but to also set specific limitations on the kinds of actions that can be taken (what not to do).

Leveraging the Biconomy-Silence DAN, users could hypothetically set the following parameters:

- Swapping can only be done between USDC, USDT, DAI, sUSD, sUSDe, USDe, and USDB.

- Leverage or borrow limits are $0. Sub-account cannot under any circumstances borrow capital to deploy.

- Arbitrum, Ethereum, Optimism, Blast, Avalanche, and Base are the only approved chains.

If the user utilizes the DAN for permission management, any transaction that doesn’t meet the specified criteria will not be authorized by the network, and thus will not be signed. The agent thus does not have the unilateral ability to pass transactions on the user’s behalf.
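
As a rough illustration of what such a check could look like, the sketch below encodes the three hypothetical parameters above and rejects any proposed transaction that violates them. The `ProposedTx` shape and the function names are assumptions made for this example; they are not the DAN's actual policy format or API.

```python
from dataclasses import dataclass
from decimal import Decimal

ALLOWED_TOKENS = frozenset({"USDC", "USDT", "DAI", "sUSD", "sUSDe", "USDe", "USDB"})
ALLOWED_CHAINS = frozenset({"arbitrum", "ethereum", "optimism", "blast", "avalanche", "base"})
MAX_BORROW_USD = Decimal("0")


@dataclass(frozen=True)
class ProposedTx:
    chain: str
    action: str  # e.g. "swap", "supply", "withdraw", "borrow"
    token_in: str
    token_out: str
    borrow_usd: Decimal = Decimal("0")


def violations(tx: ProposedTx) -> list[str]:
    """List every policy rule the proposed transaction breaks."""
    found = []
    if tx.chain not in ALLOWED_CHAINS:
        found.append(f"chain not approved: {tx.chain}")
    for token in (tx.token_in, tx.token_out):
        if token not in ALLOWED_TOKENS:
            found.append(f"token not approved: {token}")
    if tx.action == "borrow" or tx.borrow_usd > MAX_BORROW_USD:
        found.append("borrowing is not permitted")
    return found


def authorize(tx: ProposedTx) -> bool:
    """Sign-off is only possible when there are no violations."""
    return not violations(tx)
```

Under this policy, the hallucinated swap from stablecoins into ETH described earlier fails on the token allowlist, and the Gearbox borrow fails on the borrow limit, regardless of how the agent justified either action.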

It’s worth noting many agent frameworks also have in-built policy management. But given how intertwined that policy logic is with the agent itself, there is still a non-trivial risk of things slipping through. The implementation of policy management from an external, trust-minimized module that is solely built to authorize transactions adds an extra layer of guarantees.

Smart Accounts

Smart account infra is essential to the smooth functioning of consumer-grade AI agents. None of what has been laid out in terms of agent automation and usage flows is viable without smart accounts. With just an EOA, agents can help users decide what kinds of actions they should take, but there is no scope for them to actually facilitate the execution of transactions.

It’s important to note that the account controlled by the agent and the agent itself are two separate primitives. Smart accounts are the actual user controlled account that an agent can construct transactions for. Agents should be able to construct and submit these transactions on behalf of a user. But the agent has no role in the actual custodying of these assets, and as such there are base security guarantees that minimize mismanagement of capital.

All assets are held in, and all transactions originate from, the smart account. And using a smart account over a regular EOA paves the way for features like gas abstraction and building custom authentication flows.

It’s worth noting that the DAN and Smart Accounts under the Biconomy banner are also two separate products. So there is a route where a different kind of smart account product gains widespread adoption, but the DAN still succeeds as the authentication layer for users. Any smart account infra built on top of the Safe multi-sig (formerly Gnosis Safe) will be able to use the DAN for authentication.

Biconomy’s smart accounts and session keys are usable in production today, but only for dApps like Kwenta and RageTrade that have integrated the Biconomy SDK. The introduction of app-agnostic AI agents broadens the applied uses of smart accounts, making it viable to interact with any application on a supported chain.

Ultimately, the market will decide on what makes the most sense. Smart account infrastructure from Biconomy is being built with the agent-centric biz dev bet in mind, but there are numerous ways this can play out. Given the current usage of smart accounts, the rise of AI agents for on-chain interactions makes smart accounts the most relevant they have ever been.

A Concentrated Bet on AI Agents

There are broad applications of the DAN in on-chain environments. However, the core strategy around integrations and growing the network for Biconomy and Silence is centered around AI agents. The team behind the network believes agents will become an integral part of the crypto experience, and is making an early bet on building infrastructure that will allow users to seamlessly and securely interact with these products.

AI agents are still in a nascent stage. Between training models that are capable of generating reliable code for one or many blockchain virtual machines and building review mechanisms to make sure the right outputs are generated, there is still a lot of work to be done. But the path forward with AI agents and their impact on UX is starting to materialize. Anyone who spends a few days, or weeks, going down this rabbit hole recognizes the immense potential this kind of product holds.

While AI agents are the key to the long-term success for the network, they aren’t the only intended integrations. The core functionality of the DAN can be likened to a more secure version of session keys. In a nutshell, users could authorize a single signature that is valid for an entire session of usage. Session keys are a UX improvement for any on-chain platform that experiences user interactions in spurts.

Day traders on derivative exchanges can issue a single authorization and not have to manually sign any transactions till they log out of their DEX account. Gamers can play as many matches and make as many NFT/token trades over the course of a session with only one authorization at the beginning. Betting platforms, NFT marketplaces, and enterprise payroll apps are other examples of products that can improve their UX with session keys.

In the on-chain world, the need to sign every single transaction, and in many cases also sign approvals, is a massive pain point and time sink. Session keys through the DAN are a legitimate solution that enables users to conduct virtually unlimited transactions in a session without continuous signing, and to do so without large compromises on security.
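
The session model can be sketched as a single up-front grant that later requests are checked against. This is a deliberately minimal illustration with hypothetical names: it only scopes a session to one app and an expiry time, whereas a real deployment would also bind the session to the user's policy and to cryptographic authentication.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    user: str
    app: str           # the only app this session may act in
    expires_at: float  # unix timestamp after which the session is dead


def can_sign(session: Session, app: str, now: float) -> bool:
    """A request is covered if it targets the session's app before expiry."""
    return app == session.app and now < session.expires_at


def count_signed(session: Session, requests: list[tuple[str, float]]) -> int:
    """Count how many (app, timestamp) requests one authorization covers."""
    return sum(1 for app, ts in requests if can_sign(session, app, ts))
```

A day trader who opens a one-hour session on a derivatives DEX would have every order inside that hour covered by the single grant, while a request to a different app or after expiry would need a fresh authorization.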

Looking to the Future

In a recent essay, GoodAlexander laid out the thesis for “agentic protocols”. The main premise of this is the idea of developers creating agents who are responsible for protocol development and idea execution. Governance becomes the driver of what the agents need to do, and the agents – somewhat autonomously – create on-chain products.

Human intervention has been necessitated till now because:

- Protocols are in an early stage of development

- The LLM rush only kicked in late 2023

- There is widespread belief that human-engineered systems are more trustworthy

As AI models grow and become more resilient, the idea of agentic products and protocols draws closer and closer. A fully autonomous development system that is driven by humans via token governance. In many ways, this is the desired end-state all of us talk about when we say “decentralized, trust-minimized, and permissionless”. Autonolas, one of the projects building the infrastructure for AI agents, seems to be moving towards this vision too with their Open Autonomy Framework.

Of course, there is a long way to go before a vision like this is materially realized. Agents need to be able to understand what is being said in governance channels, ingest key context from successful proposals, and implement rigorous feedback loops to ensure the end product is as close to perfect as it can be.

This idea is the agent thesis taken to its theoretical limit i.e. AI can fully replicate — or even outperform — the outputs of an experienced and talented software engineer in a more efficient way. Continuing on this path, a key question is whether we expect LLM hallucinations to continue. And consensus seems to be yes, they will.

In a world where agents develop and execute code, it becomes clear that authentication and policy management (what it is allowed to do) will play a crucial role here too. And systems like the Delegated Authorization Network are a step in this direction. While the current functionality of the DAN and what it enables is limited to consumer use-cases, there is obvious scope for it to evolve and serve agentic protocols too.

Jumping back to the present, there hasn’t been this much excitement around a fresh approach to on-chain UX since perhaps the conceptualization of intents or the original account abstraction EIPs. It’s not something that’s expected to materialize in weeks or months though. Optimistically, we are probably 6-12 months out from users actually starting to use agents for their day-to-day operations. Realistically, it could take a couple of years to refine the experience into something that is objectively superior to everything else in the market.

Biconomy and Silence Labs are making a bet that AI will shape the future of crypto UX, while also acknowledging that this tech cannot be flawless – necessitating the existence of authentication infra.
