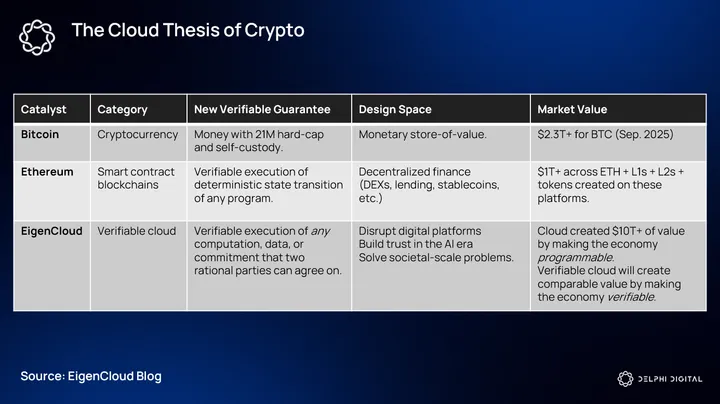

The Verifiable Cloud: How EigenCloud is Unlocking Crypto’s App and AI Era

OCT 06, 2025 • 29 Min Read

Report Summary

Key Takeaways

-

Programmability Cliff

-

Crypto has solved throughput (scaling), but programmability remains limited compared to Web2 cloud services.

-

EigenCloud aims to remove this barrier by providing verifiable, cloud-like infrastructure for crypto apps.

-

-

EigenCloud Stack

-

EigenDA → Durable, censorship-resistant storage (like AWS S3).

-

EigenVerify → Standardized modules for verifying correctness (objective + intersubjective).

-

EigenCompute → Verifiable containers for off-chain workloads (like AWS ECS/Lambda).

-

Together, they abstract validator management and enable modular, trust-minimized services.

-

-

Trust Dial

-

Developers can choose trust modes: Instant, Optimistic, Forkable, or Eventual, balancing speed, cost, and security.

-

This flexibility allows applications to align verification cost with their risk profile.

-

-

Economic Model

-

Dual-token: EIGEN (coordination, fork deterrence) and bEIGEN (bonded verification collateral).

-

Fees flow to ETH stakers (execution security) and bEIGEN verifiers (correctness guarantees).

-

“Fork as nuclear deterrent” ensures long-term incentive alignment.

-

-

Applications Unlocked

-

AI inference with verifiable outputs.

-

zkTLS & data oracles for secure data ingress.

-

Prediction markets with finality.

-

Sovereign AI agents with verifiable reasoning and execution.

-

-

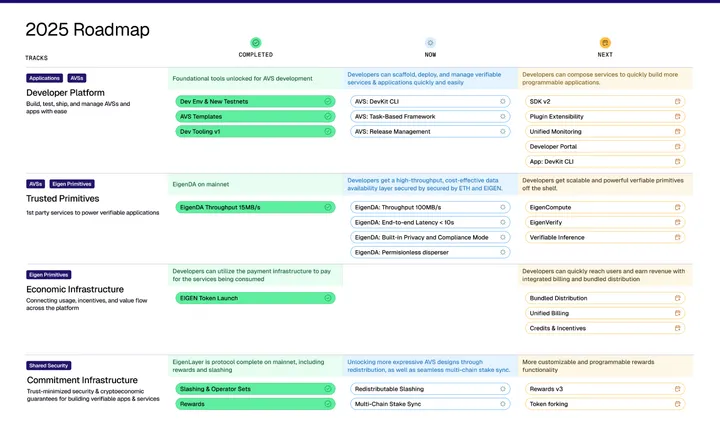

Roadmap

-

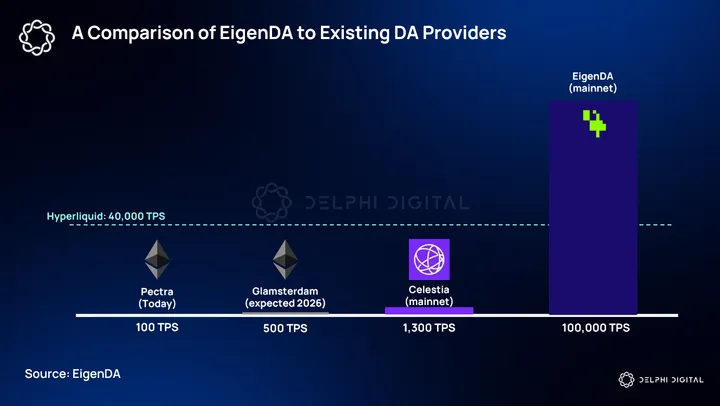

EigenDA v2 live with 100MB/s throughput (fastest DA solution).

-

EigenVerify & EigenCompute mainnet slated for Q3/Q4.

-

DevKit for AVS development launched on testnet.

-

Incentive revamp proposal to strengthen EIGEN staking rewards.

-

-

Competitive Positioning

-

Competes not directly with Celestia/Akash/Render individually but by offering a full verifiable cloud stack.

-

TAM is not commodity compute but verifiable workloads where correctness is more valuable than cheap cycles.

-

Summary

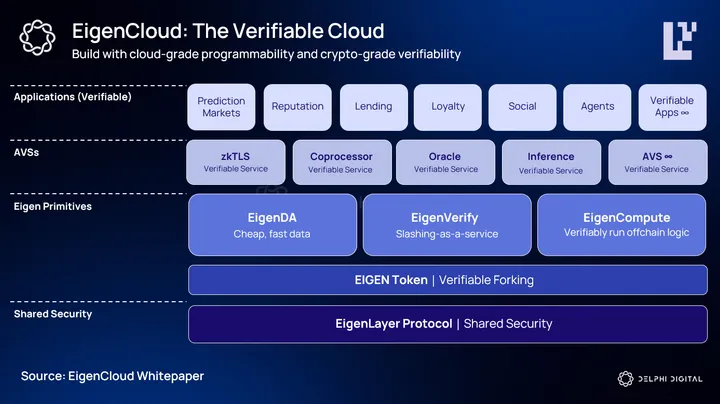

EigenCloud positions itself as crypto’s AWS moment—a verifiable cloud that abstracts infrastructure complexity while providing cryptographic guarantees. By combining EigenDA (storage), EigenVerify (dispute resolution), and EigenCompute (verifiable execution), it enables developers to deploy scalable, trust-minimized applications without managing validator sets or building bespoke security.

The system introduces a flexible trust model, letting developers choose between speed and security, and is underpinned by a dual-token economy (EIGEN + bEIGEN) designed to secure both execution and verification.

This unlocks powerful new application domains—verifiable AI inference, zkTLS-secured data feeds, finality-grade prediction markets, and sovereign AI agents. With nearly $20B TVL already secured in EigenLayer and strong operator adoption, EigenCloud is well positioned to become the foundational layer of the verifiable economy, much like AWS catalyzed the Web2 era.


Prelude – From On-Prem Chains to Cloud-Grade Verifiability

Blockchains today look a lot like the internet of the early 2000s: powerful and secure, yet rigid. In that era, building an internet application meant racking your own servers, managing your own networking, and maintaining uptime the hard way. Developers typically leased dedicated servers or rented rack space in co-location centers from companies like Rackspace and Equinix.

Then came the public cloud. AWS abstracted away the physical layer, gave developers a host of purpose-built services, and made scalable infrastructure accessible to anyone. The result was an explosion of new application categories, from Airbnb to Stripe, and a $10 trillion wave of market cap creation.

The evolution of blockchain development is following a similar arc. In its first decade, we saw two major eras: the age of app-specific chains like Bitcoin and Namecoin (bespoke security, custom validator sets) and the rise of general-purpose L1s like Ethereum and Solana, where developers built on top of pre-existing security and infrastructure. Each era expanded the surface area of what could be built, but both still required teams to manage core pieces of blockchain infrastructure themselves.

To unlock the next wave of applications, especially those outside the financial domain, crypto needs its cloud moment. A platform where developers can access “rentable” verifiable compute, pair it with an expressive set of verifiable services, and deploy without ever touching a validator set or spinning up a chain. This means data availability, settlement, off-chain execution, and even AI inference are all provisioned as modular, trust-minimized services.

EigenLayer was the first step, giving developers on-demand access to a global network of restaked operators and the cryptoeconomic security of the EIGEN token. With EigenCloud, that foundation expands into a full-stack, crypto-native cloud: a rich catalog of third-party AVSs, built-in primitives like EigenDA, EigenVerify, and EigenCompute, and the ability to compose them like AWS services.

The result is a verifiable cloud, where the guarantees of crypto converge with the usability of cloud computing. Just as the public cloud was a huge unlock for the web economy, the verifiable cloud is a huge potential unlock for a trust-based economy.

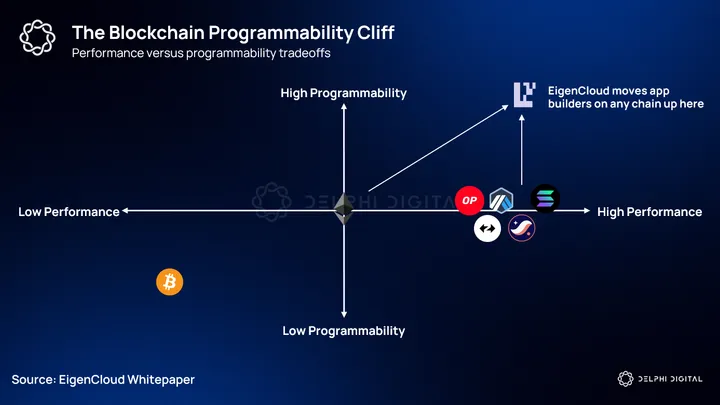

The Programmability Cliff

Crypto has made significant progress on throughput. Over the last decade, the scaling bottleneck that defined early blockchain design has largely been addressed via high-throughput L1s, rollups, modular DA layers, and ever-more efficient proof systems. Yet even though blockchains can now process orders of magnitude more transactions, the range of applications built on them remains narrow.

The real limiting factor is not block space but programmability. In Web2, most cloud workloads began as existing applications copied over more or less as-is. That kind of migration was impossible in Web3, until now.

If we zoom out, programmable infrastructure is what made the modern internet economy possible. AWS gave developers the ability to connect to any API, provision any hardware, and compose managed services like databases, identity providers, or machine learning pipelines without rebuilding them from scratch. From social networks to streaming platforms, this flexibility is why every category of modern software still runs on the public cloud.

But blockchains still operate on the narrowest possible canvas when compared to modern cloud services today. Their execution environments are intentionally deterministic, their hardware is uniform, and their consensus rules are fixed at the protocol level. These constraints are necessary for trust, but they come at a steep cost, shrinking the design space to a sliver of what’s possible in conventional computing. This is why crypto’s killer apps have, so far, clustered almost entirely around the domain of finance – where deterministic logic and self-contained state work best.

This “programmability cliff” is what prevents crypto from expanding into the broader software economy. The moment a developer needs a GPU, a TEE, low-latency external data, or even a socially-interpreted outcome, the current stack forces them off-chain. And once logic leaves the chain, trust in that computation is gone.

EigenCloud’s ambition is to make crypto more programmable; to become crypto’s cloud moment which enables the verifiable economy.

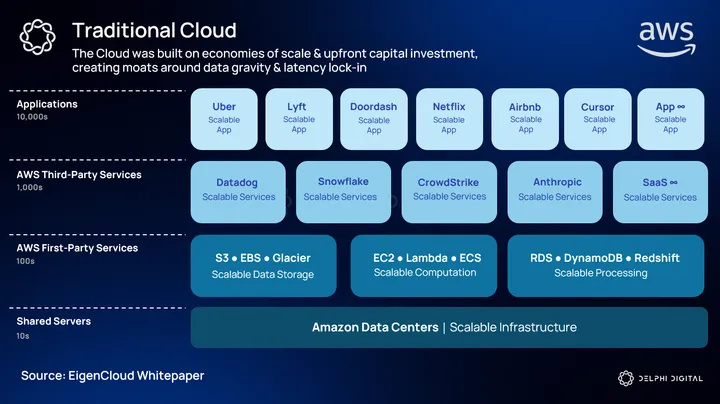

Web2 Parable

Before the cloud, building on the internet was as much an infrastructure problem as it was a software problem. Scaling up was a gamble: you either over-provisioned and wasted money, or under-provisioned and fell short if your app gained any reasonable momentum.

AWS broke this cycle in 2006 with the launch of Elastic Compute Cloud (EC2) and Simple Storage Service (S3). EC2 turned compute into a metered utility: virtual machines you could spin up or shut down on demand. S3 did the same for storage, offering an infinitely scalable object store without a single developer needing to touch a physical disk. Together, they abstracted away the headaches of infrastructure and let developers focus entirely on the application layer.

The impact was immediate. By removing capital expenditure, operational complexity, and capacity risk, AWS unlocked application categories that simply weren’t viable before. The barrier to entry for launching a web app plummeted, and the long tail of software flourished. Niche SaaS products and globally-scaled marketplaces all became viable because infrastructure friction had been abstracted away.

A small distinction I want to make here concerns capex. AWS removed upfront capital expenditure on hardware; EigenCloud, to be more precise, abstracts away the prohibitive cost of running intensive workloads on-chain. Instead of burning gas per FLOP, you can move the heavy logic off-chain while still retaining crypto-level verifiability. The two differ in that respect, but the analogy holds: both abstract away the friction of deploying a wider range of applications.

There was also a strategic inversion with AWS. In the pre-cloud world, infrastructure dictated what was possible. In the cloud era, infrastructure bent to the needs of the application. AWS’s service expanded far beyond compute and storage into databases, analytics, identity management, and machine learning, making it possible for small teams to stand on the shoulders of enterprise-grade systems without owning them.

The AI–Agent Collision Course

Web2 systems are already plagued by opacity. We rely on reputation, trust-in-brand, or regulatory oversight because we can’t independently verify what happens inside centralized infrastructure. Now, what happens when you layer a rapidly developing technology like AI onto that, more specifically, agents?

The fundamental problem of agents without verifiability is that they collapse the trust model of automation. If you cannot verify how an agent reached its decision, you cannot trust it to operate autonomously on your behalf. Unlike human-mediated systems, agents won’t pause for review before executing (unless explicitly instructed to).

The risk compounds as agents move from “decision support” into “decision execution.” In a chatbot setting, unverifiable reasoning might mean a hallucinated answer. But in a trading agent, unverifiable reasoning could mean a mispriced arbitrage, a manipulated oracle, or an adversarial exploit. Once assets, legal commitments, or even human life are on the line, the inability to verify the correctness of an agent’s output makes it impossible to assign accountability or guarantees. The risk far outweighs the automation.

At a systemic level, unverifiable agents force us back into Web2-style silos.

Let’s consider the following example:

An AI system is tasked with adjudicating a car crash between Geico and Allstate. Both insurers submit evidence (photos, witness data, police reports), and the AI produces a determination of fault. If that output cannot be independently verified, then neither side can trust the process. One insurer may suspect hidden bias, tampered inputs, or misapplied logic. In high-stakes domains like insurance, unverifiable computation is indistinguishable from arbitrary judgment. Trust collapses, not because the AI is wrong, but because its correctness cannot be proven.

However, the challenge isn’t limited to trust; it is also one of practicality. AI workloads are orders of magnitude more computationally intensive than the typical onchain transaction. Even inference for modest models involves billions of floating-point operations and gigabytes of parameters. Running that onchain is not just inefficient, but economically and technically impossible under current blockchain constraints. Without off-chain verifiability, these workloads remain stranded outside the cryptoeconomic security envelope, confined to opaque, reputation-based Web2 systems.
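To put rough numbers on "billions of floating-point operations and gigabytes of parameters", here is a back-of-the-envelope sketch. The function name and the example model size are illustrative, and the two constants are common rules of thumb for dense models (16-bit weights, about two floating-point operations per parameter per generated token), not measurements of any particular system.

```python
def inference_footprint(params: int, tokens: int, bytes_per_param: int = 2) -> tuple[int, int]:
    """Return (weight_bytes, forward_pass_flops) for a dense model.

    Assumptions (rules of thumb, not measurements):
    - 16-bit weights, i.e. 2 bytes per parameter
    - roughly 2 floating-point operations per parameter per generated token
    """
    weight_bytes = params * bytes_per_param
    flops = 2 * params * tokens
    return weight_bytes, flops


# A hypothetical 1-billion-parameter model generating 100 tokens.
weight_bytes, flops = inference_footprint(params=1_000_000_000, tokens=100)
print(f"weights: {weight_bytes / 1e9:.1f} GB, compute: {flops / 1e9:.0f} GFLOPs")
# prints "weights: 2.0 GB, compute: 200 GFLOPs"
```

Even at this modest scale, a single short response needs gigabytes of state and hundreds of billions of operations, which is the gap the paragraph above describes.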

EigenLayer: Restaking’s Incomplete Revolution

Before we dive into EigenCloud, it’s worth recapping the shared security and restaking component of EigenLayer.

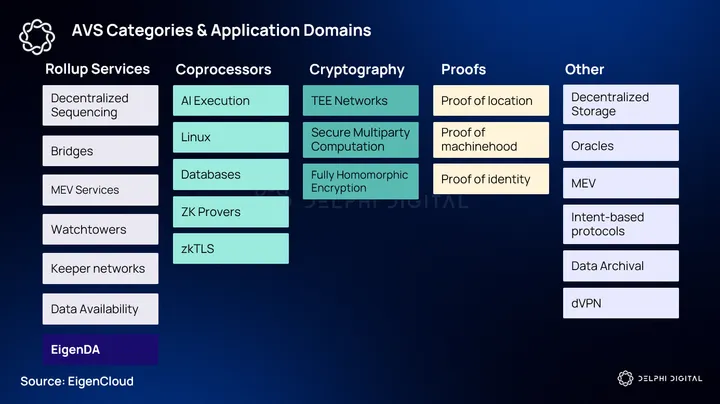

EigenLayer is often framed as a way to “extend Ethereum’s trust” beyond its native consensus through restaking – allowing ETH stakers to reuse their existing staked capital to secure new and previously impossible services known as Autonomous Verifiable Services (AVSs).

On paper, this is a step-change in blockchain architecture. Rather than spinning up a new L1 with a bespoke validator set or a fragile PoS token, builders can tap into Ethereum’s existing validator set, inheriting its economic security without re-creating consensus.

But the reality is much more nuanced. Restaking does not magically extend Ethereum’s trust guarantees to any arbitrary computation, but rather creates an opt-in security model where validators voluntarily enforce the rules of the AVS. The security is not uniform across services; it depends on validator participation, AVS slashing logic, and the strength of cryptoeconomic incentives. In other words, “shared security” in EigenLayer is not the same as “Ethereum security” in the L1 sense.

Slashing can incentivize correct behavior, but that is still probabilistic enforcement: an AVS that cannot produce a cryptographic proof of correctness is asking participants to accept some level of trust in validator honesty. “Stake without verifiability” is still beholden to the programmability cliff. Restaking alone is powerful but incomplete, because it scales validator participation without resolving how arbitrary computation can be made provably correct.

EigenCloud was designed precisely to resolve this mismatch. It introduces a framework where AVSs can not only inherit Ethereum’s staked security but also tap into scalable, off-chain verifiability, moving EigenLayer closer to what could be interpreted as a complete trust model.

Birth of EigenCloud – Design Goals & Stack

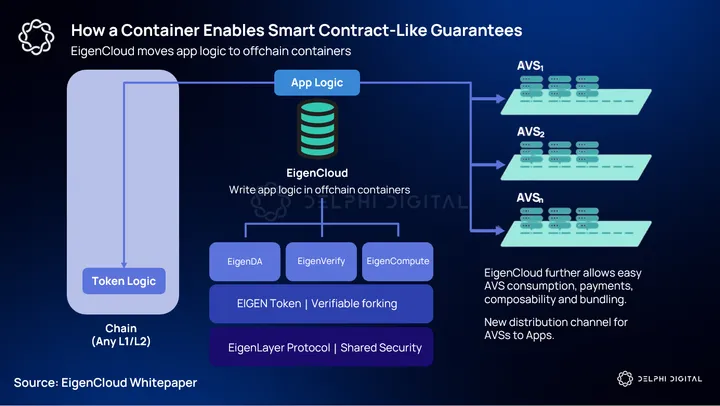

EigenCloud extends EigenLayer from an infrastructure protocol into a true developer platform. If EigenLayer solved the cost of bootstrapping security for AVSs, EigenCloud solves the cost of building and consuming them.

EigenCloud provides the components that let developers build cloud-scale applications with the same ease as AWS, but backed by cryptographic guarantees instead of opaque trust in a provider. The framing here is important. Just as AWS abstracted away physical servers and let developers think in terms of elastic compute, EigenCloud abstracts away validator sets, slashing logic, and raw operator coordination.

The suite is composed of three components: EigenDA, EigenVerify, and EigenCompute. Each corresponds to a pain point developers face when attempting to deploy verifiable offchain services. These pain points include:

- Publishing and persisting data

- Adjudicating correctness

- Containerizing computation

Let’s break down each of these components:

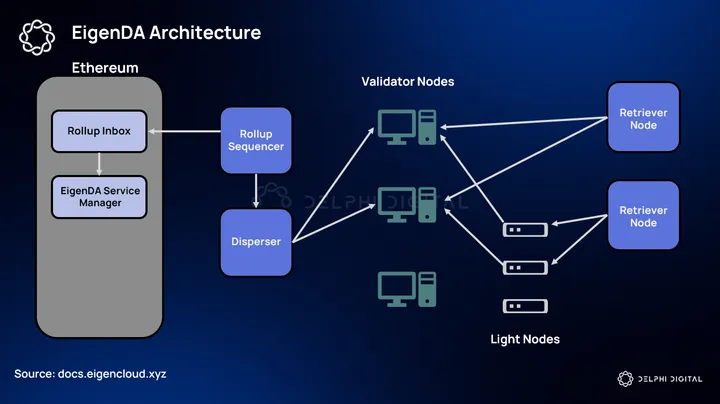

EigenDA plays the foundational role, akin to S3 in AWS. Offchain verifiability depends on the ability to publish inputs and outputs to, and retrieve them from, a medium that is durable, auditable, economically secure, and censorship-resistant. Without a hyperscale DA layer, proofs and fraud challenges would be bottlenecked by Ethereum’s data throughput and gas costs. EigenDA solves this by offering transparent, low-cost, censorship-resistant storage for proof objects, fraud logs, rollup transaction data, and large offchain datasets.

The most significant unlock here is that developers no longer need to make trade-offs between economic security and cost. If an application publishes data to EigenDA, any observer can reconstruct, verify, or challenge the associated computation. In practice, this means an AI model inference log, a zk-proof artifact, or a fraud proof trace can be made universally accessible without bloating Ethereum.

In our cloud provider analogy, EigenDA becomes to EigenCloud what S3 buckets are to AWS.

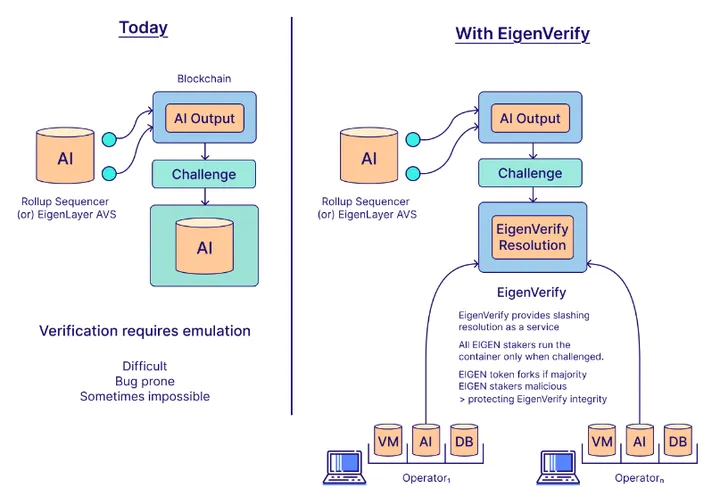

EigenVerify is the enforcement layer, the equivalent of a court system for dispute resolution. It works by enabling workloads to be re-executed in order to adjudicate whether they were run correctly. The crux of building AVSs has always been the complexity of writing custom fraud proof logic for every new workload. Unfortunately, not all tasks are created equal. Some, like double-signing, are trivial to detect, but others, such as verifying a complex AI inference or a custom rollup VM, have historically been prohibitively hard to encode into onchain contracts.

EigenVerify abstracts this problem by offering standardized modules for both objective verification (re-executing deterministic code to check correctness) and intersubjective verification (adjudicating outcomes humans can agree on, like prediction markets).

Objective verification is for cases where correctness is purely deterministic. If the service outputs a result (a zk-SNARK proof, or a price feed), anyone should be able to recompute or re-verify the output given the same inputs.
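As a minimal sketch of what "recompute given the same inputs" means in practice, the snippet below re-runs a deterministic task and compares a hash of the result with the operator's claimed commitment. The helper names (`commit`, `objectively_verify`, `median_price`) are hypothetical and are not EigenVerify's API; a SHA-256 hash of JSON stands in for whatever commitment scheme a real service would use.

```python
import hashlib
import json
from typing import Any, Callable


def commit(value: Any) -> str:
    """Hash a JSON-serializable value into a hex commitment."""
    encoded = json.dumps(value, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def objectively_verify(task: Callable[[Any], Any], inputs: Any, claimed: str) -> bool:
    """Re-execute a deterministic task and compare output commitments."""
    return commit(task(inputs)) == claimed


def median_price(quotes: list[float]) -> float:
    """Toy deterministic workload: the median of a list of price quotes."""
    ordered = sorted(quotes)
    mid = len(ordered) // 2
    if len(ordered) % 2:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2


quotes = [101.0, 99.5, 100.5]
honest_claim = commit(median_price(quotes))  # operator reports the true median
dishonest_claim = commit(105.0)              # operator reports an inflated price

print(objectively_verify(median_price, quotes, honest_claim))     # True
print(objectively_verify(median_price, quotes, dishonest_claim))  # False
```

The check only works because the task is deterministic: two honest parties running the same code on the same inputs must arrive at the same commitment.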

Intersubjective verification is for services that can’t be reduced to deterministic correctness. For example, a prediction market payout, or a content moderation decision involving “human consensus”. In these examples, people must agree on what the “correct” outcome is. EigenVerify standardizes this via intersubjective verification: fork-choice rules, challenge mechanics, and token-holder adjudication pathways.

Source: EigenCloud Whitepaper

By anchoring this service to slashable stake and an EIGEN token forking mechanism, EigenVerify introduces a system where disputes can be raised easily and collusion by the verifying set is punished at a nuclear level, which we will cover under the EIGEN tokenomics.

EigenCompute completes the trifecta by giving developers the abstraction layer to run arbitrary workloads as if they were deploying containers in AWS ECS or Lambda. Developers package application logic into verifiable containers, which are then run by EigenLayer operators, secured by staked capital, logged through EigenDA, and adjudicated through EigenVerify if challenged – similar guarantees to that of smart contracts (verifiable, forced inclusion, etc.).

Now, aside from verifiable off-chain computation, the more interesting unlock comes when you start composing services on top of EigenCloud. Because EigenCompute containers are verifiable and logged through EigenDA, they can be chained together, almost like microservices in Web2 cloud, but with each step individually verifiable.

For example:

A service might use EigenDA as its storage layer → call into an ML inference container on EigenCompute → pass the result through EigenVerify for objective adjudication.

Instead of treating verifiability as something only enforced at the final output, each service in the chain is checkpointed and subject to EigenLayer’s dispute/forking logic.
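One way to picture per-step checkpointing is a pipeline that records an input digest and an output digest for every stage, so a challenger can re-run any single stage in isolation. This is a sketch under assumptions: the `Checkpoint` record, the `run_pipeline` and `recheck` helpers, and the three toy stages are all hypothetical, and in-process function calls stand in for EigenDA reads, EigenCompute containers, and EigenVerify adjudication.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Any, Callable


def digest(value: Any) -> str:
    """SHA-256 over a canonical JSON encoding (a stand-in commitment)."""
    return hashlib.sha256(json.dumps(value, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Checkpoint:
    step: str
    input_digest: str
    output_digest: str


Step = tuple[str, Callable[[Any], Any]]


def run_pipeline(steps: list[Step], initial: Any) -> tuple[Any, list[Checkpoint]]:
    """Run each stage in order and record a checkpoint per stage."""
    value, checkpoints = initial, []
    for name, fn in steps:
        output = fn(value)
        checkpoints.append(Checkpoint(name, digest(value), digest(output)))
        value = output
    return value, checkpoints


def recheck(fn: Callable[[Any], Any], step_input: Any, checkpoint: Checkpoint) -> bool:
    """Re-execute one stage and compare against its recorded checkpoint."""
    return (digest(step_input) == checkpoint.input_digest
            and digest(fn(step_input)) == checkpoint.output_digest)


def read_features(blob: dict) -> list[float]:  # stand-in for a storage read
    return blob["features"]


def toy_inference(features: list[float]) -> float:  # stand-in for an ML container
    return sum(features) / len(features)


def decide(score: float) -> bool:  # stand-in for downstream application logic
    return score > 0.5


steps = [("read", read_features), ("infer", toy_inference), ("decide", decide)]
result, checkpoints = run_pipeline(steps, {"features": [0.2, 0.9, 0.7]})
print(result, [c.step for c in checkpoints])
```

Because each checkpoint's output digest equals the next checkpoint's input digest, a dispute can be narrowed to the one stage whose re-execution disagrees, rather than replaying the whole chain.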

The Trust Dial

Rather than being locked into a single security model (like the rigid finality of Ethereum L1 or the probabilistic finality of rollups), EigenCloud can be viewed as a continuum of trust. Each mode represents a different point on the spectrum of trade-offs between latency, liveness, and security, effectively creating an API for risk.

Instant → Optimistic → Forkable → Eventual

At one extreme, instant trust allows an application to act on results immediately, treating outputs as final with no verification overhead. Here, developers rely primarily on EigenDA with minimal delay. The security trade-off is that no verification or slashing is built in during this window. If the data is later challenged, however, the system may effectively roll back.

With optimistic trust, EigenCompute executes the workload, results are published to EigenDA, and EigenVerify lies in wait as a dispute-resolution guard. Outputs are assumed valid unless challenged within a grace period. If an error is flagged (via re-execution or a challenge), EigenVerify triggers slashing and rollback as needed.
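The optimistic flow can be sketched as a small state machine: a result is pending while its grace period is open, becomes final if nobody objects, and becomes disputed if a challenge lands in time. The class name, the status strings, and the one-hour window below are assumptions for illustration, not parameters taken from EigenCloud.

```python
from dataclasses import dataclass


@dataclass
class OptimisticResult:
    output_digest: str
    published_at: int        # unix seconds when the result was published
    challenge_window: int    # grace period in seconds (hypothetical value)
    challenged: bool = False

    def status(self, now: int) -> str:
        """Return 'disputed', 'pending', or 'final' at time `now`."""
        if self.challenged:
            return "disputed"
        if now < self.published_at + self.challenge_window:
            return "pending"
        return "final"

    def challenge(self, now: int) -> bool:
        """Accept a challenge only while the window is still open."""
        if self.status(now) != "pending":
            return False
        self.challenged = True
        return True


unchallenged = OptimisticResult("abc123", published_at=0, challenge_window=3600)
print(unchallenged.status(now=10))     # pending
print(unchallenged.status(now=3600))   # final

contested = OptimisticResult("def456", published_at=0, challenge_window=3600)
print(contested.challenge(now=100))    # True
print(contested.status(now=5000))      # disputed
```

What happens after a result is marked disputed (re-execution, slashing, rollback) is deliberately left out; the sketch only shows how the grace period gates finality.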

Forkable trust leverages both the EIGEN token’s forkability and onchain observability of contested forks. For workloads with subjective outcomes (e.g., prediction markets), social consensus or governance mechanisms resolve disputes by optionally triggering a token fork if consensus deviates. Applications, chains, or services can pre-commit to fork alongside EIGEN in the case this happens, ensuring they follow the “canonical outcome.”

At the maximal-security end of the spectrum sits eventual trust, where all components of EigenCloud play an active role. Workloads are fully adjudicated through cryptographic proofs, social consensus, and economic enforcement via slashing or forking. Essentially, eventual trust starts to approximate traditional blockchain security, but with off-chain scale and verifiability.
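To make the "API for risk" framing concrete, the sketch below models the four modes as an enum and picks one from a workload's risk profile. The article does not describe a selection policy, so the `Workload` fields, the dollar thresholds, and the ordering of the rules are all hypothetical; treat this as one plausible way a team might encode its own policy.

```python
from dataclasses import dataclass
from enum import Enum


class TrustMode(Enum):
    INSTANT = "instant"
    OPTIMISTIC = "optimistic"
    FORKABLE = "forkable"
    EVENTUAL = "eventual"


@dataclass(frozen=True)
class Workload:
    value_at_risk_usd: float
    subjective_outcome: bool   # e.g. a prediction market resolution
    tolerates_rollback: bool   # can the app undo actions taken on bad data?


def choose_trust_mode(
    w: Workload,
    low_risk_usd: float = 1_000.0,        # hypothetical threshold
    high_risk_usd: float = 1_000_000.0,   # hypothetical threshold
) -> TrustMode:
    """Map a workload's risk profile to a point on the trust dial."""
    if w.value_at_risk_usd >= high_risk_usd:
        return TrustMode.EVENTUAL      # pay for full adjudication
    if w.subjective_outcome:
        return TrustMode.FORKABLE      # needs intersubjective resolution
    if w.tolerates_rollback and w.value_at_risk_usd <= low_risk_usd:
        return TrustMode.INSTANT       # act now, accept rollback risk
    return TrustMode.OPTIMISTIC        # default: valid unless challenged


print(choose_trust_mode(Workload(50.0, False, True)).value)        # instant
print(choose_trust_mode(Workload(50_000.0, False, False)).value)   # optimistic
print(choose_trust_mode(Workload(50_000.0, True, False)).value)    # forkable
print(choose_trust_mode(Workload(5_000_000.0, True, False)).value) # eventual
```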

How a Container Becomes a Smart Contract

One of the main components that allows EigenCloud to provide cloud-grade programmability while maintaining crypto-grade verifiability is the ability for developers to deploy an offchain container for specific application logic.

The process is quite conventional. A developer deploys a container (Docker/Nomad/Kubernetes) that includes the service logic into EigenCompute. Unlike a standard cloud deployment, this container does not run in isolation; its execution is collateralized and enforced by restaked operators.

This should not be confused with an AVS, however. A container is just the packaged runtime for the code (the service logic, dependencies, binaries). It’s simply the deployable unit backed by stake.

An AVS only comes into existence when that staked container is protocolized, meaning you’ve specified:

- Service rules – how tasks are scheduled, how results are validated, and what constitutes misbehavior.

- Slashing conditions – the contract logic that makes operator collateral slashable for faults.

- Verification layer – fraud proofs, ZK proofs, quorum signatures, or any other validation scheme.

- Onchain anchoring – the mechanism by which outputs are posted back to Ethereum (or another chain) and recognized as final.

Container = building block. AVS = container + EigenLayer rules + crypto-economic enforcement.
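The distinction can be expressed as a data structure: a container is just an image reference, while an AVS is that container plus the four pieces listed above. The `Container` and `ServiceSpec` types and their field names are hypothetical and exist only to illustrate the checklist; they do not mirror any EigenLayer contract or SDK type.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Container:
    image_digest: str  # pins the exact code and dependencies being run


@dataclass
class ServiceSpec:
    container: Container
    service_rules: Optional[str] = None        # scheduling, validation, misbehavior
    slashing_conditions: Optional[str] = None  # what makes collateral slashable
    verification_scheme: Optional[str] = None  # fraud proofs, ZK proofs, quorums...
    onchain_anchor: Optional[str] = None       # where outputs are posted as final

    def missing_pieces(self) -> list[str]:
        """Names of the protocol pieces that have not been specified yet."""
        required = {
            "service_rules": self.service_rules,
            "slashing_conditions": self.slashing_conditions,
            "verification_scheme": self.verification_scheme,
            "onchain_anchor": self.onchain_anchor,
        }
        return [name for name, value in required.items() if not value]

    def is_avs(self) -> bool:
        """A container only counts as an AVS once every piece is defined."""
        return not self.missing_pieces()


bare = ServiceSpec(Container("sha256:placeholder"))
print(bare.is_avs(), bare.missing_pieces())

full = ServiceSpec(
    Container("sha256:placeholder"),
    service_rules="tasks assigned round-robin; results need quorum",
    slashing_conditions="slash on output mismatch after re-execution",
    verification_scheme="optimistic fraud proof",
    onchain_anchor="Ethereum settlement contract",
)
print(full.is_avs())  # True
```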

Once the container is live, EigenCompute automatically initiates a stake-matching process. Validators and operators who choose to support the service allocate restaked ETH or LSTs as collateral, effectively underwriting the container’s correct execution. The matching is not arbitrary; it ensures that sufficient stake backs the service relative to its economic and computational profile. From here, the container is no longer just “compute in the cloud” but a verifiable service with economic guarantees (slashing logic and challenge-response rules).

As the container executes, proofs are generated depending on the trust mode defined by the developer. This could involve validity proofs, optimistic proofs with a dispute window, or cryptographic attestations. Once these proofs are propagated through EigenVerify, other nodes can validate the container’s outputs without re-executing the entire workload.

The critical point here is that execution is decoupled from verification. A container may run heavy computation off-chain, but its results are made lightweight and trust-minimized through cryptographic proofs.

Traditionally, if you wanted to deploy an off-chain service in crypto (oracles, coprocessors, zk-proofs, or AI inference), you had two choices:

- Spin up your own operator set and bootstrap trust (capital-intensive and highly fragmented)

- Try to jam the workload directly onchain (expensive, throughput-limited, impractical for heavy compute)

Deploying a container lets developers package these arbitrary workloads in a standardized unit that can plug directly into EigenLayer’s restaking framework. The concept of a container itself isn’t new, but the novelty is that EigenCompute binds that container to Ethereum-backed collateral and a verification pipeline.

This unlocks composability at the middleware layer. A developer can ship a container and immediately inherit Ethereum’s security via stake-matching. That container can then expose verifiable APIs, participate in challenge-response games, and settle results onchain. All of this happens without requiring downstream users to trust the developer’s infrastructure.

Developer UX

With EigenCloud, developers aren’t just renting infrastructure; they’re also paying for the economic security that their apps need. Through a “pay-as-you-stake” model, costs scale with the security margin, that is, how much economic collateral your app chooses to back its offchain logic with. This means if you deploy a container for something low-stakes (for example, a non-financial AI inference), you can opt for minimal stake backing, effectively saving resources. On the other hand, if you’re delivering high-trust components for settlement or compliance logic, you can dial up the stake to the level of assurance you need.

The important part is that you control the capitalization risk curve, and you aren’t paying for more than your service requires.
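A simple way to reason about this curve is to size the stake as a multiple of the value at risk and then price that stake over time. The article gives no pricing formula, so the linear model, the `security_margin` multiplier, and the annual rate below are all assumptions chosen for illustration.

```python
def required_stake(value_at_risk: float, security_margin: float) -> float:
    """Collateral needed so that stake = margin x value at risk (assumed model)."""
    if value_at_risk < 0 or security_margin <= 0:
        raise ValueError("value_at_risk must be >= 0 and security_margin > 0")
    return value_at_risk * security_margin


def security_cost(stake: float, annual_rate: float, days: int) -> float:
    """Cost of renting `stake` for `days` at a hypothetical annual rate."""
    return stake * annual_rate * days / 365


# Low-stakes inference service vs. a settlement component (hypothetical figures).
low = required_stake(value_at_risk=1_000.0, security_margin=1.5)
high = required_stake(value_at_risk=2_000_000.0, security_margin=1.5)

print(f"low-stakes:  stake {low:,.0f}, 30-day cost {security_cost(low, 0.05, 30):,.2f}")
print(f"high-stakes: stake {high:,.0f}, 30-day cost {security_cost(high, 0.05, 30):,.2f}")
```

Under this model the cost grows linearly with the collateral, which is the sense in which a low-stakes service avoids paying for security it does not need.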

On top of that, developers aren’t forced to build everything from scratch. A huge UX win comes from the ability to consume and compose off-the-shelf AVSs. These are what you might think of as “verifiable microservices”, such as DA layers, rollups, oracles, and AI inference applications.

The Web2 analog for consuming AVSs is importing SaaS libraries. You delegate the underlying complexity, adoption is faster, and you inherit a composable and secure service without rebuilding the operator/delegation stack.

Economics of Verifiability

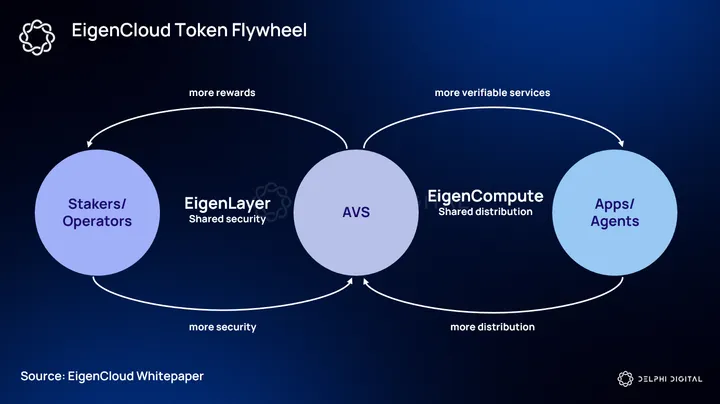

The EigenLayer token design is built around the idea that verifiability requires someone to underwrite the cost of detecting, challenging, and punishing misbehavior in a system where computation is externalized. To solve this, EigenLayer introduces a dual-token model (EIGEN and bEIGEN) that anchors both security and verifiability.

The base token, EIGEN, functions as the native asset of the system and the resource that defines the credible commitment of EigenLayer as a protocol. A portion of EIGEN can be locked into its bonded form, bEIGEN, which represents collateral staked explicitly to back verification.

This distinction is critical. While ETH (or LSTs) restaked into EigenLayer secures the execution of AVSs, bEIGEN secures their verifiability. It’s what ensures that proofs, challenges, and attestations carry enforceable weight, since operators holding bEIGEN can effectively be slashed for failing to verify or for colluding in inaccurate outcomes.

From here, the “fork-as-nuclear-deterrent” mechanism comes into play. The idea is that the system must always preserve the option of forking EigenLayer in the event of catastrophic consensus failure or systemic collusion. This threat creates an incentive alignment, where if operators or tokenholders corrupt verification markets, the community can credibly fork the token, leaving malicious actors holding worthless EIGEN while honest participants migrate their stake. The deterrent effect of forking is therefore embedded directly into the token’s value proposition: EIGEN only accrues long-term value if it remains fork-resistant.

I’ll caveat this by mentioning that passive EIGEN holders do not have to participate or even be aware of forking consensus. The design goal is that only those who actively bonded (bEIGEN) or otherwise participated in securing/verifying services need to worry about forks. The fork mechanism should also be considered as a last resort option.

On the revenue side, fee flows are designed to reinforce this feedback loop. Every containerized service deployed through EigenCompute/EigenCloud generates fees, either from developers deploying workloads or from applications/users consuming verified outputs.

A portion of these fees flows to operators who stake ETH/LSTs to secure execution, while another portion flows to bEIGEN verifiers who guarantee correctness. The net result is that bEIGEN accrues value as the “verification collateral” of the network, while EIGEN accrues meta-value as the coordination layer that enforces the system’s credible commitments (fees, slashing, and fork deterrence).
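The two-sided fee flow can be sketched as a split followed by a pro-rata payout within each group. The article does not state the split, so the basis-point share passed in below is a placeholder, and the helper names and staker labels are hypothetical. Integer arithmetic is used so that no value is lost to rounding.

```python
def split_fee(fee: int, verifier_share_bps: int) -> tuple[int, int]:
    """Split a fee into (operator_cut, verifier_cut) using basis points."""
    if not 0 <= verifier_share_bps <= 10_000:
        raise ValueError("verifier_share_bps must be between 0 and 10000")
    verifier_cut = fee * verifier_share_bps // 10_000
    return fee - verifier_cut, verifier_cut


def pro_rata(amount: int, stakes: dict[str, int]) -> dict[str, int]:
    """Distribute `amount` in proportion to stake; rounding dust goes to the largest staker."""
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    payouts = {name: amount * stake // total for name, stake in stakes.items()}
    dust = amount - sum(payouts.values())
    largest = max(stakes, key=lambda name: stakes[name])
    payouts[largest] += dust
    return payouts


# Hypothetical: 30% of each fee goes to bEIGEN verifiers, the rest to ETH/LST operators.
operator_cut, verifier_cut = split_fee(1_000, verifier_share_bps=3_000)
print(operator_cut, verifier_cut)                         # 700 300
print(pro_rata(operator_cut, {"op_a": 2, "op_b": 1}))     # {'op_a': 467, 'op_b': 233}
```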

Unlike generic staking tokens that merely collect fees, EIGEN formalizes the economics of verifiability. Its bonded form underwrites verification, its liquid form coordinates across services, and its value is backed by both the fee flows of EigenCloud workloads and the systemic nuclear option of forking. In practice, this means EIGEN is tied to the growth of the verification market itself. The more off-chain compute migrates into containerized AVSs, the larger the fee pool secured by bEIGEN, and the stronger the deterrent value of EIGEN as the network’s Schelling point.

Why bet on what the next winning app will be when you can own the substrate that powers them all – the capital that backs the cloud?

Patterns & Parables – What You Can Build

While we’re on the topic of winning apps, let’s explore the set of application domains that EigenCloud unlocks.

There’s a clear throughline in the kinds of apps EigenCloud is optimized for. They tend to share a few common traits:

- They rely on heavy off-chain compute that Ethereum or L2s cannot natively handle

- They demand trust-minimized guarantees

- They sit at the boundary between crypto and the outside world, where verifiability has historically been weakest

AI Inference

One natural frontier is AI inference. Today, models are deployed behind black-box APIs controlled by centralized providers. This requires implicit trust that the output hasn’t been tampered with and that the operator is faithfully returning results. Even if malicious outputs are the least of our concerns, controversies over model biases have been very real within the top AI labs. Most of today’s “AI integrations” within crypto are arguably just API wrappers around OpenAI or Anthropic.

In a verifiable cloud environment, outputs are coupled to proofs. This could look like zk proofs (zkML), optimistic schemes with fraud proofs when outputs are heavy, or EigenVerify attestations that deterministically re-run the model on a different set of operators to ensure correctness.

zkTLS & Data On-Ramps

A second application class is data ingress via zkTLS and secure data oracles. How do you prove that data from the outside world actually came from the claimed source?

With EigenCloud, data ingress becomes verifiable. Containers can run zkTLS protocols to prove that they fetched a website or API response faithfully, or leverage enclave attestations to show that a dataset was queried without manipulation. Instead of trusting an oracle provider, onchain contracts can consume external data with cryptographic guarantees. This opens up the design space for a broad set of real-world application categories, most notably prediction markets.

Prediction Markets with Finality

Standard prediction markets typically rely on governance or oracles to resolve outcomes. These introduce latency and attack surfaces, and oracle-based resolution can be too expensive to incentivize participation at scale. In EigenCloud, outcome resolution can be anchored directly in verifiable compute. For example, the result of a sporting event or election could be finalized through zkTLS proofs of data sources (as mentioned above), secured by bonded EIGEN.

This turns prediction markets into real-time trading venues where liquidity behaves more like derivatives or sports betting platforms. And with the recent success of Polymarket and growth in online gambling, this is likely to be one of the standout use cases since the early iterations that Augur pioneered back in 2017.

Sovereign AI Agents

Perhaps the most forward-looking application of EigenCloud is the rise of sovereign AI agents. These are autonomous entities that own keys, hold capital, and act in onchain ecosystems. But to function credibly, they need more than just compute power. They require compute, reasoning, and tool calls that are verifiably aligned with their objectives, as well as insurance if they happen to step out of bounds.

Let’s lay out what this would theoretically look like when integrated into the EigenCloud stack:

An agent’s stack would decompose neatly across Eigen primitives. It perceives the world through verifiable ingress (zkTLS-style notaries/enclave attestations) and writes those inputs to EigenDA. It runs inside a container on EigenCompute with an explicit trust mode. And it proves/defends its outputs through EigenVerify with slashing semantics that extend even to token fork alignment when a majority is corrupt.

This framework provides a proper alignment surface that has been missing from current “onchain AI” applications.

10/ What about Web2 data access?

Through zkTLS AVSs like @OpacityNetwork and @reclaimprotocol, Level 1 Agents can tap into existing Web2 services.

Imagine verifiably accessing reputation data from Uber or Airbnb to make informed decisions.

— EigenCloud (@eigenlayer) February 4, 2025

Alignment lives in three places that are all verifiable:

- The policy contract (a smart contract that encodes what the agent is allowed to do)

- The container hash (pinning the exact code/model/weights that implement that policy)

- The data attestations (what the agent observed)

Each of these has an onchain commitment and a slashing path. If an operator swaps the model or misreports inputs, the commitment mismatch is objective and slashable. If the policy is honored syntactically but violated semantically (e.g., “fair execution” cases), intersubjective verification provides a venue to adjudicate with bEIGEN at stake. The point here is not that everything becomes purely objective, but that every failure mode has an enforceable recourse with explicit costs.
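The objective half of this check can be sketched in a few lines. The example below is a hedged illustration only: `AgentCommitments`, `commit`, and `objective_faults` are hypothetical names, the commitments are plain SHA-256 hashes rather than anything EigenCloud specifies, and the semantic ("intersubjective") path is deliberately not modeled because it cannot be reduced to a hash comparison.

```python
import hashlib
from dataclasses import dataclass, fields
from typing import List


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class AgentCommitments:
    policy_hash: str     # commitment to the policy contract
    container_hash: str  # commitment to the code/model/weights bundle
    data_root: str       # commitment to the data attestations


def commit(policy: bytes, container: bytes, attestations: List[bytes]) -> AgentCommitments:
    """Build the three commitments (assumed hashing scheme, for illustration)."""
    leaf_hashes = "".join(sha256_hex(a) for a in attestations)
    return AgentCommitments(
        policy_hash=sha256_hex(policy),
        container_hash=sha256_hex(container),
        data_root=sha256_hex(leaf_hashes.encode("utf-8")),
    )


def objective_faults(committed: AgentCommitments, observed: AgentCommitments) -> List[str]:
    """List every commitment that does not match what was actually run."""
    return [
        f.name
        for f in fields(committed)
        if getattr(committed, f.name) != getattr(observed, f.name)
    ]


committed = commit(b"policy-v1", b"model-weights-v1", [b"price=100"])
swapped_model = commit(b"policy-v1", b"model-weights-v2", [b"price=100"])
faults = objective_faults(committed, swapped_model)  # ["container_hash"]
```

Any non-empty result is the kind of mismatch the text calls objective and slashable. A run that matches all three commitments but still violates the spirit of the policy would return an empty list here, which is exactly the gap intersubjective verification is meant to cover.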

But how soon can we expect these services, and what does the timeline look like for EigenCloud?

Roadmap & Leading Indicators

The Eigen Economy has amassed nearly $20B in TVL, 2,000+ registered operators, and 40+ active AVSs with more than 150 in development. EigenDA V2 on mainnet has introduced 100MB/s of throughput, far surpassing existing competitors. Slashing has been released on mainnet, and plans to go multi-chain have begun, with the first verifications integrated on Base.

The EigenCloud roadmap is organized around four major pillars: developer platform, trusted primitives (EigenDA/ EigenCompute/ EigenVerify), the EIGEN token, and commitment infrastructure built on EigenLayer. The practical test of the roadmap will be whether these primitives actually produce a two-sided market (AVS builders & stakers/operators) where service economics flow organically from apps to stakers, not primarily from token emission incentives.

The current pipeline:

Developer Platform – DevKit recently launched on testnet – a CLI toolkit that streamlines AVS development, and enables prototyping, testing, and deployment in live environments. It uses the Hourglass task-based execution framework. Hourglass standardizes how developers define, distribute, execute, and verify compute tasks across decentralized operator networks.

Trusted Primitives – EigenDA v2 has successfully introduced a throughput of 100MB/s, making it currently the fastest DA solution – a 2x bump from its original 50MB/s testnet target. The rollout of EigenVerify and EigenCompute mainnet is slated for late Q3/ early Q4 this year.

Economic Infrastructure (EIGEN token) – Programmatic Incentives V2. The Eigen Foundation recently proposed a revamp of its programmatic incentives, focused on increasing the overall reward rate and rebalancing the total allocation so that more incentives are emitted to staked EIGEN. The goal here is to accelerate application development and incentivize innovation on EigenCloud.

If passed, the proposal would update the rewards allocations as follows:

- 3% to ETH stakers & their Operators (no change)

- 4% to EIGEN stakers & their Operators (up from 1%, a net increase of 3%)

- 1% to Business Development & Ecosystem Growth Rewards (net new)

Note: At the time of writing, this is currently in its proposal stage.
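As a quick tally of the proposed changes listed above, the snippet below sums the three categories before and after. The figures are taken directly from the list. The only assumption is that Business Development & Ecosystem Growth currently sits at 0, which follows from it being described as net new. The dictionary keys are shorthand labels, not official names.

```python
# Percentage allocations per category, as listed in the proposal.
current = {
    "ETH stakers & Operators": 3,
    "EIGEN stakers & Operators": 1,
    "Business Development & Ecosystem Growth": 0,  # assumed 0 ("net new")
}
proposed = {
    "ETH stakers & Operators": 3,
    "EIGEN stakers & Operators": 4,
    "Business Development & Ecosystem Growth": 1,
}

# Per-category change and totals across the three listed categories.
changes = {name: proposed[name] - current[name] for name in current}
total_current = sum(current.values())    # 4
total_proposed = sum(proposed.values())  # 8

# Share of the listed allocation going to EIGEN stakers, before and after.
eigen_share_current = current["EIGEN stakers & Operators"] / total_current
eigen_share_proposed = proposed["EIGEN stakers & Operators"] / total_proposed
```

Across these three categories the combined allocation would double from 4% to 8%, and the share directed to EIGEN stakers would rise from a quarter to half of that total.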

Commitment Infrastructure – Redistribution goes live on mainnet. Redistributable slashing is a mechanism in EigenLayer that allows an AVS, rather than simply burning a misbehaving operator’s stake, to reallocate the slashed funds to a designated recipient.
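The difference between burning and redistributing can be shown with a toy ledger. This is a sketch of the accounting idea only, under the assumption that a slash either burns funds or credits a single recipient. `StakeLedger` and its fields are hypothetical names and do not reflect EigenLayer's contract interfaces.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class StakeLedger:
    stakes: Dict[str, int]                                 # operator -> staked amount
    balances: Dict[str, int] = field(default_factory=dict)  # recipient -> credited funds
    burned: int = 0                                        # total destroyed by burn slashes

    def slash(self, operator: str, amount: int, recipient: Optional[str] = None) -> int:
        """Slash up to `amount` from an operator.

        With no recipient the funds are burned; with a recipient they are
        redistributed to that address instead.
        """
        slashed = min(amount, self.stakes.get(operator, 0))
        self.stakes[operator] = self.stakes.get(operator, 0) - slashed
        if recipient is None:
            self.burned += slashed
        else:
            self.balances[recipient] = self.balances.get(recipient, 0) + slashed
        return slashed


ledger = StakeLedger(stakes={"operator-a": 100})
ledger.slash("operator-a", 30)                           # burn: funds are destroyed
ledger.slash("operator-a", 50, recipient="harmed-user")  # redistribute: funds move
```

After these two calls the operator has 20 left, 30 has been burned, and 50 has been credited to the recipient. Redistribution is what lets an AVS turn a penalty into compensation or insurance for the party that was harmed.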

Multi-chain verification has begun with the first integrations on Base, with EigenLayer operator sets and stake weights currently available on Sepolia testnet. Multi-chain verification enables AVSs to operate across multiple networks.

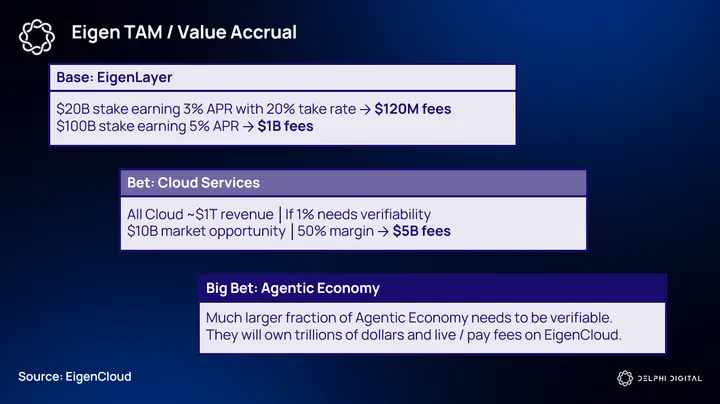

Eigen TAM & The Competitive Landscape

When it comes to competitors, the likely question is: how does EigenLayer compare to Symbiotic, EigenDA to Celestia, and EigenCompute to the likes of Akash and Render?

When asking this question, we realized that comparing each Eigen primitive on an individual basis wouldn’t do justice to the actual market EigenCloud is aiming to capture. The best analog to EigenCloud is today’s traditional cloud services. Although Symbiotic and Celestia could be considered competitors to some degree, no other protocol in crypto is actively attempting to become the verifiable cloud.

Comparing EigenDA against Celestia, or EigenCompute against Akash or Render, doesn’t necessarily make sense since each of these primitives is not functioning in isolation, but rather as cohesive components that enable verifiable cloud services at scale.

For example, data availability is arguably a commodity with extremely thin margins. Celestia does not earn enough in fees for that revenue to constitute a competitive advantage over EigenDA. This is not to say that EigenDA accrues more fees; rather, it acts as a single piece under the umbrella of EigenCloud to enable the highest throughput, even if it means acting as a loss leader in the process. The end goal here is to enable cloud-scale programmability with crypto-grade verifiability, and EigenDA is only one component of this architecture.

Even if we do make the comparison, EigenDA V2 substantially outperforms its immediate competitors.

Assuming this, the best comparison for EigenCloud then becomes the traditional cloud market.

But EigenCloud doesn’t need to displace AWS or Azure to capture this TAM; it only needs to become the default platform for workloads where verifiability is the product. Because verifiability carries a trust premium, even a fraction of traditional cloud dollar-volume converts into disproportionately large economic value for EigenCloud.

If we look at traditional cloud services like AWS or GCP, they price on raw compute cycles, bandwidth, and storage. The classical cloud value proposition is cheap, ubiquitous compute.

With EigenCloud, the approach is slightly different. Instead of the cheapest compute cycle, it sells trust as a metered service. For many high-value applications the marginal cost of being wrong is orders of magnitude larger than the cost of compute.

These applications are willing to pay materially more for a guarantee that an offchain computation or an adjudication outcome is auditable, re-runnable, and economically enforceable. Theoretically, this creates a higher average revenue per verifiable workload when compared with an equivalent raw compute job on AWS.
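The willingness-to-pay argument reduces to a simple expected-loss comparison. The sketch below uses purely illustrative numbers (a $10 compute job, a $1,000,000 loss if the result is wrong, and assumed failure probabilities with and without verification). None of these figures come from EigenCloud or any pricing data, and the function names are hypothetical.

```python
def expected_loss(failure_probability: float, loss_if_wrong: float) -> float:
    """Expected cost of acting on an incorrect result."""
    return failure_probability * loss_if_wrong


def max_verification_premium(
    p_fail_unverified: float,
    p_fail_verified: float,
    loss_if_wrong: float,
) -> float:
    """Upper bound on what a rational buyer would pay for verification:
    the reduction in expected loss that verification delivers."""
    return expected_loss(p_fail_unverified, loss_if_wrong) - expected_loss(
        p_fail_verified, loss_if_wrong
    )


# Illustrative assumptions only.
compute_cost = 10.0          # raw compute price for the job
loss_if_wrong = 1_000_000.0  # damage if the output is wrong
p_fail_unverified = 0.001    # assumed failure rate without verification
p_fail_verified = 0.00001    # assumed failure rate with verification

premium = max_verification_premium(p_fail_unverified, p_fail_verified, loss_if_wrong)
premium_multiple = premium / compute_cost
```

Under these assumptions the buyer could rationally pay a verification premium many times the raw compute cost, which is the sense in which revenue per verifiable workload can exceed that of an equivalent commodity job.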

Here’s a hypothetical breakdown of what this might look like if we compare EigenCloud against the current cloud market:

Traditional cloud TAM sits in the trillions over the next decade (expected to reach $2 trillion by 2030), but most of that revolves around commodity compute and storage. The verifiable cloud’s TAM is narrower today but arguably more defensible and higher-margin. From onchain applications to verifiable AI services, it starts with use cases that demand cryptoeconomic assurance. In the research report published by Goldman Sachs, there is a clear acknowledgment that AI will make a significant contribution to this demand growth. But what happens when those AI services also need to be verifiable?

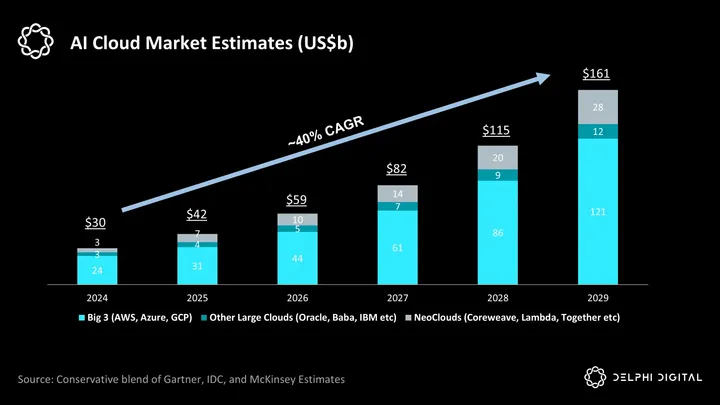

The chart below is a conservative estimate of the AI cloud market projection into the next few years.

While this seems like a niche use case compared to all of cloud, it’s the most valuable subset of workloads where correctness is worth more than raw throughput. If EigenCloud becomes the default layer for verifiable compute, its TAM expands as verification becomes a requirement in adjacent markets.

Conclusion – Towards the Verifiable Economy

Over the last decade, we’ve seen multiple approaches and iterations in infrastructure design within the space. Two of the largest architectural theses in crypto were big-chains (monolithic environments) and the app-chain thesis. EigenCloud proposes a third: the cloud-chain thesis – the idea that the “chain” is no longer just a ledger of transactions but a platform that enables programmable trust.

This has been Eigen’s thesis since inception. Bitcoin introduced verifiable money. L1s like Ethereum and Solana have enabled verifiable finance. And EigenCloud aims to create a verifiable economy, on the assumption that agents will steadily grow in number and take part in it over time.

Crypto has unironically followed a similar trajectory to that of the early days of the internet, and is now approaching a similar inflection point to when AWS was first introduced in the early 2000s. Just as the traditional cloud opened up an entirely new set of application categories and created a $10 trillion market, a verifiable cloud could do the same – only this time in a trustless manner.
