Report Summary

The report titled “zkVerify: Optimizing Zero-Knowledge Proof Verification at Scale” explores the transformative potential of zero-knowledge proofs (ZKPs) in improving blockchain scalability, privacy, and security, and introduces zkVerify, a specialized platform for ZKP verification. Below are the key takeaways:

1. Importance of Zero-Knowledge Proofs (ZKPs):

- Scalability: Enables off-chain processing and data compression before validity proofs are sent on-chain, reducing computational burden.

- Privacy: Verifies data validity without exposing the data itself, supporting confidential transactions and private smart contracts.

- Security: Provides mathematically sound mechanisms to detect fraud or errors without revealing sensitive information.

2. Challenges in ZKP Implementation:

- High Costs: Current blockchain environments like Ethereum impose significant gas costs for ZKP operations due to fixed block sizes and high demand for blockspace.

- Tooling Limitations: Existing platforms lack sufficient infrastructure for seamless developer onboarding, and compatibility issues with new proof systems like STARKs hinder adoption.

- Throughput Issues: Limited capacity for proof verification impacts scalability and system efficiency.

3. zkVerify Overview:

- Unified Verification Layer: zkVerify serves as a modular Layer 1 blockchain designed for ZKP verification, prioritizing cost efficiency, interoperability, and scalability.

- Core Features:

- Proof submission interface for various ZKP types.

- Native verifiers to handle different proof systems.

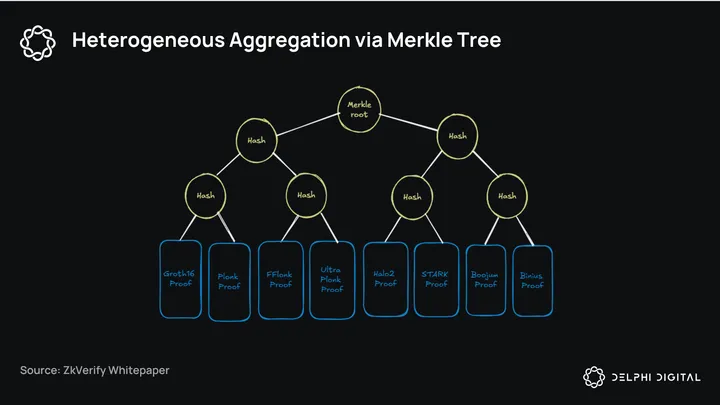

- Attestation mechanism using Merkle Trees to aggregate proofs efficiently.

- Specialized Design: Focuses on proof verification without competing for blockspace with other applications, enabling rapid and cost-effective operations.

4. Innovations in zkVerify:

- Heterogeneous Aggregation: Compresses proofs using Merkle Trees instead of resource-intensive cryptographic aggregation, reducing computational complexity.

- Developer Accessibility: Simplifies workflows by abstracting away verification complexities, allowing developers to focus on application-specific needs.

- Enhanced Throughput: Supports significantly higher proof verification rates compared to Ethereum.

5. Applications and Use Cases:

- DeFi and Private Finance: Supports private lending, trading, and dark pools for institutional-grade confidentiality.

- Digital Identity: Facilitates secure and private identity verification across applications using zkLogin and similar tools.

- Gaming and Prediction Markets: Enables verifiable random number generation for fair gameplay and predictions.

- Web2 and Web3 Synergy: Bridges traditional systems with blockchain, improving fraud prevention, data privacy, and advertising.

6. Future Potential:

- Market Expansion: zkVerify is poised to capture significant market share as demand for ZKP applications grows across industries, including finance, gaming, and digital identity.

- Scalable Design: The platform is designed to evolve with advancements in ZKP systems, ensuring long-term relevance and adoption.
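As a rough illustration of the Merkle-tree attestation mentioned in the takeaways above, the sketch below hashes a batch of verified-proof identifiers into a single root and checks one inclusion path against it. The report does not specify zkVerify's hash function, leaf encoding, or handling of odd-sized levels, so SHA-256, raw byte leaves, last-node duplication, and the function names are all assumptions made for this example.

```python
import hashlib


def h(data: bytes) -> bytes:
    """Hash helper (SHA-256 is an assumption, not zkVerify's documented choice)."""
    return hashlib.sha256(data).digest()


def merkle_root_and_path(leaves, index):
    """Return (root, path); path holds (sibling_hash, sibling_is_left) pairs."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [h(leaf) for leaf in leaves]
    path = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumption: duplicate the last node
        sibling = index ^ 1          # neighbour within the same pair
        path.append((level[sibling], sibling < index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], path


def verify_inclusion(leaf, path, root):
    """Recompute the root from one leaf and its sibling path."""
    node = h(leaf)
    for sibling, sibling_is_left in path:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


# Hypothetical identifiers standing in for already-verified proofs.
proofs = [b"proof-0", b"proof-1", b"proof-2"]
root, path = merkle_root_and_path(proofs, 2)
print(verify_inclusion(b"proof-2", path, root))  # True
```

The point of the design is that a consumer only needs the root plus a path of logarithmic length to check that a given proof was part of the batch, with no cryptographic proof aggregation involved.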

Conclusion:

zkVerify addresses critical inefficiencies in ZKP implementation, offering a scalable and developer-friendly platform that enhances blockchain scalability, privacy, and security. By simplifying ZKP adoption and enabling new use cases, zkVerify is well-positioned to drive widespread adoption of zero-knowledge technologies across Web2 and Web3 ecosystems.

Introduction:

Over the past few years, zero-knowledge proofs (ZKPs) have emerged as a practical solution to three specific engineering problems for blockchains:

- Scalability: ZKPs enable a validity system where transactions are processed offchain, with data being heavily compressed before a validity proof is sent to the blockchain for verification. Instead of verifying all data onchain, this method significantly reduces the computational burden on the blockchain, enabling faster and cheaper transactions. Overall, ZKPs greatly enhance scalability without compromising the blockchain’s security guarantees.

- Privacy: ZKPs have the unique ability to prove the validity of data bundles without revealing any specific information about the data itself. This enables new privacy elements in blockchain systems, such as confidential transactions and private smart contract interactions. At the same time, the validity of the data remains publicly verifiable, ensuring correctness while keeping sensitive information confidential.

- Security: ZKPs provide a mathematically sound way to verify the correctness of computations without revealing the inputs or intermediate steps. This improves security by allowing for the detection of fraudulent activities or errors in critical processes.

The market for ZKPs is poised for substantial growth, with integrations spanning all blockchain verticals. Rollups and bridges leverage ZKPs to achieve improved scalability, faster finality, and enhanced security. zkApps employ ZKPs to anonymize transactions and protect the confidentiality of user data. Advancements in AI and language models further extend the market opportunity for ZKPs, which can provide efficient and verifiable complex computation via zkCoprocessors. Taken together, these trends suggest that ZKP adoption could follow a hockey-stick growth curve.

However, current implementations of ZKPs in rollups and applications on generalized smart contract platforms pose multiple challenges. Despite advancements, bottlenecks still hinder the adoption of ZKPs within the blockchain context.

First, implementing ZKPs is expensive due to the computational overhead. Instead of verifying transactions via re-execution, ZKP verification runs complex cryptographic operations to finalize the outcome. In the blockchain context, such operations have an extremely high gas cost and limited throughput because blockspace is fixed in size. Fixed block sizes help the network manage traffic and ensure consistent propagation times while avoiding attacks that flood the network with excessive data. On high-demand networks such as Ethereum, proofs must also compete with other applications for block inclusion, via first-in-first-out (FIFO) or priority-based ordering, which drives the cost up further.

The second issue with implementing ZKPs is that most smart contract platforms today do not have sufficient tooling to onboard developers with ease. Ethereum, for example, currently only supports BN254, a pairing-friendly elliptic curve used for cryptographic operations. Introduced as part of the Byzantium hard fork in 2017, the curve can be used to run efficient SNARK operations, which were the standard and most widely used implementations at the time. The widespread adoption of BN254 as a standard, however, has created an unexpected challenge: newer, advanced proof systems like STARKs must convert their proofs to maintain compatibility with established base layer operations. This conversion requirement restricts the options available to developers and impedes large-scale adoption of these newer systems.
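For a rough sense of scale, the BN254 operations mentioned above are exposed on Ethereum as precompiled contracts whose gas prices were last set by EIP-1108. The sketch below estimates only the precompile gas for verifying a single Groth16 proof. The choice of Groth16, the four-pair pairing check, and the function names are illustrative assumptions rather than details from this report, and calldata, storage, and the base transaction cost are ignored.

```python
# Gas prices of the BN254 precompiles as set by EIP-1108.
ECADD_GAS = 150
ECMUL_GAS = 6_000
PAIRING_BASE_GAS = 45_000
PAIRING_PER_PAIR_GAS = 34_000


def pairing_check_gas(num_pairs: int) -> int:
    """Gas for one call to the pairing-check precompile over num_pairs pairs."""
    return PAIRING_BASE_GAS + PAIRING_PER_PAIR_GAS * num_pairs


def groth16_precompile_gas(num_public_inputs: int) -> int:
    """Rough precompile-only estimate for one Groth16 verification.

    Assumption: one ECMUL and one ECADD per public input to fold the inputs
    into the verification key, followed by one pairing check over 4 pairs.
    """
    input_folding = num_public_inputs * (ECMUL_GAS + ECADD_GAS)
    return input_folding + pairing_check_gas(4)


print(groth16_precompile_gas(1))  # 187150
```

Even with a single public input, the estimate comes to 187,150 gas before any other overhead, which is the kind of fixed per-proof cost that has to compete for limited blockspace.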

Enter zkVerify: a modular L1 that aims to become a unified verification layer, at scale, for all infrastructure and applications using zero-knowledge proofs. As a one-stop shop for verification needs, zkVerify aims to provide a much simpler solution for projects and developers utilizing ZKPs. By focusing on universal proof verification as its core offering, zkVerify prioritizes interoperability and developer accessibility. Compared to other solutions on the market, zkVerify also improves the operational efficiency of ZKPs, enabling proof verification at a fraction of the cost while expanding possibilities for scalable and interoperable applications.

zkVerify can usher in the next wave of ZKP adoption in two meaningful ways. First, the product helps remove developer complexity and friction when dealing with ZKPs, as it minimizes the workload for verifier deployment and maintenance. Instead of having to manage configurations for their verifier contracts on different base layers, protocols can delegate that work to zkVerify, which maintains and updates the verifiers, so teams can rely on it to have the most up-to-date versions. Second, zkVerify enables novel use cases across both web2 and web3, especially in fraud prevention, digital marketing, and verifiable AI. As a performant layer for ZKP verification, zkVerify can handle significant workload and demand coming from web2 while ensuring operational excellence. Developers can leverage zkVerify as a foundational piece to explore ZKPs as a transformational technology and carve out new markets for production and growth.

As the technology matures, zkVerify is well-positioned to capture part of the growth in ZKP deployment and usage, paving the way for widespread ZKP adoption.

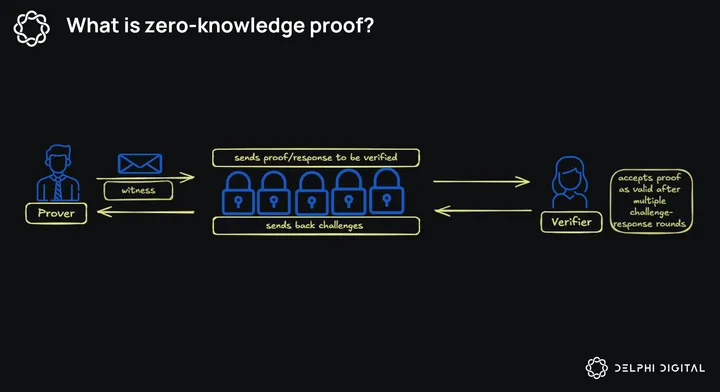

What is a Zero-Knowledge Proof?

To grasp the full potential of zkVerify, it’s important to first understand the basics of zero-knowledge proofs. At a high level, a zero-knowledge proof is a cryptographic method that allows you to prove the validity of a statement without revealing the underlying information to an untrusted counterparty. Consider the example below:

An international student wants to apply for a student visa abroad and has to prove to the embassy that he has the financial means to support his education. In this visa application, he would have to showcase the following:

- Bank Statement/ Account Balance

- Evidence of Family support (if the student is under 18)

- Proof of income and/or asset ownership

In normal circumstances, case officers will have access to the student’s personal information and make decisions based on what is provided. They are also required by the law to protect user privacy. From the student’s perspective, this means placing blind trust in the system to protect and safeguard the data.

Utilizing zero-knowledge proofs, the student can produce proof of asset ownership, such as “the total value of my liquid assets is above the required threshold”, without providing the full documentation for verification. The officer then runs the necessary computation to determine the validity of the proof. Once the proof is accepted as valid, the visa is issued.

Properties of Zero-Knowledge Proofs:

There are three fundamental properties of zero-knowledge proofs:

- Zero-Knowledge: the verifier learns nothing beyond the fact that the statement is true.

- Completeness: if the statement is true, an honest prover who has access to the secret information can convince the verifier.

- Soundness: only a prover with the correct information can convince the verifier. Guessing is almost certain to fail, so a dishonest prover cannot convince the verifier that it knows the correct input, except with negligible probability.
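Stated slightly more formally, the three properties can be written as below. The notation is the standard textbook one and is an assumption here, since the report defines no symbols of its own: $L$ is the set of true statements, $x$ a statement, $w$ the witness, $\langle P, V\rangle(x)$ the verifier's decision after interacting with the prover, and $\mathrm{negl}(\lambda)$ a quantity that is negligible in the security parameter $\lambda$.

```latex
% P: honest prover, P^*: any (possibly dishonest) prover,
% V: honest verifier, V^*: any (possibly dishonest) verifier,
% S: an efficient simulator that never sees the witness w.
\begin{align*}
\text{Completeness:}\quad
  & x \in L \;\Rightarrow\;
    \Pr\big[\langle P(w), V\rangle(x) = \text{accept}\big] \ge 1 - \mathrm{negl}(\lambda) \\
\text{Soundness:}\quad
  & x \notin L \;\Rightarrow\; \forall P^{*}:\;
    \Pr\big[\langle P^{*}, V\rangle(x) = \text{accept}\big] \le \mathrm{negl}(\lambda) \\
\text{Zero-knowledge:}\quad
  & \forall V^{*}\; \exists S:\;
    \mathrm{View}_{V^{*}}\big[\langle P(w), V^{*}\rangle(x)\big] \approx S(x)
\end{align*}
```

The zero-knowledge line captures "learns nothing": anything the verifier sees during the interaction could have been produced by a simulator that only knows the statement is true.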

These properties allow zero-knowledge proofs to be complementary to the design of blockchain architectures and functionalities. Taking advantage of zero-knowledge proofs, developers have an elegant way to introduce privacy, scalability, and computation expressivity into the system. We will now explore this synergy in greater detail, and the potential market growth for zero-knowledge proofs and blockchains.

Blockchains x Zero-knowledge Proofs:

The Complementary Nature of Two Technologies

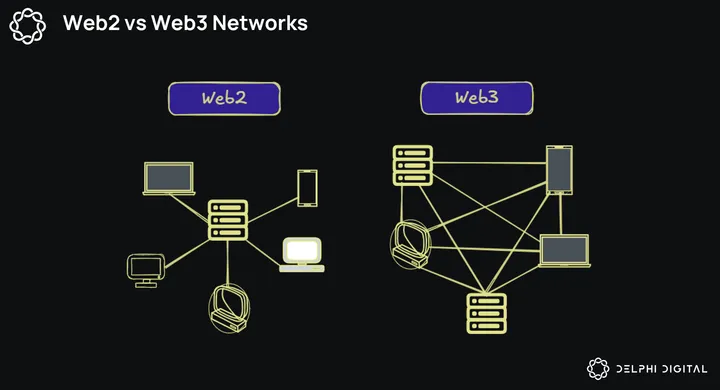

Blockchains are coordination systems for permissionless, peer-to-peer value transfers, without the need for intermediaries. Instead of relying on a centralized authority to settle the final outcome, blockchains implemented a network of distributed nodes to communicate and reach consensus on the current state of the system. This creates an environment where execution, storage, and communication between nodes are agreed upon and guaranteed in a trustless manner.

Such guarantees are enforced via economic rules (game theory and incentives) or cryptography (math), with most blockchain systems today using a combination of the two. Zero-knowledge proofs extend the existing guarantees by increasing expressivity, while also providing hardness:

- Expressivity: Zero-knowledge proofs stand to expand on-chain use cases while improving the underlying performance of blockchains. They enable an engineering shift from repeated execution for verification to a streamlined “execute once, verify everywhere” model, where compact proofs of computation or large datasets are generated and aggregated. This allows complex, multi-step computations to be performed offchain and verified onchain, dramatically improving scalability and expressivity by reducing data footprints and computation workloads. Since the data is never revealed to the proof verifier, ZKPs add a layer of privacy to the stack – a natural upgrade to the current paradigm of radically transparent blockchains.

- Hardness: Hardness in this context refers to the security guarantees of ZKPs, essentially how difficult it is to introduce a faulty computation into the system. A dishonest prover cannot convince the verifier of an incorrect computation, which helps reduce the trust requirements for the system to 1-of-N (honest minority) instead of relying on an honest-majority assumption (M-of-N). With ZKPs, users can rely on math and cryptography alone as the basis for their security assumptions.

The Market for Zero-knowledge Proofs:

Below, we examine the potential market trends for zero-knowledge proofs and blockchains, leveraging the properties of expressivity and hardness:

Rollups:

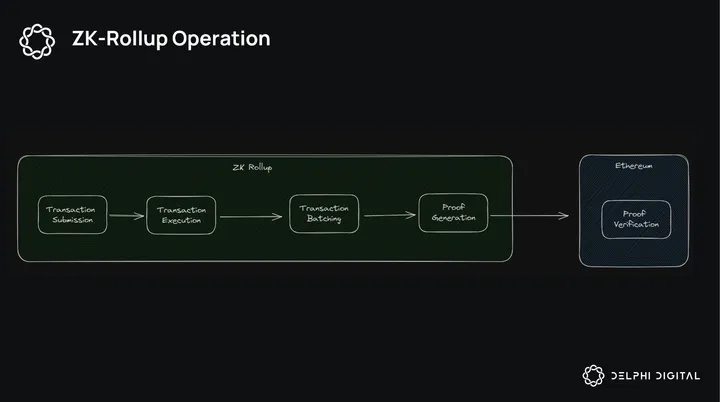

The excitement behind zero-knowledge proofs in blockchains can be attributed to their ability to improve scalability without increasing hardware requirements for verification. Instead of re-execution, blockchains can outsource the execution function to an offchain entity while maintaining onchain verifiability and peer-to-peer communication guarantees. The ability to batch or aggregate proofs into a proof-of-multiple-proofs system also offers an order-of-magnitude improvement in scalability and cost reduction. This is the thesis behind ZK rollups on Ethereum, with the top 3 projects (Starkware, ZKsync, and Polygon) boasting a combined market cap of around $2.2 Billion.

ZK rollups on Bitcoin: The rise of L2s on Bitcoin has been an interesting development over the past year. With Bitcoin’s scripting language limitations, various teams have proposed using Bitcoin as a Base layer for security, while having an additional layer for execution batching built directly on top. This would allow users to increase computational expressivity on top of Bitcoin, thus introducing further utility to the asset and helping improve its security budget.

Over the past year, interest in increasing Bitcoin’s computational expressivity has picked up tremendously, given the tailwind from the successful launches of the Bitcoin Spot ETFs. The category has seen 20 investments, with over $76 Million committed at various stages of the funding cycle, indicating significant weight being put behind Bitcoin L2 efforts. ZKPs have the potential to provide a sustainable and elegant design for L2s building on top of Bitcoin, and projects such as Citrea, Alpen, and BoB are leading the way.

Beyond rollups, properties of zero-knowledge proofs are also desirable in the context of composability, architecture modularity, and computation complexity for onchain applications.

Bridges and Composability

Composability in a cross-chain environment, i.e., transferring assets from Chain A to Chain B, is also an area where zero-knowledge proofs can shine. While most interoperability designs today utilize variations of optimistic verification for settlement, zero-knowledge proofs can complement the original architecture by introducing scalability, faster finality, and strengthened security:

- Scalability: zero-knowledge proofs theoretically enable validator bridges to compress computations via proof generation and batching for verification at the destination chain. In a multichain world, this means reducing operational overhead by amortizing costs via hardware improvements and acceleration of proving systems. Proof aggregation between multiple systems to be verified by the target chain can also introduce a design pathway for composability and asset bridging.

- Faster Finality: In a system using optimistic verification, there is always a time-delayed window for disputes using fraud proofs. Zero-knowledge proofs allow bridges to drastically cut down the time to finality, from days to minutes.

- Strengthened Security: Game theory, incentives, and multi-sig systems are crucial in the current designs of bridges to ensure correct cross-chain execution. With zero-knowledge proofs, users can limit their trust assumptions to just math and code. On the operational side, zero-knowledge proofs can also introduce additional checkpoints via verifiable computations, thus hardening the bridge’s security and reducing potential attack vectors.

Today, bridges have demonstrated a high degree of product-market fit within crypto. According to DefiLlama, during a market period where asset prices trend downwards and onchain activity dwindles, bridges still have a collective monthly volume of around $6.766B. Some of the top hacks in crypto are also bridge-related incidents, with the top 3 cases (Ronin Bridge, Wormhole, Binance BNB Bridge) totaling $1.519 Billion in value lost. In these cases, ZKPs could allow developers to minimize security issues by providing checkpoints for verifying asset transfers and by enhancing the system’s scalability and flexibility.

The limited adoption of zero-knowledge proofs in these systems, however, is primarily due to their drawbacks in user experience, implementation complexity, and operational costs. We will explore these nuances in detail in later sections, and see where zkVerify fits into the equation.

zkCoprocessors

Another exciting market opportunity for ZKPs over the next few years is the rise of the zkCoprocessors. As the need for more complex computations and data processing in blockchain applications continues to grow, zkCoprocessors are potential solutions to offload these tasks while maintaining security and privacy. These specialized components can be broadly categorized as follows:

- Data-access zkCoprocessors: these mainly deliver specific data (state history, block history, social graphs from Web2 applications) to a destination such as a smart contract. StorageProofs and Space & Time are protocols working in this direction. These protocols utilize ZKPs to create streamlined access to onchain data that can be queried and verified for smart contract operations, without processing all the underlying data.

- zkVM compute coprocessors: computation is performed offchain, while the results are sent back onchain. While this is similar to the ZK rollup model, the major differences are that coprocessor programs can be asynchronous, do not manage state updates, and act more like on-demand service providers. Axiom, Lagrange, and Brevis are products working in this direction, providing external computation via ZKPs to power data-intensive applications.

zkApps:

At a high level, zkApps are applications that utilize zero-knowledge proofs to introduce additional functionality and feature support, including but not limited to, privacy, computation complexity, and scalability. Below, we will explore how ZKPs enhance application functionalities:

- Privacy-focused financial applications: ZKPs can enable confidential transactions, anonymous asset transfers, and private lending/borrowing platforms. Financial details can remain hidden while ensuring compliance. In these scenarios, user input is used as the witness for the proof, and only the user can reveal information to the authorities should they be required. Tornado Cash and Solana’s Private transfer (part of the token extension) are examples of this category. These tools allow users to obfuscate confidential information and maintain personal privacy. The information hidden from the public can include, but is not limited to, the asset type, the asset amount, the sender and recipient’s address, etc.

- Decentralized identity systems: zkApps can create and manage digital identities that allow users to prove specific attributes (e.g., age, citizenship) without revealing sensitive personal information. For applications relying on social graphs to onboard users, ZKP provides an elegant design to help segment and introduce new behaviors into the set, create stickiness, and reduce operational overheads. Worldcoin, a decentralized identity layer, uses ZKPs to provide secure and private verification of personal info, without revealing the data. When a person uses their World ID, a ZKP prevents third parties from knowing the person’s World ID public key or tracking them across applications. ZKPs also protect the use of World ID from being tied to any biometric data or the iris code of the person.

- Game logic and NFT ownership: Gameplay and Asset Verifications can be computed off-chain in real-time, thus improving performance and reducing costs while maintaining provable fairness and authenticity. Zero-knowledge proofs also enable developers to implement Fog-of-War mechanics, enriching gameplay and player immersion. While the idea is still being explored, significant advances have been made. The most notable progress this year has been zkHunt by Ingonyama, a real-time strategy game in which players compete with each other for resources and survival. ZKPs are used in this scenario to create a Fog-of-war/on-chain discovery process.

An Extension of the Modular Paradigm

Blockchains are coordinated computers, with the fundamental purpose of recording all valid transactions and data in an agreed-upon manner. At its core, a general-purpose blockchain performs four critical functions:

- Execution: processing transactions to update the state of the blockchain

- Settlement: resolving disputes, ensuring transaction validity

- Consensus: reaching agreement with other nodes in the network on transaction ordering in the new block, and finalizing state updates

- Data availability: ensuring all data is available for network participants to see and accessible for transaction verification

As the tech stack continues to mature, what we have seen over the past two years is the trend towards modularity and specialization. Instead of having one general-purpose blockchain to handle all parts of the stack, each function now has a dedicated layer, optimized based on segmentations and needs. This allows for optimization and scalability across the stack, thus offering the design space for exploration and specialization.

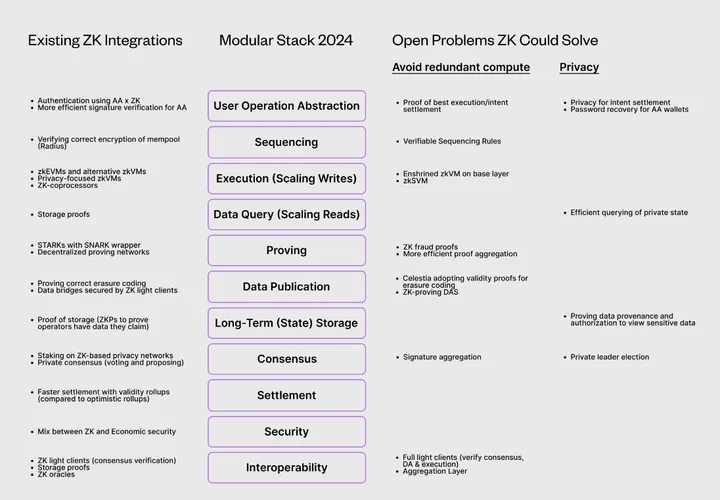

The modularity trend could continue to expand and accelerate as zero-knowledge-proof technology comes to fruition. The original version of the modular model can now be further unbundled and re-bundled into basic components, providing a more expressive and refined toolkit for developers, communities, and corporations to design blockchains that are best aligned with their collective goals.

Equilibrium Labs’ analysis, “Will ZK Eat The Modular Stack?”, provides a fantastic overview of the potential of the modular stack, focusing on existing integrations and highlighting problems ZK technology could solve going forward.

source: Equilibrium Labs, “Will ZK eat the modular stack?”

Trustless Verification and Data Interoperability within the Legacy system:

While ZKPs have garnered attention within web3 over the past 10 years, the market for ZKPs is expected to evolve beyond crypto and drive substantial improvement in legacy and traditional industries. Areas ripe for ZKP disruption would be information privacy, digital identity solutions, and fraud prevention across all industries. With ZKPs, users gain complete control over their data, sharing only the necessary details with the intended party while ensuring its accuracy. Corporations and organizations can utilize ZKPs for more secure data management systems, authenticating information for better fraud prevention, and staying compliant with regulations.

Information Privacy and Management

Compared to traditional privacy management software, ZKPs’ offerings are superior in several aspects:

- Data ownership belongs to the end user and does not necessarily have to sit on a siloed server owned by businesses. ZKPs can enable flexible data management and communication as the data being stored is a proof of data validity and not the actual data, thus saving businesses on costs and maintenance.

- ZKP can provide a superior privacy guarantee, since only the user can reveal the private information that is being invoked. This effectively removes the trust dependency placed on intermediaries and can prevent catastrophic incidents like data breaches and stolen identities on the black market, given that the data being stored is proof of data validity and not the actual information.

The data privacy market was valued at $2.76B in 2022. As projected by Fortune Business Insights, the market is forecasted to grow to $42.48B by 2030. These forecasts hinge on continued market growth, as user demand for better tools for privacy and compliance management accelerates in the future. Should this trend continue to unfold, we see emerging opportunities where protocols like zkVerify can move into and take up market share within the space, as ZKPs continue to mature as a technology.

Data Authentication:

Data Authentication is the process of verifying the origin and integrity of data to ensure that it is authentic and has not been tampered with. The application of this has wide-ranging implications in the real world today:

Digital Identity:

In the increasingly digital landscape, solutions to preserve, authenticate, and protect digital identities become a critical frontier. Over the next 10 years, we believe this is the second area where ZKPs can address the ongoing challenges and pave the way for a more secure and intuitive future.

ZKPs provide an easy and effective way to securely share digital identity information across multiple applications, without revealing sensitive personal details. Within the digital identity space, ZKPs can be used to aid in the following:

- Enhance user authentication experience and security by allowing users to prove their identity and access rights, without transporting passwords or sensitive credentials. The soundness property of ZKPs ensures the uniqueness of the digital identity to only one account, while the completeness characteristic allows ZKPs to be used across multiple systems verifying the same data format. This is very helpful in areas such as fraud prevention.

- User targeting in advertising and social media can be done by refining the verification to fit a predefined set of criteria, without compromising user private information.

- Organizations can verify user compliance with specific requirements by checking the proofs produced by the end user, without accessing or storing extensive personal documentation. This simplifies regulatory processes while maintaining individual sovereignty.

The escalating demand for secure remote access, regulatory compliance, and pervasive integration of digital services across sectors such as finance, healthcare, and retail could help propel the adoption and evolution of digital identity solutions. ZKPs, given their characteristics, are poised to help businesses and developers create more refined, robust, and easier-to-use digital identity systems that put users in control of how their personal data is used. Fortune Business Insights forecasts that the digital identity market will grow from $30.81B in 2023 to $101.37B by 2030.

The broader implication of a next-gen digital identity framework, built by ZKPs, is the potential for market disruption and transformative value capture across critical sectors, such as fraud prevention and digital marketing. Contingent on market growth, this emerging market has meaningful potential. We believe the advantages derived from this new system could reshape how businesses proactively tackle fraud prevention and deliver targeted advertising.

Fraud Prevention:

More broadly, identity solutions built with ZKPs would be a useful tool for fraud prevention, by providing a mechanism in which data issuers have to provide a validity proof to be verified by the counterparty. Once the proof is validated, the end user can proceed. The compact nature of ZKPs and the hardness provided by math and cryptography ensure the following:

- Workload simplification: ZKP-powered solutions can simplify information validity checking by producing a cryptographically complex proof of identity that is extremely difficult to falsify. This can replace the intensive data matching process, reducing overhead and complexity.

- Strengthened data security: The method stores the mathematical proof of data validity instead of storing the personal credentials. This minimizes exposure risk, ensuring that sensitive information remains protected while still allowing for robust verification.

The major drivers of growth within fraud prevention could come from the increase in mobile applications and online banking services, which can expose significant attack vectors. Within these markets, ZKPs could become the go-to tool for better information flow control, fraud detection, and data leak prevention.

Targeted Digital Advertising:

The Cambrian explosion of the internet, social media, and e-commerce over the past 20 years has made digital marketing and advertising a core part of many businesses’ growth and development. Per Statista, digital ad spend grew from $243.1 billion in 2017 to $740.3 billion in 2024, and is expected to reach $965.5 billion by 2028.

As the internet penetration rate increases and the adoption of e-commerce and digital payments continues to soar, garnering attention in a post-scarcity world could result in significant market expansion for digital advertising. The journey from banner ads to the sophisticated programmatic advertising of today has been marked by the industry’s insatiable hunger for data, driving precision targeting and personalization.

Such evolution has consequences, especially in the age of personal sovereignty, with new regulations such as the GDPR and CCPA, and a more privacy-savvy user base. ZKPs could act as a solution to help deliver efficient advertising to the targeted audience while maintaining respect toward users’ data privacy:

- User privacy: users only share verification of information. If the user profile/ action fits a certain advertising bucket, the ad can be delivered to them. The user still has control over their personal data and their rights to be exposed to such content.

- Personalization: ZKPs allow for a refined degree of access, and thus, advertisers can finetune their information flow/request to properly deliver their ads to the right target.

Verifiable AI

Verifiable AI refers to AI systems designed to be transparent, accountable, and auditable. These systems allow users to trace, understand, and validate AI decisions, ensuring they are free from bias and errors. Black Box AI operates with limited transparency, making it difficult to understand and trust its decisions.

The combination of ZKPs and AI can be extremely useful, as it can enhance bias mitigation within machine learning models, and ensure that the model that users interact with behind a black-box API is indeed the precise model requested from the provider. The implications of this approach extend across domains, with particular relevance in fields such as healthcare and finance. The outcomes produced by AI models can profoundly affect the well-being of end-users, especially those belonging to underrepresented populations.

Recent developments have given rise to the field of ZKML (Zero-knowledge Machine Learning) in which researchers and developers utilize ZKPs to verify the integrity of the inference pass in AI models. Two primary purposes of ZKML are as follows:

Verification of model output: In scenarios where users submit input to a remote service hosting AI models, ZKML ensures that the output was produced by the exact model that they requested and paid for. For generative applications like ChatGPT, this is essential given the different product tiers involved.

Enhancing data privacy: In situations where the data is private, the AI model needs to be deployed locally. ZKML allows model deployers to be confident that the output sent back by the user is an accurate output of the model and has not been tampered with by the user’s software. The combination of data locality and the zero-knowledge property preserves user data privacy.

In a world where AI continues to accelerate our productivity, the threats of misinformation and deception also ring true. ZKPs could provide a complementary toolset to help battle this and enforce the integrity of the world around us, especially in the current age of information overload.

How do zero-knowledge proofs work?

At a high level, there are three major components to a zero-knowledge proof system:

- The prover that produces the proofs.

- The verifier that verifies the proofs.

- The witness, which is the hidden information the prover claims to have.

Zero-knowledge proofs work by having the verifier ask the prover to perform a series of actions that can only be done accurately if the prover has the underlying information. Since the verifier’s challenges can’t be determined in advance, a prover who is only guessing the results of these actions will be caught with high probability. This is the interactive model of zero-knowledge proofs, first introduced by Goldwasser, Micali, and Rackoff in 1985 (with the journal version published in 1989).
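As a rough illustration of why guessing fails, assume (hypothetically) that a prover without the witness can pass any single round with probability at most $p < 1$, and that rounds are independent. The chance of passing all $n$ rounds then shrinks geometrically. The values of $p$ and $n$ below are illustrative and not taken from any specific protocol:

```latex
\Pr[\text{cheating prover passes all } n \text{ rounds}] \le p^{n},
\qquad \text{e.g. } p = \tfrac{1}{2},\ n = 40 \;\Rightarrow\; 2^{-40} \approx 9.1 \times 10^{-13}.
```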

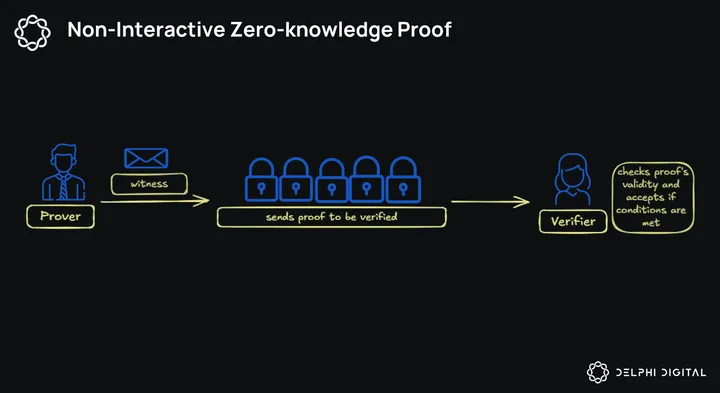

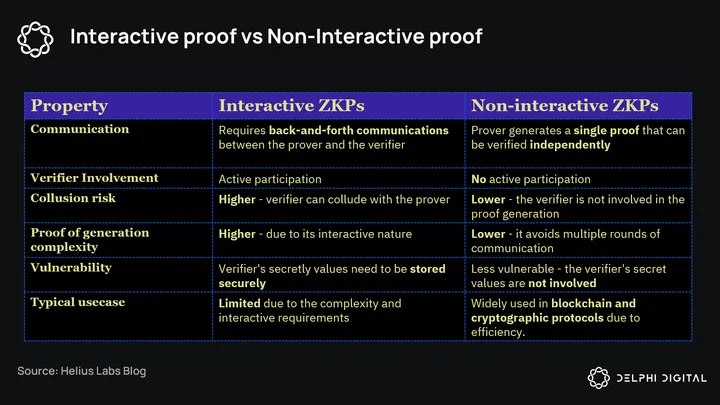

In blockchain applications today, what we commonly see are non-interactive zero-knowledge proofs. Instead of performing validation via the multi-round challenge and response protocol, the prover only needs to create a proof once that can be verified by anyone using the same proof. We’ll discuss its significance to blockchains in a minute, but to the proof system itself, being non-interactive offers practicality in terms of system scalability, overhead operations, and security. A full comparison breakdown is shown below:

ZKPs became practical thanks to two key innovations: the Fiat-Shamir heuristic and polynomials.

Fiat-Shamir Heuristic

The Fiat-Shamir heuristic is a technique that transforms an interactive zero-knowledge proof into a non-interactive one. Instead of waiting for the verifier to send back a challenge, the prover derives the challenge themselves by applying a cryptographic hash function to the initial commitment (and the public statement). The hash output is used as the challenge, and the prover computes their response against this self-generated challenge. Since the output of a cryptographic hash can’t be predicted in advance, and any change to the input changes the output, the hash satisfies the unpredictability requirement for challenges that we discussed earlier.

The prover then sends this response as proof to the verifier, along with the hash output and the initial commitment. As a result, the verification process becomes non-interactive, since the verifier can check the proof’s validity without further communication with the prover.
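The transformation can be sketched with a Schnorr-style proof of knowledge of a discrete logarithm, a standard textbook use of Fiat-Shamir. This is a minimal illustration, not any production proof system: the group parameters are deliberately tiny and insecure, the function names are hypothetical, and the verifier here recomputes the challenge from the commitment rather than receiving the hash output separately.

```python
import hashlib
import secrets

# Toy parameters (insecure, for illustration only):
# G = 2 generates a subgroup of prime order Q = 11 modulo P = 23.
P, Q, G = 23, 11, 2

def challenge(public_key: int, commitment: int) -> int:
    """Fiat-Shamir: derive the challenge by hashing the public transcript."""
    data = f"{G}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q

def prove(secret: int):
    """Prove knowledge of `secret` such that public_key = G**secret mod P."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q)           # prover's one-time randomness
    commitment = pow(G, nonce, P)          # initial commitment
    c = challenge(public_key, commitment)  # self-generated challenge
    response = (nonce + c * secret) % Q
    return public_key, (commitment, response)

def verify(public_key: int, proof) -> bool:
    """Check G**response == commitment * public_key**c without interaction."""
    commitment, response = proof
    c = challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P

public_key, proof = prove(secret=7)
print(verify(public_key, proof))
```

Anyone holding the public key and the proof can run `verify` on their own, which is what makes the proof non-interactive.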

Polynomials:

At a high level, polynomials are algebraic expressions that are the sum of a finite number of terms of the form $cx^k$ (such as $F(x) = ax^k + bx + 1$). Polynomials are very efficient at representing computations because they can express complex operations and constraints using a compact series of terms. This makes polynomials ideal for encoding a program’s intricate computational steps into a basic computation format that’s easy to work with and verify. Highly sophisticated algorithms can be simplified down to basic polynomials. Such efficiency in representation and evaluation is crucial for creating practical and scalable zero-knowledge proof systems.

Polynomial commitment schemes allow provers to commit to a polynomial, without revealing the polynomial entirely. To put it simply, a polynomial commitment scheme is a special way to “hash” a polynomial. Checking the equations between polynomials has the same guarantee as checking the equations between their hashes, and the verifier can conclude that the proof provided by the prover is valid. The act of “hashing” generally also reduces the data size between the prover and verifier, enabling effective communications.

Via the commitment scheme, the prover proves that he has knowledge of the witness that can solve the polynomials. The commitment scheme ensures no info about the polynomials (and therefore the witness) is leaked to the verifier, thus maintaining zero knowledge of the private information. The verifier can check the prover’s public input and the hash output to determine whether the proof is valid.
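One reason polynomial equations are cheap to check is that two different low-degree polynomials over a large field agree at very few points, so a single random evaluation is strong evidence of equality. The sketch below illustrates only that idea. It is not a commitment scheme (nothing is hidden from the verifier), and the field modulus and function names are assumptions chosen for the example.

```python
import secrets

FIELD = 2**61 - 1  # a Mersenne prime, used here as an illustrative field modulus

def evaluate(coeffs, x):
    """Evaluate a polynomial (coefficients in ascending order) via Horner's rule."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % FIELD
    return acc

def product_holds_at(a, b, c, z=None):
    """Check the claim a(x) * b(x) == c(x) at one point z (random if omitted)."""
    if z is None:
        z = secrets.randbelow(FIELD)
    return evaluate(a, z) * evaluate(b, z) % FIELD == evaluate(c, z)

a = [1, 1]            # 1 + x
b = [1, 1]            # 1 + x
good_c = [1, 2, 1]    # 1 + 2x + x^2, the true product
bad_c = [1, 2, 2]     # differs from the true product in the x^2 term

print(product_holds_at(a, b, good_c))
print(product_holds_at(a, b, bad_c))
```

A false claim such as `bad_c` passes only if the random point happens to be a root of the difference polynomial, which for a field this large is overwhelmingly unlikely.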

Current Implementation of ZKPs

Flows and Operations

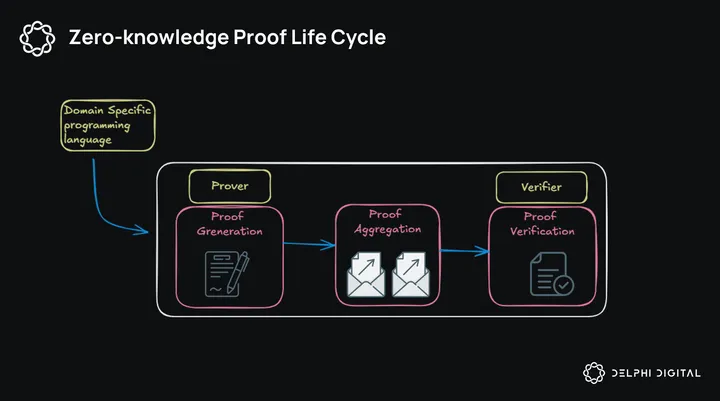

At a high level, the flow of a ZKP implementation is as follows:

Proof Generation:

The program, usually written in a Domain Specific Language (DSL), gets compiled into an Intermediate Representation (IR), which is a circuit representation along with additional metadata and structuring information. A circuit, in plain English, is a representation of the program in a network of basic, elementary mathematical operations (Arithmetic Circuits) or logic gates (Boolean Circuits). This is called the front end of the system.
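To make the idea of a circuit concrete, the sketch below flattens the toy program `out = x**3 + x + 5` into a list of elementary addition and multiplication gates and checks whether a witness (an assignment to every wire) satisfies them. The gate encoding and names are hypothetical and much simpler than the IR of a real DSL such as Circom or Noir.

```python
# Hypothetical gate list for out = x**3 + x + 5.
# Each gate is (operation, left operand, right operand, output wire);
# integer operands are constants, string operands are wire names.
GATES = [
    ("mul", "x", "x", "v1"),    # v1 = x * x
    ("mul", "v1", "x", "v2"),   # v2 = v1 * x
    ("add", "v2", "x", "v3"),   # v3 = v2 + x
    ("add", "v3", 5, "out"),    # out = v3 + 5
]

def wire_value(witness, operand):
    """Constants evaluate to themselves; wire names are looked up in the witness."""
    return operand if isinstance(operand, int) else witness[operand]

def satisfies(witness) -> bool:
    """Return True if every gate's output wire matches its inputs."""
    for op, left, right, out in GATES:
        a = wire_value(witness, left)
        b = wire_value(witness, right)
        expected = a * b if op == "mul" else a + b
        if witness[out] != expected:
            return False
    return True

witness = {"x": 3, "v1": 9, "v2": 27, "v3": 30, "out": 35}
print(satisfies(witness))
```

In a real system the backend would turn these gate constraints into polynomial equations rather than checking them directly.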

The backend of the system is where the actual proof generation takes place. It takes the circuit representation (produced by the front end) and applies cryptographic techniques to generate a succinct, verifiable proof of the program’s execution. The process can be condensed to 3 distinct steps:

- Convert the IR into a system of polynomial equations: This is typically the first step in the backend process, transforming the computational problem into a mathematical representation. The prover creates polynomials that satisfy the equations representing the computation.

- Apply a polynomial commitment scheme: This step helps create a compact representation of the polynomials, allowing the prover to commit to them without revealing the actual polynomials (and thus doesn’t reveal the witness).

- Apply the Fiat-Shamir Heuristic technique: Transforming our interactive proof into a non-interactive one, the prover generates a proof and sends it to the verifier for verification.

After the proof is generated, it will be reformatted in a standard way that can be easily transmitted and verified. Depending on the Proof system being used, typically either SNARK or STARK, the backend also generates a verification key that verifiers use to check the data. Because of the complexity and intensive nature of the proof system, this proof generation step is usually conducted off-chain leveraging specialized hardware.

Proof Aggregation:

While this is not a requirement, aggregation via recursive composition can be used to create proof-of-multiple-proofs, thus amortizing cost and reducing system overhead. Various systems will differ in compatibility, aggregation speed, and overall requirements for implementation.

Verification:

Verification is the last step of the process. It is usually much faster and requires less computational power than proving. The verification process happens on-chain, with smart contracts or precompiles invoked to support the required operations.

At this step, the main executions come down to:

- Verify low-level computation integrity: ensuring the polynomials represent the computation correctly step-by-step, focusing on the structure and relationships of different elements within the proof.

- Proof verification: the verifier reconstructs the challenges using Fiat-Shamir Heuristics, and verifies that the open commitments are consistent with the challenges and the rest of the proof. Remember that during proof generation, the prover used Fiat-Shamir to generate a challenge with an unguessable output, and send that along with the prover’s response to the verifier.

- High-level verification: confirming at a high level that all parts of the proof agree with each other, and all rules of the computation are followed, ensuring that the prover didn’t use different values for the same variables for various parts of the proof.

- Final verification: compressing multiple constraint checks into a single, more optimized verification equation that encapsulates all previous checks. This final step serves as a concise test of the entire proof’s validity. If the equation holds, it provides strong evidence that all individual checks and constraints within the proof are satisfied.

Trade-off considerations:

When implementing ZKPs, the following are considerations developers must take into account:

Circuits vs zkVM:

The comparison between circuits and zkVMs can be best understood through an analogy to ASICs and GPUs: circuits are more optimized for specific tasks, like ASICs, while zkVMs are for more generalized computations, like GPUs.

Traditionally, circuits have been the dominant way to write zero-knowledge programs thanks to their performance and efficiency. Circuits are program-specific and constraint-based, allowing the developers to specify how the computation is represented in zero-knowledge proof, with no additional data or parameters needed. The downside of the circuit approach is that developers need specialized knowledge about ZKPs, or even just the specific DSL, like Circom or Noir, to define the computation constraints correctly. Circuits are tailored to the specific programs, meaning that each circuit would require its dedicated verifier. If a program requires an update, the corresponding circuit must be rewritten to match the changes. This tight relationship results in additional workload for developers, thus hindering technology adoption.

zkVM is the newer approach that aims to provide a more comprehensive developer experience. Instead of needing to understand circuits, constraints, and arithmetization, developers can focus solely on building their application using a high-level language, like Rust or C++, and let the virtual machine take care of execution and proving in zero-knowledge proof. The tradeoff is the increased system complexity and prover overhead, due to the program decoding and computation over arbitrary instruction sets. This can result in a longer proving time and larger proof size compared to specialized ZKP systems. However, as optimizations, engineering, and hardware continue to improve, the accessibility and easy workflow integration make zkVMs an attractive option for developers.

SNARKs and STARKs

SNARK (Succinct Non-interactive Arguments of Knowledge) and STARK (Scalable Transparent Arguments of Knowledge) are broad categories of proof systems used in production today. They provide frameworks, libraries, and resources that developers can use to create and work with zero-knowledge proofs. Depending on several factors, such as proof size, verification time, security properties, etc, developers can select the proof systems that best fit their development needs.

- SNARK: Provides smaller proof sizes and faster verification, and is more suitable for well-defined computations. The disadvantages are that many SNARKs require a trusted setup and that implementation demands considerable expertise.

- STARK: Offers scalability and performance for large computations, is considered plausibly quantum-resistant, and does not require a trusted setup. However, STARKs have larger proof sizes, take longer to produce, and may incur higher verification costs on-chain.

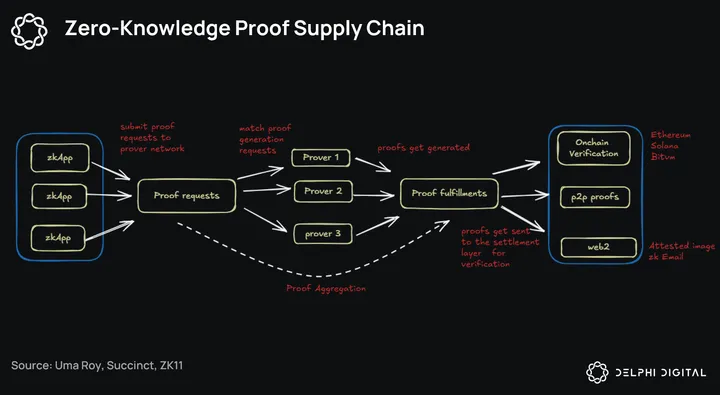

Rise of the ZKPs Supply Chain:

Given the complexity of implementing zero-knowledge proofs and the various trade-offs to be considered, there has been a conscious effort to redefine the ZKP supply chain. The aim is to provide better tooling and improve the developer and end-user experience.

While this doesn’t detract much from the original framework, the key difference is dedicated networks for functions across the value chain. These networks, optimized and specialized for their specific workloads, can bring forward better optionality, lower the barrier for ZKP deployment, and ultimately bring ZK closer to the adoption endgame.

Current System drawbacks and inefficiencies

While applied ZKPs have grown tremendously over the last few years, system inefficiencies are still major hurdles that affect technology adoption. As we mentioned in the intro, two standout issues are the high verification cost and the lack of flexibility for various proof systems, both of which hamper innovation and adoption of ZKP technology.

Take a look at Ethereum, for example. Ethereum is a generalized smart contract platform, allowing for permissionless program deployments. It’s also the ecosystem with the highest density in terms of applications and research regarding ZKPs.

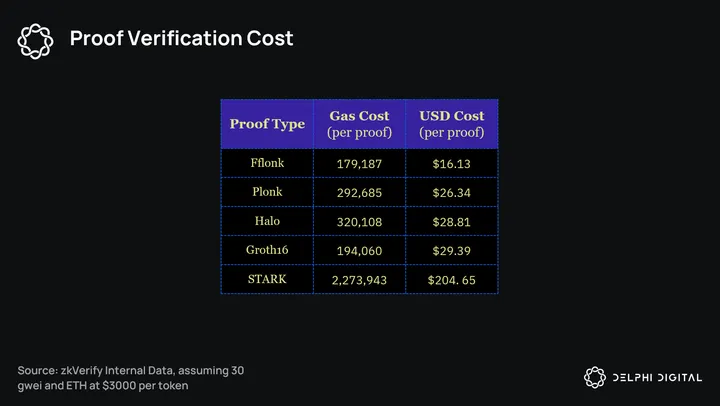

A standard ETH transfer costs a fixed 21,000 gas. Against that baseline, proof verification on Ethereum is typically 9 times (Fflonk proof) to 100 times (STARK proof) more expensive. As this is a per-proof cost, the direct expense paid to Ethereum by infra teams and applications using ZKPs is significant at scale.

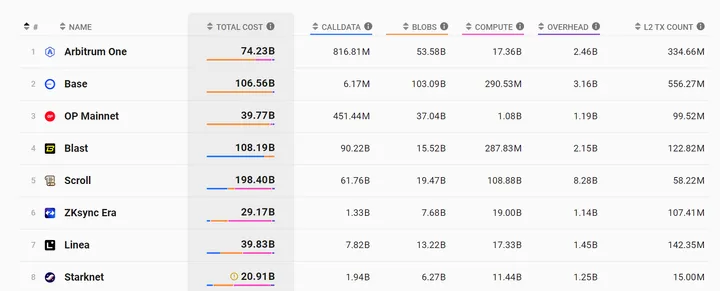

Source: L2BEAT, 6-month range 02/2024 – 08/2024

Source: L2BEAT, 6-month range 02/2024 – 08/2024

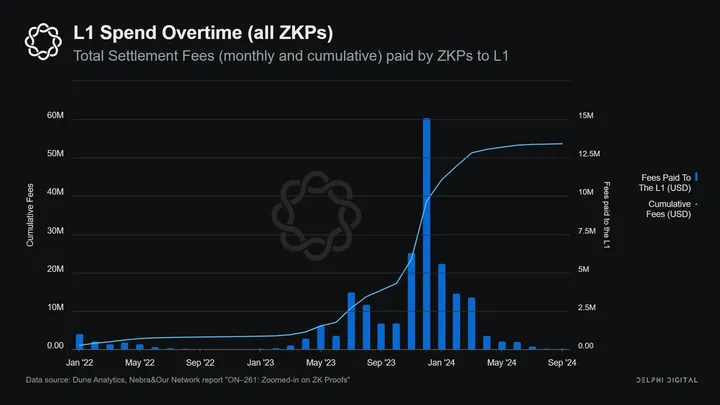

In less than 2 years, over $53M in settlement fees have been paid to the Ethereum L1, with ZKP infrastructure dominating apps in terms of utilization. It’s also important to acknowledge that this figure represents a period where user activities, as measured by transaction counts, are lower on zk rollups compared to their optimistic counterparts. This is due to the technical complexity of zk rollups, which results in slower go-to-market timelines. For instance, zkSync Era launched in June 2023, a year and a half later than Optimism (Dec 2021) and two years after Arbitrum (May 2021).

As we expect user activities on zk rollups to reach parity over the next few years, the total ZKP settlement payout to Ethereum L1 is anticipated to be a multiple of the $53M figure above. For teams looking to utilize ZKPs as part of their infrastructure, this is a huge operating cost.

The high cost of proofs also impacts proof throughput and utilization on Ethereum. Ethereum targets 15M gas per block, with a maximum of 30M gas at full capacity. Given that a Groth16 proof costs around 200,000 gas to verify, a block operating at 30M gas could theoretically contain 150 Groth16 proofs. At Ethereum’s 12s block time, this translates to a theoretical ~12 proofs per second. This capacity vastly exceeds Ethereum’s actual performance.

In reality, Ethereum’s throughput is much lower than the theoretical capacity. While calculations based on the 21,000-gas transfer cost suggest a throughput of 119 tps, the actual number is only 12-15 tps. Applying this same ratio (roughly one tenth) to proof throughput gives about 1.2 proofs per second for Groth16 proofs, and a much lower number for more expensive proof types like STARK proofs.
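The arithmetic behind these estimates can be reproduced in a few lines. The inputs are the figures quoted above; taking 12 tps (the lower end of the observed 12-15 tps range) as the observed throughput is an assumption made here for the calculation.

```python
BLOCK_GAS_LIMIT = 30_000_000   # maximum gas per block
BLOCK_TIME_S = 12              # seconds per block
TRANSFER_GAS = 21_000          # gas for a plain transfer
GROTH16_GAS = 200_000          # approximate gas to verify one Groth16 proof

def per_second(gas_per_item: int) -> float:
    """Items per second if a full block held nothing but this item type."""
    return BLOCK_GAS_LIMIT / gas_per_item / BLOCK_TIME_S

theoretical_tps = per_second(TRANSFER_GAS)
theoretical_proofs = per_second(GROTH16_GAS)

observed_tps = 12  # assumption: lower end of the observed range
utilization = observed_tps / theoretical_tps
effective_proofs = theoretical_proofs * utilization

print(f"theoretical transfers/s: {theoretical_tps:.0f}")
print(f"theoretical Groth16 proofs/s: {theoretical_proofs:.1f}")
print(f"observed/theoretical ratio: {utilization:.2f}")
print(f"effective Groth16 proofs/s: {effective_proofs:.2f}")
```

With these inputs the effective figure lands between 1.2 and 1.3 proofs per second, consistent with the estimate in the text.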

This reduced throughput can be explained by examining Ethereum’s block-building mechanism. On Ethereum, multiple applications with varying degrees of gas consumption compete for the same blockspace. Their transactions are executed sequentially, with the ordering for block inclusion determined via a gas price auction. Users can pay higher priority fees to improve their chances of timely block inclusion. This, combined with the already high cost per proof, results in low proof throughput on Ethereum.

The heart of the problem

At a high level, these issues are effects of the lack of precompiles. Precompiles are built-in functions or operations implemented at the protocol level that optimize the execution of complex computations. They are typically more efficient in terms of computational resources when compared to equivalent operations directly in smart contract code or bytecode.

In the context of ZKPs, precompiles are needed to keep the cost of operations low. As a reminder, ZKPs are computationally intensive, due to their nature of execution in multiple basic arithmetic steps. Implementing that in blockchain systems exacerbates the operational difficulty, given that developers need to transform the ZKPs code to be compatible with the current runtime environments. Without precompiles, the operational cost for ZKPs becomes prohibitively expensive.

The computationally intensive nature of ZKPs also has implications for network congestion and resource allocation. Given the fixed block size of these systems, resource-intensive applications like ZKPs can distort the fee market, as they consume a disproportionate amount of blockchain resources. This leads to a crowding-out effect, as applications struggle to secure access to blockspace. Consequently, network throughput diminishes, and user experience deteriorates. In this context, precompiles emerge as a solution. Because they optimize operations and increase computational efficiency, precompiles can free up additional block space. This helps mitigate the trade-off between the scalability benefits of ZKPs and network performance.

It’s important to note that BN254 support was introduced in 2017, via the Byzantium hard fork. Over the past 7 years, despite the introduction and maturation of STARKs as a more performant ZKP system, no new precompiles have been added to Ethereum for ZKP verification support.

This is not surprising, as adding new precompiles to the system requires a hard fork: a significant network update that requires careful planning and coordination with participants in the ecosystem. A hard fork is a radical change to the blockchain’s protocol rules that creates a new version of the blockchain that is incompatible with older software versions. By including new precompiles, the core protocol changes how certain operations are handled, which in turn changes how consensus on new state updates is achieved. The old software, without the new precompiles, cannot validate state changes coming from the new upgrade. This results in a fork, creating two versions of the blockchain state. The validators essentially have to choose which version of the state update to follow. In many blockchain systems, a hard fork is only accepted when all nodes agree to the upgrade, and the precompiles are implemented uniformly across all nodes.

Introducing a hard fork can have ideological and economic consequences, thus requiring careful planning, testing, and discussion within the community. An example of a hard fork is the Ethereum Merge, where the protocol changed its consensus mechanism from PoW to PoS. While the mention of PoS for the Ethereum system dates back to the whitepaper in 2013, it took active development starting in 2017 for it to be finalized in the Paris upgrade (September 2022). Many who followed the event saw that, given the nature of the change, careful planning took center stage. The same goes for introducing major code changes, like precompiles, as they can have a direct impact on every stakeholder in the ecosystem.

Given the significance of hard forks, upgrading the protocol for better precompiles is a strenuous and time-consuming endeavor. This runs counter to the accelerated development of the ZKP industry: it took only 7 years for the field to go from being called “moon math” in 2017 to the plethora of generalized zkVMs in production in 2024. Architecture limitations impose constraints on developers looking to utilize ZKPs in production and hinder their ability to leverage the latest advancements in the field. These limitations also prompt developers to come up with workarounds, such as STARK-to-SNARK conversion, which require additional computation resources.

zkVerify

The remainder of this report will explore the inner workings of zkVerify, outline its value propositions, and detail how value accrual occurs. At a high level, zkVerify proposes to bring verification outside of the protocol and add precompiles as needed to address the aforementioned challenges and inefficiencies.

While the industry has been focused on improving prover efficiency, zkVerify has positioned itself as an optimizer for the verification stage. From a more nuanced perspective, zkVerify is attempting to directly challenge the current ZKP supply chain. Its hyperefficiency allows the project to prioritize interoperability and accelerate developer onboarding to ZKPs, thus enabling novel use cases that bridge the web2 and the web3 world.

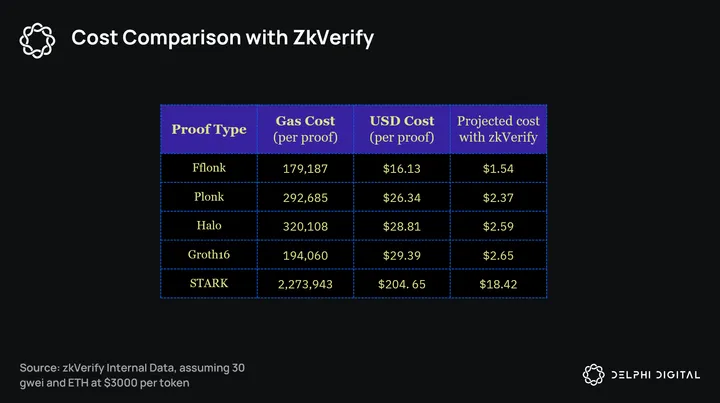

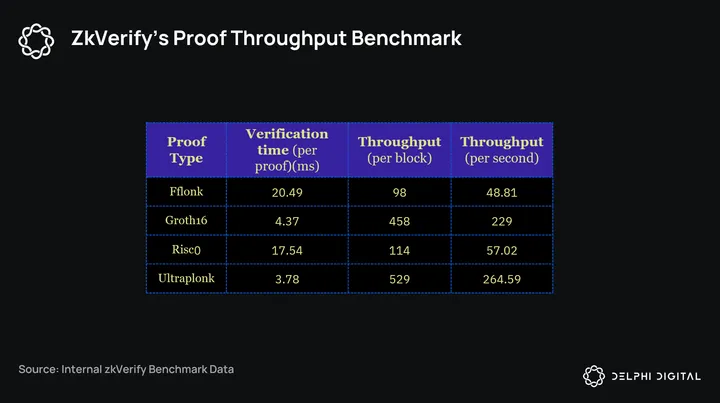

The table below represents benchmark data, demonstrating remarkable efficiency and scalability compared with performing the same operations directly on Ethereum. With zkVerify, developers can simplify their workflows and reduce the operational overhead of working with ZKPs, including risk, cost, maintenance, and platform dependencies.

Architecture Overview:

zkVerify is a Substrate-based L1 blockchain, specialized in zero-knowledge proof verification, with the goal of becoming the universal verification layer for all verifiable computing. It is designed to handle zero-knowledge proofs coming from various sources. While these proofs will have different characteristics, including formats, proof sizes, recursive compositions, and circuit complexity, zkVerify will be able to handle all of them with relative ease. The system is designed to offload the complexity of dealing with ZKPs, while also enhancing composability with different tech stacks.

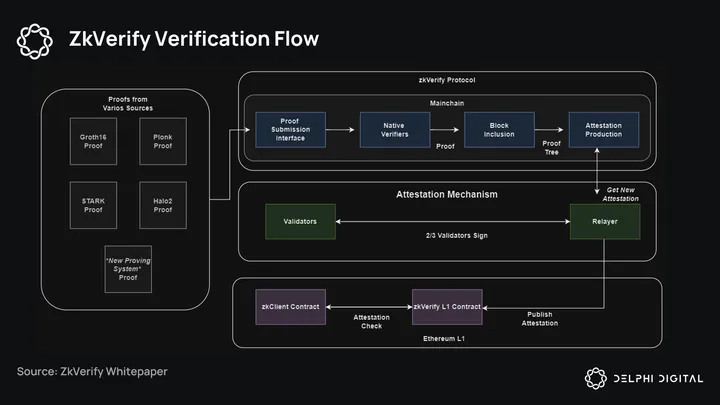

At a high level, there are 4 core components to zkVerify:

- Proof Submission Interface: This is the interface (for transactions and RPC calls) that receives heterogeneous proofs from various sources seeking proof verification.

- Mainchain: This is the L1 Proof-of-Stake chain, whose main responsibilities are to receive, verify, and store validity proofs. It houses the verifier modules for different proving systems such as Fflonk and Groth16, ensures that the proof is included in a block, and then creates the zkVerify Merkle Tree.

- Attestation mechanism: This refers to the protocol that creates and publishes an attestation, which contains the Merkle root (of a heterogeneous proof tree), onto the zkVerify L1 contract once a given policy is met.

- zkVerify L1 Contract: The core responsibility of this smart contract in Ethereum is to store new attestations, validate them, and provide capabilities for users to verify that their proof is part of the attestation.

zkVerify’s Verification flow:

The verification flow occurs as follows:

Proof submission: Proof Submitters send their verification requests to the Proof Submission Interface.

Proof Verification: Once submitted, the proof is verified via a Native Verifier (written in Rust). If the proof is valid, it is relayed by the consensus and eventually included in a Mainchain block; otherwise, the transaction reverts with an error. To deter denial-of-service attacks, failed transactions are also included in a block, and the submitter still pays a fee.

Block Production: zkVerify uses BABE (Blind Assignment for Block Extension) as a Block Authoring algorithm. BABE provides slot-based block authoring with a known set of validators that produce at least one block per slot. Slot assignment is based on the evaluation of a Verifiable Random Function (VRF). Each validator is assigned a weight for an epoch. This epoch is broken up into slots and the validator evaluates its VRF at each slot. For each slot that the validator’s VRF output is below its relative weight, it is allowed to author a block as a primary author. zkVerify also leverages secondary slots so that every slot will have at least one block produced resulting in a constant block time. Blocks produced via BABE are considered tentative until finalized by GRANDPA.
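The slot-assignment rule can be sketched as follows. This is a simplified illustration only: a SHA-256 hash stands in for a real VRF (which would also produce a publicly checkable proof), the threshold is the raw relative weight rather than BABE’s actual threshold formula, and the round-robin secondary author is an assumption made for the example. All names are hypothetical.

```python
import hashlib

SCALE = 2**64  # range of the pseudo-random value

def vrf_stand_in(secret: bytes, epoch_randomness: bytes, slot: int) -> int:
    """Stand-in for a VRF: a per-validator pseudo-random integer in [0, SCALE)."""
    digest = hashlib.sha256(secret + epoch_randomness + slot.to_bytes(8, "big")).digest()
    return int.from_bytes(digest[:8], "big")

def is_primary_author(secret, epoch_randomness, slot, relative_weight) -> bool:
    """A validator may author as primary if its output falls below its weight."""
    return vrf_stand_in(secret, epoch_randomness, slot) < int(relative_weight * SCALE)

def slot_authors(validators, epoch_randomness, slot):
    """validators: list of (name, secret, relative_weight) tuples."""
    primaries = [
        name for name, secret, weight in validators
        if is_primary_author(secret, epoch_randomness, slot, weight)
    ]
    if primaries:
        return primaries
    # Secondary slot: fall back to a deterministic author so no slot is empty.
    return [validators[slot % len(validators)][0]]

validators = [("alice", b"a-key", 0.3), ("bob", b"b-key", 0.3), ("carol", b"c-key", 0.3)]
for slot in range(5):
    print(slot, slot_authors(validators, b"epoch-42", slot))
```

Because each slot falls back to a secondary author when no primary wins, every slot yields at least one block, which is what keeps the block time constant.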

Block Finalization: GRANDPA (GHOST-based Recursive Ancestor Deriving Prefix Agreement) is a separate mechanism for block finalization that runs alongside BABE. Validators participate in rounds of voting to decide on the block that should be finalized. Each validator casts a vote for a block that it believes can be finalized, which implicitly includes votes for all ancestors of that block on the blockchain.

- Vote rules: GRANDPA uses a variation of the Greedy Heaviest Observed Sub-Tree (GHOST) rule to select the block that has the majority of votes from validators. The GHOST rule helps select the block that is on the heaviest (most weighted) chain, which indicates the chain with the most cumulative support from validators. In this case, the support/weight is the validator’s staked amount.

- Vote Process: There are 3 steps to the process. (1) Pre-vote: validators vote on the block they consider should be finalized, based on the blocks they’ve seen and their current view of the chain. (2) Pre-commit: validators pre-commit to a block if it is a descendant of the highest block they have seen justified in the pre-vote phase. A block is justified when more than 2/3 of the validators have pre-voted for it, either directly or for a descendant of it. (3) Finalization: once more than 2/3 of validators have pre-committed to a block, that block is considered finalized. This means that the block and all its ancestors are agreed upon and will never be reverted.
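The justification rule, where a vote for a block also counts for all of its ancestors, can be sketched as below. For simplicity this example gives every validator one equal vote rather than weighting by stake, and it models only a single pre-vote tally. The block names and data structures are hypothetical.

```python
def ancestors(block, parent):
    """Yield a block followed by each of its ancestors back to genesis."""
    while block is not None:
        yield block
        block = parent.get(block)

def highest_justified(votes, parent, n_validators):
    """Return the deepest block supported by more than 2/3 of validators.

    votes:  mapping of validator -> block it pre-voted for
    parent: mapping of block -> parent block (genesis maps to None)
    """
    support = {}
    for voted_block in votes.values():
        # A vote for a block implicitly supports every ancestor of that block.
        for block in ancestors(voted_block, parent):
            support[block] = support.get(block, 0) + 1

    justified = [b for b, s in support.items() if 3 * s > 2 * n_validators]
    depth = lambda b: sum(1 for _ in ancestors(b, parent))
    return max(justified, key=depth, default=None)

# genesis <- A <- B, with a competing fork A <- C
parent = {"genesis": None, "A": "genesis", "B": "A", "C": "A"}

agree = {"v1": "B", "v2": "B", "v3": "C", "v4": "B"}
split = {"v1": "B", "v2": "B", "v3": "C", "v4": "C"}
print(highest_justified(agree, parent, 4))
print(highest_justified(split, parent, 4))
```

In the first tally three of four validators support `B`, so `B` is justified. In the second the fork splits the vote evenly, so only the common ancestor `A` clears the 2/3 threshold.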

When the block in which the proof was posted is finalized, a ProofVerified event is emitted, containing a proof_leaf and an attestation_id value.

Attestation: An attestation is produced based on the attestation size and frequency of submission. Once the condition for attestation is met, an authorized relayer will publish the attestation to the verification smart contract on the L1. Users will have to pay for the cost of posting the attestation on the destination chain, which will fluctuate based on specific integrations.

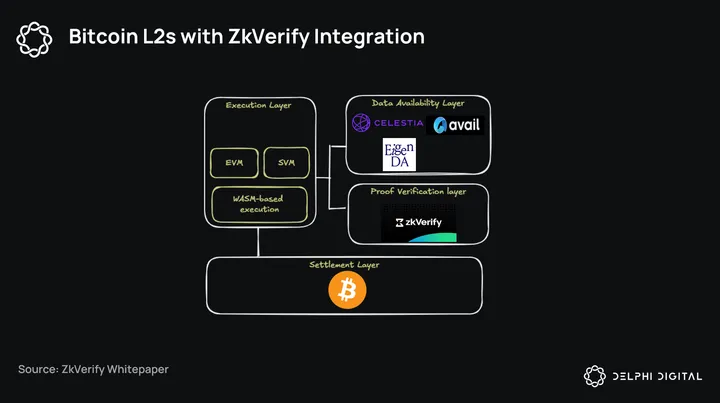

Integration

Here, we will take a look at an example of what integration between zkVerify and a rollup on Bitcoin would look like. While the implementation might differ between protocols, the underlying pattern of out-of-protocol verification should be similar for all other applications and infrastructure pieces looking to utilize zkVerify for cheap, fast, and native verification.

The zk(e)VM serves as an execution layer, where the transactions are executed and then batched. This batch will be used as input to create a proof to be verified by the base layer. The BitVM will handle the verification of this proof in an interactive manner, with most of the challenge-and-response procedures happening offchain. Should there be a dispute in computation mismatch, the resolution will occur on Bitcoin, with both parties committing and revealing specific computations.

Within this system, zkVerify can function as a decentralized proof verification network for proofs originating from Bitcoin L2s, thus offering additional guarantees beyond the optimistic assumptions.

Architecture Deep-dive

Given our basic understanding of how zkVerify works, it’s a good time to dive deeper into the nuances and understand how zkVerify reduces developer friction, improves cost efficiency, enhances interoperability, and positions itself to capture emerging web2 opportunities.

Proof Verification Design:

The proof verification mechanism is handled by the Mainchain, an L1 built with the Substrate Framework, pioneered in the Polkadot ecosystem.

As a Substrate-based L1, zkVerify inherits the flexibility of custom design logic that comes with the framework. This provides several advantages for overcoming the aforementioned issues:

- Fast-Tracked Innovation: Instead of being constrained and rigid like the EVM, the execution function of zkVerify can be easily upgraded to support new proof systems. This is done by integrating new pallets (custom programming logic) directly into the WASM runtime (a WebAssembly-based execution environment) without requiring a hard fork. zkVerify can quickly adapt to new progress within the field of ZKPs, thus helping teams accelerate the timeline and experimentation for ZKPs and blockchains. With zkVerify, developers no longer need to worry about system complexities and dependencies that can affect product stability and development timelines, since upgrading the ZKP systems is natively integrated into zkVerify.

- System Flexibility: Developers today have limited choices of ZKP systems and have to work around system dependencies, given the constraints of existing virtual machine designs. For instance, developers on Ethereum today are recommended to use proof systems that utilize KZG commitments (such as various Plonk-based SNARKs), as it’s the most cost-effective solution after the implementation of EIP-4844. However, not all proof systems use this commitment scheme. Proof systems using STARKs, for example, rely on different commitment techniques. To implement STARK-based systems on Ethereum today, developers must perform a STARK-to-SNARK conversion, which increases the required computation steps. For zkVerify, pallets can be designed as verifiers that take on a specific proof system with precompiles embedded, making the aforementioned conversion unnecessary. This approach can help simplify ZKP implementation for developers, as zkVerify can handle native verification for different systems and route the verification to the desired destination for settlement. For developers, this means optimized, native ZKP implementation and experimentation based on product needs, instead of being limited by current system constraints.

- Optimized Design: zkVerify’s architecture as a dedicated Layer 1 (L1) blockchain enables highly efficient Zero-Knowledge Proof (ZKP) operations. By focusing solely on proof verification and excluding smart contract capabilities, zkVerify eliminates concerns about competing for block space with other applications. This specialized design allows for rapid execution of complex proof systems and a significant reduction in operational cost. Once a proof is sent to the Proof Submission Interface, the runtime verifies it and queues it for block production. The entire process until finalization takes 6 seconds, with 2s for verification and 4s for chain operations.

- Throughput increase for proof verification: This optimized design enhances proof verification throughput. Unlike Ethereum, zkVerify dedicates its entire block space to proof verification, allocating 2s for verification and 4s for block production. Benchmark data shows promising results, with proof verification taking milliseconds per proof. zkVerify has a 2-second block time, which translates to substantially higher proof throughput compared to Ethereum’s capabilities. At the theoretical maximum throughput for blocks containing a single proof type, the capacity is 12.52 proofs per second for Groth16 proofs, and 18.25 proofs per second for risc0 proofs (STARK-based).

Attestation mechanism:

Attestation is the action of bearing witness or formally certifying something is true or genuine. In the case of zkVerify, attestation serves as a validation to the underlying L1 that the proof(s) has been verified and included in a zkVerify block, thus authorizing zkApps to continue performing with already validated data. Proof verification can be quickly checked by querying the contract on the destination L1 to see if a proof has been included in a zkVerify block.

With zkVerify’s attestation mechanism, developers don’t need to build their own verifiers everywhere they want their dApps to function. They simply verify once with zkVerify and the results are sent everywhere. There are two steps to the Attestation Mechanism: Heterogeneous Aggregation and Relay to the Destination Chain.

Heterogeneous Aggregation

Cryptographic aggregation is a method to compress proofs to be checked by the verifier at the base layer. For zkVerify, given that the verification happens outside of the protocol, all that is needed is aggregation via a Merkle Tree, allowing for fast and efficient proof checking. Compared to the recursive method, heterogeneous aggregation achieves the same workload compression while requiring far less computational power and resources. As a result, zkVerify achieves lower operational cost for both developers implementing ZKPs and end users.

Every new zero-knowledge proof that is verified and included in a zkVerify block is hashed into a leaf and appended to the Merkle Tree, along with the attestation_id of the attestation that will include the proof. Once the condition for publication is met, the Merkle Tree for the attestation_id is created and sent via the Relayer to the base layer verification contract. The attestation data structure is a digitally signed message that contains:

- The Merkle root of the zkVerify Merkle tree that contains the proofs as leaves, and

- The Attestation ID, used for synchronization, identification, and security purposes.

Aggregation via Merkle tree. Source: zkVerify Whitepaper
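The aggregation and inclusion check described above can be illustrated with a minimal sketch. It assumes SHA-256 as a stand-in hash, duplication of the last node on odd-sized levels, and an unsigned dictionary as the attestation. None of these choices are confirmed by the source, and all function names are hypothetical.

```python
import hashlib
from typing import List, Tuple


def h(data: bytes) -> bytes:
    # Stand-in hash; the hash function zkVerify actually uses is not specified here.
    return hashlib.sha256(data).digest()


def _next_level(level: List[bytes]) -> List[bytes]:
    # Assumption: pad odd-sized levels by duplicating the last node.
    if len(level) % 2 == 1:
        level = level + [level[-1]]
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: List[bytes]) -> bytes:
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = list(leaves)
    while len(level) > 1:
        level = _next_level(level)
    return level[0]


def merkle_path(leaves: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Sibling hashes from leaf to root, each flagged True if the sibling is on the left."""
    path = []
    level = list(leaves)
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 == 1 else level
        sibling = index ^ 1
        path.append((padded[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return path


def verify_inclusion(leaf: bytes, path: List[Tuple[bytes, bool]], root: bytes) -> bool:
    node = leaf
    for sibling, sibling_is_left in path:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


# Hash each verified proof into a leaf, then build the (unsigned) attestation.
proofs = [b"proof-a", b"proof-b", b"proof-c", b"proof-d", b"proof-e"]
leaves = [h(p) for p in proofs]
attestation = {"attestation_id": 1, "merkle_root": merkle_root(leaves)}

path = merkle_path(leaves, 2)
print(verify_inclusion(leaves[2], path, attestation["merkle_root"]))
```

Only the root needs to be published to the destination chain. A proof submitter who holds the leaf and its sibling path can show inclusion with a number of hashes that grows logarithmically with the number of proofs.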

Attestation Policy

The policy leading to the publication of attestation to the base layer smart contract is met when one of the following rules is satisfied:

- The last attestation contains N proofs, with N being the attestation size.

- The last published attestation is older than T seconds, and there is at least one proof in the new tree.
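The two rules can be expressed as a single predicate. This is a minimal sketch: the function and parameter names are hypothetical, and reading "contains N proofs" as "at least N" is an assumption.

```python
def should_publish(proofs_in_tree: int,
                   seconds_since_last_publish: float,
                   n: int,
                   t: float) -> bool:
    """Return True when either publication rule is satisfied."""
    # Rule 1: the pending attestation has reached the attestation size N.
    if proofs_in_tree >= n:
        return True
    # Rule 2: the last publication is older than T seconds
    # and the new tree holds at least one proof.
    return seconds_since_last_publish > t and proofs_in_tree >= 1


print(should_publish(proofs_in_tree=3, seconds_since_last_publish=700.0, n=16, t=600.0))
```

The time-based rule bounds how long a proof waits during periods of low demand, while the size-based rule lets the per-attestation posting cost be shared across more proofs when demand is high.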

We can envision different policies for attestation publication on various destination chains. Users will have to pay for the cost of posting the attestation on the destination chain, which will fluctuate based on specific integrations. Ideally, this policy would change dynamically based on the user demand and underlying changes to the base layer.

The relayer:

The relayer handles the publication of the attestation to the verification smart contract at the destination chain. Given that the project is still in its early innings, the relayer will start as an authorized relayer from Horizen Labs publishing attestations on various destination chains.

Value proposition

The key takeaways from our Deep-dive Architecture Analysis can be summarized into two key points:

Increased Interoperability, Hyper Efficiency, and New Markets Expansion

zkVerify’s main value proposition is its ability to reduce developer friction, improve cost efficiency, and increase interoperability across different systems. In this novel architecture design, proof verification happens seamlessly, and is not limited by the functionality and design choice of the base layer.

The downstream effect is more efficient ZKP deployment, not only through lower overhead but also through less developer friction and less work auditing and maintaining these complex tech stacks. zkVerify is well-positioned to be the go-to partner for applications and systems aiming to implement ZKPs at scale. The advantage of a single verification layer comes not only as performance efficiency but also as improved cross-system interoperability, which widens the design space open to applications. With zkVerify, the barrier to switching between ZKP systems and base layers is reduced significantly. Developers can verify once with zkVerify and have the results sent everywhere they want their applications to function.

Given the potential market growth for ZKPs, zkVerify is in a prime position to take up market share and become a dominant player in the years to come.

Simplified Developer Experience:

zkVerify’s architecture ensures fast integration and updates to support multiple proof systems without substantial time delay.

Instead of dealing with the intricacies and limitations of the underlying VM, whose workarounds are either computationally inefficient (STARK-to-SNARK conversion) or require careful planning and testing (hard forks), teams can leverage zkVerify to deploy the proof systems that best fit their product needs, with built-in precompile support via Pallet integrations.

Before zkVerify, developers using ZKPs had to manage their own verifier systems and work out the configurations that best fit the technicalities of the base layer. The problem becomes more complicated when product development must account for a multichain future. With zkVerify, all this maintenance work is abstracted away, allowing teams to focus solely on iteration. Developers now have stronger control over product development and maintenance using ZKPs. This will result in a faster go-to-market strategy and a better developer experience, helping accelerate application maturity. Moreover, the ability to upgrade and integrate new proof systems natively will help zkVerify capture market share in the ZKP space, giving it a first-mover advantage as one of the first toolkits to enable new proof systems in production. zkVerify aims to keep its verifiers up to date, and can consistently stay top-of-mind for developers looking to adopt ZKPs quickly and effectively within their systems.

Optimizations comparison:

zkVerify, alongside other protocols focused on zero-knowledge proofs, is part of a collective effort to optimize and simplify ZKP operations. This initiative aims to democratize ZKP technology, making it more accessible to a wider range of developers and end users. In this section, we compare zkVerify with other solutions currently on the market and show how zkVerify directly challenges the currently proposed ZKP supply chain design.

Proof markets, Prover networks VS zkVerify’s Proof Submission Interface

The fundamental difference between zkVerify and the newly proposed ZKP supply chain lies in the approach to optimization and the trade-offs each design accepts.

With proof markets and Prover networks, the focus is on proof generation, which is the largest component of ZKP cost. Similar to the MEV supply chain, proof markets rely on sophisticated actors with specialized hardware to amortize the cost of proof generation. While this design does overcome the cost bottleneck for generation, latency and finality will likely be traded away for cost, because the requester has to wait for the most cost-effective solution submitted by the market. Conversely, if the ZKP submitter prioritizes speed by splitting a proof for parallelization, there is an additional cost for re-joining the split proofs at the end of the proof generation process.

Assuming the rewards are distributed based on prover performance, a large portion will naturally accrue to the prover(s) with the most optimized stack, which becomes a centralizing force in this market. From a decentralization standpoint, an oligopoly of performant provers differs little from a single centralized prover. In addition, the security concerns can be challenging to navigate, given that proof generation happens outside the system. These include, but are not limited to, system complexity, additional trust assumptions, and inventory management. Additionally, systems using STARKs will still have to go through the STARK-to-SNARK conversion before verification, resulting in inefficient resource allocation.

zkVerify’s Proof Submission Interface offers a much simpler path by allowing provers to submit their proofs directly to the unified verification layer. Proofs reaching the submission interface are included in a transaction queue for processing. This, in conjunction with mechanisms like BABE and GRANDPA, provides the low latency and fast finality that many systems require. zkVerify, with its dedicated Pallets architecture for verification and built-in precompiles for operations, can also achieve a cost advantage competitive with that of prover networks.
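Conceptually, the submission path amounts to a queue whose entries are dispatched to a verifier chosen by proof system. The sketch below illustrates only that dispatch pattern. The registry, the function names, and the stub verifier are hypothetical and do not reflect zkVerify's actual pallet interfaces.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

# (proof, public_inputs) -> is the proof valid?
Verifier = Callable[[bytes, bytes], bool]


def _stub_verifier(proof: bytes, public_inputs: bytes) -> bool:
    # Placeholder only: a real verifier runs the proof system's verification algorithm.
    return len(proof) > 0


# Hypothetical registry: one native verifier per supported proof system.
VERIFIERS: Dict[str, Verifier] = {
    "groth16": _stub_verifier,
    "risc0": _stub_verifier,
}

queue: Deque[Tuple[str, bytes, bytes]] = deque()


def submit(system: str, proof: bytes, public_inputs: bytes) -> None:
    """Accept a proof into the queue if a verifier exists for its proof system."""
    if system not in VERIFIERS:
        raise ValueError(f"unsupported proof system: {system}")
    queue.append((system, proof, public_inputs))


def process_queue() -> List[bool]:
    """Verify queued proofs in submission order, each with its own native verifier."""
    results = []
    while queue:
        system, proof, public_inputs = queue.popleft()
        results.append(VERIFIERS[system](proof, public_inputs))
    return results


submit("groth16", b"\x01\x02", b"inputs")
submit("risc0", b"\x03\x04", b"inputs")
print(process_queue())
```

In this pattern, supporting a new proof system means registering one more verifier rather than converting proofs into a format the base layer already understands.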

Cryptographic Aggregation VS Heterogeneous Aggregation

At a high level, proof aggregation lets multiple proofs be aggregated into a single proof, either via recursion, or signature aggregation. The entire process is done cryptographically and outputs a single proof-of-proofs to be verified by the verification contract. Instead of evaluating each proof, the system can examine the validity of the proof-of-proofs and determine the computation correctness within the aggregated output.

While in theory the characteristics of ZKPs make proof-of-multiple-proofs a very elegant design, three issues arise in practical implementation:

- Cryptographic Compatibility: Different zero-knowledge proof systems often use varied cryptographic foundations, making direct combinations a challenge. This includes differences in underlying mathematical structures, security assumptions, and verification processes. Creating a system that unifies all different proof systems while maintaining the security guarantees of all component proofs is a major hurdle.

- Performance and Scalability: Aggregating multiple proofs introduces significant computational overhead. As the number of proofs increases, the next bottleneck is to keep proof sizes manageable and verification times short. Balancing these factors while maintaining the benefits of each proof system within the aggregation is a complex optimization problem.

- System Maintenance: The lack of standardized interfaces between different proof systems complicates aggregation efforts. Developing common standards for proof interoperability, managing various verification keys, and handling different versions or updates of proof systems present ongoing challenges for creating a robust and flexible proof-of-multiple-proofs system. For developers, this means heavy dependence on aggregator providers and constant maintenance of a complex system, which detracts from core product and user experience development.

zkVerify, on the other hand, introduces the concept of “heterogeneous aggregation”, in which aggregation happens regardless of the proof system. Rather than cryptographically compressing the verification workload via aggregation, zkVerify simply hashes the verified proofs into a Merkle tree and publishes the Merkle root to the base layer for attestation, provided that the publication policy is met. Proof submitters can check whether the proof has been finalized by querying the attestation contract on the base layer.

By hashing all the proofs together into a Merkle tree, zkVerify provides a similar form of workload compression without incurring additional computational complexity, and enables efficient checking of proof inclusion. For end users and developers, this means cheap and fast proof verification. Once a proof is confirmed to be included in the Merkle tree, the proof submitter is assured that zkVerify has verified the proof as valid and included it in a zkVerify mainchain block.

Understanding tradeoffs

Most implementations in production today require the verification contract to be deployed directly on the L1, especially in the case of rollups. By moving the verification process outside the base layer, zkVerify trades some of the base layer’s trust assumptions for speed and cost.

In the case of Rollups, users can trust that the transactions on L2s are secured and non-revertible, based on Ethereum’s cryptographic architecture and underlying incentives. With zkVerify, Ethereum users are trusting an outside party, instead of ETH validators, to ensure the integrity of the proof system. This doesn’t imply that zkVerify lacks security (as the combination of ZKPs, BABE, and GRANDPA offers a robust foundation). Rather, the point is that the additional trust layer introduces more complexity to the application of ZKPs. As a result, zkVerify focuses on use cases that demand high-frequency data feeds and computation compression, catering to sectors where speed and efficiency are critical. To help ease the mind, however, let’s examine the security combination of ZKPs and BABE + GRANDPA in greater detail:

- ZKPs, as we have covered throughout this report, provide hardness guarantees. This means that introducing a faulty computation into the system is extremely hard, given that only prover(s) with the correct input can convince the verifier of a valid proof (soundness). STARKs, given their hash-based commitment scheme, can further make the system quantum resistant (quantum computers can’t guess the right answer to the computation).

- With ZKPs already introducing preemptive validation (challenge first, confirm after), BABE and GRANDPA add randomization and majority confirmation, further strengthening the security assumptions. BABE selects the block producer(s), which in the case of zkVerify are also the executor(s) of ZKP verification, based on the value of a VRF before the block is broadcast to the network. GRANDPA only reaches finality (the block is added to the canonical chain) when two-thirds of the validator set agrees on the block content.
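The finality condition in the second point can be sketched as a simple threshold check. This is a deliberately simplified illustration: it counts equal-weight votes for a single block, whereas GRANDPA votes on chains over multiple rounds. Treating "two-thirds" as a strict supermajority (more than two-thirds) is an assumption, and the function name is hypothetical.

```python
def has_supermajority(votes_for_block: int, validator_count: int) -> bool:
    """True when strictly more than two-thirds of validators voted for the block."""
    if validator_count <= 0:
        raise ValueError("validator_count must be positive")
    if not 0 <= votes_for_block <= validator_count:
        raise ValueError("votes_for_block must be between 0 and validator_count")
    # Integer arithmetic avoids floating-point rounding at the threshold.
    return 3 * votes_for_block > 2 * validator_count


print(has_supermajority(votes_for_block=67, validator_count=100))
```

Under this assumption, a set of 100 validators needs at least 67 matching votes, so a block cannot be finalized while a third or more of the validators withhold their votes.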

Additionally, zkVerify implements Nominated Proof-of-Stake (NPoS). In NPoS, Nominators (token holders) can nominate a validator to participate in block production and validation by delegating their tokens and, in return, receive earnings distributed from the validator(s) that they back. NPoS also has slashing mechanisms to penalize malicious behavior by validators, so a violation affects the stakes of both the validators and their nominators. This mechanism gives participants more skin in the game and thus strengthens the system’s security and trust assumptions.

Initial Target Markets:

Below we will highlight the potential serviceable market that zkVerify can tap into. By focusing on these sectors early on as part of the GTM effort, zkVerify can be well prepared to capture the upside potential of technology adoption for zero-knowledge proofs.

Private DeFi

Decentralized Finance is one of the core sectors within crypto, enabling open and interoperable finance. Within just five years, the entire sector has reached a Total Value Locked of $126.004 Billion, with the total market cap of top projects coming in at $117.9 Billion.