Report Summary

Ethereum is the only major protocol building a scalable unified settlement and data availability layer

Rollups scale computation while leveraging Ethereum’s security

All roads lead to the endgame of centralized block production, decentralized trustless block validation, and censorship resistance

Innovations such as proposer-builder separation and weak statelessness unlock this separation of powers (building and validating) to achieve scalability without sacrificing security or decentralization

MEV is now front and center – numerous designs are planned to mitigate its harm and prevent its centralizing tendencies

Danksharding combines multiple avenues of cutting edge research to provide the scalable base layer required for Ethereum’s rollup-centric roadmap

I do expect danksharding to be implemented within our lifetimes

Introduction

I’ve been pretty skeptical on the timing of the merge ever since Vitalik said there’s a 50-75% chance people born today live to the year 3000 and he hopes to be immortal. But what the hell, let’s have some fun and look even further ahead to Ethereum’s ambitious roadmap anyway.

This is no quick-hitter article. If you want a broad yet nuanced understanding of Ethereum’s ambitious roadmap – give me an hour of your focus, and I’ll save you months of work.

Ethereum research is a lot to keep track of, but everything ultimately weaves into one overarching goal – scale computation without sacrificing decentralized validation.

Hopefully you’re familiar with Vitalik’s famous “Endgame.” He acknowledges that some centralization is needed to scale. The “C” word is scary in blockchain, but it’s true. We just need to keep that power in check with decentralized and trustless validation. No compromises here.

Specialized actors will build blocks for both the L1 and above. Ethereum remains incredibly secure through easy decentralized validation, and rollups inherit their security from the L1. Ethereum then provides settlement and data availability allowing rollups to scale. All of the research here ultimately looks to optimize these two roles while simultaneously making it easier than ever to fully validate the chain.

Here’s a glossary to shorten some words that will show up ~43531756765713534 times:

- DA – Data Availability

- DAS – Data Availability Sampling

- PBS – Proposer-builder Separation

- PDS – Proto-danksharding

- DS – Danksharding

- PoW – Proof of Work

- PoS – Proof of Stake

Part I – The Road to Danksharding

Hopefully you’ve heard by now that Ethereum has pivoted to a rollup-centric roadmap. No more execution shards – Ethereum will instead optimize for data-hungry rollups. This is achieved via data sharding (Ethereum’s plan, kind of) or big blocks (Celestia’s plan).

The consensus layer does not interpret the shard data. It has one job – ensure the data was made available.

I’ll assume familiarity with some basic concepts like rollups, fraud and ZK proofs, and why DA is important. If you’re unfamiliar or just need a refresher, Can’s recent Celestia report covers them.

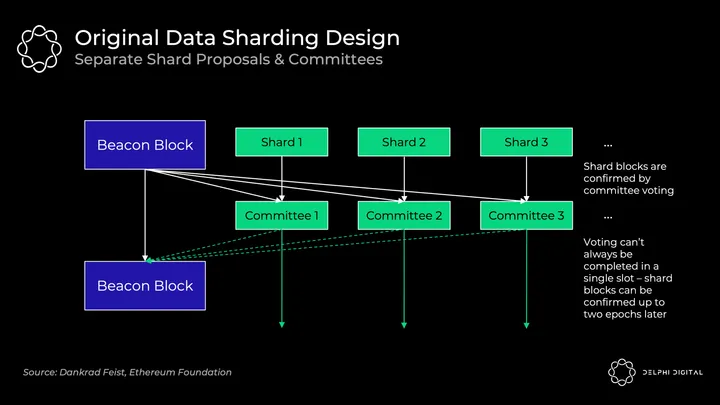

Original Data Sharding Design – Separate Shard Proposers

The design described here has been scrapped, but it’s valuable context. I’ll refer to this as “sharding 1.0” throughout for simplicity.

Each of the 64 shard blocks had separate proposers and committees rotating through from the validator set. They individually verify that their shard’s data was made available. This wouldn’t be DAS initially – it relies on the honest majority of each shard’s validator set to fully download the data.

This design introduces unnecessary complexity, worse UX, and attack vectors. Shuffling validators around between shards is tricky.

It also becomes very difficult to guarantee that voting will be completed within a single slot unless you introduce very tight synchrony assumptions. The Beacon block proposer needs to gather all of the individual committee votes, and there can be delays.

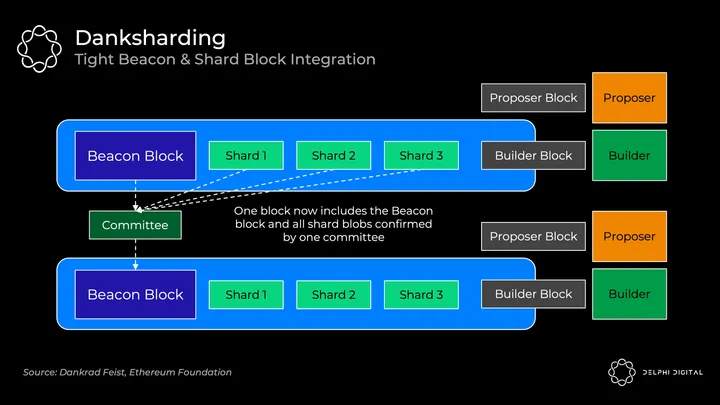

DS is completely different. Validators conduct DAS to confirm that all data is available (no more separate shard committees). A specialized builder creates one large block with the Beacon block and all shard data confirmed together. PBS is thus necessary for DS to remain decentralized (building that large block together is resource intensive).

Data Availability Sampling

Rollups post a ton of data, but we don’t want to burden nodes with downloading all of it. That would mean high resource requirements, thus hurting decentralization.

Instead, DAS allows nodes (even light clients) to easily and securely verify that all of it was made available without having to download all of it.

- Naive solution – Just check a bunch of random chunks from the block. If they all check out, I sign off. But what if you missed the one transaction that gives all your ETH to Sifu? Funds are no longer safu.

- Smart solution – Erasure code the data first. Extend the data using a Reed-Solomon code. This means the data is interpolated as a polynomial, and then we evaluate it at a number of additional places. That’s a mouthful, so let’s break it down.

Quick lesson for everyone else who forgot math class. (I promise this won’t be really scary math – I had to watch some Khan Academy videos to write these sections, but even I get it now).

Polynomials are expressions summing any finite number of terms of the form $cx^k$. The degree is the highest exponent. For example, $2x^3 + 6x^2 + 2x - 4$ is a polynomial of degree three. You can reconstruct any polynomial of degree $d$ from any $d+1$ coordinates that lie on that polynomial.

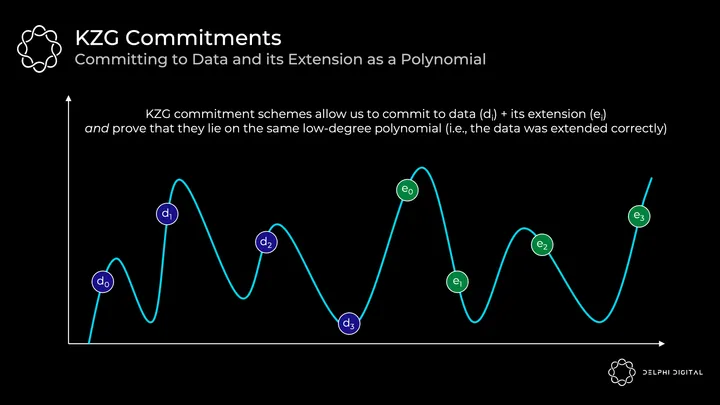

Now for a concrete example. Below we have four chunks of data (d0 through d3). These chunks of data can be mapped to evaluations of a polynomial f(X) at a given point. For example, f(0) = d0. Now you find the polynomial of minimal degree that runs through these evaluations. Since this is four chunks, we can find the polynomial of degree three. Then, we can extend this data to add four more evaluations (e0 through e3) which lie along the same polynomial.

Remember that key polynomial property – we can reconstruct it from any four points, not just our original four data chunks.
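Here's a minimal sketch of that extend-then-reconstruct idea. It's a toy, not how Ethereum does it: the small prime modulus `P`, the chunk values, and the helper names (`lagrange_eval`, `extend`, `reconstruct`) are all made up for illustration, and real implementations work over a much larger field with far faster algorithms.

```python
# Toy Reed-Solomon extension over a small prime field (illustrative only).
P = 65537  # hypothetical modulus; production systems use a much larger field


def lagrange_eval(points, x, p=P):
    """Evaluate at x the minimal-degree polynomial through `points`, mod p."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % p
                den = den * (xi - xj) % p
        # pow(den, -1, p) is the modular inverse (Python 3.8+)
        total = (total + yi * num * pow(den, -1, p)) % p
    return total


def extend(chunks, p=P):
    """Treat chunks as f(0)..f(n-1) and append f(n)..f(2n-1)."""
    n = len(chunks)
    points = list(enumerate(chunks))
    return list(chunks) + [lagrange_eval(points, x, p) for x in range(n, 2 * n)]


def reconstruct(known, n, p=P):
    """Recover the original n chunks from any n known (position, value) pairs."""
    if len(known) < n:
        raise ValueError("need at least n points to reconstruct")
    points = known[:n]
    return [lagrange_eval(points, x, p) for x in range(n)]


data = [7, 1, 9, 4]                      # d0..d3
coded = extend(data)                     # d0..d3 followed by e0..e3
survivors = [(i, coded[i]) for i in (1, 4, 6, 7)]  # any four of the eight
recovered = reconstruct(survivors, len(data))
```

Only one of the four original chunks survived here, yet `recovered` matches `data`, because any four points pin down the same degree-three polynomial.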

Back to our DAS. Now we only need to be sure that any 50% (4/8) of the erasure-coded data is available. From that, we can reconstruct the entire block.

So an attacker would have to hide >50% of the block to successfully trick DAS nodes into thinking the data was made available when it wasn’t.

The probability of <50% being available after many successful random samples is very small. If we successfully sampled the erasure coded data 30 times, then the probability that <50% is available is $2^{-30}$.
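The arithmetic behind that number is short enough to check yourself. This sketch assumes a simplified model that isn't spelled out above: each sample is independent, and an attacker hiding enough to block reconstruction leaves at most half of the chunks available. The function name is hypothetical.

```python
def false_availability_bound(samples, available_fraction=0.5):
    """Upper bound on the chance that every sample succeeds even though
    no more than `available_fraction` of the extended data is available.
    Assumes independent, uniformly random samples (a simplification)."""
    return available_fraction ** samples


bound = false_availability_bound(30)
```

With 30 samples the bound is exactly `2 ** -30`, a bit under one in a billion.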

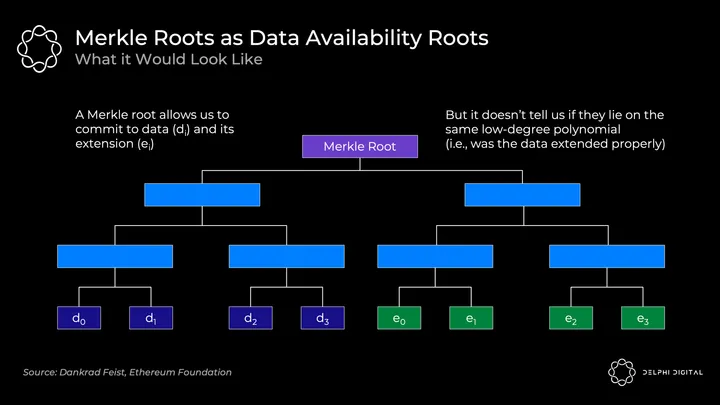

KZG Commitments

Ok, so we did a bunch of random samples and it was all available. But we have another problem – was the data erasure coded properly? Otherwise maybe the block producer just added 50% junk when they extended the block, and we sampled nonsense. In which case we wouldn’t actually be able to reconstruct the data.

Normally we just commit to large amounts of data by using a Merkle root. This is effective in proving inclusion of some data within a set.

However, we also need to know that all of the original and extended data lie on the same low-degree polynomial. A Merkle root does not prove this. So if you use this scheme, you also need fraud proofs in case it was done incorrectly.

Developers are approaching this from two directions:

- Celestia is going the fraud proof route. Somebody needs to watch, and if the block is erasure coded incorrectly they’ll submit a fraud proof to alert everyone. This requires the standard honest minority assumption and synchrony assumption (i.e., in addition to someone sending me the fraud proof, I also need to assume I’m connected and will receive it within a finite amount of time).

- Ethereum and Polygon Avail are going a new route – KZG commitments (a.k.a. Kate commitments). This removes the honest minority and synchrony assumptions that fraud proofs need for safety (though they’re still there for reconstruction, as we’ll cover shortly).

Other solutions exist, but they’re not being actively pursued. For example, you could use ZK-proofs. Unfortunately they’re computationally impractical (for now). However, they’re expected to improve over the next few years, so Ethereum will likely pivot to STARKs down the road because KZG commitments are not quantum-resistant.

Back to KZG commitments – these are a type of polynomial commitment scheme.

Commitment schemes are just a cryptographic way to provably commit to some values. The best metaphor is putting a letter inside a locked box and handing it to someone else. The letter can’t be changed once inside, but it can be opened with the key and proven. You commit to the letter, and the key is the proof.

In our case, we map all that original and extended data on an X,Y grid then find the minimal degree polynomial that runs through them (this process is called a Lagrange interpolation). This polynomial is what the prover will commit to.

Here are the key points:

- We have a “polynomial” f(X)

- The prover makes a “commitment” C(f) to the polynomial

- This relies on elliptic curve cryptography with a trusted setup. For a bit more detail on how this works, here’s a great thread from Bartek

- For any “evaluation” y = f(z) of this polynomial, the prover can compute a “proof” π(f,z)

- Given the commitment C(f), the proof π(f,z), any position z, and the evaluation y of the polynomial at z, a verifier can confirm that indeed f(z) = y

- Translation: the prover gives these pieces to any verifier, then the verifier can confirm that the evaluation of some point (where the evaluation represents the underlying data) correctly lies on the polynomial that was committed to

- This proves that the original data was extended correctly because all evaluations lie on the same polynomial

- Notice the verifier doesn’t need the polynomial f(X)

- Important property – this has O(1) commitment size, O(1) proof size, and O(1) verification time. Even for the prover, commitment and proof generation only scale at O(d) where d is the degree of the polynomial

- Translation: even as n (the number of values in X) increases (i.e., as the data set increases with larger shard blobs) – the commitments and proofs stay constant size, and verification takes a constant amount of effort

- The commitment C(f) and proof π(f,z) are both just one elliptic curve element on a pairing friendly curve (this will use BLS12-381). In this case, they would be only 48 bytes each (really small)

- So a prover commits to a huge amount of original and extended data (represented as many evaluations along the polynomial) at still just 48 bytes, and the proof will also just be 48 bytes

- TLDR – this is highly scalable
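For readers who want to see the shape of the check, here is the KZG construction as it's usually presented. The notation is my assumption and doesn't appear above: $s$ is the secret from the trusted setup, $[a]_1$ and $[a]_2$ denote $a$ times the generators of the two pairing groups, and $e$ is the pairing. Treat it as a sketch of the standard scheme rather than Ethereum's exact specification.

$$
C(f) = [f(s)]_1, \qquad q(X) = \frac{f(X) - y}{X - z}, \qquad \pi(f,z) = [q(s)]_1
$$

$$
e\big(\pi(f,z),\ [s - z]_2\big) = e\big(C(f) - [y]_1,\ [1]_2\big)
$$

The quotient $q(X)$ is only a polynomial when $f(z) = y$, which is why a valid proof can't be produced for a wrong evaluation. The verifier's work is a couple of pairings no matter how large the degree of $f$ is, which is where the O(1) verification comes from.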

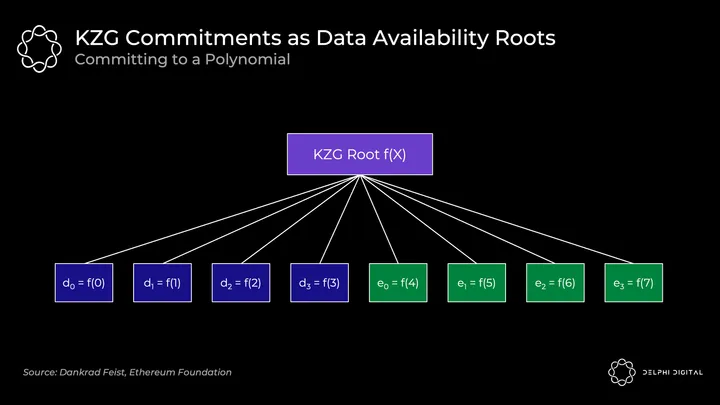

The KZG root (a polynomial commitment) is then analogous to a Merkle root (which is a vector commitment):

The original data is the polynomial f(X) evaluated at positions 0 to 3, giving f(0) to f(3), then we extend it by evaluating the polynomial at positions 4 to 7, giving f(4) to f(7). All points f(0) to f(7) are guaranteed to be on the same polynomial.

Bottom line: DAS allows us to check that erasure coded data was made available. KZG commitments prove to us that the original data was extended properly and commit to all of it.

Well done, that’s all for today’s algebra.

KZG Commitments vs. Fraud Proofs

Take a step back to compare the two approaches now that we understand how KZG works.

KZG disadvantages – it won’t be post-quantum secure, and it requires a trusted setup. These aren’t worrisome. STARKs provide a post-quantum alternative, and the trusted setup (which is open to participate in) requires only a single honest participant.

KZG advantages – lower latency than the fraud proof setup (though as noted Gasper won’t have fast finality anyway), and it ensures proper erasure coding without introducing the synchrony and honest minority assumptions inherent in fraud proofs.

However, consider that Ethereum will still reintroduce these assumptions for block reconstruction, so you’re not actually removing them. DA layers always need to plan for the scenario where the block was made available initially, but then nodes need to communicate with each other to piece it back together. This reconstruction requires two assumptions:

- You have enough nodes (light or full) sampling the data such that collectively they have enough to piece it back together. This is a fairly weak and unavoidable honest minority assumption, so not a huge concern.

- Reintroduces the synchrony assumption – the nodes need to be able to communicate within some period of time to put it back together.

Ethereum validators fully download shard blobs in PDS, and with DS they’ll only conduct DAS (downloading assigned rows and columns). Celestia will require validators to download the entire block.

Note that in either case, we need the synchrony assumption for reconstruction. In the event the block is only partially available, full nodes must communicate with other nodes to piece it back together.

The latency advantage of KZG would show up if Celestia ever wanted to move from requiring validators to download the entire data to only conducting DAS (though this shift isn’t currently planned). They would then need to implement KZG commitments as well – waiting for fraud proofs would mean significantly increasing the block intervals, and the danger of validators voting for an incorrectly encoded block would be much too high.

I recommend the below for deeper exploration of how KZG commitments work:

- A (Relatively Easy To Understand) Primer on Elliptic Curve Cryptography

- Exploring Elliptic Curve Pairings – Vitalik

- KZG polynomial commitments – Dankrad

- How do trusted setups work? – Vitalik

In-protocol Proposer-Builder Separation

Consensus nodes today (miners) and after the merge (validators) serve two roles. They build the actual block, then they propose it to other consensus nodes who validate it. Miners “vote” by building on top of the previous block, and after the merge validators will vote directly on blocks as valid or invalid.

PBS splits these up – it explicitly creates a new in-protocol builder role. Specialized builders will put together blocks and bid for proposers (validators) to select their block. This combats MEV’s centralizing force.

Recall Vitalik’s “Endgame” – all roads lead to centralized block production with trustless and decentralized validation. PBS codifies this. We need one honest builder to service the network for liveness and censorship resistance (and two for an efficient market), but validator sets require an honest majority. PBS makes the proposer role as easy as possible to support validator decentralization.

Builders receive priority fee tips plus whatever MEV they can extract. In an efficient market, competitive builders will then bid up to the full value they can extract from blocks (less their amortized costs such as powerful hardware, etc.). All value trickles down to the decentralized validator set – exactly what we want.

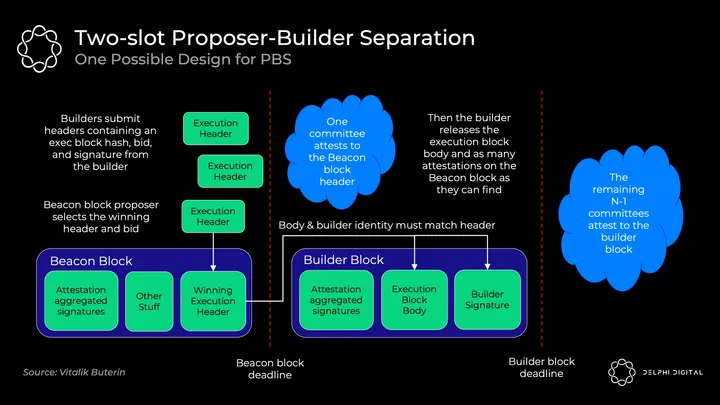

The exact PBS implementation is still being discussed, but two-slot PBS could look like this:

- Builders commit to block headers along with their bids

- Beacon block proposer chooses the winning header and bid. Proposer is paid the winning bid unconditionally, even if the builder fails to produce the body

- Committee of attestors confirm the winning header

- Builder reveals the winning body

- Separate committees of attestors elect the winning body (or vote that it was absent if the winning builder withholds it)

Proposers are selected from the validator set using the standard RANDAO mechanism. Then we use a commit-reveal scheme where the full block body isn’t revealed until the block header has been confirmed by the committee.

The commit-reveal is more efficient (sending around hundreds of full block bodies could overwhelm bandwidth on the p2p layer), and it also prevents MEV stealing. If builders were to submit their full block, another builder could see it, figure out that strategy, incorporate it, and quickly publish a better block. Additionally, sophisticated proposers could detect the MEV strategy used and copy it without compensating the builder. If this MEV stealing became the equilibrium it would incentivize merging the builder and proposer, so we avoid this using commit-reveal.

After the winning block header has been selected by the proposer, the committee confirms it and solidifies it in the fork choice rule. Then, the winning builder publishes their winning full “builder block” body. If it was published in time, the next committee will attest to it. If they fail to publish in time, they still pay the full bid to the proposer (and lose out on all of the MEV and fees). This unconditional payment removes the need for the proposer to trust the builder.
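The commit-reveal flow and the unconditional payment can be sketched in a few lines. This is a toy model under loud assumptions: the "header" is just a SHA-256 hash of the body, there are no committees or slots, and the names `Bid`, `commit`, `choose_winner`, and `settle` are hypothetical rather than anything from a spec.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Bid:
    builder: str
    amount: int   # what the builder pays the proposer
    header: str   # commitment to the hidden body (here: a SHA-256 hex digest)


def commit(body: bytes) -> str:
    """Stand-in for a block header: a binding commitment to the body."""
    return hashlib.sha256(body).hexdigest()


def choose_winner(bids):
    """The proposer sees only headers and bids, and picks the highest bid."""
    return max(bids, key=lambda b: b.amount)


def settle(winner: Bid, revealed_body):
    """Return (proposer_payment, body_accepted).

    The proposer is paid whether or not a matching body shows up in time.
    """
    accepted = revealed_body is not None and commit(revealed_body) == winner.header
    return winner.amount, accepted


bids = [
    Bid("builder-a", 5, commit(b"body-a")),
    Bid("builder-b", 7, commit(b"body-b")),
]
winner = choose_winner(bids)
on_time = settle(winner, b"body-b")   # body revealed and matches the header
withheld = settle(winner, None)       # builder never reveals
```

In both outcomes the proposer collects the bid of 7. Only the `body_accepted` flag differs, which is the point: the proposer never has to trust the builder.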

The downside of this “two-slot” design is latency. Blocks after the merge will be a fixed 12 seconds, so here we’d need 24 seconds for a full block time (two 12-second slots) if we don’t want to introduce any new assumptions. Going with 8 seconds for each slot (16-second block times) appears to be a safe compromise, though research is ongoing.

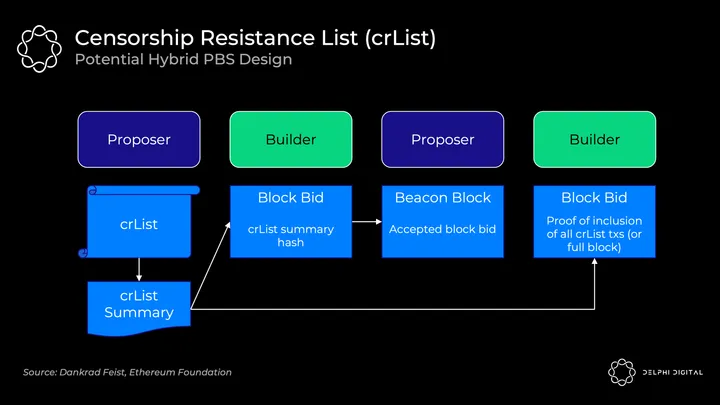

Censorship Resistance List (crList)

PBS unfortunately gives builders heightened ability to censor transactions. Maybe the builder just doesn’t like you, so they ignore your transactions. Maybe they’re so good at their job that all the other builders gave up, or maybe they’ll just overbid for blocks because they really don’t like you.

crLists put a check on this power. The exact implementation is again an open design space, but “hybrid PBS” appears to be the favorite. Proposers specify a list of all eligible transactions they see in the mempool, and the builder will be forced to include them (unless the block is full):

- Proposer publishes a crList and crList summary which includes all eligible transactions

- Builder creates a proposed block body then submits a bid which includes a hash of the crList summary proving they’ve seen it

- Proposer accepts the winning builder’s bid and block header (they don’t see the body yet)

- The builder publishes their block and includes a proof they’ve included all transactions from the crList or that the block was full. Otherwise the block won’t be accepted by the fork-choice rule

- Attestors check the validity of the body that was published
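The acceptance rule in the fourth step could look something like the check below. Since the design is still open, everything here is an assumption: transactions are modeled as an id mapped to a gas amount, "the block was full" is interpreted as "no omitted crList transaction would still fit", and the function name is made up.

```python
def body_satisfies_crlist(body, crlist, gas_limit):
    """Return True if the body includes every crList transaction, or has
    no room left for any transaction it omitted.

    `body` and `crlist` map a transaction id to its gas usage (hypothetical model).
    """
    remaining = gas_limit - sum(body.values())
    omitted = {tx: gas for tx, gas in crlist.items() if tx not in body}
    return all(gas > remaining for gas in omitted.values())


crlist = {"tx1": 21_000, "tx2": 50_000}
gas_limit = 100_000

includes_all = body_satisfies_crlist({"tx1": 21_000, "tx2": 50_000}, crlist, gas_limit)
censoring = body_satisfies_crlist({"tx1": 21_000}, crlist, gas_limit)
genuinely_full = body_satisfies_crlist({"tx1": 21_000, "tx9": 60_000}, crlist, gas_limit)
```

The censoring body leaves 79,000 gas unused while skipping a 50,000 gas transaction, so it fails. The last body also skips `tx2`, but only 19,000 gas remains, so it passes under this reading of "full".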

There are still important questions to be ironed out here. For example, the dominant economic strategy here is for a proposer to submit an empty list. This would allow even censoring builders to win the auction so long as they bid the highest. There are ideas to address this and other questions, but I’m stressing that the design here isn’t set in stone.

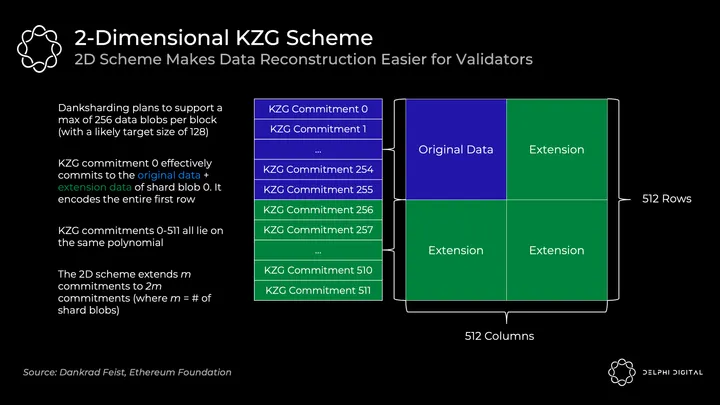

2-Dimensional KZG Scheme

We saw how KZG commitments allow us to commit to data and prove it was extended properly. However, I simplified what Ethereum will actually do. It won’t commit to all of the data in a single KZG commitment – a single block will use many KZG commitments.

We already have a specialized builder, so why not just have them create one giant KZG commitment? The issue is that this would require a powerful supernode to reconstruct. We’re ok with a supernode requirement for initial building, but we need to avoid that assumption for reconstruction here. We need lower resource entities to be able to handle reconstruction, and splitting it up into many KZG commitments makes this feasible. Reconstruction may even be fairly common or the base case assumption in this design given the amount of data at hand.

To make reconstruction easier, each block will include m shard blobs encoded in m KZG commitments. Doing this naively would result in a ton of sampling though – you’d conduct DAS on every shard blob to know it’s all available (m*k samples where k is the number of samples per blob).

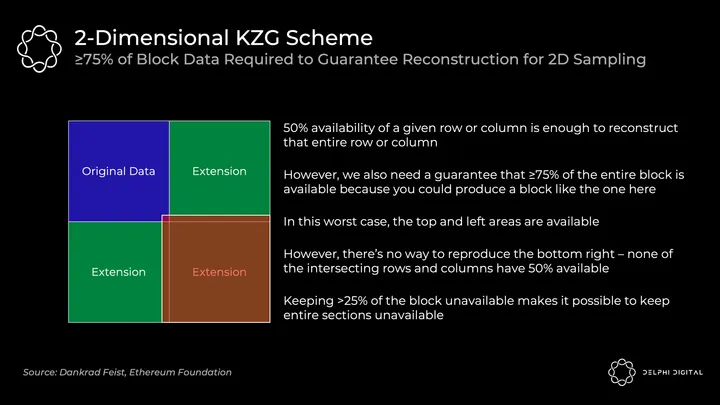

Instead, Ethereum will use a 2D KZG scheme. We use the Reed-Solomon code again to extend m commitments to 2m commitments:

We make it a 2D scheme by extending additional KZG commitments (256-511 here) that lie on the same polynomial as 0-255. Now we just conduct DAS on the table above to ensure availability of data across all shards.
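To make the 2D extension concrete, here's a toy version that works on the raw data only (no commitments). It assumes a small prime field and tiny dimensions, two blobs of four values each, purely for illustration. The helper names are hypothetical.

```python
# Toy 2D Reed-Solomon extension over a small prime field (illustrative only).
P = 65537  # hypothetical modulus


def lagrange_eval(points, x, p=P):
    """Evaluate at x the minimal-degree polynomial through `points`, mod p."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % p
                den = den * (xi - xj) % p
        total = (total + yi * num * pow(den, -1, p)) % p
    return total


def extend(values, p=P):
    """Treat values as f(0)..f(n-1) and append f(n)..f(2n-1)."""
    n = len(values)
    points = list(enumerate(values))
    return list(values) + [lagrange_eval(points, x, p) for x in range(n, 2 * n)]


def extend_2d(rows):
    """Extend every row horizontally, then every column vertically."""
    wide = [extend(row) for row in rows]
    cols = [extend([row[c] for row in wide]) for c in range(len(wide[0]))]
    return [[col[r] for col in cols] for r in range(len(cols[0]))]


blobs = [[3, 1, 4, 1], [5, 9, 2, 6]]   # m = 2 blobs, 4 values each
grid = extend_2d(blobs)                # 2m = 4 rows, 8 columns

# Every row, including the newly added ones, is itself a valid codeword.
rows_consistent = all(extend(row[:4]) == row for row in grid)
```

The useful property is that the extra rows aren't special: each one can be rebuilt from any half of its own entries, just like the original blobs, which is what lets sampling treat the whole table uniformly.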

The 2D sampling requirement for ≥75% of the data to be available (as opposed to 50% earlier) means we need a somewhat higher fixed number of samples. Earlier I mentioned 30 samples for DAS in the simple 1D scheme, but this will require 75 samples to ensure the same probabilistic odds of reconstructing an available block.
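A quick sanity check on why the sample count rises. This assumes the same simplified model of independent samples, where a block that can't be reconstructed has less than 75% of its extended data available. The function name is hypothetical.

```python
def samples_needed(available_fraction, target):
    """Smallest sample count n with available_fraction ** n <= target."""
    n = 0
    while available_fraction ** n > target:
        n += 1
    return n


target = 2 ** -30                       # the 1D guarantee from 30 samples
one_d = samples_needed(0.5, target)     # 30
two_d = samples_needed(0.75, target)    # 73
```

Under this model 73 samples already match the 1D guarantee, so 75 leaves a small margin.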

Sharding 1.0 (which had a 1D KZG commitment scheme) only required 30 samples, but you would need to sample 64 shards if you wanted to check full DA for 1920 total samples. Each sample is 512 B, so this would require:

(512 B x 64 shards x 30 samples) / 16 seconds = 60 KB/s bandwidth

In reality, validators were just shuffled around without ever individually checking all shards.

The combined block with the 2D KZG commitment scheme now makes it trivial to check full DA. It only requires 75 samples of the single unified block:

(512 B x 1 block x 75 samples) / 16 seconds = 2.5 KB/s bandwidth
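Both bandwidth figures come from the same formula, which is easy to reproduce. The function name is hypothetical. The inputs are the ones quoted above.

```python
def das_bandwidth(sample_bytes, units, samples_per_unit, slot_seconds):
    """Bytes per second needed to sample `units` blocks or shards each slot."""
    return sample_bytes * units * samples_per_unit / slot_seconds


sharding_1_0 = das_bandwidth(512, 64, 30, 16)   # 61440.0 bytes/s
danksharding = das_bandwidth(512, 1, 75, 16)    # 2400.0 bytes/s
```

That is 61,440 B/s (60 KiB/s) for sharding 1.0 versus 2,400 B/s for the unified block, which the text rounds to roughly 2.5 KB/s: about a 25x reduction.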

Danksharding

PBS was initially designed to blunt the centralizing forces of MEV on the validator set. However, Dankrad recently took advantage of that design realizing that it unlocked a far better sharding construct – DS.

DS leverages the specialized builder to create a tighter integration of the Beacon Chain execution block and shards. We now have one builder creating the entire block together, one proposer, and one committee voting on it at a time. DS would be infeasible without PBS – regular validators couldn’t handle the massive bandwidth of a block full of rollups’ data blobs:

Sharding 1.0 included 64 separate committees and proposers, so each shard could have been unavailable individually. The tighter integration here allows us to ensure DA in aggregate in one shot. The data is still “sharded” under the hood, but from a practical perspective danksharding starts to feel more like big blocks which is great.

Danksharding – Honest Majority Validation

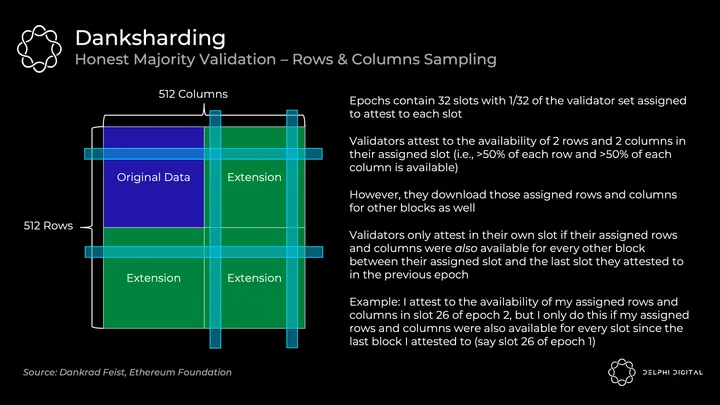

Validators attest to data being available as follows:

This relies on the honest majority of validators – as an individual validator, my columns and rows being available is not enough to give me statistical confidence that the full block is available. This relies on the honest majority to say it is. Decentralized validation matters.

Note this is different from the 75 random samples we discussed earlier. Private random sampling is how low-resource individuals will be able to easily check availability (e.g., I can run a DAS light node and know the block is available). However, validators will continue to use the rows and columns approach for checking availability and leading block reconstruction.

Danksharding – Reconstruction

As long as 50% of an individual row or column is available, then it can easily be fully reconstructed by the sampling validator. As they reconstruct any chunks missing from their rows/columns, they redistribute those pieces to the orthogonal lines. This helps other validators reconstruct any missing chunks from their intersecting rows and columns as needed.

The security assumptions for reconstruction of an available block here are:

- Enough nodes to perform sample requests such that they collectively have enough data to reconstruct the block

- Synchrony assumption between nodes who are broadcasting their individual pieces of the block

So, how many nodes is enough? Rough estimates put it at ~64,000 individual instances (there are over ~380,000 today). This is also a very pessimistic calculation, which assumes no crossover in nodes being run by the same validator (far from the case, as each validator instance is capped at 32 ETH, so large stakers run many of them). If you’re sampling more than 2 rows and columns, you increase the odds that you can collectively retrieve them because of the crossover. This starts to scale quadratically – if operators are running, say, 10 or 100 validators each, the 64,000 could be reduced by orders of magnitude.

If the number of validators online starts to get uncomfortably low, DS can be set to reduce the shard data blob count automatically. Thus the security assumption would be brought down to a safe level.

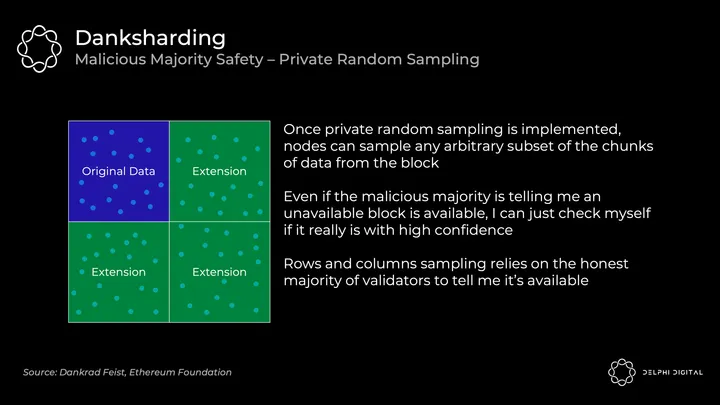

Danksharding – Malicious Majority Safety With Private Random Sampling

We saw that DS validation relies on the honest majority to attest to blocks. I as an individual can’t prove to myself that a block is available by just downloading a couple rows and columns. However, private random sampling can give me this guarantee without trusting anyone. This is where nodes check those 75 random samples as discussed earlier.

DS initially won’t include private random sampling because it’s simply a very difficult problem to solve on the networking side (PSA: maybe they could actually use your help here!).

Note the “private” is important because if the attacker has de-anonymized you, they’re able to trick a small number of sampling nodes. They can just return you the exact chunks you requested and withhold the rest. So you wouldn’t know from your own sampling alone that all data was made available.

Danksharding – Key Takeaways

In addition to being a sweet name, DS is also incredibly exciting. It finally achieves Ethereum’s vision of a unified settlement and DA layer. This tight coupling of the Beacon block and shards essentially pretends not to be sharded.

In fact, let’s define why it’s even considered “sharded” at all. The only remnant of “sharding” is simply the fact that validators aren’t responsible for downloading all of the data. That’s it.

So you’re not crazy if you were questioning by now whether this is really still sharding. This distinction is why PDS (we’ll cover this shortly) is not considered “sharded” (even though it has “sharding” in the name, yes I know it’s confusing). PDS requires each validator to fully download all shard blobs in order to attest to their availability. DS then introduces sampling, so individual validators only download pieces of it.

The minimal sharding thankfully means a much simpler design than sharding 1.0 (so quicker delivery right? right?). Simplifications include:

- Likely hundreds of lines less code in the DS specification vs. the sharding 1.0 specification (thousands of lines less in the clients)

- No more shard committee infrastructure, committees just need to vote on the main chain

- No tracking of separate shard blob confirmations, now they all get confirmed in the main chain or they don’t

One great result of this – a merged fee market for data. Sharding 1.0 with distinct blocks made by separate proposers would have fragmented this.

The removal of shard committees also strengthens bribery resistance. DS validators vote once per epoch on the entire block, so data gets confirmed by 1/32 of the entire validator set immediately (32 slots per epoch). Sharding 1.0 validators also voted once per epoch, but each shard had its own committee being shuffled around. So each shard was only being confirmed by 1/2048 of the validator set (1/32 split across 64 shards).

The combined block with the 2D KZG commitment scheme also makes DAS far more efficient as discussed. Sharding 1.0 would require 60 KB/s bandwidth to check full DA of all shards. DS only requires 2.5 KB/s.

Another exciting possibility kept alive with DS – synchronous calls between ZK-rollups and L1 Ethereum execution. Transactions from a shard blob can immediately confirm and write to the L1 because everything is produced in the same Beacon Chain block. Sharding 1.0 would’ve removed this possibility due to separate shard confirmations. This allows for an exciting design space which could be incredibly valuable for things like shared liquidity (e.g., dAMM).

Danksharding – Limits to Blockchain Scalability

Modular base layers scale elegantly – more decentralization begets more scaling. This is fundamentally different from what we see today. Adding more nodes to a DA layer allows you to safely increase data throughput (i.e., more room for rollups to live on top).

There are still limits to blockchain scalability, but we can push orders of magnitude higher than anything we see today. Secure and scalable base layers allow execution to proliferate atop them. Improvements in data storage and bandwidth will also allow for higher data throughput over time.

Pushing beyond the DA throughput contemplated here is certainly in the cards, but it’s hard to say where that max will end up. There isn’t a clear red line, but rather an area where some assumptions will start to feel uncomfortable:

- Data storage – This ties into DA vs. data retrievability. The consensus layer’s role isn’t to guarantee data retrievability indefinitely. Its role is to make it available for enough time such that anyone who cares to download it can, satisfying our security assumptions. Then it gets dumped into storage wherever – this is comfortable as history is a 1 of N trust assumption, and we’re not actually talking about that much data in the grand scheme of things. This could get into uncomfortable territory though years down the line as throughput increases by orders of magnitude.

- Validators – DAS requires enough nodes to collectively reconstruct the block. Otherwise, an attacker could wait around and only respond to the queries they receive. If the samples served in response aren’t enough to reconstruct the block, the attacker could withhold the rest and we’re out of luck. To safely increase throughput, we need to add more DAS nodes or increase their data bandwidth requirements. This isn’t a concern for the throughput discussed here. Again though, this could get uncomfortable if throughput increases by further orders of magnitude from this design.

Notice the builder isn’t the bottleneck. You’ll need to quickly generate KZG proofs for 32 MB of data, so expect a GPU or pretty beefy CPU plus at least 2.5 GBit/s bandwidth. This is a specialized role anyway for whom this is a negligible cost of doing business.

Proto-danksharding (EIP-4844)

DS is awesome, but we’ll have to be patient. PDS aims to tide us over – it implements necessary forward-compatible steps toward DS on an expedited timeline (targeting the Shanghai hard fork) to provide orders of magnitude scaling in the interim. However, it doesn’t actually implement data sharding yet (i.e., validators need to individually download all of the data).

Rollups today use L1 “calldata” for storage which persists on-chain forever. However, rollups only need DA for some reasonable period of time such that anyone interested has plenty of time to download it.

EIP-4844 introduces the new blob-carrying transaction format that rollups will use for data storage going forward. Blobs carry a large amount of data (~125 KB), and they can be much cheaper than similar amounts of calldata. Data blobs are then pruned from nodes after a month which blunts storage requirements. This is plenty of time to satisfy our DA security assumptions.

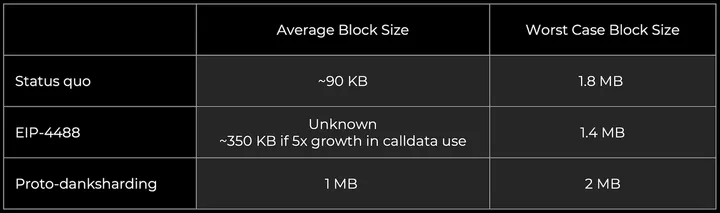

For context on scale, current Ethereum blocks generally average ~90 KB (calldata is ~10 KB of this). PDS unlocks far more DA bandwidth (target ~1 MB and max ~2 MB) for blobs because they get pruned after a month. They aren’t a permanent drag on nodes.

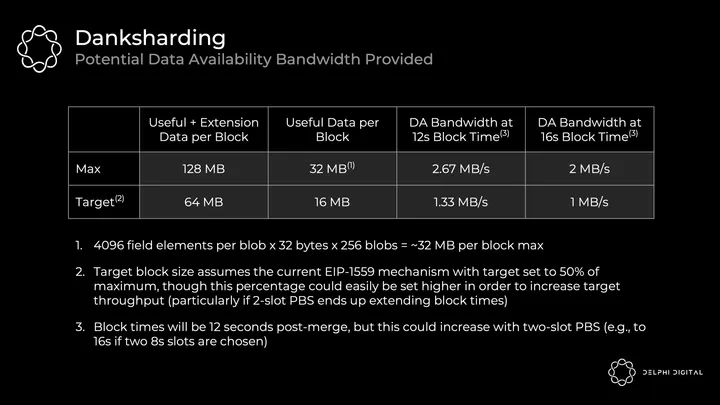

A blob is a vector of 4096 field-elements of 32 bytes each. PDS allows for a max of 16 per block, and DS will bump that up to 256.

PDS DA bandwidth = 4096 x 32 x 16 = 2 MiB per block, targeted at 1 MiB

DS DA bandwidth = 4096 x 32 x 256 = 32 MiB per block, targeted at 16 MiB
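The same sizing arithmetic in code, using the numbers quoted above (the constant and function names are only illustrative):

```python
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
MIB = 1024 * 1024


def max_da_bytes(blobs_per_block):
    """Maximum blob data per block for a given blob count."""
    return FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT * blobs_per_block


blob_bytes = max_da_bytes(1)        # 131072 bytes = 128 KiB per blob
pds_max = max_da_bytes(16) / MIB    # 2.0 MiB
ds_max = max_da_bytes(256) / MIB    # 32.0 MiB
```

Going from 16 to 256 blobs is a 16x jump in maximum data per block, on top of the jump PDS itself makes over today's calldata.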

Orders of magnitude scaling with each step. PDS still requires consensus nodes to fully download the data, so it’s more conservative. DS distributes the load of storing and propagating data between validators.

Here are some of the goodies introduced by EIP-4844 on the road to DS:

- Data blob-carrying transaction format

- KZG commitments to the blobs

- All of the execution-layer logic required for DS

- All of the execution / consensus cross-verification logic required for DS

- Layer separation between BeaconBlock verification and DAS blobs

- Most of the BeaconBlock logic required for DS

- A self-adjusting independent gas price for blobs (multidimensional EIP-1559 with an exponential pricing rule)

And then DS will further add:

- PBS

- DAS

- 2D KZG scheme

- Proof-of-custody or similar in-protocol requirement for each validator to verify availability of a particular part of the sharded data in each block (probably for about a month)

Note these data blobs are introduced as a new transaction type on the execution chain, but they don’t burden the execution side with additional requirements. The EVM only views the commitment attached to the blobs. The execution layer changes being made with EIP-4844 are also forward compatible with DS, and no more alterations will be needed on this side. The upgrade from PDS to DS then only requires consensus layer changes.

Data blobs are fully downloaded by consensus clients in PDS. The blobs are now referenced, but not fully encoded, in the Beacon block body. Instead of embedding the full contents in the body, the contents of the blobs are propagated separately, as a “sidecar”. There is one blob sidecar per block that’s fully downloaded in PDS, then with DS validators will conduct DAS on it.

We discussed earlier how to commit to the blobs using KZG polynomial commitments. However, instead of using the KZG commitment directly, EIP-4844 uses its versioned hash. This is a single 0x01 byte (representing the version) followed by the last 31 bytes of the SHA256 hash of the KZG commitment.

We do this for easier EVM compatibility & forward compatibility:

- EVM compatibility – KZG commitments are 48 bytes whereas the EVM works more naturally with 32 byte values

- Forward compatibility – if we ever switch from KZG to something else (STARKs for quantum-resistance), the commitments can continue to be 32 bytes
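The versioned hash construction described above is simple enough to sketch in a few lines of Python. This is an illustration rather than client code: the function name is my own, and the 48-byte input below is placeholder data, not a real KZG commitment.

```python
import hashlib

VERSION_BYTE = b"\x01"  # the version prefix described above


def kzg_to_versioned_hash(commitment: bytes) -> bytes:
    """Return the 32-byte versioned hash of a 48-byte KZG commitment."""
    if len(commitment) != 48:
        raise ValueError("KZG commitments are 48 bytes")
    # Drop the first byte of the SHA256 digest and put the version byte there.
    return VERSION_BYTE + hashlib.sha256(commitment).digest()[1:]


dummy_commitment = bytes(48)  # placeholder bytes, NOT a valid commitment
versioned = kzg_to_versioned_hash(dummy_commitment)
print(len(versioned), versioned.hex())
```

The result always fits in 32 bytes, and a different commitment scheme could later reuse the same shape with a different version byte.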

Multidimensional EIP-1559

PDS finally creates a tailored data layer – data blobs will get their own distinct fee market with separate floating gas prices and limits. So even if some NFT project is selling a bunch of monkey land on L1, your rollup data costs won’t go up (though proof settlement costs would). This acknowledges that the dominant cost for any rollup today is posting their data to the L1 (not proofs).

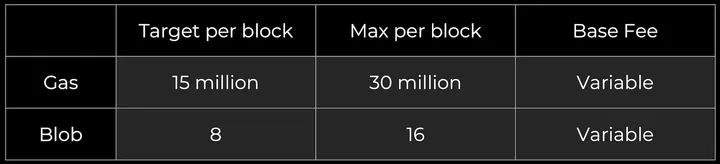

The gas fee market is unchanged, and data blobs are added as a new market.

The blob fee is charged in gas, but it’s a variable amount adjusting based on its own EIP-1559 mechanism. The long run average number of blobs per block should equal the target.

You effectively have two auctions running in parallel – one for computation and one for DA. This is a giant leap in efficient resource pricing.

There are some interesting designs being thrown around here. For example, it might make sense to change both the current gas and blob pricing mechanisms from the linear EIP-1559 to a new exponential EIP-1559 mechanism. The current implementation doesn’t average out to our target block sizes in practice. The base fee stabilizes imperfectly today, resulting in the observed average gas used per block exceeding the target by ~3%.
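To make the exponential idea concrete, here is a rough Python sketch of one way such a rule can work: track the cumulative excess of blobs over the target, and price blobs as an exponential function of that excess. The floor price and the sensitivity parameter are made-up values for illustration, and floating point is used for readability; this is not the exact rule or parameterization from any spec.

```python
import math

TARGET_BLOBS = 8          # half of the 16-blob maximum quoted above
MIN_BLOB_PRICE = 1.0      # hypothetical floor price, arbitrary units
UPDATE_FRACTION = 64.0    # hypothetical sensitivity parameter


def next_excess(excess: int, blobs_used: int) -> int:
    """Running total of blobs used above target; never drops below zero."""
    return max(0, excess + blobs_used - TARGET_BLOBS)


def blob_price(excess: int) -> float:
    """Price grows exponentially with the accumulated excess."""
    return MIN_BLOB_PRICE * math.exp(excess / UPDATE_FRACTION)


excess = 0
for _ in range(10):                 # ten consecutive full blocks (16 blobs each)
    excess = next_excess(excess, 16)
price_after_burst = blob_price(excess)
print(excess, round(price_after_burst, 2))
```

Because the price depends only on the accumulated excess, it holds steady only when usage matches the target, which is what pushes the long-run average toward it.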

Part II – History & State Management

Quick recap on some basics here:

- History – Everything that’s ever happened on-chain. You can just stick it on a hard drive as it doesn’t require quick access. 1 of N honesty assumption in the long term.

- State – Snapshot of all the current account balances, smart contracts, etc. Full nodes (currently) all need this on hand to validate transactions. It’s too big for RAM, and a hard drive is too slow – it goes in your SSD. High throughput blockchains balloon their state, growing far beyond what us normies can keep on our laptops. If everyday users can’t hold the state, they can’t fully validate, so goodbye decentralization.

TLDR – these things get really big, so if you make nodes hold onto them it gets hard to run a node. If it’s too hard to run a node, us regular folk won’t do it. That’s bad, so we need to make sure that doesn’t happen.

Calldata Gas Cost Reduction With Total Calldata Limit (EIP-4488)

PDS is a great stepping stone toward DS which checks off many of the eventual requirements. Implementing PDS within a reasonable timespan can then pull forward the timeline on DS.

An easier to implement band-aid would be EIP-4488. It’s not quite so elegant, but it addresses the fee emergency nonetheless. Unfortunately it doesn’t implement steps along the way to DS, so all of the inevitable changes will still be required later. If it starts to feel like PDS is going to be a bit slower than we’d like, it could make sense to quickly jam through EIP-4488 (it’s just a couple lines of code changes) then get to PDS say another six months later. Timing is still in motion here.

EIP-4488 has two major components:

- Reduce calldata cost from 16 gas per byte to 3 gas per byte

- Add a limit of 1 MB calldata per block plus an extra 300 bytes per transaction (theoretical max of ~1.4 MB total)

The limit needs to be added to prevent the worst case scenario – at 3 gas per byte, a block full of calldata would reach up to ~10 MB (30 million gas / 3 gas per calldata byte), which is far beyond what Ethereum can handle. EIP-4488 increases Ethereum’s average data capacity, but its burst data capacity would actually decrease slightly due to this calldata limit (today’s burst capacity is 30 million gas / 16 gas per calldata byte = 1.875 MB).
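The burst and limit figures can be reproduced with some quick arithmetic. In this sketch the constant names are illustrative, and the 21,000 gas minimum per transaction is an assumption I’m adding to bound how many transactions fit in one block.

```python
BLOCK_GAS_LIMIT = 30_000_000
OLD_CALLDATA_GAS_PER_BYTE = 16
BASE_MAX_CALLDATA_PER_BLOCK = 1_048_576   # the 1 MB base limit
CALLDATA_PER_TX_STIPEND = 300             # extra bytes allowed per transaction
MIN_TX_GAS = 21_000                       # assumption: cheapest possible transaction


def calldata_limit(num_txs: int) -> int:
    """Total calldata bytes allowed in a block containing num_txs transactions."""
    return BASE_MAX_CALLDATA_PER_BLOCK + CALLDATA_PER_TX_STIPEND * num_txs


burst_today = BLOCK_GAS_LIMIT // OLD_CALLDATA_GAS_PER_BYTE   # bytes at 16 gas/byte
max_txs = BLOCK_GAS_LIMIT // MIN_TX_GAS                      # most txs that can fit
theoretical_max = calldata_limit(max_txs)

print(burst_today, max_txs, theoretical_max)
```

The last number works out to roughly 1.4 MB, which is where the theoretical max above comes from.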

EIP-4488’s sustained load is much higher than PDS because this is still calldata vs. data blobs which can be pruned after a month. History growth would accelerate meaningfully with EIP-4488, making that a bottleneck to run a node. Even if EIP-4444 is implemented in tandem with EIP-4488, this only prunes execution payload history after a year. The lower sustained load of PDS is clearly preferable.

Bounding Historical Data in Execution Clients (EIP-4444)

EIP-4444 allows clients the option to locally prune historical data (headers, bodies, and receipts) older than one year. It mandates that clients stop serving this pruned historical data on the p2p layer. Pruning history allows clients to reduce users’ disk storage requirement (currently hundreds of GBs and growing).

This was already important, but it would be essentially mandatory if EIP-4488 is implemented (as it significantly grows history). Hopefully this gets done in the relatively near term regardless. Some form of history expiry will eventually be needed, so this is a great time to handle it.

History is needed for a full sync of the chain, but it isn’t needed for validating new blocks (this just requires state). So once a client has synced to the tip of the chain, historical data is only retrieved when requested explicitly over the JSON-RPC or when a peer attempts to sync the chain. With EIP-4444 implemented, we’d need to find alternative solutions for these.

Clients will be unable to “full sync” using devp2p as they do today – they will instead “checkpoint sync” from a weak subjectivity checkpoint that they’ll treat as the genesis block.

Note that weak subjectivity won’t be an added assumption – it’s inherent in the shift to PoS anyway. This necessitates that valid weak subjectivity checkpoints be used to sync due to the possibility of long-range attacks. The assumption here is that clients will not sync from an invalid or old weak subjectivity checkpoint. This checkpoint must be within the period that we start pruning historical data (i.e., within one year here) otherwise the p2p layer would be unable to provide the required data.

As more clients adopt lightweight sync strategies, this will also reduce bandwidth usage on the network.

Recovering Historical Data

It sounds nice that EIP-4444 prunes historical data after a year, and PDS prunes blobs even more quickly (after about a month). We definitely need these because we can’t require nodes to store all this and remain decentralized:

- EIP-4488 – long-run likely to include ~1 MB per slot adds ~2.5 TB storage per year

- PDS – at target ~1 MB per slot adds ~2.5 TB storage per year

- DS – at target ~16 MB per slot adds ~40 TB storage per year
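These yearly totals follow directly from the 12 second slot time. A small Python check, using binary units (MiB and TiB) and a 365-day year as simplifying assumptions:

```python
SECONDS_PER_SLOT = 12
SECONDS_PER_YEAR = 365 * 24 * 60 * 60
MIB = 1024 ** 2
TIB = 1024 ** 4


def tib_per_year(mib_per_slot: float) -> float:
    """Storage added per year if every slot carries mib_per_slot of data."""
    slots_per_year = SECONDS_PER_YEAR // SECONDS_PER_SLOT
    return mib_per_slot * MIB * slots_per_year / TIB


for label, mib in [("EIP-4488 / PDS", 1), ("DS", 16)]:
    print(f"{label}: ~{tib_per_year(mib):.1f} TiB per year")
```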

But where does this data go? Don’t we still need it? Yes, but note that losing historical data is not a risk to the protocol – only to individual applications. It shouldn’t then be the job of the Ethereum core protocol to permanently maintain all of this data that it comes to consensus on.

So, who will store it? Here are some potential contributors:

- Individual and institutional volunteers

- Block explorers (e.g., etherscan.io), API providers, and other data services

- Third-party indexing protocols like TheGraph can create incentivized marketplaces where clients pay servers for historical data along with Merkle proofs

- Clients in the Portal Network (currently under development) could store random portions of chain history, and the Portal Network would automatically direct requests for data to the nodes that have it

- BitTorrent, e.g., auto-generating and distributing a 7 GB file containing the blob data from the blocks in each day

- App-specific protocols (e.g., rollups) can require their nodes to store the portion of history relevant to their app

The long-term data storage problem is a relatively easy problem because it’s a 1 of N trust assumption as we discussed before. We’re many years away from this being the ultimate limitation on blockchain scalability.

Weak Statelessness

Ok so we’ve got a good handle on managing history, but what about state? That’s actually the primary bottleneck to cranking up Ethereum’s TPS currently.

Full nodes take the pre-state root, execute all the transactions in a block, and check if the post-state root matches what they’ve been provided in the block. To know if those transactions were valid, they currently need the state on hand – validation is stateful.

Enter statelessness – not needing the state on hand to do some role. Ethereum is shooting for “weak statelessness” meaning state isn’t required to validate a block, but it is required to build the block. Verification becomes a pure function – give me a block in complete isolation and I can tell you if it’s valid or not.

It’s acceptable that builders still require the state due to PBS – they’ll be more centralized high-resource entities anyway. Focus on decentralizing validators. Weak statelessness gives the builders a bit more work, and validators far less work. Great tradeoff.

You achieve this magical stateless execution with witnesses. These are proofs of correct state access that builders will start including in every block. Validating a block doesn’t actually require the whole state – you only need the state being read or affected by the transactions in that block. Builders will start including the pieces of state affected by transactions in a given block, and they’ll prove they correctly accessed that state with witnesses.

Let’s play out an example. Alice wants to send 1 ETH to Bob. To verify a block with this transaction, I need to know:

- Before the transaction – Alice had at least 1 ETH

- Alice’s public key – so I can tell the signature is correct

- Alice’s nonce – so I can tell the transaction was sent in the correct order

- After executing the transaction – Bob has 1 ETH more, Alice has 1 ETH less

In a weak statelessness world, the builder adds the above witness data to the block and the proof of its accuracy. The validator receives the block, executes it, and decides if it’s valid. That’s it!
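Here is a toy Python sketch of the Alice and Bob example, showing validation as a pure function of the block’s own data. It is heavily simplified: the witness is just a dictionary of the touched accounts, and the parts that make this trustless in practice (checking the witness proof against the state root, signature verification, gas) are deliberately left out. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    balance: int  # in wei
    nonce: int


@dataclass(frozen=True)
class Transfer:
    sender: str
    recipient: str
    amount: int
    nonce: int


def apply_transfer(witness: dict[str, Account], tx: Transfer) -> dict[str, Account]:
    """Execute one transfer using only the accounts supplied in the witness."""
    if tx.sender not in witness or tx.recipient not in witness:
        raise ValueError("witness does not cover every touched account")
    sender = witness[tx.sender]
    if tx.nonce != sender.nonce:
        raise ValueError("wrong nonce")
    if tx.amount > sender.balance:
        raise ValueError("insufficient balance")
    post = dict(witness)  # copy, so the pre-state is left untouched
    post[tx.sender] = Account(sender.balance - tx.amount, sender.nonce + 1)
    recipient = post[tx.recipient]
    post[tx.recipient] = Account(recipient.balance + tx.amount, recipient.nonce)
    return post


ETH = 10 ** 18
witness = {"alice": Account(1 * ETH, 0), "bob": Account(0, 0)}
post = apply_transfer(witness, Transfer("alice", "bob", 1 * ETH, 0))
print(post)
```

The validator never touches a state database here. Everything it needs arrives with the block, and it would then compare the resulting post-state against what the block claims.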

Here are the implications from the validator perspective:

- Huge SSD requirement for holding state disappears – this is the key bottleneck to scaling today.

- Bandwidth requirements will increase a bit as you’re now also downloading the witness data and proof. This is a bottleneck with Merkle-Patricia trees, but it would be mild and not the bottleneck in Verkle tries.

- You still execute the transaction to fully validate. Statelessness acknowledges the fact that this isn’t currently the bottleneck to scaling Ethereum.

Weak statelessness also allows Ethereum to loosen self-imposed constraints on its execution throughput with state bloat no longer a pressing concern. Bumping up gas limits ~3x could be reasonable.

Most user execution will be taking place on L2s at this point anyway, but higher L1 throughput is still beneficial even to them. Rollups rely on Ethereum for DA (posted to shards) and settlement (which requires L1 execution). As Ethereum scales its DA layer, the amortized cost of posting proofs could become a larger share of rollup costs (especially for ZK-rollups).

Verkle Tries

We glossed over how those witnesses actually work. Ethereum currently uses a Merkle-Patricia tree for state, but the Merkle proofs required would be far too large for these witnesses to be feasible.

Ethereum will pivot to Verkle tries for state storage. Verkle proofs are far more efficient, so they can serve as viable witnesses to enable weak statelessness.

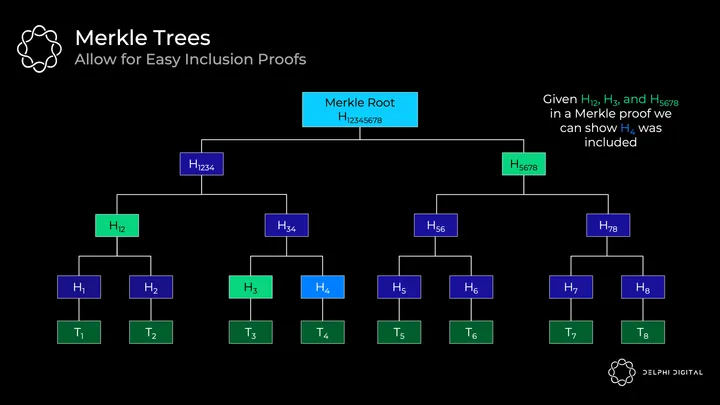

First let’s recap what a Merkle tree looks like. Every transaction is hashed to start – these hashes at the bottom are called “leaves.” All of the hashes are called “nodes”, and they are hashes of the two “children” nodes below them. The resulting final hash is the “Merkle root”.

This is a helpful data structure for proving inclusion of a transaction without needing to download the whole tree. For example, if you wanted to verify that transaction H4 is included, you just need H12, H3, and H5678 in a Merkle proof. We have H12345678 from the block header. So a light client can ask a full node for those hashes then hash them together per the route in the tree. If the result is H12345678, then we’ve successfully proved that H4 is in the tree.
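The H4 example can be played out in code. This is a minimal sketch of a binary Merkle tree over eight made-up transactions using SHA256. The helper names are my own, and it assumes the number of leaves is a power of two.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: list[bytes]) -> list[list[bytes]]:
    """Every level of the tree, from the leaf hashes up to the root."""
    levels = [[h(leaf) for leaf in leaves]]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([h(prev[i] + prev[i + 1]) for i in range(0, len(prev), 2)])
    return levels


def make_proof(levels: list[list[bytes]], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes along the route to the root, as (hash, sibling_is_left)."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1                      # neighbor within the same pair
        proof.append((level[sibling], sibling < index))
        index //= 2                              # move up to the parent
    return proof


def verify(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root


txs = [f"tx{i}".encode() for i in range(1, 9)]   # eight placeholder transactions
levels = build_levels(txs)
root = levels[-1][0]                             # plays the role of H12345678
proof = make_proof(levels, 3)                    # transaction 4 sits at index 3
print(len(proof), verify(txs[3], proof, root))
```

The proof holds exactly three hashes (H3, H12, and H5678), even though the tree has eight leaves.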

The deeper the tree though, the longer the route to the bottom, and thus more items you need for the proof. So shallow and wide trees would seem good for making efficient proofs.

The problem is that if you wanted to make a Merkle tree wider by adding more children under each node, it would be incredibly inefficient. You need to hash together all siblings to make your way up the tree, so then you’d need to receive more sibling hashes for the Merkle proof. This would make the proof sizes massive.
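A back-of-the-envelope count shows how badly this scales. The sketch below counts the sibling hashes in a Merkle proof for different tree widths; the 2^32 leaf count is an arbitrary example size, not a figure from Ethereum.

```python
def tree_depth(num_leaves: int, width: int) -> int:
    """Number of levels below the root needed to hold num_leaves."""
    depth, capacity = 0, 1
    while capacity < num_leaves:
        capacity *= width
        depth += 1
    return depth


def merkle_proof_hashes(num_leaves: int, width: int) -> int:
    """A Merkle proof needs (width - 1) sibling hashes at every level."""
    return (width - 1) * tree_depth(num_leaves, width)


leaves = 2 ** 32                             # arbitrary example size
binary = merkle_proof_hashes(leaves, 2)      # deep, narrow tree
wide = merkle_proof_hashes(leaves, 256)      # shallow, wide tree
print(binary, wide)
```

Going from width 2 to width 256 cuts the depth from 32 levels to 4, yet the proof grows from 32 hashes to over a thousand.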

This is where efficient vector commitments come in. Note the hashes used in Merkle trees are actually vector commitments – they’re just bad ones that only commit to two elements efficiently. So we want vector commitments where we don’t need to receive all the siblings to verify it. Once we have that, we can make the trees wider and decrease their depth. This is how we get efficient proof sizes – decreasing the amount of information that needs to be provided.

A Verkle trie is similar to a Merkle tree, but it commits to its children using an efficient vector commitment (hence the name “Verkle”) instead of a simple hash. So the basic idea is that you can have many children for each node, but I don’t need all the children to verify the proof. It’s a constant size proof regardless of the width.

We actually covered a great example of one of these possibilities before – KZG commitments can also be used as vector commitments. In fact, that’s what Ethereum devs initially planned to use here. They’ve since pivoted to Pedersen commitments to fulfill a similar role. These will be based on an elliptic curve (in this case Bandersnatch), and they’ll commit to 256 values each (a lot better than two!).

So why not have a tree of depth one that’s as wide as possible then? This would be great for the verifier who now has a super compact proof. But there’s a practical tradeoff that the prover needs to be able to compute this proof, and the wider it is the harder that gets. So these Verkle tries will lie in between the extremes at 256 values wide.

State Expiry

Weak statelessness removes state bloat constraints from validators, but state doesn’t magically disappear. Transactions cost a finite amount, but they inflict a permanent tax on the network by increasing the state. Something needs to be done to address the underlying issue.

This is where state expiry comes in. State that’s been inactive for a long period of time (say a year or two) gets chopped from what even block builders need to carry. Active users won’t notice a thing, and deadweight state that’s no longer needed can be discarded.

If you ever need to resurrect expired state, you’ll simply need to display a proof and reactivate it. This falls back to the 1 of N storage assumptions here. As long as someone still has the full history (block explorers, etc.), you can get what you need from them.

Weak statelessness will blunt the immediate need for state expiry on the base layer, but it’s good to have in the long run especially as L1 throughput increases. It’ll be an even more useful tool for high throughput rollups. L2 state will grow at orders of magnitude higher rates, to the point where it will even be a drag on high-performance builders.

Part III – It’s all MEV

PBS was necessary to safely implement DS, but recall that it was actually designed at first to combat the centralizing forces of MEV. You’ll notice a recurring trend in Ethereum research today – MEV is now front and center in cryptoeconomics.

Designing blockchains with MEV in mind is critical to preserving security and decentralization. The basic protocol level approach is:

- Mitigate harmful MEV as much as possible (e.g., single-slot finality, single secret leader election)

- Democratize the rest (e.g., MEV-Boost, PBS, MEV smoothing)

The remainder must be easily captured and spread amongst validators. Otherwise, it will centralize validator sets due to the inability to compete with sophisticated searchers. This is exacerbated by the fact that MEV will comprise a much higher share of validator rewards after the merge (staking issuance is far lower than the inflation given to miners). It can’t be ignored.

MEV Supply Chain Today

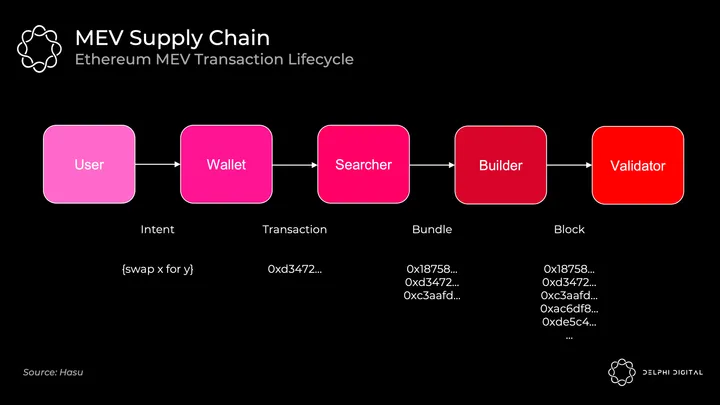

Today’s sequence of events looks like this:

Mining pools have played the builder role here. MEV searchers relay bundles of transactions (with their respective bids) to mining pools via Flashbots. The mining pool operator aggregates a full block and passes along the block header to individual miners. The miner attests to it with PoW giving it weight in the fork choice rule.

Flashbots arose to prevent vertical integration across the stack – this would open the door to censorship and other nasty externalities. When Flashbots began, mining pools were already beginning to strike exclusive deals with trading firms to extract MEV. Instead, Flashbots gave them an easy way to aggregate MEV bids and avoid vertical integration (by implementing MEV-geth).

After the merge, mining pools disappear. We want to open the door to at-home validators being reasonably able to operate. This requires finding someone to take on the specialized building role. Your at-home validator probably isn’t quite as good as the hedge fund with a payroll of quants at capturing MEV. Left unchecked, this would centralize the validator set if regular folk can’t compete. Structured properly, the protocol can redirect that MEV revenue toward the staking yields of everyday validators.

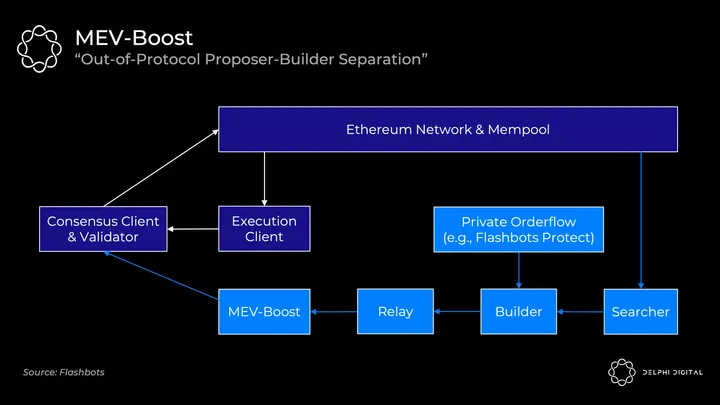

MEV-Boost

Unfortunately in-protocol PBS simply won’t be ready at the merge. Flashbots comes to the rescue again with a stepping stone solution – MEV-Boost.

Validators post-merge will default to receiving public mempool transactions directly into their execution clients. They can package these up, hand them to the consensus client, and broadcast them to the network. (If you need a refresher on how Ethereum’s consensus and execution clients work together, I cover this in Part IV).

But your mom and pop validator has no idea how to extract MEV as we discussed, so Flashbots is offering an alternative. MEV-Boost will plug into your consensus client, allowing you to outsource specialized block building. Importantly, you still retain the option to use your own execution client as a fallback.

MEV searchers will continue to play the role they do today. They’ll run specific strategies (stat arb, atomic arb, sandwiches, etc.) and bid for their bundles to be included. Builders then aggregate all the bundles they see as well as any private orderflow (e.g., from Flashbots Protect) into the optimal full block. The builder passes only the header to the validator via a relay connected to MEV-Boost. Flashbots intends to run the relayer and builder with plans to decentralize over time, but whitelisting additional builders will likely be slow.

MEV-Boost requires validators to trust relayers – the consensus client receives the header, signs it, and only then is the block body revealed. The relayer’s purpose is to attest to the proposer that the body is valid and exists, so that the validators don’t have to trust the builders directly.

When in-protocol PBS is ready, it then codifies what MEV-Boost offers in the interim. PBS provides the same separation of powers, allows for easier builder decentralization, and removes the need for proposers to trust anyone.

Committee-driven MEV Smoothing

PBS also opens the door to another cool idea – committee-driven MEV smoothing.

We saw the ability to extract MEV is a centralizing force on the validator set, but so is the distribution. The high variability in MEV rewards from one block to another incentivizes pooling many validators to smooth out your returns over time (as we see in mining pools today, although to a lesser degree here).

The default is to give the actual block proposer the full payment from the builders. MEV smoothing would instead split that payment across many validators. A committee of validators would check the proposed block and attest to whether or not that was indeed the block with the highest bid. If everything checks out, the block goes ahead and the reward is split amongst the committee and proposer.

This solves another concern as well – out-of-band bribes. Proposers could be incentivized to submit a suboptimal block and just take an out-of-band bribe directly to hide their payments from delegators for example. This attestation keeps proposers in check.

In-protocol PBS is a prerequisite for MEV smoothing to be implemented. You need to have an awareness of the builder market and explicit bids being submitted. There are several open research questions here, but it’s an exciting proposal again critical to ensuring decentralized validators.

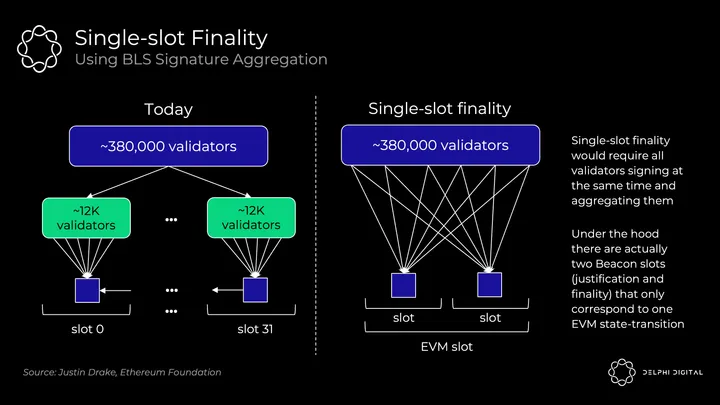

Single-slot Finality

Fast finality is great. Waiting ~15 minutes is suboptimal for UX or cross-chain communication. More importantly, it’s an MEV reorg issue.

Post-merge Ethereum will already offer far stronger confirmations than today – thousands of validators attest to each block vs. miners competing and potentially mining at the same block height without voting. This will make reorgs exceptionally unlikely. However, it’s still not true finality. If the last block had some juicy MEV, you might just tempt the validators to try to reorg the chain and steal it for themselves.

Single-slot finality removes this threat. Reverting a finalized block would require at least a third of all validators, and they’d immediately have their stake slashed (millions of ETH).
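To put a number on “millions of ETH”, here is a rough calculation. It assumes the ~380,000 validator count cited elsewhere in this article and exactly 32 ETH at stake per validator, both simplifications.

```python
VALIDATORS = 380_000        # approximate count cited in this article
ETH_PER_VALIDATOR = 32      # simplifying assumption: exactly 32 ETH each


def min_slashed_eth(validators: int) -> int:
    """ETH at stake for the smallest group making up at least a third."""
    attackers = -(-validators // 3)   # ceiling division
    return attackers * ETH_PER_VALIDATOR


print(min_slashed_eth(VALIDATORS))
```

That comes to a little over 4 million ETH on the line.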

I won’t get too in the weeds on the potential mechanics here. Single-slot finality is pretty far back in Ethereum’s roadmap, and it’s a very open design space.

In today’s consensus protocol (without single-slot finality), Ethereum only requires 1/32 of validators to attest to each slot (~12,000 of the ~380,000 currently active). Stretching this voting to the full validator set in a single slot requires more work: BLS signature aggregation would have to compress hundreds of thousands of votes into a single verification.

Vitalik breaks down some of the interesting solutions here.

Single Secret Leader Election

SSLE seeks to patch yet another MEV attack vector we’ll be faced with after the merge.

The Beacon Chain validator list and upcoming leader selection list are public, and it’s rather easy to deanonymize them and map their IP addresses. You can probably see the issue here.

More sophisticated validators can use tricks to better hide themselves, but small-time validators will be particularly susceptible to getting doxxed and subsequently DDoSed. This can be quite easily exploited for MEV.

Say you’re the proposer at block n, and I’m the proposer at block n+1. If I know your IP address, I can cheaply DDoS you so that you time out and fail to produce your block. I now get to capture both of our slots’ MEV and double my rewards. This is exacerbated by the elastic block sizing of EIP-1559 (max gas per block is double the target size), so I can jam what should’ve been two blocks’ transactions into my single block which is now twice as large.

TLDR is at-home validators could throw in the towel on validation because they’re getting rekt. SSLE makes it such that nobody but the proposer knows when their turn is up preventing this attack. This won’t be live at the merge, but hopefully it can be implemented not too long afterwards.

Part IV – The Merge: Under the Hood

Ok, to be clear I was kidding before. I actually think (hope) the merge happens relatively soon™.

Nobody will shut up about it, so I feel obligated to at least give it a brief shout out. This concludes your Ethereum crash-course.

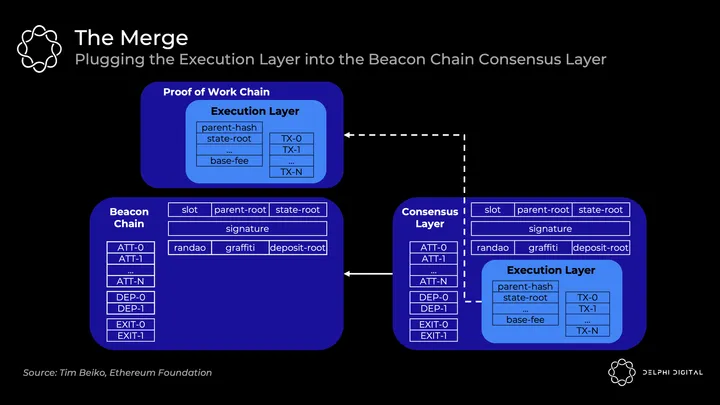

Clients After the Merge

Today you run one monolithic client (e.g., Go Ethereum, Nethermind, etc.) that handles everything. Specifically, full nodes do both:

- Execution – Execute every transaction in a block to ensure validity. Take the pre-state root, execute everything, and check that the resulting post-state root is correct

- Consensus – Verify you’re on the heaviest (highest PoW) chain with the most work done (i.e., Nakamoto consensus)

They’re inseparable because full nodes not only follow the heaviest chain, they follow the heaviest valid chain. That’s why they’re full nodes and not light nodes. Full nodes won’t accept invalid transactions even in the event of a 51% attack.

The Beacon Chain currently only runs consensus to give PoS a test run. No execution. Eventually a terminal total difficulty will be decided upon, at which point the current Ethereum execution blocks will merge into the Beacon Chain blocks forming one chain:

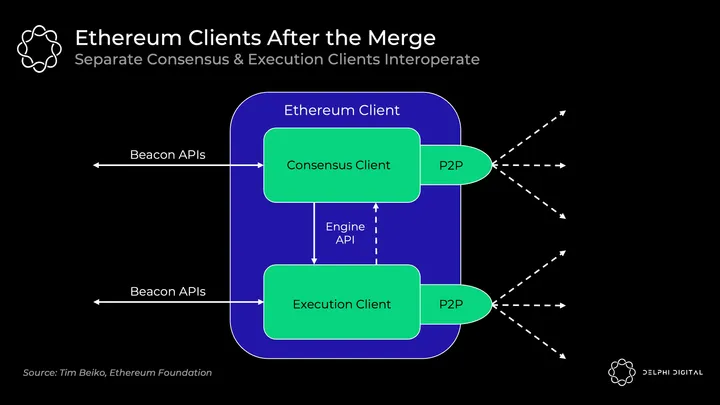

However, full nodes will run two separate clients under the hood that interoperate:

- Execution client (f.k.a. “Eth1 client”) – Current Eth 1.0 clients continue to handle execution. They process blocks, maintain mempools, and manage and sync state. The PoW stuff gets ripped out.

- Consensus client (f.k.a. “Eth2 client”) – Current Beacon Chain clients continue to handle PoS consensus. They track the chain’s head, gossip and attest to blocks, and receive validator rewards.

Consensus clients receive Beacon Chain blocks, execution clients run the transactions, then consensus clients will follow that chain if everything checks out. You’ll be able to mix and match the execution and consensus clients of your choice; all will be interoperable. A new Engine API will be introduced for the clients to communicate with each other.

Consensus After the Merge

Today’s Nakamoto Consensus is simple. Miners create new blocks, and they add them to the heaviest observed valid chain.

Post-merge Ethereum moves to Gasper – combining Casper FFG (the finality tool) plus LMD GHOST (the fork-choice rule) to reach consensus. The TLDR here – this is a liveness favoring consensus as opposed to safety favoring.

The distinction is that safety favoring consensus algorithms (e.g., Tendermint) halt when they fail to receive the requisite number of votes (⅔ of the validator set here). Liveness favoring chains (e.g., PoW + Nakamoto Consensus) continue building an optimistic ledger regardless, but they’re unable to reach finality without sufficient votes. Bitcoin and Ethereum today never reach finality – you just assume after a sufficient number of blocks that a reorg won’t occur.

However, Ethereum will also achieve finality by checkpointing periodically with sufficient votes. Each instance of 32 ETH is a separate validator, and there are already over 380,000 Beacon Chain validators. Epochs consist of 32 slots with all validators split up and attesting to one slot within a given epoch (meaning ~12,000 attestations per slot). The fork-choice rule LMD GHOST then determines the current head of the chain based on these attestations. A new block is added every slot (12 seconds), so epochs are 6.4 minutes. Finality is achieved with the requisite votes generally after two epochs (so every 64 slots, though it can take up to 95).
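The timing figures in this paragraph are easy to verify. A short Python check, using the approximate validator count quoted above (variable names are illustrative):

```python
SLOTS_PER_EPOCH = 32
SECONDS_PER_SLOT = 12
VALIDATORS = 380_000   # approximate count quoted above

epoch_minutes = SLOTS_PER_EPOCH * SECONDS_PER_SLOT / 60
attesters_per_slot = VALIDATORS // SLOTS_PER_EPOCH
finality_minutes = 2 * epoch_minutes   # the typical two-epoch case

print(epoch_minutes, attesters_per_slot, finality_minutes)
```

So each slot sees roughly 12,000 attestations, and finality in the usual case takes a bit under 13 minutes.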

Concluding Thoughts

All roads lead to the endgame of centralized block production, decentralized trustless block validation, and censorship resistance. Ethereum’s roadmap has this vision square in its sights.

Ethereum aims to be the ultimate unified DA and settlement layer – massively decentralized and secure at the base with scalable computation on top. This condenses cryptographic assumptions to one robust layer. A unified modular (or disaggregated now?) base layer with execution included also captures the highest value across L1 designs – leading to monetary premium and economic security as I recently covered (now open-sourced here).

I hope you gathered a clearer view of how Ethereum research is all so interwoven. There are so many moving pieces, it’s very cutting-edge, and there’s a really big picture to wrap your head around. It’s hard to keep track of.

Fundamentally, it all makes its way back to that singular vision. Ethereum presents a compelling path to massive scalability while holding dear those values we care so much about in this space.

Special thanks to Dankrad Feist for his review and insights.
