More Than Just Scaling With Hardware

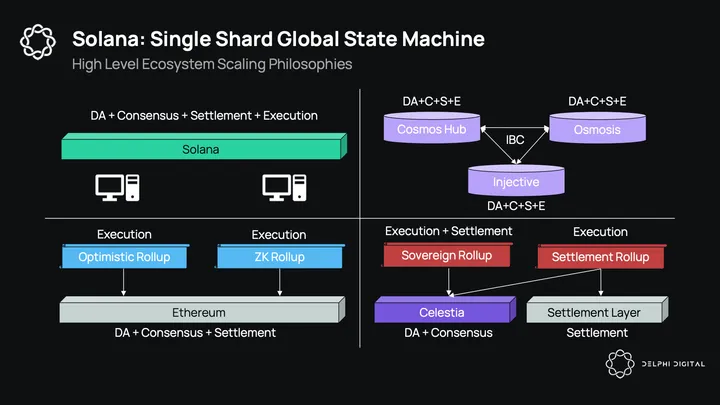

About a year ago, I wrote a piece on Cosmos and the appchain thesis. In that report, I had a slide with a high-level overview of how blockchain ecosystems intend to scale: Ethereum with execution rollups (either zk or optimistic), Celestia with sovereign rollups, Cosmos with appchains, and Solana with hardware. Looking back, I feel this slide does not paint the complete picture for Solana and puts it in a somewhat unfair light. Solana is not just about slapping some expensive hardware on a blockchain and calling it a day; it is about optimizing software to take full advantage of performant hardware.

Solana disagrees philosophically with other blockchains on many levels; latency should be low, fees should always be cheap, everything should be on one monolithic layer because composability is the killer app of blockchains, we should use hardware thoughtfully for performance advantages, etc. Some people don’t like that Solana goes against the prevailing modular narrative or wisdom of the crowd, but I think it should be celebrated. It is extremely useful to have different ecosystems with different philosophies designing systems with different tradeoffs. After all, doesn’t that just make our industry more decentralized? Diversity of thought, vs. groupthink, is good. It’s not like L2s, rollups, and appchains don’t have their own significant tradeoffs.

In this report, I will focus on some of the new (and upcoming) important optimizations, including:

- Isolated fee markets
- Firedancer
- MEV
- State compression
- DePIN
- DeFi
- And even SVM rollups

I’ll also go over Solana’s validator decentralization, economics, and some centralization concerns that arise. What I will not be talking about in this report is Solana consensus and the basics like proof-of-history (PoH), Tower BFT, and Turbine. If you are interested in those, I can recommend this piece by Figment and this ~1h video from Near’s Whiteboard series. With that being said, this is going to be a long report, so I want to jump right in. We’ll start with the main point of contention with respect to Solana: validation.

Validators: Decentralization, Economics, Present & Future

Expensive hardware to run full nodes is the most common critique against Solana. With full nodes being too expensive to run, the number of nodes will be restricted to a small subset of network participants. Solana is also fast with 400ms targeted slot times; a globally distributed validator set is necessarily slower than a centralized one, so how does Solana fare, and how does it achieve this performance?

Validator Decentralization

Solana currently has ~2.9k full nodes, of which ~1.8k are staked (i.e., validators). Some of the largest validators are ones you would recognize, like Coinbase, P2P, Everstake, Figment, Jump Crypto, etc. But somewhat unique to Solana is that it has numerous applications running validators as well. Projects like MarginFi (DeFi), Solana Compass (analytics), Phantom (wallets), numerous NFT projects and more all run independent validators. There are a few ways to measure the decentralization of a network, and having a bunch of apps in different verticals (who compete for blockspace) independently verify and validate the network can only be seen as a positive in this respect.

As for the distribution of stake, our first look is at ASNs. An autonomous system (AS) is a large network of servers with a single routing number, known as its ASN (autonomous system number). These can be geographically diverse networks but are still controlled by one entity (e.g., Amazon). With Solana’s higher validator requirements, operators frequently run nodes out of data centers (this is true for most chains, not just Solana). You want to keep stake as distributed as possible here, because if more than a third of the stake sat in data centers that decided to stop servicing Solana, the chain would halt.
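To make the ASN concern concrete, here is a minimal sketch of how one might check whether any single provider hosts enough stake to halt the chain. It assumes a hypothetical snapshot of `(validator_id, asn, stake)` tuples with made-up values, and assumes the chain loses liveness once more than one third of stake goes offline; it is an illustration, not a real Solana data source or API.

```python
from collections import defaultdict

# Assumption: the chain halts if MORE than one third of stake disappears at once.
HALT_THRESHOLD = 1 / 3


def stake_share_by_asn(validators):
    """Return {asn: fraction_of_total_stake} from (validator_id, asn, stake) tuples."""
    totals = defaultdict(int)
    for _validator_id, asn, stake in validators:
        totals[asn] += stake
    grand_total = sum(totals.values())
    return {asn: stake / grand_total for asn, stake in totals.items()}


def asns_that_could_halt(validators, threshold=HALT_THRESHOLD):
    """List the ASNs whose combined stake alone exceeds the halt threshold."""
    shares = stake_share_by_asn(validators)
    return sorted(asn for asn, share in shares.items() if share > threshold)


# Hypothetical snapshot (illustrative numbers only, not real Solana data).
snapshot = [
    ("val-a", "AS-1", 30),
    ("val-b", "AS-1", 10),
    ("val-c", "AS-2", 30),
    ("val-d", "AS-3", 30),
]
print(asns_that_could_halt(snapshot))
```

In the Hetzner case described below, a provider holding ~20% of stake would not appear in this list, which matches the point that the ban was not enough to halt the network.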

This isn’t just hypothetical, either: in November, Hetzner kicked all Solana validators off its network. At the time, those validators held ~20% of the stake, not enough to halt the network but enough to highlight the risk that relying on data centers (and external companies) poses. While Hetzner has only officially banned Solana so far, other chains should take note, especially Cosmos chains, where Hetzner frequently hosts a high percentage of stake.

The next angle is geographic decentralization. This one is rather self-explanatory: if a country bans staking, will the chain halt or continue to build blocks? Solana, like many other PoS blockchains, uses a BFT mechanism for finality. Solana’s Tower BFT finalizes after 32 slots (~12 seconds) and, optimistically and for all practical purposes, after ~3 seconds. For a chain like Bitcoin, with no hard finality (Nakamoto consensus), miners could drop off but the network would keep producing blocks. Ethereum’s hybrid mechanism behaves similarly, as we have seen recently. But for chains like Solana or Cosmos, with hard finality after a short period of time (1 block for Cosmos and 32 slots for Solana), the chain will halt if consensus cannot be reached. While stake here is fairly well distributed, the US share has grown over recent months and is something to monitor.

Then there is Solana’s Nakamoto coefficient, a metric Solana loves to show. It represents the minimum number of validators that together control 33% of the stake and could therefore stop the chain from finalizing. The one caveat I would add is that the Solana Foundation controls ~20% of stake and directly improves this metric by delegating only to small and medium-sized validators. Still, if you were to remove the Foundation’s 78.8M SOL of delegated stake, the metric would be ~24. This is impressive however you slice it, as it’s much higher than the typical Cosmos chain’s ~7 and even Ethereum’s estimated ~20 (this doesn’t mean Solana is more decentralized than Ethereum; it’s just one metric). While the same entity could run multiple validators to inflate this metric, 16 of the 32 validators that make up the Nakamoto coefficient are doxxed, known entities.
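The Nakamoto coefficient is simple to compute: sort validators by stake and count how many of the largest it takes to exceed one third of the total. The sketch below does that, plus a helper for the "remove the Foundation" adjustment, assuming you have each validator's total stake and the Foundation's per-validator delegation as two aligned lists. The stake values are hypothetical and chosen only to show the effect of delegating to smaller validators.

```python
from fractions import Fraction


def nakamoto_coefficient(stakes, threshold=Fraction(1, 3)):
    """Smallest number of validators whose combined stake exceeds `threshold` of the total."""
    total = sum(stakes)
    running = 0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:  # exact comparison thanks to Fraction
            return count
    return len(stakes)


def remove_delegations(stakes, delegated):
    """Subtract one delegator's per-validator stake (e.g. Foundation delegations)."""
    return [max(stake - d, 0) for stake, d in zip(stakes, delegated)]


# Hypothetical network: five small validators boosted by a foundation, two large ones.
stakes = [10, 10, 10, 10, 10, 30, 20]
foundation = [5, 5, 5, 5, 5, 0, 0]

print(nakamoto_coefficient(stakes))
print(nakamoto_coefficient(remove_delegations(stakes, foundation)))
```

Removing the (hypothetical) foundation delegations lowers the coefficient here, which is the same direction as the ~32 to ~24 drop described above.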

The flip side is that ownership of the SOL actually being delegated is still quite concentrated. We know the Foundation accounts for 1/4th of staked SOL, but other early investors also own an outsized portion. This is not a knock on anything Solana did; it is more a symptom of when they raised (when ICOs were banned) and how little investor interest there was at the time. This will get better with time and is the reality for any network that launches as PoS. To put it in perspective, Alameda’s locked stake is ~45M SOL (~$900M), or ~10% of supply. This stake is now tied up in bankruptcy proceedings and has an average unlock date in 2025.

As for immediate sell pressure, one account, most likely an early investor, has been TWAP-ing out over the past few months, selling ~7M SOL so far with ~10M left. As I’ve said, this just needs some time to play out. For any network that wants to be global and credibly neutral, not just the delegated stake but also ownership of the underlying asset needs to be well distributed.

So, how decentralized is Solana? I will say that Solana’s current validator decentralization is quite impressive all things considered, and I bet if you asked a random person in crypto they wouldn’t guess close to these metrics. There is a natural decentralization tradeoff when trying to be a high performance chain, as the more dispersed the validators are, the higher the latency in sending/receiving messages and coming to consensus. Solana’s PoH, Tower BFT, Turbine, and Gulf Stream all play parts here in enabling a performant, geographically-dispersed chain.

With that being said, there are centralizing forces that will present themselves over time if not addressed.

Validator Economics

While blockchains can bootstrap relatively easily in the short run with high inflation rates (i.e., penalties on non-stakers), in the long run they need to produce some sort of economic value to become self-sustaining. Solana’s value prop is low fees. “Solana is for the poors” can bring in many users, but it also directly limits the profitability of the network. Solana has three types of fees paid on the network:

- Vote Fees: Fees validators pay to vote on blocks (~0.9 SOL/day)
- Base Fees: Currently fixed transaction fee on Solana (0.000005 SOL/tx)
- Priority Fees: Variable fee specified by user and dependent on CUs (compute units) used
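For a single user transaction, the base and priority fees combine roughly as sketched below. This assumes the convention used by Solana's compute budget at the time of writing: a base fee of 5,000 lamports (0.000005 SOL) per signature, plus a priority fee equal to a user-set compute unit price (in micro-lamports per CU) times the requested compute unit limit, rounded up to whole lamports. Treat the function and parameter names as illustrative, not an SDK API; vote fees are not modelled.

```python
LAMPORTS_PER_SOL = 1_000_000_000
BASE_FEE_LAMPORTS_PER_SIGNATURE = 5_000  # 0.000005 SOL for a one-signature tx
MICRO_LAMPORTS_PER_LAMPORT = 1_000_000


def transaction_fee_lamports(num_signatures, compute_unit_limit, compute_unit_price_micro_lamports):
    """Base fee per signature plus an optional priority fee, in lamports."""
    base_fee = num_signatures * BASE_FEE_LAMPORTS_PER_SIGNATURE
    # Priority fee: price per CU (micro-lamports) x requested CUs, rounded UP to whole lamports.
    priority_fee = -(-compute_unit_limit * compute_unit_price_micro_lamports // MICRO_LAMPORTS_PER_LAMPORT)
    return base_fee + priority_fee


# A 200k-CU transaction with no priority fee pays only the fixed base fee...
print(transaction_fee_lamports(1, 200_000, 0) / LAMPORTS_PER_SOL)
# ...while bidding 10,000 micro-lamports per CU adds 2,000 lamports on top.
print(transaction_fee_lamports(1, 200_000, 10_000))
```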

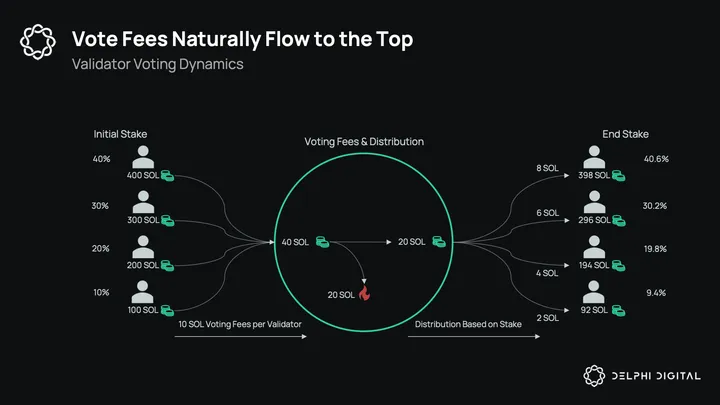

As it stands today, the majority of SOL fees are from voting, usually making up anywhere from 65-85% of validators’ income.

If you’re wondering, yes: voting fees are circular. Every validator, no matter its stake, needs to vote on every block. Whether you have 50k or 2M SOL of stake, you pay the same in voting fees. Voting fees, along with base and priority fees, are then burned at a rate of 50%, with the remainder going to the block’s proposer. Since leader slots are assigned by stake weight, larger stakers propose more blocks and thus reap more of the fees, which, as of now, come primarily from voting. This combination of a fixed cost and stake-proportional income is a naturally centralizing force, as larger validators are essentially taking voting fees from smaller ones. The example below is a high-level illustration of this dynamic. After ~10 days of voting, the largest validator has gained 0.6% vs. its starting stake while the smaller one has lost 0.6%, purely from voting.
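The fixed-cost, stake-proportional-income dynamic can be sketched in a few lines. This toy model assumes every validator pays the same ~0.9 SOL/day in vote fees, 50% of those fees are burned, and the rest is paid to leaders in exact proportion to stake; it ignores inflation rewards, commissions, skipped slots, and non-vote fees, and the stake distribution is hypothetical. It is not meant to reproduce the chart's exact figures.

```python
VOTE_COST_SOL_PER_DAY = 0.9  # approximate per-validator vote fee cited in the text
BURN_RATE = 0.5              # share of fees burned before the leader is paid


def net_vote_pnl(stakes, days):
    """Net SOL each validator gains or loses purely from voting over `days` days."""
    total_stake = sum(stakes)
    cost_per_validator = VOTE_COST_SOL_PER_DAY * days
    total_vote_fees = len(stakes) * cost_per_validator
    leader_pool = total_vote_fees * (1 - BURN_RATE)
    # Assumption: leader slots (and so fee income) are exactly proportional to stake.
    return [leader_pool * stake / total_stake - cost_per_validator for stake in stakes]


# Hypothetical network: one 2M SOL validator and 99 validators with 50k SOL each.
stakes = [2_000_000] + [50_000] * 99
pnl = net_vote_pnl(stakes, days=10)
print(f"large validator: {pnl[0]:+.1f} SOL, small validator: {pnl[1]:+.1f} SOL")
```

The large validator comes out ahead while every small one pays more in votes than it earns back, and the network as a whole loses the burned half.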

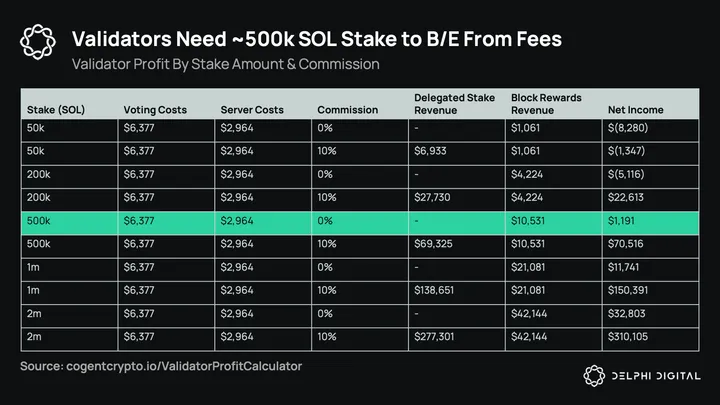

As a result, until the Solana network starts to generate meaningful fee revenue outside of votes, the minimum stake required to be a profitable validator will be quite high. Under current economics, a validator charging 0% commission needs ~500k SOL (~$10M USD) of stake to break even. While validators are free to increase their commission (i.e., the share of SOL inflation rewards they keep), doing so makes it harder to attract stake delegations. This is part of the reason the Solana Foundation tries to delegate to solo or smaller validators, although it is debatable whether it would be better off concentrating its delegation on a smaller number of validators rather than spreading it over many unprofitable ones.

As votes cannot be relied upon in perpetuity due to their centralizing nature, how does Solana create sustainable fee income? First, we should say that if Solana really wanted to, they could probably increase base fees for most users by 10-100x today and not lose any of them (base fees are currently $0.0001). But restricting blockspace to create fee revenue this early in Solana’s life would be extremely short-sighted and goes against the ethos of the chain. It’s also a very simple parameter to switch if you really needed to (vs. the much more challenging ambition of creating a fast, cheap, global single-state machine). So instead, outside of the obvious (create apps people actually want to use), there are three ways to improve Solana’s economics:

- Increase supply of blockspace (Firedancer)
- Improve the efficiency of MEV extraction (Jito)
- Accurately isolate hot state & price accordingly (fee markets)

Firedancer

Firedancer. You’ve probably heard it hyped up on Twitter: how it’s going to 10x Solana’s performance, lead to 1M TPS, stop the chain halts, and lead Solana to Valhalla. You’re excited. You then look into how it works, and you end up more confused than when you started.

Don’t worry, you’re not alone. At a high level, Firedancer brings two things to Solana:

- Performance gains
- Client diversity

Performance Gains: Maybe the best way to start with Firedancer is to talk about why the Solana Labs client has had issues in the first place. The reality is that Solana did not have the resources when it launched the chain, nor could the team foresee the issues that would arise once Solana was running on mainnet. The Labs client also just has a lot of code, which makes it complex and prone to bugs. Anatoly himself will tell you that Firedancer is the client that should have been built from the start if they had had the resources. Another analogy I’ve heard is that the Labs client was built like a house without a blueprint. Firedancer, then, is essentially a complete rewrite of the Solana validator from scratch, this time with a blueprint.

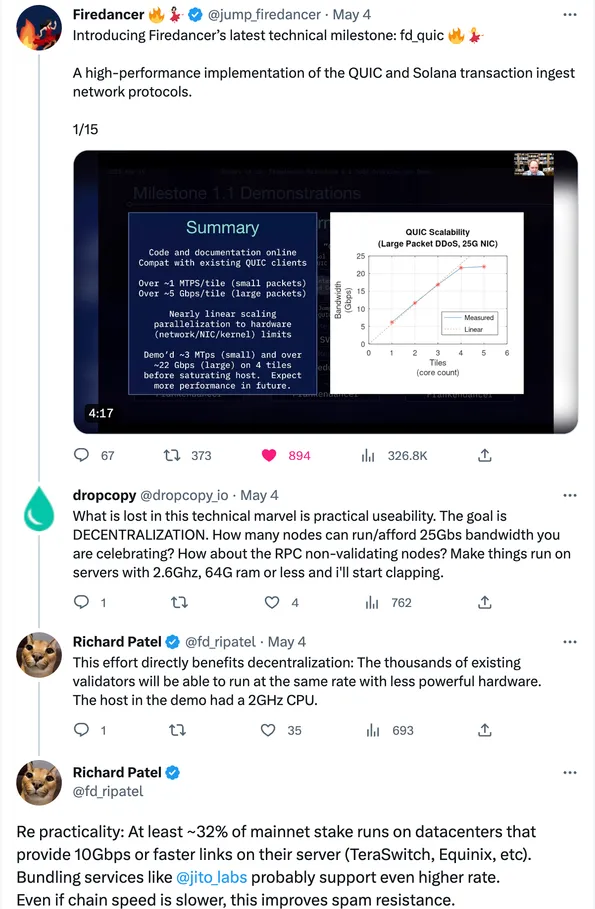

There have only been a few demos of Firedancer so far, but the most recent milestone was fd_quic, with test results showing it can handle 21.8 Gbps of incoming transactions on 4 cores (for comparison, the Solana Labs client targets 1 Gbps). Now, this doesn’t mean Firedancer will turn Solana into a 1M TPS blockchain. These test results mostly prove that “QUIC will not be the bottleneck or require a ton of cores.” The important takeaway is that Firedancer is about software optimizations to the validator client and is expected to be significantly more performant than the current Labs client. In Anatoly’s words, “Now that Jump has seen how Solana works and what the end state should be, they can build these hyper-optimizations.” Also, if you’re wondering, it shouldn’t increase hardware requirements, and could even lower them.
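A quick back-of-the-envelope conversion shows why an ingest number in Gbps is not a TPS number. The sketch below assumes every transaction is a maximum-size Solana packet (1,232 bytes) and ignores protocol overhead, signature verification, and execution entirely, so it is only a raw ceiling on how many transactions the networking layer could receive.

```python
MAX_TX_BYTES = 1232  # Solana's maximum serialized transaction size (one packet payload)


def max_transactions_per_second(gbps, tx_bytes=MAX_TX_BYTES):
    """Upper bound on transactions received per second at a given link rate."""
    bits_per_second = gbps * 1_000_000_000
    return bits_per_second / (tx_bytes * 8)


for rate in (1, 21.8):
    print(f"{rate} Gbps -> ~{max_transactions_per_second(rate):,.0f} max-size tx/s received")
```

Even at the Labs client's 1 Gbps target, ingest is not what limits throughput; execution, state access, and consensus are, which is why the fd_quic result is framed as "QUIC will not be the bottleneck" rather than as a TPS claim.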

Client Diversity: When your resident CT anon talks about Solana decentralization, they usually talk about the cost of validating. For me, that’s not my main concern… not even close, really. My first concern would be the number of critical programs that are still gated by a multi-sig. For example, every NFT is based on Metaplex’s standards, and until they make their programs immutable in ~2 years, every single NFT on Solana could be changed to, I don’t know, a banana, or a coconut, or hell, maybe a banana-shaped coconut… you get the idea. And even with this immutability roadmap, Metaplex has now introduced a tax, so the centralization/monopoly concerns remain. My second concern would be client diversity. Solana is 100% reliant on the Labs validator client, and we have seen this centralization hurt the network numerous times in the past. Firedancer will hopefully bring some diversity.

With a single client, if it has a bug, you’re pretty much, well… f’ed. We saw client diversity in action on Ethereum a few weeks ago, when one client stopped finalizing blocks but enough of the network was on other clients to keep it going. Firedancer won’t bring exactly the same level of diversification (due to nuances in how Solana handles finality vs. Ethereum), but it will be a step-up improvement in diversity for Solana. The risk is that if Firedancer really is 10x+ more performant than the Labs client, many validators will simply run Firedancer instead, moving the single point of failure from the Labs client to Firedancer. What will likely happen is that many of the larger validators who can afford to run two servers will run both, although it’s not really clear how failover would work if Firedancer goes down, since the Labs client would be behind (i.e., slower than) Firedancer. In a perfect world, validators would switch over to the Labs client, the chain would catch up and keep finalizing, and the only real downside would be fees spiking to deal with the Labs client’s reduced capacity. A loss of performance while maintaining liveness would be a big win, but we can only speculate for now and will see how it plays out once Firedancer is on mainnet.

Now for the tradeoff to Firedancer (wait, there are tradeoffs?). While all of this should help Solana from both a performance and diversity standpoint, it will make Solana’s state growth even worse. At the current capacity target (1 Gbps), Solana would generate 4 petabytes of data every year. Now multiply that by the 10x+ capacity Firedancer will provide, and you can see how this will become Solana’s biggest bottleneck. While Solana isn’t close to its 1 Gbps capacity target today, this state growth issue will become real if Solana reaches mass adoption. There’s an incentive here for someone to design a solution specifically for Solana.
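The 4 PB/year figure follows directly from running a 1 Gbps link flat out for a year. The sketch below does that arithmetic, assuming every ingested byte is persisted (an upper bound, since not all traffic becomes stored ledger data) and a 365-day year.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000


def petabytes_per_year(gbps):
    """Data generated in a year at a sustained link rate, in petabytes (1 PB = 1e15 bytes)."""
    bytes_per_second = gbps * 1_000_000_000 / 8
    return bytes_per_second * SECONDS_PER_YEAR / 1e15


print(f"1 Gbps:  ~{petabytes_per_year(1):.2f} PB/year")
print(f"10 Gbps: ~{petabytes_per_year(10):.1f} PB/year")
```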

The Firedancer hybrid client containing the networking component (QUIC) is expected by the end of the year, but the full validator doesn’t really have a timeline (could be 2024, 2025, or even later). While Firedancer should bring needed performance and diversity, it’s still another full node that will be relatively costly to run. What Solana lacks is the ability for end-users who do not run a full node (99%+ of users) to validate the state, i.e., they do not have light clients. Enter Tinydancer, the Solana “diet client” under development (yes, everything in Solana needs to have a slightly different terminology, because why not? All the words are made up here anyways).

Tinydancer

Firedancer addresses performance and client diversity, but not end-user verifiability.

Most people are coming to the realization that blockchains cannot scale without high hardware requirements for validators. You can try to shift this burden elsewhere, such as to the block builder, but the point remains: the future of finance will not run on a Raspberry Pi. Where blockchains are likely to converge is high hardware requirements to validate and low hardware requirements to verify. We know Solana has high hardware requirements to validate, but there is no way for end-users to actually verify the chain unless they want to spend $300/month on a full node. When users interact with Solana, they have to trust whatever the RPC tells them. This leads us to light clients.

The term “diet client” is a carefully considered one: think of it as a full node on a diet. Under normal conditions, the Tinydancer client doesn’t download blocks and execute transactions like full nodes do. Much like trust-minimized light clients, it relies on a single honest full node to alert it if something is fishy. Tinydancer is thus expected to be a lot lighter than a full node in terms of hardware specs. The gold standard here would be running Tinydancer on the Saga phone. That would give everyday end-users the ability to verify Solana and render Solana’s high validator requirements rather moot.

On the other hand, there is notable criticism regarding the design of Tinydancer. We will not dive fully into the actual mechanics here, but it’s worth noting that there are important differences in the designs of diet clients and trust-minimized light clients as imagined by Celestia. When it comes to light nodes, the name of the game is cheap verification. Diet clients sit somewhere in-between trust-minimized light nodes and full nodes on this spectrum. While they can be resource-light during normal operation, when presented with an alert, they may have to start downloading full blocks, which opens the door for potential griefing vectors.

Now, there’s still a long way to go here and the exact design is not finalized. Regardless, Tinydancer is a project worth following, and is arguably even more important than Firedancer, depending on where you sit on the philosophical spectrum.

So in regards to clients, we have the Labs validator client, a new, more performant validator client in Firedancer, a diet client in Tinydancer, and finally, an MEV client in Jito. Unlike the dancers, Jito is live today.

Jito & MEV

To understand Jito, you should first understand Solana transactions a bit. As we highlighted previously, transaction fees can be broken down into vote fees, priority fees, and base fees. For our purposes, we are concerned with base fees. Base fees on Solana are a fixed 0.000005 SOL/tx, or about 1/100th of a cent. Because the price is fixed, the amount of compute (or blockspace) you intend to use does not change the amount you pay (compared to Ethereum, where your transaction fee is a function of how much compute/gas you require).

As a side effect of this design choice, Solana has been plagued by spam. Most of these transactions have been between 200k-600k CUs; with a 48M CU block limit this is 0.4%-1.25% per transaction. In times of high demand, searchers start spamming these transactions with no real disincentive to stop them. This chews up blockspace for a bunch of failed arb transactions and leads to extra work (that’s not accurately compensated) for validators and end-user frustration. Over the past year, there have been >1 billion failed arbitrage transactions on Solana, a success rate under 3%. More recently, this success rate has been ~4.5%. Still, there are a lot of failed arbs taking up compute.

Jito has crafted a solution focused on improving this MEV extraction mechanism through auctions and bundles, which brings two benefits:

- Less spam eating up blockspace
- Higher revenue for validators, as they can capture these profits through auctions

Now, running auctions on Solana is not as simple as on Ethereum or other blockchains. On Ethereum, block times are a set 12s, during which a public mempool receives transactions and fills up with them. At the end of the 12s, a block is proposed from these transactions and executed. This is a discrete time window where you can run an auction (keep taking bids for 12s and then execute the highest bid at the end). Solana doesn’t have a mempool. Instead, transactions are sent directly to the leader and executed on a FIFO basis as they arrive. By default, you cannot run an auction under this continuous time architecture, as there are no discrete time windows to let transactions build up before executing.

Jito MEV-client creates this window, effectively running their own pseudo-mempool with an auction every 200ms. For normal arbs on Solana, searchers cannot identify the transactions they’re targeting until they have actually occurred on-chain (remember, no mempool). This is why the majority of arb MEV on Solana is back-runs (can’t front-run if you don’t know what you’re front-running until it’s executed).

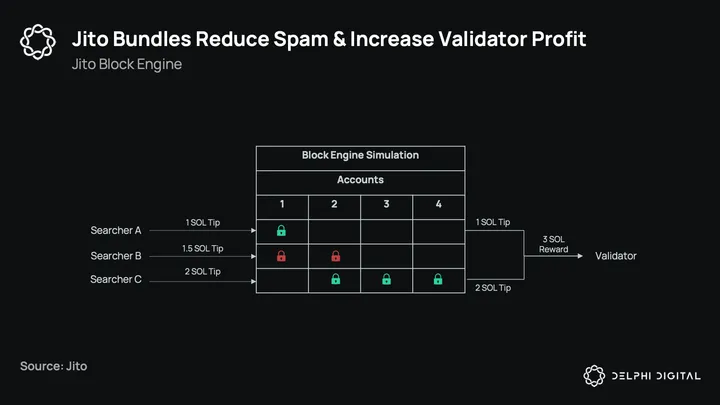

With Jito, searchers can see the transactions coming in, simulate what’s going to happen, and then create a bundle around them to ensure they get executed atomically. These bundles then get priority access to the leader through a separate processing pipeline with a high success rate. Searchers’ execution can now be based on the highest auction bid vs. a probabilistic guarantee through spamming.
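The core idea of an auction window with atomic bundles can be sketched as follows: collect bundles during a window, then accept them in order of tip, skipping any bundle that would write to state already claimed by a higher bidder. The `Bundle` type and `run_auction` function are hypothetical names for illustration; Jito's actual block engine logic is more involved and is not reproduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bundle:
    searcher: str
    tip_lamports: int
    write_accounts: frozenset  # accounts the bundle needs to write-lock


def run_auction(bundles):
    """Pick winners for one auction window: highest tip first, skipping conflicting bundles."""
    winners = []
    locked = set()
    for bundle in sorted(bundles, key=lambda b: b.tip_lamports, reverse=True):
        if locked.isdisjoint(bundle.write_accounts):
            winners.append(bundle)
            locked.update(bundle.write_accounts)
    return winners


window = [
    Bundle("A", 100, frozenset({"pool-x"})),
    Bundle("B", 300, frozenset({"pool-x"})),  # outbids A for the same pool
    Bundle("C", 50, frozenset({"pool-y"})),
]
print([b.searcher for b in run_auction(window)])
```

The design point is that the highest bid, not the fastest spammer, wins contested state within the window, which is exactly what a continuous FIFO pipeline cannot offer by itself.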

Recently, Jito’s bundles have been gaining traction. This has been a bit of a chicken-and-egg problem, because searchers won’t use bundles if validators aren’t running Jito, and validators won’t run it if searchers aren’t using it, so recent bundle adoption is a good sign. Jito is now run by ~26% of total stake, up from single digits in February, after being open-sourced in Q4 of last year. Jito democratizes access to MEV capture on Solana, and that MEV capture has been in a steady uptrend.

For some rough numbers, let’s break down two recent epochs (452 and 453) into MEV, vote fees, base fees, and priority fees. For vote/base/priority fees, we’re taking the total for the epoch and multiplying it by Jito’s percent of total stake.

As you can see, these MEV tips are starting to become a material amount. In a typical epoch (represented here by epoch 452), MEV is usually around 4% of rewards. Epoch 453 was much higher, at ~20% of fees. While that is an outlier for now, Jito is only just starting to find adoption, and that, plus a return of on-chain activity, could make such numbers more common. One nuance to add is that these MEV rewards are shared with stakers (as the previous chart highlighted), so unlike the other rewards, they don’t all go to validators. The benefit, of course, is the democratization of MEV.

In May, we’ve seen numerous tips >1 SOL. As the average Solana block reward is <0.001 SOL, some of these bundles pay orders of magnitude more than a block would otherwise earn. While 1 SOL tips may seem low, keep in mind that Solana blocks are 400ms. Over the course of a year, that’s ~78.8M blocks. And while 1 SOL bundles are not a regular occurrence (especially in a bear market), they can add up to a meaningful amount of revenue over time as Jito adoption and overall crypto and Solana on-chain activity rise.
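The ~78.8M figure is just the number of 400ms slots in a 365-day year. The sketch below reproduces it and adds a purely hypothetical revenue estimate (what a given fraction of slots carrying a fixed tip would sum to). Real block counts are lower because some slots are skipped, so treat the result as an upper bound.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def slots_per_year(slot_ms=400):
    """Number of slots in a 365-day year at a given slot time (integer math)."""
    return SECONDS_PER_YEAR * 1000 // slot_ms


def annual_tip_revenue_sol(fraction_of_slots, tip_sol, slot_ms=400):
    """Hypothetical yearly tips if `fraction_of_slots` of slots each carried `tip_sol`."""
    return slots_per_year(slot_ms) * fraction_of_slots * tip_sol


print(f"{slots_per_year():,} slots per year")
print(f"{annual_tip_revenue_sol(0.01, 1.0):,.0f} SOL if 1% of slots had a 1 SOL tip")
```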

With that being said, Jito doesn’t solve all of Solana’s spam or fee issues. Spamming can still be a higher EV play for searchers if they’re reacting to something that has already happened on-chain. With more Jito adoption, these should become less frequent. But they’ll still exist, especially with respect to being first in line for a CeFi-DeFi arb/latency race. We should also note, however, that the low latency, continuous block production architecture of Solana, while posing some challenges in running auctions and leading to colocation, does come with some benefits like easier mitigation of DEX MEV.

Low latency systems are useful for applications like CLOBs, and those building them (like the co-founder of Ellipsis) will prefer them over systems with long block times. And as we highlighted when we started, Solana’s low latency has not led to any sort of extreme colocation as of yet. Maybe this will change over time if activity picks back up and there’s more money to be made on-chain, but for now colocation has been well mitigated.

To wrap it up, Jito is mostly about running auctions for MEV bundles, but there’s more to fee markets than just MEV auctions.

Isolated Fee Markets

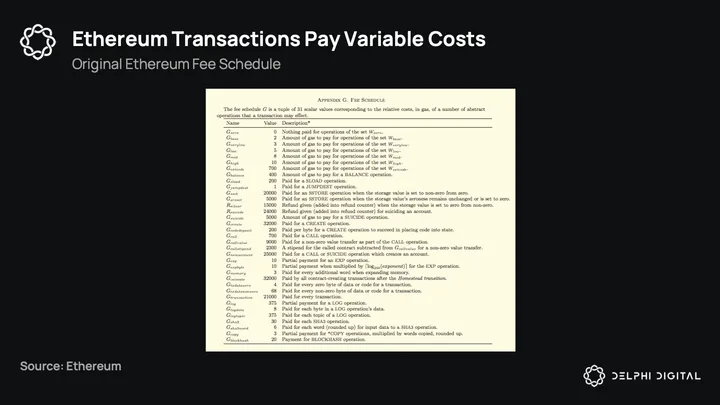

To understand the SVM’s fee markets, we should understand the EVM’s at a high level. In the EVM, every operation is assigned some specific cost. These costs are mostly determined by the amount of computation and how long this computation will take. For some common examples, sending ETH on Ethereum costs 21k gas, a token approval ~41k, and a Uniswap trade ~127k. The more complex, the more gas the transaction will consume. These gas units are then multiplied by the base fee, which is a function of demand. To meter demand (and state growth), Ethereum sets a block gas target of 15M total gas. If a block consumes more, the base fee goes up; if less, it goes down. Ethereum is always trying to facilitate the same amount of computation taking place on the network, and so if demand goes up, price for this computation goes up.
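Ethereum's base fee adjustment is specified in EIP-1559, and the sketch below follows that update rule: each block can move the base fee by at most 1/8 (12.5%), in proportion to how far gas used deviates from the 15M target (blocks can hold up to 2x the target, i.e. 30M gas). Function and constant names are our own; only the integer formula follows the EIP.

```python
GWEI = 10**9
GAS_TARGET = 15_000_000
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # caps the per-block change at 12.5%


def next_base_fee(base_fee_wei, gas_used, gas_target=GAS_TARGET):
    """Base fee (in wei) for the next block, following the EIP-1559 update rule."""
    if gas_used == gas_target:
        return base_fee_wei
    if gas_used > gas_target:
        # Above target: raise the fee, always by at least 1 wei.
        delta = base_fee_wei * (gas_used - gas_target) // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
        return base_fee_wei + max(delta, 1)
    # Below target: lower the fee proportionally.
    delta = base_fee_wei * (gas_target - gas_used) // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    return base_fee_wei - delta


# A completely full block (30M gas) at a 10 gwei base fee pushes it to 11.25 gwei.
print(next_base_fee(10 * GWEI, 30_000_000) / GWEI)
```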

Notably, all of these transactions (whether an ETH transfer or Uniswap trade) share the same base fee. While an ETH transfer will always cost ~1/6th of a Uniswap trade, the total transaction fee paid will both rise and fall in tandem with the base fee (measured in gwei). If the base fee is 10 gwei, the ETH transfer will cost 0.00021 ETH (21,000 * 10 gwei) and the Uniswap trade 0.00127 ETH (127,000 * 10 gwei). If gas prices rise to 20 gwei, they’ll now cost 0.00042 ETH and 0.00254 ETH, respectively. When demand for Ethereum’s blockspace rises, the prices for all transactions on Ethereum rise proportionally. When PEPE mania hit a few weeks ago, it didn’t matter what you were trying to do on-chain, it got expensive.
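The arithmetic above is easy to check: a transaction's fee is its gas units times the shared base fee, so every transaction's cost scales by the same factor when the base fee moves. The sketch uses `Decimal` to avoid floating-point rounding and ignores priority tips, which sit on top of the base fee.

```python
from decimal import Decimal

GWEI_PER_ETH = Decimal(10) ** 9


def fee_in_eth(gas_units, base_fee_gwei):
    """Transaction fee in ETH for a given gas usage and base fee (priority tip ignored)."""
    return Decimal(gas_units) * Decimal(base_fee_gwei) / GWEI_PER_ETH


for gwei in (10, 20):
    transfer = fee_in_eth(21_000, gwei)
    swap = fee_in_eth(127_000, gwei)
    print(f"{gwei} gwei: ETH transfer {transfer} ETH, Uniswap trade {swap} ETH")
```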

The SVM, at least so far, has worked very differently. The biggest difference, as we mentioned in the Jito section, is that transaction fees have been fixed regardless of the number of CUs (compute units) a transaction consumes. We can think of compute units here as gas. Using our example above, the ETH transfer and Uniswap trade would cost the same (0.000005 SOL), even though they require different amounts of work from validators and full nodes. This is an inefficient way to allocate resources and does little to deter spam. The Solana team realized that fixed fees for everything were a design flaw and started working on a redesign of their fee markets in late 2021. While many parts are still a work in progress, the fee markets are starting to change considerably, with some unique benefits enabled by the SVM. More specifically: fee isolation, the ability to increase fees for “hot” state while leaving other applications and their users unaffected.

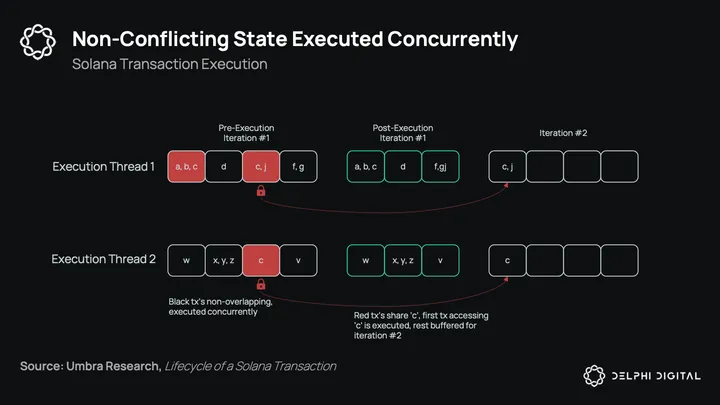

The most important and differentiating factor to understand about the SVM is that it can process transactions in parallel. The SVM is a multi-threaded runtime environment which means that it can take advantage of multiple cores of the validator to process non-overlapping transactions at the same time. The EVM, in contrast, is a single-threaded runtime environment. This means that only one thread is executing all transactions one by one. The SVM achieves this parallel execution by requiring each transaction to specify which piece of state/data it is modifying before execution.

The example below outlines how Solana transactions are executed. We have two separate execution threads with four transactions in each, and the parts of state they’re writing to are identified by individual letters. Since the black transactions are all writing to different/non-overlapping state, they can be executed at the same time. The red transactions, however, are all trying to write to “c.” Since you cannot write to the same state at the same time, these must be executed sequentially, with the first “c” transaction getting a write-lock and being included in the first iteration, and the other “c” transactions buffered until iteration 2 where they will be tried again.
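The write-lock behaviour just described can be sketched as a greedy batcher: in each iteration, take pending transactions in arrival order and run every one whose write set doesn't overlap an account already locked in that iteration; conflicting transactions are deferred. This is a simplified illustration under stated assumptions (write locks only, no read locks, no thread assignment), not Solana's actual banking-stage scheduler, and `schedule` is a hypothetical name.

```python
def schedule(transactions):
    """Group (tx_id, write_set) pairs into iterations with no overlapping write locks."""
    pending = list(transactions)
    iterations = []
    while pending:
        locked, batch, deferred = set(), [], []
        for tx_id, writes in pending:
            if locked.isdisjoint(writes):
                batch.append(tx_id)      # takes the write lock(s) for this iteration
                locked.update(writes)
            else:
                deferred.append((tx_id, writes))  # buffered and retried next iteration
        iterations.append(batch)
        pending = deferred
    return iterations


txs = [("t1", {"a"}), ("t2", {"b"}), ("t3", {"c"}), ("t4", {"c"}), ("t5", {"d"}), ("t6", {"c"})]
print(schedule(txs))
```

Transactions touching "a", "b", and "d" all run in the first iteration, while the hot "c" transactions are forced to run one per iteration.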

This parallel execution and write-locking on state opens up some unique possibilities for fee markets. The red transactions here are clearly in higher demand (“c” is hot). While Solana transactions are predominately sorted on a FIFO basis, the addition of priority fees allows validators to sort by fees as well. This means that as state gets “hot” and transactions start building up, validators can order and execute them by priority fees vs. FIFO (although priority fees are not enforced). At the same time, the rest of the state can be processed as normal. Priority fees are based on the amount of compute the transaction is requesting, so it is a divergence from Solana’s standard fixed fees.

In the chart below, states “b” and “d” are in relatively low demand (compute requested), and so their transactions get through with the low, fixed base fee. State “a” is “warm” and some users pay priority for ordering. State “c” is hot, and ordering becomes sorted by competitive priority fee bidding. Account “c” runs up against the account-specific compute limit and so even though the final “c” transaction is paying more than transactions for a, b, and d, it is not included.

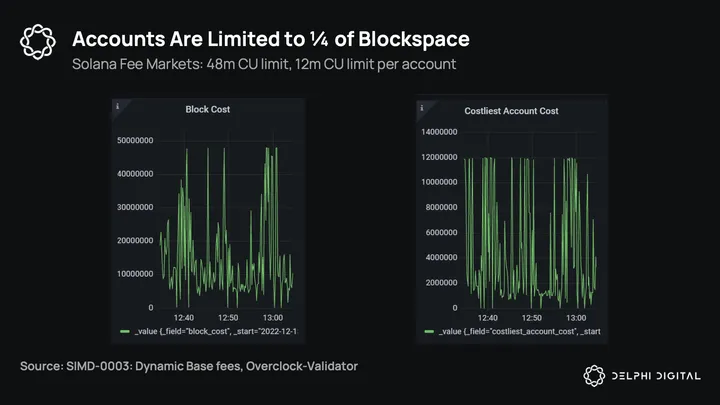

Like Ethereum, Solana has a block limit on the amount of compute it can process. The difference is that Solana has an account specific compute limit as well. With 48M CU blocks, the maximum a specific account can take up is 12M CU. Hot state, as we noted prior, still needs to be executed sequentially, but by restricting a specific account’s state to 1/4th of blockspace, you allow other transactions to not get priced out.
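Putting the two limits together, a local fee market can be sketched as priority-ordered block packing with both a block-wide and a per-account compute cap. The sketch below assumes transactions are sorted purely by priority fee (which, as discussed below, real Solana ordering does not guarantee) and uses hypothetical names; the limits default to the 48M/12M values from the text and are scaled down in the example for readability.

```python
from collections import defaultdict

BLOCK_CU_LIMIT = 48_000_000
ACCOUNT_CU_LIMIT = 12_000_000


def pack_block(transactions, block_limit=BLOCK_CU_LIMIT, account_limit=ACCOUNT_CU_LIMIT):
    """Greedily include (tx_id, priority_fee, compute_units, write_accounts), highest fee first,
    skipping any transaction that would exceed the block or a per-account CU limit."""
    used_block = 0
    used_by_account = defaultdict(int)
    included = []
    for tx_id, _fee, cus, accounts in sorted(transactions, key=lambda t: t[1], reverse=True):
        if used_block + cus > block_limit:
            continue
        if any(used_by_account[a] + cus > account_limit for a in accounts):
            continue  # this account's share of the block is exhausted
        included.append(tx_id)
        used_block += cus
        for a in accounts:
            used_by_account[a] += cus
    return included


# Scaled-down example: block limit 10 CU, account limit 4 CU.
txs = [
    ("c1", 90, 2, {"c"}), ("c2", 80, 2, {"c"}), ("c3", 70, 2, {"c"}),
    ("a1", 20, 2, {"a"}), ("b1", 0, 2, {"b"}), ("d1", 0, 2, {"d"}),
]
print(pack_block(txs, block_limit=10, account_limit=4))
```

As in the chart, the third "c" transaction is excluded even though it bids more than the "a", "b", and "d" transactions, because account "c" has hit its cap.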

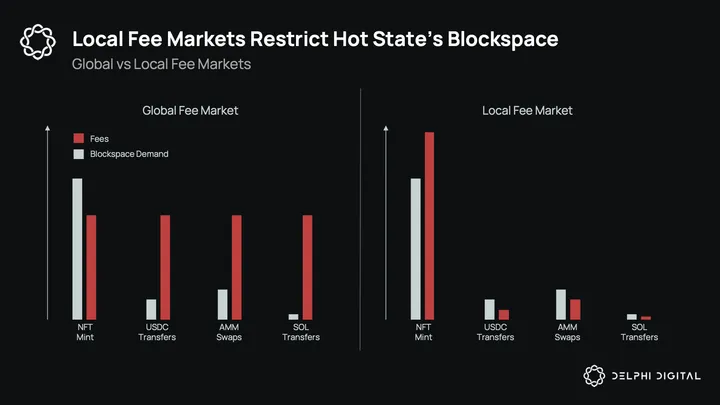

This leads to the main difference in global vs. local fee markets. In a global fee market, the block limit is shared by everyone. This means that if an NFT mint wanted to, it could take up the entire blockspace and raise fees for everyone proportionally. We saw this in 2022 when the BAYC land sale sent Ethereum gas prices to >$1k for hours.

Local fee markets contain such hot state. Using the above example, with Solana-style account limits the BAYC land sale would have been able to use just 1/4th of Ethereum’s blockspace. Gas prices would have been substantially higher for the people participating in the land sale but substantially lower for everyone else, with three quarters of the blockspace reserved for non-BAYC transactions.

As for the numbers 48M/12M, these are somewhat arbitrary and determined by expected capacity that Solana validators can handle. The 12M account limit is roughly equal to what a single thread can process in 400ms (Solana slot time), so by setting an account limit to 12M units, you can restrict an account to ~1 thread. These numbers are of course modifiable, and as we mentioned with Firedancer, it’s likely they will be increased significantly as long as the demand is there. With the 12M account limit, you could also theoretically run into a scenario where you had 4 accounts in high demand all hitting their limits. This hasn’t happened yet, as whenever there’s been hot state it’s usually a single account. But if it happens that 4 activities were dominating blockspace, they could in turn start to price out other transactions. This probably won’t happen anytime soon, but is possible in a bull market. If it really becomes a problem, you could just reduce the account limits further.

Solana usually utilizes ~50% of its blockspace but has had some periods where the limit was hit quite frequently. If demand at the 48M limit is sustained, you basically have two options: let fees rise or increase the block size (the age-old problem).

Isolated fee markets are a fairly elegant design for pricing hot state, but they’re still very new and have some issues in practice. First, as we noted in the Jito section, because Solana has continuous block production and processes transactions roughly first-in, first-out (FIFO), priority fees do not necessarily guarantee inclusion. Before state is actually “hot,” the searcher with the lowest latency will have an advantage over someone paying a high fee. Once an account starts to become saturated and approaches the 12M limit, sorting by priority becomes much easier, but latency (and spamming) will still likely win the first transaction. Second, the block packing stage is still inefficient and transactions are assigned to threads randomly, which again incentivizes spamming. People are working on these problems, but they are notable for now, and it is not entirely clear how the latency war could be eliminated without discrete block times and an auction.
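A toy comparison shows why latency beats fees under continuous processing. The sketch below orders the same two transactions first by arrival time (a simplified stand-in for Solana’s roughly-FIFO pipeline) and then by a hypothetical discrete batch auction that collects transactions for a window before sorting by fee. The timings, fees, and the `window_us` parameter are illustrative assumptions, not measured values or a proposed design.

```python
from dataclasses import dataclass


@dataclass
class Tx:
    sender: str
    arrival_us: int    # microseconds after slot start that the leader sees the tx
    priority_fee: int  # lamports


def fifo_order(txs):
    """Continuous processing: whoever reaches the leader first goes first."""
    return [t.sender for t in sorted(txs, key=lambda t: t.arrival_us)]


def batch_auction_order(txs, window_us):
    """Hypothetical discrete auction: collect txs for `window_us`, then sort by fee."""
    batch = [t for t in txs if t.arrival_us <= window_us]
    return [t.sender for t in sorted(batch, key=lambda t: t.priority_fee, reverse=True)]


txs = [
    Tx("low-latency searcher", arrival_us=50, priority_fee=1_000),
    Tx("high-fee bidder", arrival_us=900, priority_fee=1_000_000),
]
print(fifo_order(txs)[0])                  # low-latency searcher
print(batch_auction_order(txs, 1_000)[0])  # high-fee bidder
```

The design tradeoff is visible in the `window_us` parameter: a longer window lets fees rather than latency decide ordering, at the cost of added delay before anything executes.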

Besides everything mentioned above, there are two other proposals around fees currently being discussed:

- SIMD-003: Dynamic Base Fees: This would introduce a variable global fee, a departure from Solana’s fixed transaction fees. While the global fee would apply to all transactions as it does in the EVM, local fee markets would still absorb the majority of hot state’s demand. This is aimed mostly at the spam problem, since there’s no good mechanism to disincentivize spam on Solana and raising fees is one of the easiest mitigations. The challenge will be keeping the dynamic base fee from pricing out Solana-specific use cases. Many applications on Solana only work because fees are so low: voting, oracle updates, CLOB orders, payments, DePIN (discussed later), etc. These applications cannot tolerate any meaningful fees without becoming impractical. You could probably raise fees for Solana’s end-users by 100x without it mattering much to them, but you can’t really do that for these applications.

- SIMD-0017: Priced Compute Units: Similar to SIMD-003, this would bring Solana’s fee model more in line with the EVM by pricing transactions by the amount of compute they use. Priority fees are already calculated this way, but base fees are not. Some of the frequent transactions mentioned above, like votes, CLOB orders, and oracle updates, use little compute, so this could probably work without pricing out those use cases.
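The sketch below puts both ideas into code. The first part models Solana’s current fees as we understand them: 5,000 lamports per signature plus a priority fee equal to the requested CU limit times a price in micro-lamports per CU (the round-up is our assumption). The second part is a hypothetical dynamic base fee modeled loosely on Ethereum’s EIP-1559 update rule (adjust by up to 1/8 per block toward a utilization target); it is not the SIMD-003 specification, and the 24M CU target and the 5,000-lamport floor are assumptions.

```python
LAMPORTS_PER_SIGNATURE = 5_000
MICRO_LAMPORTS_PER_LAMPORT = 1_000_000


def priority_fee(cu_limit, cu_price_micro_lamports):
    """Priority fee in lamports: requested CU x price per CU, rounded up (assumed)."""
    return -(-cu_limit * cu_price_micro_lamports // MICRO_LAMPORTS_PER_LAMPORT)


def total_fee(num_signatures, cu_limit, cu_price_micro_lamports):
    return num_signatures * LAMPORTS_PER_SIGNATURE + priority_fee(cu_limit, cu_price_micro_lamports)


def next_base_fee(base_fee, used_cu, target_cu, denominator=8, floor=LAMPORTS_PER_SIGNATURE):
    """Hypothetical EIP-1559-style update: move toward the target by up to 1/denominator."""
    delta = base_fee * (used_cu - target_cu) // (target_cu * denominator)
    return max(floor, base_fee + delta)


# Same price per CU, very different compute footprints.
print(total_fee(1, 200_000, 10_000))  # 7000: 5,000 base + 2,000 priority
print(total_fee(1, 5_000, 10_000))    # 5050: a small oracle-style update

fee = LAMPORTS_PER_SIGNATURE
for _ in range(3):  # three consecutive full 48M CU blocks against a 24M target
    fee = next_base_fee(fee, 48_000_000, 24_000_000)
print(fee)  # 7119
```

Pricing by compute keeps small, frequent transactions close to today’s fixed fee, which is the property the proposals need to preserve; the floor in the hypothetical base-fee rule is one way to keep quiet periods from pushing fees below the current level.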

Solana’s ambitions here should not be understated. It is trying to design a fee market that disincentivizes spam, isolates and captures hot state fees, and keeps fees cheap for 99% of users. At the same time, we should be clear about the current state. While the approach is quite innovative, there’s still a lot to be worked out, and it remains to be seen how effective these mechanisms will be in the long run. We should also note that much of Solana’s improved performance is due not just to fee markets but also to the addition of QUIC, which has been the main mitigant for some of the IDO and NFT mint issues that took the chain down in the past.

Now, while the redesign of Solana’s fee capture can be a definite positive for economics and performance going forward, it’s of limited value without useful applications. The next section focuses on three such applications/sectors:

- Compressed NFTs
- DePIN
- DeFi

State Compression & Compressed NFTs

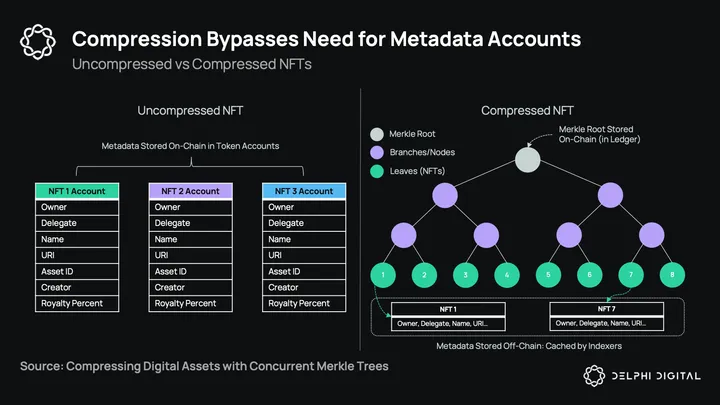

State compression is a new feature that enables the creation and storage of a “fingerprint” or hash of off-chain data on-chain. The fingerprint stored on-chain is the root hash of a Merkle tree storing off-chain data. Those unfamiliar with Merkle trees can refer to this simple visual explainer.
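The core mechanism is a Merkle root plus proofs. The minimal sketch below hashes a few hypothetical metadata records into leaves, computes the root (the only value that would live on-chain), and verifies one record against it with a proof of the kind an indexer would serve. It uses SHA-256 from the standard library for simplicity and assumes a power-of-two number of leaves; the real on-chain program’s hash function and leaf schema differ.

```python
import hashlib


def h(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(left + right).digest()


def merkle_root(leaves):
    level = list(leaves)  # assumes a power-of-two number of leaves
    while len(level) > 1:
        level = [h(level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def merkle_proof(leaves, index):
    """Sibling hashes from the leaf level up to (but excluding) the root."""
    proof, level = [], list(leaves)
    while len(level) > 1:
        proof.append(level[index ^ 1])
        level = [h(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return proof


def verify(leaf, index, proof, root):
    node = leaf
    for sibling in proof:
        node = h(sibling, node) if index & 1 else h(node, sibling)
        index //= 2
    return node == root


# Hypothetical metadata records; only the 32-byte root would be stored on-chain.
metadata = [f'{{"name": "cNFT #{i}", "owner": "wallet{i}"}}'.encode() for i in range(4)]
leaves = [hashlib.sha256(m).digest() for m in metadata]
root = merkle_root(leaves)

# An indexer serves the record plus a proof; anyone can check it against the root.
proof = merkle_proof(leaves, 2)
print(verify(leaves[2], 2, proof, root))  # True
tampered = hashlib.sha256(metadata[2].replace(b"wallet2", b"attacker")).digest()
print(verify(tampered, 2, proof, root))   # False
```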

The first use case to adopt compression is NFTs; more precisely, Metaplex with Bubblegum and the new cNFT standard. In the chart below, we give a high-level comparison of uncompressed and compressed NFTs. With uncompressed (or standard) NFTs, all of the metadata is stored on-chain in accounts. These are attributes like the owner of an asset, creator, URI (link to the asset metadata like a jpeg), royalties, etc. Storing all of this data on-chain requires opening accounts and paying rent in SOL. This is cheap for one NFT but can get costly at scale. With compression, each individual NFT’s metadata is hashed into a leaf. The only thing kept in account state is a single tree account holding the Merkle root (plus its change log buffer); the leaf data itself is recorded in the ledger. Not only is storing data in the ledger cheaper than in accounts, but we don’t need to create accounts for each NFT like we do with standard NFTs. The root is what allows us to verify all of the leaves in the tree. Another benefit is that end-users can decompress their NFTs if they ever want to. This process removes a leaf (their NFT) from the Merkle tree and transfers it to a token account under the uncompressed Metaplex NFT standard.

So if the data is off-chain, then how do we use it? This comes down to the indexers (i.e., RPC providers). The dApp will pay the RPC provider to store and provide the data upon request, and RPC providers will compete for the business. Some of the RPC providers that support this today are Helius, Triton, and SimpleHash. While this is cheaper for creators, it puts more demand on the RPCs and further contributes to the archival/state growth challenge for these providers. They need to store all of this data off-chain and serve it to dApps upon request. And since cNFTs are so cheap, we can expect at least an order of magnitude more data needing to be stored. Essentially, instead of dApps paying rent in SOL to store on-chain, they will pay less on-chain but a bit more to RPCs to store, index, and fetch the metadata off-chain. Overall, the costs are substantially reduced, with the tradeoff being off-chain data storage.

The on-chain cost reduction is dramatic and grows with scale. Most of the cost for compressed NFTs is in the change logs, whereas for standard NFTs it is in the metadata. These change logs are what make state compression on Solana work: they power concurrent Merkle trees, which allow multiple leaves to be updated by referencing the change log buffer. In a regular Merkle tree, every leaf update changes the root (and every node on the path from that leaf to the root), which invalidates any proof generated against the old root. Validators that receive multiple updates in one slot would only be able to process one before the rest fail, because the proofs they reference are now stale. Concurrent Merkle trees, on the other hand, can apply multiple leaf updates by keeping a record of the most recent changes (the buffer) and using it to fast-forward stale proofs. This is the TL;DR, but if you want a more detailed explanation, you can read the whitepaper.
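Here is a simplified sketch of the change log idea. Two clients fetch proofs against the same root; after the first update lands, the second proof is stale, but the tree fast-forwards it by patching the one sibling hash that lies on the other leaf’s updated path. This is our own illustrative reconstruction under stated assumptions, not the SPL account-compression code: it uses SHA-256, builds proofs from a full in-memory leaf list (standing in for the RPC indexer), and simply rejects a second update to the same leaf. The real program differs in hashing, account layout, and edge-case handling.

```python
import hashlib
from collections import deque

EMPTY = bytes(32)


def h(left, right):
    return hashlib.sha256(left + right).digest()


def build_levels(leaves):
    """All tree levels, leaves first; levels[-1][0] is the root."""
    levels = [list(leaves)]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([h(prev[i], prev[i + 1]) for i in range(0, len(prev), 2)])
    return levels


def proof_for(leaves, index):
    levels = build_levels(leaves)
    return [levels[lvl][(index >> lvl) ^ 1] for lvl in range(len(levels) - 1)]


def walk_up(leaf, index, proof):
    """Return (nodes on the leaf's path below the root, resulting root)."""
    nodes, node = [], leaf
    for lvl, sibling in enumerate(proof):
        nodes.append(node)
        node = h(sibling, node) if (index >> lvl) & 1 else h(node, sibling)
    return nodes, node


class ConcurrentMerkleTree:
    def __init__(self, depth, buffer_size=8):
        self.root = build_levels([EMPTY] * (1 << depth))[-1][0]
        # Change log entries: (root after the change, new path nodes, leaf index).
        self.changelog = deque([(self.root, None, None)], maxlen=buffer_size)

    def replace_leaf(self, proof_root, index, old_leaf, new_leaf, proof):
        roots = [entry[0] for entry in self.changelog]
        if proof_root not in roots:
            return False  # proof is older than the buffer; client must refetch
        proof = list(proof)
        for _, path, changed in list(self.changelog)[roots.index(proof_root) + 1:]:
            if changed == index:
                return False  # this same leaf changed since the proof was made
            # The two leaf paths meet at the highest differing bit; exactly one
            # sibling in our proof lies on the other leaf's path, so patch it.
            level = (index ^ changed).bit_length() - 1
            proof[level] = path[level]
        if walk_up(old_leaf, index, proof)[1] != self.root:
            return False
        path, self.root = walk_up(new_leaf, index, proof)
        self.changelog.append((self.root, path, index))
        return True


leaves = [EMPTY] * 8
snapshot_root = build_levels(leaves)[-1][0]
# Two clients fetch proofs against the same root, then both submit in one slot.
proof_1, proof_6 = proof_for(leaves, 1), proof_for(leaves, 6)
nft_1, nft_6 = h(b"cNFT", b"#1"), h(b"cNFT", b"#6")

tree = ConcurrentMerkleTree(depth=3)
print(tree.replace_leaf(snapshot_root, 1, EMPTY, nft_1, proof_1))  # True
# A plain Merkle tree would now reject proof_6: it no longer hashes to the root.
print(walk_up(EMPTY, 6, proof_6)[1] == tree.root)                  # False
print(tree.replace_leaf(snapshot_root, 6, EMPTY, nft_6, proof_6))  # True
```

The buffer size bounds how stale a proof can be: once the root a client used falls out of the deque, the update fails and the client must fetch a fresh proof, which is why change log size shows up in the cost of a compressed tree.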

Compression scales much more efficiently than uncompressed storage. With the latter, the on-chain footprint of the state/metadata grows linearly. For example, if the metadata for an NFT were 400 bytes, every new NFT you put on-chain would require paying for those 400 bytes in a new account. With compression, you create the tree once, and the majority of the cost is fixed by the size of your change log buffer and your tree’s depth. We can put some actual numbers behind this: minting 1k NFTs is 78% cheaper compressed, 10k is 97% cheaper, 100k is 99.6% cheaper, and so on. Compared to minting on Ethereum or Polygon, the savings are even more extreme.
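A rough model shows why the savings grow with collection size: uncompressed storage pays rent for one account per NFT, while compression pays roughly once for the tree account. The rent parameters below (3,480 lamports per byte-year, a 2-year exemption threshold, 128 bytes of per-account overhead) are our understanding of Solana’s defaults and should be treated as assumptions. The 100 KB tree size is purely hypothetical, the model ignores transaction fees and the several accounts a standard NFT actually needs, and its percentages will not match the figures quoted above.

```python
# Rent-exemption parameters as we understand Solana's defaults (assumption).
LAMPORTS_PER_BYTE_YEAR = 3_480
EXEMPTION_YEARS = 2
ACCOUNT_OVERHEAD_BYTES = 128
LAMPORTS_PER_SOL = 1_000_000_000


def rent_exempt_lamports(data_len):
    return (ACCOUNT_OVERHEAD_BYTES + data_len) * LAMPORTS_PER_BYTE_YEAR * EXEMPTION_YEARS


def uncompressed_rent(n, metadata_bytes=400):
    """Linear: one new account per NFT (the text's 400-byte example)."""
    return n * rent_exempt_lamports(metadata_bytes)


def compressed_rent(tree_account_bytes):
    """Roughly fixed: one tree account whose size depends on depth and buffer."""
    return rent_exempt_lamports(tree_account_bytes)


TREE_BYTES = 100_000  # hypothetical tree account size
for n in (1_000, 10_000, 100_000):
    savings = 1 - compressed_rent(TREE_BYTES) / uncompressed_rent(n)
    print(f"{n:>7} NFTs: {uncompressed_rent(n) / LAMPORTS_PER_SOL:8.2f} SOL vs "
          f"{compressed_rent(TREE_BYTES) / LAMPORTS_PER_SOL:.2f} SOL ({savings:.1%} less)")
```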

Compression went live in April and we’ve seen a few usual suspects emerge. The first is DRiP, a platform for artists to send free art to collectors weekly. This allows artists to distribute art to a wider audience and have it seen by more people. The second is Helium, a DePIN protocol on Solana which recently migrated over. Helium creates decentralized wireless infrastructure and uses cNFTs to identify hotspots and physical ownership of their devices. And lastly Dialect, a Solana-native messaging platform that has sticker packs for various projects to send in-app.

While these are the main projects that have adopted cNFTs so far, the use cases are quite vast. Any use case that benefits from issuing NFTs at scale would benefit here: ticketing services, video game skins or other cosmetic accessories, decentralized social media, POAPs… basically anything where NFTs are useful but having all of the data on-chain is not necessary. For a recent example, Coinbase launched its Stand With Crypto NFTs. These are commemorative NFTs with no real value: over 100k of them have been minted, they’re all the same, and they’re from a centralized company. Does the metadata here really need to be stored on-chain, costing users $20-$40 to mint, or are these the type of NFTs that would be better off compressed? Are any users saying, “I sure am glad I paid $30 for my commemorative centralized exchange NFT to be stored on-chain”? This is mostly rhetorical. For your Punks, gen art, and other high-value NFTs, put it all on-chain. For a lot of these other use cases, consider compressing, especially since most of them point to off-chain images anyway.

One of the users of cNFTs we highlighted here was Helium, the wireless infrastructure protocol that uses cNFTs to track and identify their physical devices. These decentralized physical infrastructure (DePIN) projects like Helium are quickly finding homes on Solana.

DePIN (Decentralized Physical Infrastructure)

DePIN is the “new Solana narrative.” It’s about decentralizing the build-out of physical (i.e., real world) infrastructure by incentivizing individuals around the world. Instead of having large centralized companies like AT&T, Apple, Google, etc. build out infra, you incentivize individuals to do so with tokens. It’s not just decentralization for the sake of decentralization; decentralizing the build can be more competitive. Some examples:

- 5G: While 5G is faster than 4G, the higher frequencies it uses don’t travel as far. This means rolling out 5G requires placing cell towers more densely. Incentivizing individuals around the world to put up the infra can be faster than relying on a centralized company, especially in rural areas.

- Mapping: Mapping companies like Google hire their own teams to map locations around the world. A decentralized network allows anyone to map the world by just buying a dashcam and hitting the road.

- Rendering: People around the world have idle GPUs, and people need rendering work done. If you paid attention to Nvidia’s earnings call, GPUs are in high demand due to AI (sorry gamers, the crypto crash didn’t solve your woes). What if you could utilize a global network of idle GPUs?

DePIN has no trouble with the supply side (give them tokens and they will come), but is there actually demand? While crypto has enabled the bootstrapping of these networks, it’s rather useless if there is no actual demand from end-users. One thesis is that once you have this infrastructure built out, it’ll enable more use cases not intended originally, which seems plausible. But why should I pay to use a decentralized Google Maps instead of just… Google Maps? I’m just looking for the nearest Chipotle man…

Helium

Helium migrated to Solana from its own standalone appchain. The main reason was that maintaining their own L1 took up an inordinate amount of the core devs’ time, leaving them with fewer resources to work on their actual product. While appchains can be useful for full-stack control, full-stack development can also be a burdensome endeavor. Helium also has numerous tokens, and integrating them into DEXs on Solana lets them tap into DeFi markets more seamlessly than dealing with bridging, which the team also cited as distracting mental overhead. Which bridge to choose and why? What if the bridge we choose gets hacked? We wrote a piece on Helium and Pollen last July, so I’m not going to go in-depth on Helium here, just highlight some Solana-specific metrics since the migration.

Since the migration in April, Helium device owners have minted ~1M compressed NFTs on Solana. These are used for identification/tracking purposes and make it easier for Helium to fetch the addresses of rewardable entities to issue payments. When people pay to use the network, hotspot owners are rewarded, and compressed NFTs make this task much easier operationally at a fraction of the cost.

As for demand, it’s still immaterial, but some people are paying to use the network: ~$700 of Data Credits (DC) are burned per day to use Helium’s IOT network. This amounts to ~$0.61/yr for the average hotspot, which costs ~$120, so demand needs to pick up by a few orders of magnitude before the network offers more than token inflation. Helium’s newest focus is its mobile 5G network, after IOT saw little traction. There’s potential for a synergistic relationship with Solana’s Saga mobile device, and the 5G infra build-out thesis is compelling, so we’ll give it time to play out.
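A quick back-of-the-envelope calculation makes the “few orders of magnitude” point concrete. It uses only the two figures above and makes one loud simplification: it treats every dollar of Data Credits burned as revenue to the hotspot owner. The 2-year payback target is a hypothetical benchmark, not something Helium has stated.

```python
HOTSPOT_COST_USD = 120
DEMAND_USD_PER_HOTSPOT_YEAR = 0.61

# Simplification: treat every dollar of Data Credits burned as owner revenue.
years_to_recoup = HOTSPOT_COST_USD / DEMAND_USD_PER_HOTSPOT_YEAR

# How much demand would need to grow for a hypothetical 2-year payback.
multiple_for_two_year_payback = (HOTSPOT_COST_USD / 2) / DEMAND_USD_PER_HOTSPOT_YEAR

print(round(years_to_recoup))                # 197
print(round(multiple_for_two_year_payback))  # 98
```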

Helium’s move to Solana will allow them to focus on building out the network and no longer worry about the infra. They still have a ways to go until the networks become self-sustaining.

Hivemapper

Hivemapper is building a decentralized map. Contributors buy dashcams for ~$300, hit the road, and earn some of that sweet HONEY. To date ~14.5k contributors have mapped 2.6M kilometers, predominantly in the US and Western Europe. HONEY is minted to contributors who map with various weightings:

- Coverage: areas with low coverage earn more
- Freshness: how recently the region has been mapped
- Quality: whether the mapping/image quality is good

There are rewards for quality assurance and operations (validating quality of images, storing images, adding annotations, etc.), but ~90% goes to the mappers. To date, contributors have earned ~$700k in HONEY using current prices.

As noted, the supply side for this stuff is usually easy to bootstrap with tokens. Map man gets paid. So who will actually consume these maps and pay HONEY? Mostly organizations who have to pay companies like Google to integrate their maps into their products. Since there are few mapping companies (mostly Google and Apple), it’s somewhat of an oligopoly. There are other issues with centralizing mapping as well, like outdated maps, data mining and privacy invasion of everyday users, and government censorship. Consumption-wise, there’s been ~$4.4k paid so far to access the maps. It’s low, but kind of expected at this stage given it’s still early in the mapping phase. As the network continues to get mapped out, we’ll look to see if demand catches up.

What does this have to do with Solana? Mostly the frequency of mints and burns. Hivemapper has put ~10M transactions on-chain over the past 3 months, which is not really practical with most blockchains. With $0.0001 tx fees on Solana, it’s more feasible, as these 10M transactions have paid ~$1k. They could use an appchain for this as well of course, but the tradeoff would be maintaining the infrastructure, dealing with bridging/CEX integrations, and the economic security of their chain. Ethereum L2s don’t really work either, as tx fees are still >$0.10 and can get up to a few dollars when the L1 is congested (during PEPE mania it was $1-$2 to swap on an L2). Solana is a simple solution that’s ready today.
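The fee comparison is straightforward multiplication, sketched below with the figures cited in the paragraph above. The congested-L2 fee of $1.50 is an assumption (the midpoint of the $1-$2 range), and the typical L2 fee uses the $0.10 lower bound.

```python
TX_COUNT = 10_000_000  # Hivemapper transactions over ~3 months, per the text

# Per-transaction fees in USD, from the figures cited above.
FEE_PER_TX_USD = {
    "Solana": 0.0001,
    "Ethereum L2, typical (>$0.10)": 0.10,
    "Ethereum L2, congested ($1-$2)": 1.50,  # assumption: midpoint of the range
}


def total_cost_usd(chain):
    return TX_COUNT * FEE_PER_TX_USD[chain]


for chain in FEE_PER_TX_USD:
    print(f"{chain:<32} ${total_cost_usd(chain):>12,.0f}")
```

At these rates the same workload costs roughly $1k on Solana versus at least $1M on an L2, which is the gap that makes high-frequency mint/burn designs practical.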

Render

Render is one of the hottest projects/tokens right now on the back of the AI hype. In a nutshell, Render lets owners of idle GPUs rent out and monetize their compute power to creators around the world: think artists, game developers, mechanical engineers, etc. While Render did not start on Solana, the community recently voted for and approved a migration to the network for numerous reasons:

- Their burn/mint module (similar to Helium and Hivemapper) is impractical on low-throughput chains
- Access to compressed NFTs for renderings
- Preference for Rust over Solidity, both for security and for having a codebase similar to their GPU rendering code
- The success of Helium’s migration to Solana
- Solana’s overall security and decentralization

Render is currently on Polygon and considered L2s as well, but concluded that Solana was the best fit for its needs. You can read their entire reasoning here.

Are these all going to work out? Honestly, I don’t know. But crypto needs a way to provide useful products that expand into the meatspace beyond our circular bubble of trading internet tokens. And speaking of tokens… that’s another angle to the DePIN movement. Yes, the tokens. If you look at the state of Solana DeFi today, it’s pretty barren. One of the reasons, among many, is there’s just a fundamental lack of tokens on the network. For the most part, Solana DeFi is facilitating trades of SOL and USDC. What good is DeFi without assets to… DeFi?

State of DeFi

It’s no secret that DeFi on Solana has been struggling. TVL hit nearly $10B at the peak and sits at ~$265M today, a 97% drawdown. You may say TVL is a poor metric and rewards inefficient vs. efficient capital, and that Solana protocols generally have higher volume/TVL ratios than other chains, to which I would agree. But it doesn’t really matter which metric you use, the reality is that DeFi right now is… kind of dead. On the plus side, there have been some active pockets recently, and there are some novel new apps coming to market.

High-Level Stats

The best way to visualize Solana DeFi activity is probably looking at Jupiter volumes. Jupiter is the top aggregator on Solana (and best in all of crypto imo) with the majority of trades going through them. Volume has (expectedly) been down since November, but has found a bear market floor of ~$700M or so per month.

Digging in further, there are a few reasons for the low volumes:

- Mango, the #1 DeFi app, getting exploited in October for ~$100M
- The FTX fiasco in November and the removal of Alameda as an on-chain market maker
- IDOs launching at the peak of the bull market and performing poorly since
- Liveness issues (chain halts)
- Overall crypto volumes being down
- Not a lot to trade besides SOL and USDC

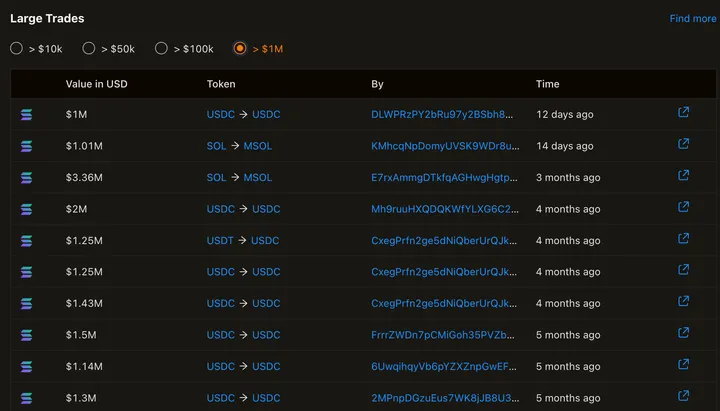

Maybe a picture explains it better. Solana DeFi went over 2 months without a million-dollar on-chain swap. Because so much of Solana’s DeFi infrastructure is built on CLOBs, its liquidity is more concentrated and less sticky than the passive retail liquidity in AMMs. When Alameda left, ~50% of maker volume went away.

Overall, activity is low, but there are reasons to expect this to eventually turn.

Newer Projects to Watch

We want to be forward-looking, so while we could lament Solana’s DeFi past, we’d rather look at what’s coming. Some notable projects are as follows:

- MarginFi: A composable lending protocol that will enable not just typical borrow/lend but also composability with third-party DeFi protocols, allowing users to manage their MarginFi collateral across a suite of DeFi apps. For example, a user could deposit USDC into MarginFi and then use that collateral to take out two separate positions on different protocols. So far, they’ve launched only mrgnlnd, the borrow/lend portion of the app. They’re up to >$3M TVL and are the fastest-growing Solana protocol MoM (in part due to a low-base effect). The team has publicly pushed other Solana dApps to launch tokens and has hinted at its own numerous times. MarginFi needs liquidity in mrgnlnd to grow before enabling the cross-protocol composability it intends to launch.

- OpenBook: A community fork of the Serum CLOB spearheaded by the Mango team (Mango also recently released its v4). It has been doing a few million dollars of volume per day on Solana since launch and recently passed $500M in all-time volume (since November). In April, the team announced v2, a rewrite of the outdated Serum codebase that v1 was forked from. v2 is a complete rebuild and will be fully open-source, intended as a public good.

- Phoenix: A new CLOB developed by the Ellipsis team, Phoenix brings a fully on-chain, non-custodial, and crankless (i.e., instant settlement) DEX to Solana. The Ellipsis team is fully aligned with the Solana architecture/philosophy and has frequently expressed its disdain for AMMs. Phoenix has launched a public dashboard with some metrics on its recent growth. Another new project, rebirth, is building on Phoenix with the goal of decentralizing order book liquidity provisioning. Phoenix and OpenBook are competitors, but volume on Solana is so low that both can succeed by growing Solana’s overall pie over the coming year. The Ellipsis founders have also started Umbra Research with Jito and will be releasing more Solana-specific pieces. One of the co-founders, Jarry Xiao, was a co-author of the state compression whitepaper.

- Cypher: One of the newer spot and perp DEXs with cross-margin and cross-collateral. They’ve been the most aggressive with listing collateral and have also hinted at a token drop on numerous occasions. Their spot market uses OpenBook, and they intend to launch dated futures as well.

These are just some of the newer ones, and many of the well-known DeFi projects from the first wave continue to develop new products and versions, having learned from their experiences. More recently, projects like Trails have emerged to incentivize people to try out Solana protocols, and I wouldn’t be surprised to see more things like this, plus token incentives, in the future. It’s not like Solana lacks capital: there are $1.5B of stablecoins on-chain, and while that’s quite low compared to Ethereum, it still ranks #5 among all chains.

In a nutshell, Solana DeFi is just under-utilized.

- Solana LSTs make up only 2.2% of SOL’s circulating supply vs. 7.7% on Ethereum
- Total assets in DeFi protocols are 3.3% of SOL’s market cap vs. 12.4% on Ethereum (ex L2s)
- Total assets in DeFi protocols are 17% of $ value of on-chain stablecoins vs. 38.9% on Ethereum

For the LSTs, part of the reason is people do not want to take on LST contract risk for a few extra basis points of yield (they can just delegate stake natively), and the same can be said for DeFi, along with the liveness issues we’ve seen. But this mindset needs to change, and it comes from useful applications that are worth the smart contract risk. You need DeFi to enable unique “only possible on Solana” applications along with other narratives like NFTs, payments, and DePIN to get these numbers up again.

For Solana DeFi to really take off though, I think you need at least the hybrid Firedancer networking component (expected EOY) and a long period of network stability. DeFi needs liveness to really thrive, and it will be hard to win back traders without it.

On this topic, one of the reasons developers may have been hesitant to build on Solana after all of the chain halts was platform risk. If you don’t believe in the single shard L1 thesis, you could be wasting a lot of time learning to develop for the SVM. But what if the SVM becomes a developer standard like the EVM? After all, the SVM is arguably Solana’s greatest innovation. What if you don’t have to take on the L1’s risk by learning the SVM? Enter SVM rollups.

Making SVM a Standard

Wait, rollups? Aren’t rollups the antithesis of Solana? Sure, but if we think the SVM is good, why not create rollups with it? Maybe a developer wants the benefit of the SVM’s technical innovations but wants to use another DA layer like Celestia instead, either for the (expected) gold standard light client support or just to not have to share blockspace with other apps on Solana?

- Thesis: Monolithic Solana
- Antithesis: Modular Celestia
- Synthesis: Solana rollups on Celestia

The first two teams working in this direction are:

- Eclipse: Eclipse is a rollup builder for many VMs, including both the EVM and SVM. Their first SVM rollup, Cascade, is launching on Injective, an order book-based Cosmos L1. Eclipse intends to launch SVM rollups on Celestia once it’s live.

- Nitro: Being developed by the Sei team. Sei is an order book-based Cosmos L1 (is it a coincidence all these SVM rollups are on order book chains?) and intends to host SVM rollups on top. This allows Solana developers to deploy on a chain in Cosmos with access to IBC assets’ liquidity.

There are more teams that are building sovereign rollups (like Sovereign Labs’ zk rollups) which can adopt SVM in the future as well. The biggest challenge for SVM rollups will be making them fraud or validity provable — so far we have not seen this in production and it will be a challenge since Solana doesn’t commit to a global state root. But there’s real momentum here, and they will allow developers another option if they want to build SVM applications. Even if Solana hits it out of the park over the next few years and handles 50-100k TPS, has near-perfect fee market isolation and no liveness issues, there will still be a world for SVM rollups. Developers who don’t want to share state with all the applications on Solana and want to have more sovereignty over their blockspace will still prefer the rollup approach. There will always be tradeoffs to deploying on a shared L1 vs. deploying on your own environment. Of course, deploying on a shared layer has benefits too (did I mention there are tradeoffs?).

Now, if you’re asking “Should Solana just become a rollup on Ethereum?” the answer is a resounding no. People need to stop saying that: it fundamentally misunderstands the point of Solana, and Solana’s ambitions aren’t even possible with Ethereum’s DA throughput (now or in the future). Solana’s philosophical underpinnings are not compatible with being an Ethereum rollup. Now, if someone wants to launch their own SVM rollup on Ethereum, sure. Knock yourself out.

Where Does Solana Go From Here?

This report was mainly focused on the infra layer, but there’s a lot going on with Solana besides just improving the infrastructure.

- Saga and the Solana Mobile Stack (SMS) for mobile apps
- Helius and their work on RPCs, APIs, and webhooks
- Light Protocol bringing zkSNARKs to Solana for private programs
- Dialect for Web3 messaging
- Solana Pay for payments, which, combined with the mobile push, could make Solana the payments platform
- The entire NFT ecosystem and gaming sector
- The wallet infra covered in the Wallet Wars

While Solana has had some struggles, we should remember that it’s only 3 years old. It went live right around Covid and DeFi summer, and a year later was accumulating billions in DeFi. This was a blessing and a curse, as it got way too many assets too quickly before it had time to mature as a protocol.

Over the last year, anyone who has used the chain will tell you it’s gotten significantly better. The NFT mints taking down the chain are gone, transactions don’t fail as frequently, and overall it just works a lot more smoothly than it did in the past. A year ago, the concept of a fee market on Solana barely existed, and now you have Jito with auctions, isolated fee markets, and all the other discussions around base fees and compute pricing taking hold. By this time next year, I’m sure it’ll look significantly different than it does now. A network of 2k+ globally distributed validators is truly impressive given the performance, and Firedancer and Tinydancer will hopefully improve this further; Firedancer for performance and Tinydancer for trust-minimization.

Solana moves fast, not just with respect to block times but overall development. It’s been said by myself and others many times, Solana is more of an engineering-focused chain vs. the academic focus of others. While we have seen this “move fast” mentality hurt them on a few occasions, it has allowed them to ship numerous upgrades over the years and you have to acknowledge the strides they continue to make. If they keep executing, there’s no reason why Solana can’t be a successful ecosystem. And if we base this on the short history of Solana, I have no reason to believe it won’t. Solana looks a lot different than it did a year ago, and I’m sure next year we’ll say the same thing.

A single, composable, globally distributed state machine.

Special thanks to Buffalu from Jito and 7Layer from Overclock for discussions on fee markets, Jose Villacrez for designing the cover image for this report, and to Can Gurel and Brian McRae for editing.
