Report Summary

Stablecoins are now core financial infrastructure – They’ve evolved from niche crypto assets to default settlement primitives bridging traditional finance and onchain systems.

Payment stack compression – Onchain settlement eliminates intermediaries (banks, card networks), reducing costs and enabling near-instant, programmable transactions.

Distribution beats issuance – As minting and custody commoditize, competitive advantage shifts to liquidity routing, compliance, and merchant integration.

Stablecoin chain consolidation ahead – Many specialized chains will launch, but only those with real distribution and clear differentiation will survive.

Onchain FX opportunity in long-tail corridors – The biggest efficiency gains lie in underserved currency pairs, not major forex markets.

Institutional adoption requires compliance infrastructure – Protocol-level privacy, identity, and permissioning are critical prerequisites for large-scale institutional entry.

RWAs and new credit primitives expanding scope – Tokenized assets, equity perps, prediction markets, and GPU-backed credit signal broader financial migration onchain.

2026 intensifies competition – Expect battles across issuers, payment chains, fintechs, and corporates as value accrues to those solving real-world integration challenges.

Stablecoins as Payment Infrastructure

2025 was a standout year for stablecoins, and we see this trend continuing into 2026. But the adoption we cover in this report extends beyond USDT/USDC growth or new stablecoin issuers popping up. Our thesis is that stablecoins are playing a fundamental, infrastructure-level role in bridging legacy finance onchain. In this section, we’ll primarily cover payments. However, it’s worth considering that stablecoins are unlocking an umbrella of unique products and use cases that span multiple categories. In fact, much of our report this year revolves around stablecoins to some degree.

Stablecoins were indeed the gateway for TradFi to figure out crypto.

It took the most basic use case, put a dollar on a blockchain, to show people used to the status quo how much better crypto tech is for finance.

Now, a many-year race to upgrade finance by bringing it onchain.

— Jake Chervinsky (@jchervinsky) November 27, 2025

Before we dive in, let’s recap some of the notable metrics we’ve seen this year.

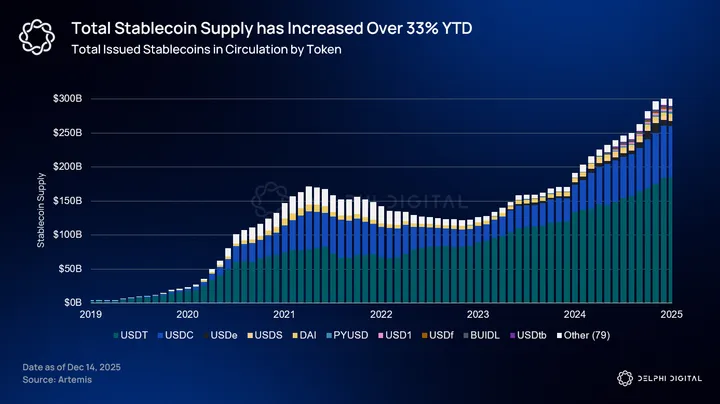

Total stablecoin supply has increased over 33% since last year (Dec ’24), now sitting at just over $304 billion. As of today, that’s just shy of 1.4% of M2 money supply. Of that $304B, Tether’s USDT and Circle’s USDC remain the two dominant players, representing ~60.8% and ~25.4% respectively.

Within just the last year, USDT has added over $30 billion to the total stablecoin supply, with USDC a close second at $20.8B. That means ~18% of USDT’s supply and ~33.5% of USDC’s supply was minted in the last year.

But the more interesting metric is how this rapid growth spills over into the legacy system and compares against traditional payment rails. Monthly adjusted stablecoin volume has eclipsed both PayPal and Visa within the last year.

Note: The chart below shows the rolling 30-day average of adjusted stablecoin volume, excluding MEV and intra-centralized-exchange transactions.

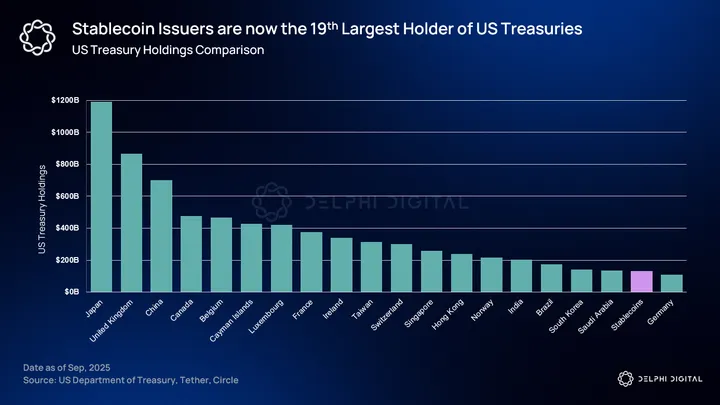

Stablecoin issuers are also now collectively the 19th-largest holder of U.S. Treasuries, holding ~$133 billion.

What began as a niche financial product has become a major conduit for dollar liquidity that extends into remittance rails and global commerce. Payments were always considered boring in crypto, but they have always been the largest use case, bar none. According to McKinsey’s 2025 Global Payments Report, the payments industry generates $2.5 trillion in revenue from $2 quadrillion in value flows. There is no larger TAM than global payments, and stablecoins currently represent only a fraction of it.
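To put the McKinsey figures in perspective, the implied blended take rate can be worked out directly. This is a minimal sketch that uses only the two numbers quoted above. The variable names are ours, and the result is an average across all payment types rather than the price of any single product.

```python
# Figures quoted above from McKinsey's 2025 Global Payments Report.
revenue_usd = 2.5e12  # $2.5 trillion in annual payments revenue
flows_usd = 2e15      # $2 quadrillion in annual value flows

# Share of every dollar moved that is captured as revenue.
take_rate = revenue_usd / flows_usd
take_rate_bps = take_rate * 10_000  # 1 basis point = 0.01%

print(f"Implied blended take rate: {take_rate:.4%} ({take_rate_bps:.1f} bps)")
```

On these numbers, roughly 12.5 basis points of every dollar moved ends up as industry revenue. Retail card payments sit well above that average and large wholesale flows well below it, which is why the compression argument that follows focuses on the card stack.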

The Evolution of Payment Rails

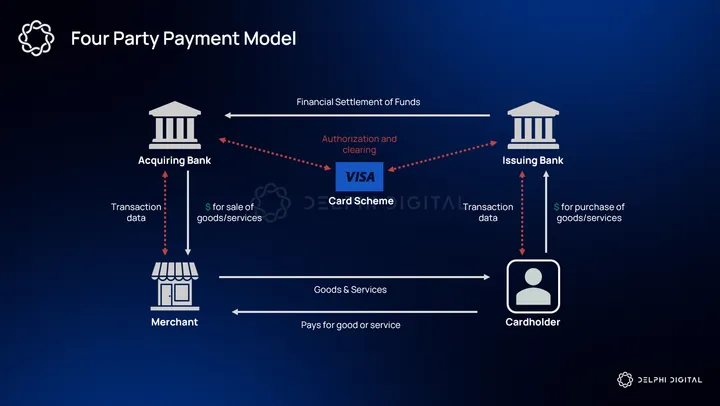

Payments should be understood first and foremost as infrastructure. Like rails, they exist to move value reliably, cheaply, and at scale from point A to B. For much of the modern era, the dominant design for those rails has been layered and riddled with intermediaries. Banks provided deposits and on-ramps, card networks provided global routing, and a large set of merchants, acquirers, and issuers mediated the final consumer experience. Each generation of innovation added a new abstraction layer meant to reduce friction for users, but also introduced additional rent-seeking middlemen in the process.

With the advent of stablecoins, the payment stack is now compressing. We’re moving from legacy roles that required multiple counterparty relationships and recurring fees to programmable, onchain settlement. We illustrate this transition in four stages:

Stage 1 – The Card Network Era (Visa & Mastercard)

Visa and Mastercard rose to prominence because they solved the global coordination problem of cross-border interoperability and trust between banks and merchants. They imposed a single set of operational and risk rules, provided dispute resolution and fraud mitigation mechanisms, and enabled instant authorization that merchants could rely on without establishing bilateral relationships with every potential issuer.

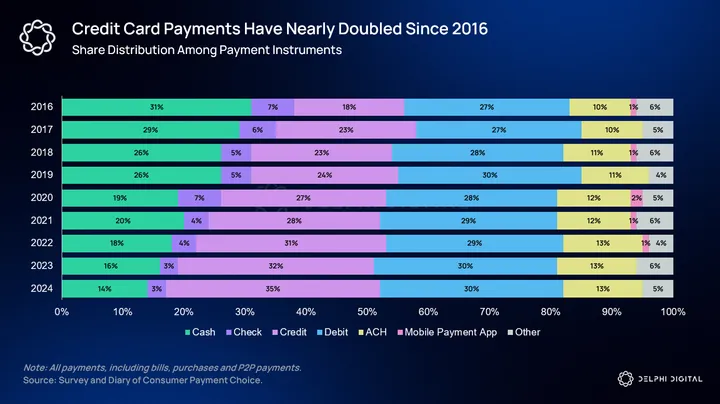

For decades those properties translated into ubiquitous convenience, and the networks became a form of default infrastructure for commerce, so much so that credit card networks have nearly doubled their market share among legacy payment instruments.

At the same time, this centralization of functions came with systemic costs:

- layered fees across issuing banks, acquiring banks, and the networks themselves

- settlement liquidity constraints and multiple settlement windows

- implicit dependency on the banking system for the underlying money

Stage 2 – The Fintech Layer (PayPal, Venmo, Klarna)

The first major consumer-level challenge to this model took the form of fintech platforms such as PayPal, Venmo, and later Buy Now Pay Later providers like Klarna.

These platforms effectively abstracted away the underlying rails. Instead of every merchant needing to negotiate with every bank and card issuer, consumers and merchants gained access to a UX-first layer that held internal balances and reconciled value off-network until settlement. These platforms created closed-loop ledgers at scale and, in doing so, showed that many retail flows could be routed without touching the full legacy clearing path for every micro-transaction.

That abstraction produced enormous UX benefits, including one-click checkout, peer-to-peer transfers, and deferred settlement mechanics that hid complexity from users.

However, incumbent banks and card networks were not passive. They recognized this as a threat and launched alternative payment methods (APMs) in response. Platforms such as Zelle and bank-owned real-time payment rails sought to bring settlement speed and direct bank-to-bank flows into the mainstream. Today, P2P transactions are just as seamless whether they go through Venmo or Zelle.

Stage 3 – Fintech Neobanks (Revolut & Nubank)

Neobanks such as Revolut, Nubank, Monzo, and N26 represent the next wave of attempts to modernize the legacy stack. They offer a dramatically improved user experience with fee-free international spending, instant card issuance, and mobile-first onboarding. To the average user, the experience may feel like an improvement, but in reality, neobanks are largely a repackaging of the same legacy model.

They still depend on traditional banks, payment processors, and card networks for core functions. Many rely on partner banks or licensed financial institutions to hold deposits, issue cards, and provide regulatory oversight. Even when neobanks acquire their own banking licenses, the underlying settlement flows remain tied to the same rails used by incumbents.

When a customer pays with a Revolut or Nubank card, the transaction still moves through the four-party model: the merchant routes the payment to an acquiring bank, the card network switches the authorization request, and the issuing bank settles later through standard clearing cycles. The experience feels instantaneous, but the mechanics mirror those of a traditional bank-issued card.
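The flow described above can be sketched as a simple fee stack. The hop names follow the paragraph, but the basis-point figures are purely hypothetical placeholders chosen for illustration (real interchange and scheme fees vary by region, card type, and merchant), and `Hop`, `CARD_PATH`, and `merchant_receives` are names we made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    party: str
    fee_bps: float  # hypothetical fee, in basis points of the purchase amount


# Hypothetical fee split along the card path; not real pricing.
CARD_PATH = [
    Hop("acquiring bank / processor", 30.0),
    Hop("card network", 15.0),
    Hop("issuing bank (interchange)", 150.0),
]


def merchant_receives(amount: float, path: list[Hop]) -> float:
    """Amount left for the merchant after every hop on the path takes its fee."""
    total_bps = sum(hop.fee_bps for hop in path)
    return amount * (1 - total_bps / 10_000)


net = merchant_receives(100.0, CARD_PATH)
print(f"Merchant receives ${net:.2f} on a $100.00 sale across {len(CARD_PATH)} fee-taking hops")
```

The point of the sketch is structural: whether the card is issued by an incumbent bank or a neobank, the same list of hops applies, so a better app on top does not change what the merchant nets.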

The economics of the model reinforce this. Neobanks derive significant revenue from the same fee structures that incumbents use, particularly interchange. Their business model assumes continued participation in the card network ecosystem. This dependence constrains how far they can deviate from the network’s rules or innovate on the settlement layer itself. When they introduce features like instant internal transfers or virtual cards, those features are built around their control of the user-facing ledger, not control of the external settlement environment.

Revolut and Nubank are perfect examples of this. Both have scaled to tens of millions of users and have demonstrated that consumers will migrate to better-designed financial apps. But their growth did not meaningfully alter the economics or structure of payments. The fundamental flows still traverse issuing banks, acquiring banks, and existing card networks. The infrastructure remains just as cost-laden with the same number of intermediaries as before.

Now, we can make the same case for crypto neobanks as well, at least in their current iterations. But the main differentiator here is that a crypto neobank sits much closer to a new settlement primitive than Revolut or Nubank ever could. They still rely heavily on the traditional payment stack when interacting with merchants or on/off-ramps, but the underlying asset that users hold inside these platforms is not confined to the rules of the banking system.

Instead of holding a synthetic balance whose only expression is through an issuing bank and a card network, users hold a digitally native, transferable settlement unit (stablecoin) that can move through multiple environments.

Nothing more ironic than crypto companies competing with each other on traditional payments rails pic.twitter.com/xz7v28v3MP

— Omar (@TheOneandOmsy) November 20, 2025

And I agree, there is nothing more ironic than crypto companies competing with each other on traditional payment rails. But it’s a meaningful first attempt at transitioning into the fourth stage, where stablecoins are the new settlement primitive. It just means we’ll see the long tail of competitors fall by the wayside if they can’t truly provide a self-sovereign way for users to hold and spend their crypto on real, everyday things.

Stage 4 – Stablecoins as the New Settlement Primitive

Even before the recent proliferation of these new crypto neobanks and card providers, stablecoins had already been well established as a form of P2P settlement, especially in emerging markets and countries with rampant inflation.

Compared to the legacy model, stablecoins are a step change in terms of cost and efficiency. A stablecoin is a bearer-like representation of value that exists on an open ledger and can be moved without necessarily invoking the traditional acquirer-network-issuer flow. That capability matters for a few reasons:

- Stablecoins can remove several intermediary hops from the critical path between payer and payee, thus reducing friction and the number of fee-takers.

- Because settlement and finality happen on a public ledger, reconciliation and settlement processes are simplified, lowering operational complexity for merchants and platforms.

- Programmability enables things like compliance, conditional transfers, and automated reconciliation to become native platform features.

Stablecoins effectively bypass parts or all of the legacy stack.
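To make the programmability point concrete, here is a toy in-memory ledger. It is a sketch under loud assumptions: `ToyStablecoinLedger` is not a real token standard or chain, the allowlist stands in for whatever compliance rule a platform might enforce, and the delivery flag stands in for any settlement condition.

```python
class ToyStablecoinLedger:
    """In-memory stand-in for a token ledger; not a real chain or token standard."""

    def __init__(self, allowlist: set[str]) -> None:
        self.balances: dict[str, float] = {}
        self.allowlist = allowlist  # hypothetical compliance rule
        # Shared record that payer and payee can both reconcile against.
        self.log: list[tuple[str, str, float]] = []

    def mint(self, to: str, amount: float) -> None:
        self.balances[to] = self.balances.get(to, 0.0) + amount

    def transfer(self, sender: str, recipient: str, amount: float,
                 condition=lambda: True) -> bool:
        # Compliance check runs inside the transfer itself.
        if sender not in self.allowlist or recipient not in self.allowlist:
            return False
        # Conditional transfer: settle only if the supplied condition holds.
        if not condition():
            return False
        if self.balances.get(sender, 0.0) < amount:
            return False
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0.0) + amount
        self.log.append((sender, recipient, amount))
        return True


ledger = ToyStablecoinLedger(allowlist={"payer", "merchant"})
ledger.mint("payer", 100.0)

state = {"delivered": False}
blocked = ledger.transfer("payer", "merchant", 40.0,
                          condition=lambda s=state: s["delivered"])
state["delivered"] = True
settled = ledger.transfer("payer", "merchant", 40.0,
                          condition=lambda s=state: s["delivered"])

print(blocked, settled, ledger.balances, ledger.log)
```

The first transfer is refused because the condition is not yet met, and the second settles in one step. The compliance check, the condition, and the reconciliation record all live in the same place as the balance update, which is the property the list above describes.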

But the existing fintechs are well aware of this. In fact, many have introduced stablecoin products, partnered with crypto-native protocols, and even launched their own payments-focused blockchains. Klarna’s introduction of KlarnaUSD is the latest example of a fintech launching its own native stablecoin. So far, the list includes Stripe’s USDB, PayPal’s PYUSD, and KlarnaUSD, and even Cloudflare has announced its own stablecoin, NET Dollar.

Introducing KlarnaUSD, our first @Stablecoin.

We’re the first bank to launch on @tempo, the payments blockchain by @stripe and @paradigm.

With stablecoin transactions already at $27T a year, we’re bringing faster, cheaper cross-border payments to our 114M customers.

Crypto is…

— Klarna (@Klarna) November 25, 2025

That leads us to the question: who wins this race?

From neobanks to stablecoin-chains to issuers, and now existing fintechs, there’s no shortage of participants in stablecoin payments. Everyone is vying for a piece of the pie, and it’s fair to assume that the competition will only increase going into 2026. In the immediate term I expect continued proliferation before any sort of consolidation back to a handful of obvious winners. However, Stripe’s ability to acquire and aggregate all parts of the payment stack suggests that this consolidation phase might not be too far off.

Is Stablecoin Issuance Commoditizing?

The stablecoin landscape is dominated by two players: Tether’s USDT and Circle’s USDC. But issuance is moving toward becoming a standardized, low-margin infrastructure service. At the simplest level, issuing a well-collateralized stablecoin involves a standard set of functions:

- Custody of reserves

- Transparent accounting

- Mint & burn functions

- Legal and compliance wrappers

- Merchant and banking integrations for on- and off-ramping

These are functions that can be, and have been, streamlined and automated to operate at scale. We’ve seen the same pattern all across crypto: rollups-as-a-service (RaaS), sequencing services, data availability (DA), RPC and node infrastructure. The list goes on. Stablecoin issuance is following the same path. Once reserve management, legal frameworks, and redemption guarantees are commoditized, the underlying token becomes a fungible unit of short-term dollar-equivalent liquidity.
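Part of why these functions commoditize so easily is that the core bookkeeping is small. The sketch below is a toy, fully reserved issuer. `ToyIssuer` and its methods are hypothetical names, reserves are tracked in whole dollars, and the legal, custody, and banking integrations from the list above are deliberately left out.

```python
class ToyIssuer:
    """Fully reserved issuer: every token minted is matched by one dollar of reserves."""

    def __init__(self) -> None:
        self.reserves_usd = 0  # custody of reserves (whole dollars for simplicity)
        self.supply = 0        # tokens outstanding

    def mint(self, deposit_usd: int) -> int:
        """A customer deposits dollars; the issuer mints the same number of tokens."""
        if deposit_usd <= 0:
            raise ValueError("deposit must be positive")
        self.reserves_usd += deposit_usd
        self.supply += deposit_usd
        return deposit_usd

    def burn(self, tokens: int) -> int:
        """A customer redeems tokens; the issuer burns them and releases dollars."""
        if tokens <= 0 or tokens > self.supply:
            raise ValueError("invalid redemption amount")
        self.supply -= tokens
        self.reserves_usd -= tokens
        return tokens

    def attest(self) -> bool:
        """Transparent accounting: reserves must always cover outstanding supply."""
        return self.reserves_usd >= self.supply


issuer = ToyIssuer()
issuer.mint(1_000)
issuer.burn(250)
print(issuer.supply, issuer.reserves_usd, issuer.attest())
```

Nothing in this logic differs between one issuer and the next, which is the commoditization argument in miniature: the bookkeeping is interchangeable, so the differentiation has to come from somewhere else.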

The issuer landscape already reflects a sort of bifurcation between a commodity-esque issuance model and distribution-led differentiation. On the one hand, there are incumbent issuers with large distribution networks and strong regulatory profiles. Circle is the clearest example of this. USDC is already well adopted, well trusted, and well positioned in a way that’s attractive for enterprises, exchanges, and financial institutions. We see just how valuable distribution is considering the stablecoin space has been dominated by two participants for the last five years.

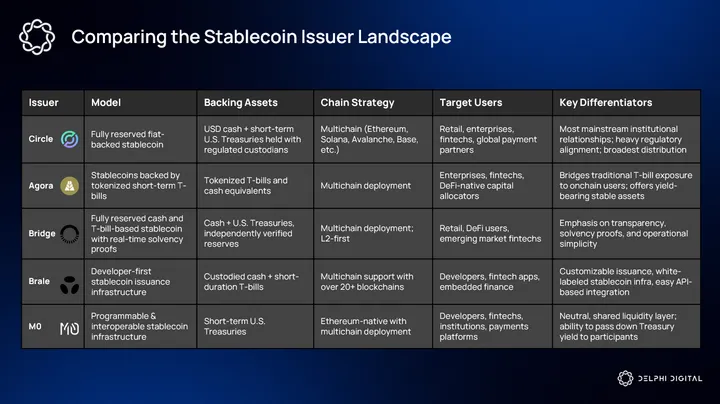

At the same time, a growing class of companies is productizing issuance itself and making white-label stablecoins trivial to spin up. They provide reserve management and custody integrations, mint and burn APIs, and plug-and-play connectivity to on- and off-ramps. Some notable names are Agora, Brale, M0, and of course Bridge.

But if issuance becomes commoditized, distribution likely wins out, as was the case with many prior technologies. For stablecoins, this means the issuer that is most embedded into payment rails, exchange liquidity, and merchant plugins will capture the bulk of settlement demand.

This matters for two reasons. First, it changes where the pricing power sits. Interchange-style rents that once accrued to card networks will be replaced by value captured by whoever routes final settlement and provides liquidity functions on demand. Second, it changes the unit economics of the entrants. A white-label issuer may offer cheap issuance, but it will struggle to accrue value if it fails to integrate with the channels that move that value at scale.

As payment rails standardize, differentiation shifts up the stack into services and products that sit above the primitive.

For everyone playing the “neobank meta” right now, I’d start thinking hard about your differentiators. Phantom, Revolut and Base app can and will add cards and virtual accounts and their distribution isn’t a joke. Either focus on a very specific customer segment, build unique…

— Stepan | squads.xyz (@SimkinStepan) November 17, 2025

The functions will likely converge around standardized mint, burn, and custody semantics. But once those components are reliable and interchangeable, we could see competition concentrate around things like identity and compliance, liquidity routing and pro-rated access to pools, settlement assurance and insured custody, or even credit overlays and verticalized merchant integrations.

Ultimately, the commercial value migrates toward whoever can make the stablecoin the path of least resistance for real world flows, whether that is payroll, cross-border B2B settlement, merchant acceptance, or in-app economies.

The Stablecoin-Chain Wars

In our last two infra year-ahead reports, we covered the L2 wars, an infra landscape dominated at the time by what seemed like a new L2 launch every week. Since the launch of Plasma earlier this year and the proliferation of what have now been dubbed “stablecoin-chains”, that narrative has shifted from L2s to stablecoin-specific chains. These are essentially L1s or L2s that focus strictly on stablecoin settlement and stablecoin-first design.

The design philosophy behind a “stablecoin-native” chain is that current general-purpose L1s like Ethereum, Tron, or Solana weren’t designed with stablecoins as the main use case in mind. A stablecoin-native chain, by contrast, is built to handle millions of daily transactions, low-cost stablecoin transfers, and fiat on- and off-ramps, and may even offer neobank-like products for its users. The goal is to optimize for the single use case of moving and settling value via stablecoins.

But it seems that with every new primitive, the space fills up with countless copycats and competitors offering marginal improvements, all looking to capitalize on a trending new narrative. That’s why I compare this trend to the L2 wars of the last couple of years.

We’re gonna launch so many stablecoin chains, you may even get tired of stablecoin chains. And you’ll say, ‘Please, please. It’s too many stablecoin chains. We can’t take it anymore. It’s too much. pic.twitter.com/7efOKt4M6u

— Jon Charbonneau 🇺🇸 (@jon_charb) August 12, 2025

There’s no doubt that we’ll see more stablecoin-focused infrastructure launches in the coming year, but for now let’s break down the existing players in this category. It’s worth noting that this is not a comprehensive review of every single chain. We list a handful of the larger names here.

Plasma

Plasma is a high-throughput, stablecoin-first L1 built to support the kinds of recurring, low-value transfers that dominate remittances and merchant payouts, particularly in emerging markets. We covered Plasma at length earlier this year, but a quick technical overview highlights EVM compatibility, sub-12 second block times under a consensus variant called PlasmaBFT, and operational defaults optimized for USDT flows, such as custom gas tokens and zero-fee USDT transfers.

Technically, Plasma prioritizes deterministic finality and throughput to reduce friction for onchain settlement in corridors where on and off ramp liquidity is anchored to Tether. The combination of low-cost routing and explicit product alignment with USDT distribution creates a network that is intended to lean into an existing stablecoin liquidity environment rather than attempt to replace it.

Plasma went live earlier this year as the first stablecoin-chain. And while there’s much to be said about its XPL token price performance, it arguably created a category of infrastructure that later entrants consider worth building toward. That’s to say, if the category were totally irrelevant, we wouldn’t see the corporates jump in and launch their own stablecoin chains. But we’ll get to that in a second.

Codex

Codex is a stablecoin-native L2 payments and settlement chain built on the OP Stack with an explicit focus on cross-currency settlement and wholesale FX flows.

Many stablecoin-chains are primarily oriented toward transfers, remittances, or simple stablecoin payments. Their value proposition is often high throughput, low fees, and maybe wide stablecoin support. However, Codex recognizes that there is more friction on the cross-stablecoin or stablecoin-to-fiat exchange path. The goal for Codex is to bring this stablecoin-to-fiat conversion friction down to zero. For the average user in some of these emerging markets, the conversion is just too costly.

Source: Codex

Global payments and cross-border trade almost always involve currency conversion, local currency on/off-ramps, compliance, and liquidity in multiple jurisdictions. For stablecoins to meaningfully substitute for legacy rails, a chain must support more than USD stablecoin transfers.

It’s worth noting that, despite the ambitious approach, there remain nontrivial challenges with regard to onchain FX:

- Liquidity depth across multiple stablecoin and fiat corridors

- Reliance on off-chain custodians, ramps, and real-world counterparties

- Regulatory and compliance complexity across jurisdictions

Payy

Payy targets a different category of specialization: private payments. While blockchains have historically emphasized transparency as a feature, payments often require confidentiality for both consumer and corporate use cases. Payy approaches the stablecoin-chain concept through this lens. Its design centers on allowing users to transact stablecoins while preserving confidentiality for transaction amounts, parties, and flows.

Privacy is especially relevant where competitive data, payrolls, merchant revenue, and remittance flows need to be shielded from public visibility. Imagine a company sending payroll through a public ledger and everyone’s salaries are fully transparent. Or paying a friend for dinner and suddenly doxxing your entire account balance. In fact, this is one of the main reasons why most people, even crypto natives, don’t actively use crypto rails for payments. Why dox your wallets when you can just use the legacy rails which keep your transactions private?

Payy has recently launched the Payy Card, a private, undoxxable, non-custodial crypto card. Undoxxable in this context means that the card can’t link your KYC information to your Payy network balance and transactions. Today, nearly every custodial card has at least one entity that holds both of those pieces of information.

The Corporates Are Entering the Space

Alongside these public chains, large corporates and established stablecoin issuers are launching their own specialized chains. Circle’s Arc and Stripe’s Tempo are the latest additions to this trend. But there’s some natural contention with the idea of a corporate chain. The main goal of crypto has always been to enable self-sovereign digital money. It’s about taking power away from the institutions and giving it back to the people. A corporate chain is antithetical to that entire ethos.

Arc

Arc is Circle’s dedicated L1 for USDC settlement. It represents the first major attempt by a stablecoin issuer to bring its own chain into production. Circle already distributes USDC across general-purpose networks, but these environments introduce variability in fees, confirmation times, and congestion. Arc’s answer is a controlled settlement layer optimized specifically for stablecoin movement rather than general computation, which is very reminiscent of Plasma’s pitch.

A few features to note:

- Stable Fee & USDC as Native Gas

- Deterministic Settlement Finality

- Opt-In Privacy

Tempo

Tempo was incubated by Stripe and Paradigm and is marketed as a payments-first L1 that leverages Stripe’s merchant distribution and operations know-how. The chain focuses on instant merchant payouts and programmatic settlement.

Tempo is not designed as a public ecosystem. Its primary function is to serve Stripe’s existing product suite. The chain enables Stripe to settle onchain and to abstract that complexity behind the interfaces merchants already use. This creates a distribution advantage that is difficult for any external chain to match. Millions of businesses gain access to faster settlement without changing operational workflows or managing digital assets directly. It’s a classic example of “distribution over innovation”.

It’s really fascinating how many crypto people are absolutely certain that companies aren’t going to use Stripe’s chain despite the fact that all these companies are current customers and run 100% of their payments through Stripe right now

— Gwart (@GwartyGwart) November 26, 2025

Onchain FX: An Overlooked Market

Onchain FX has been gaining attention over the last month, mainly around the idea that the stablecoin market is dominated by the U.S. dollar and that non-USD stablecoins make up a negligible share of supply. At the time of writing, USD-denominated stablecoins represent ~99.7% of the entire stablecoin supply.

The debate surrounding non-USD stablecoins, or lack thereof, first started with the following tweet:

Followed by another controversial take:

What a ridiculous take

— jordan (@yeak__) November 27, 2025

Personally, I think onchain FX is a criminally overlooked market, as you could have guessed from the recent takes on CT. Foreign exchange is one of the largest and most liquid markets in the world, and it goes beyond whether people want non-USD stables or not. It’s true that today most onchain activity doesn’t mirror the traditional FX market, and most stablecoin use outside of crypto is for payments and hedging against local currency devaluation.

But this doesn’t necessarily invalidate the case for onchain FX, or non-USD stables. Yes, people will hedge and denominate against USD in jurisdictions with hyperinflation. But at the same time, people also incur FX fees when they swap out of a USD-denominated stablecoin and into their local currency. And in some markets, these FX fees are simply too expensive. Imagine receiving a $1,000 remittance payment in USD, only to pay a foreign exchange fee that averages 1-3% and reaches up to 6% in some cases.
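In dollar terms, the remittance example above works out as follows. This is a minimal sketch that uses only the amount and fee rates quoted in the paragraph, with labels that are ours.

```python
remittance_usd = 1_000

# Fee rates quoted above: 1-3% on average, up to 6% in some cases.
fee_rates = {
    "low end of average": 0.01,
    "high end of average": 0.03,
    "worst case": 0.06,
}

fees = {label: remittance_usd * rate for label, rate in fee_rates.items()}

for label, fee in fees.items():
    received = remittance_usd - fee
    print(f"{label}: ${fee:.2f} lost to FX, ${received:.2f} received")
```

At the upper end, the recipient gives up $60 of every $1,000 before the money is spendable in local currency. That cost applies on top of whatever was paid to move the stablecoin itself.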

how do people send money from UAE to Europe

my bank charges 4.6% in fx fees lol

— lito (@litocoen) November 29, 2025

Now, the example above is a bank-to-bank FX process; however, it still applies in the context of transferring stables if there is at least one touchpoint with a local fiat on/off-ramp. The defining characteristic of onchain FX is that it collapses the traditional stack. In the legacy model, an FX transfer touches at least four systems:

- The bank holding the originating currency

- The bank receiving the foreign currency

- The broker or liquidity provider facilitating the conversion

- The messaging system coordinating settlement

The cost structure is inherently vertical. Each layer charges a fee because each layer performs a distinct function. With stablecoins, all currencies could theoretically exist as tokenized assets on a shared execution layer, and conversion would become an in-protocol action, effectively cutting out the middlemen.

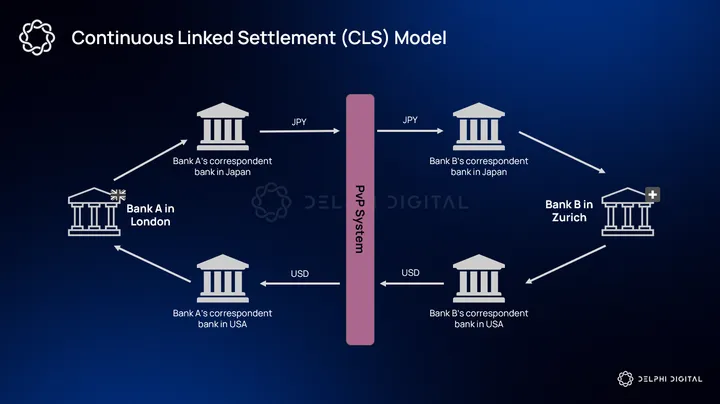

Like every other outdated legacy system, traditional FX is riddled with inefficiencies. The underlying infrastructure is highly fragmented, slow, and filled with intermediaries. Most currencies settle on local rails that have limited operating hours and inconsistent counterparty risk. Only a small set of major currencies settle through what’s known as Continuous Linked Settlement (CLS), while all others rely on long correspondent routes where each bank maintains bilateral accounts across jurisdictions.

These relationships create layers of credit exposure and require banks to pre-fund balances that sit idle in nostro/vostro accounts. In fact, this was a major pain point that Keeta was aiming to solve with its Zero Liquidity Model. Instead of requiring banks to hold idle capital in remote accounts, settlement would happen directly onchain using tokenized money.

The good thing is that FX is already an entirely digital market where most activity consists of ledger-based claims rather than physical currency movements. Trades are processed through dealer balance sheets, matched across global venues, and then settled through a network of correspondent banks. Stablecoins and crypto rails fit directly into this architecture because they replace messaging-based claims with atomic settlement and eliminate dependency on multiple intermediaries.

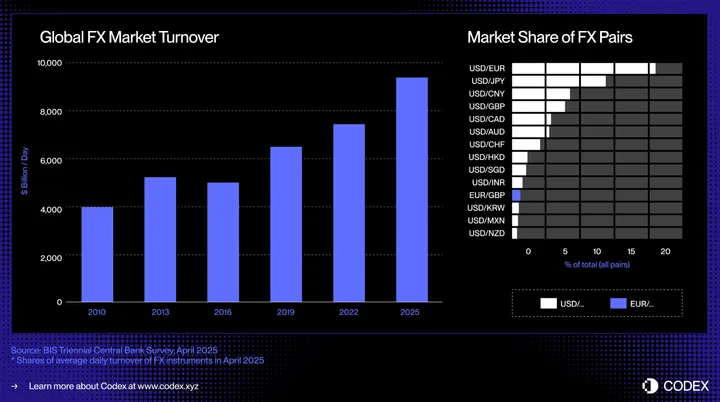

But much of the current discussion around onchain FX focuses on AMMs that enable spot swaps between stablecoins. While spot swaps are useful and provide basic price discovery, they do not capture the bulk of how FX markets actually function. Spot transactions represent only a minority of global FX activity, and AMM swaps do not express things like maturity, collateralization, or credit constraints.

AMMs typically treat liquidity as static pools where marginal trades adjust a price curve, but real FX markets are mostly balance sheet driven.
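To illustrate what "marginal trades adjust a price curve" means, here is a sketch of a constant-product pool (x * y = k). The choice of curve is our assumption, since the text does not name one, and stablecoin-focused AMMs often use flatter curves near parity. The pool sizes are hypothetical and fees are ignored.

```python
def swap_out(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Output amount for a constant-product pool (x * y = k), ignoring fees."""
    k = reserve_in * reserve_out
    new_reserve_in = reserve_in + amount_in
    return reserve_out - k / new_reserve_in


# Hypothetical pool: 1,000,000 of a USD stablecoin against 1,000,000 of a
# second stablecoin, starting at parity.
usd_reserve, other_reserve = 1_000_000.0, 1_000_000.0

small = swap_out(usd_reserve, other_reserve, 1_000.0)
large = swap_out(usd_reserve, other_reserve, 100_000.0)

print(f"1,000 in   -> {small:,.2f} out ({small / 1_000:.4f} per unit)")
print(f"100,000 in -> {large:,.2f} out ({large / 100_000:.4f} per unit)")
```

The execution price is a function of pool reserves and trade size alone. Nothing in the calculation refers to a maturity date, posted collateral, or a counterparty’s credit line, which is the gap between AMM spot swaps and balance-sheet-driven FX.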

Most “FX on-chain” pitches treat FX as a big spot market that should live in AMMs. The balance sheets tell a different story. Roughly half of global FX turnover is in short-dated FX swaps, not spot, and those swaps embed tens of trillions of off-balance-sheet dollar obligations… pic.twitter.com/1RYrLPMFLF

— neira (@borjaneira_) November 27, 2025

The foundation of global FX is the short-dated FX swap market. FX swaps allow institutions to obtain funding in a foreign currency for a defined period while posting collateral or exchanging principal. These instruments are effectively two-legged contracts. An institution exchanges currency A for currency B today and simultaneously agrees to reverse the transaction at a forward rate. The FX market is really just pricing the cost of borrowing one currency versus another.
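The two-legged structure can be sketched numerically. The forward rate below uses the textbook covered-interest-parity relation with simple interest, which is our assumption and is not stated in the text. The spot rate, interest rates, tenor, and the `forward_rate` helper are all hypothetical.

```python
def forward_rate(spot: float, rate_quote: float, rate_base: float,
                 days: int, basis: int = 360) -> float:
    """Covered-interest-parity forward with simple interest.

    Rates are quoted as units of the quote currency (B) per unit of base (A).
    """
    t = days / basis
    return spot * (1 + rate_quote * t) / (1 + rate_base * t)


# Hypothetical inputs: 1 unit of currency A buys 1.10 of currency B,
# B yields 5% and A yields 3%, and the swap runs for 90 days.
spot = 1.10
fwd = forward_rate(spot, rate_quote=0.05, rate_base=0.03, days=90)

# Near leg: hand over 1,000,000 A and receive B at spot.
# Far leg: return B and take back 1,000,000 A at the forward rate.
notional_a = 1_000_000
b_received_today = notional_a * spot
b_repaid_at_maturity = notional_a * fwd

swap_points = fwd - spot
print(f"forward = {fwd:.6f}, swap points = {swap_points:.6f}")
print(f"B received today: {b_received_today:,.2f}")
print(f"B repaid in 90 days: {b_repaid_at_maturity:,.2f}")
```

Under these assumptions the institution repays more B than it received, and that difference is the cost of funding in B relative to A for 90 days. The swap points carry the interest differential, which is what "pricing the cost of borrowing one currency versus another" refers to.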

This activity accounts for roughly half of global FX turnover, a larger share than spot.

Source: bis.org

Now, I get it, we’re likely a bit early for onchain FX to compete with the CLS groups of the world, but the scale of this market shouldn’t be underestimated. We’re talking trillions in daily volume and into the quadrillions on an annual basis. In the meantime, the upside is in the corridors that are underserved and much more inefficient. My guess is that this is why Codex is looking into the more exotic currencies, as the opportunity right now lies in the long-tail pairs.

Yes, the value is in the long-tail, non-CLS pairs. Which matters to some global corporates as much as it does to remittances.

There’s still an opportunity for financial compression using DLT-like rails and / or stablecoins, but its longer term.

— Simon Taylor (@sytaylor) November 28, 2025

If I had to make a wild assumption, these long-tail corridors could be the first real FX markets we bring onchain. The more friction with these currencies in the traditional FX rails, the more opportunity for crypto rails to abstract it and capture those markets. This is likely where onchain FX has an immediate product-market fit.

I think it’ll be interesting to gauge the success of this narrative by how the distribution of stablecoin supply changes. Who knows, maybe the next prediction market will be on whether the USD-denominated share of stablecoin supply falls below 99% for the first time.

Institutional Adoption – Running the Compliance Playbook

From Bitcoin’s outperformance to the launch of the recent ETFs, this cycle has commonly been termed the institutional cycle. But I think we’re only just scratching the surface of institutional adoption. In fact, I’d argue the vast majority of financial institutions (FIs) are still hesitant to approach crypto despite the change in sentiment and regulatory apparatus. Going into 2026, I think we’ll likely see an acceleration of institutional adoption that overshadows the past three years. The reason is that the regulatory constraints that once held FIs back are now being solved at the infrastructural level.

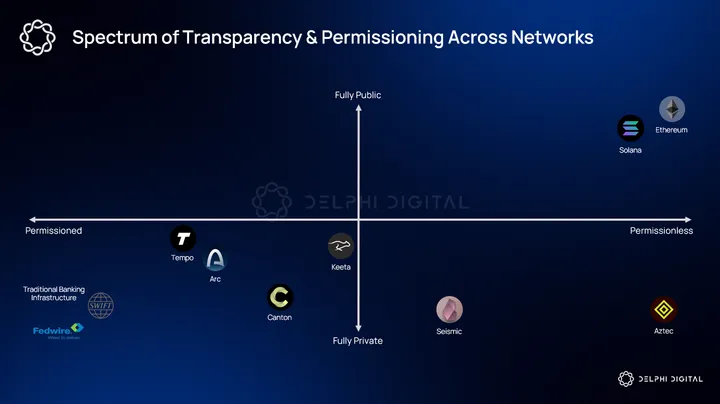

Today, the main barrier for institutional adoption is the structural mismatch between public, pseudonymous ledgers and the regulatory/compliance requirements. This includes KYC/KYB, AML, and private transfers. Banks, money transmitters, and corporates all operate under guidelines that require clear attribution of ownership and control. I know this is contrary to many of the design philosophies that crypto was originally built on, but the reality is that regulated entities cannot touch these rails even if they wanted to.

Take the Bank Secrecy Act. Institutions must know precisely who sent what, to whom, and under what conditions. They must maintain the ability to audit records that tie every transfer to a verified customer or legal entity. Now, I’m not advocating for more guardrails and overarching surveillance. In fact, I believe quite the opposite, but I think it’s worth considering that certain institutions (like banks) require some compliance measures. You wouldn’t want to use a bank that can’t protect against fraud or resolve disputes because there’s no paper trail, would you?

Aside from the KYC aspect, FIs also require private payments/transfers. Banks cannot send client flows over a public ledger where counterparties and random observers can analyze every activity. What’s required is private flows and public accounts. Blockchains offer public flows and anonymous accounts. As it stands today, most existing chains are not an option, which is why we see hints of a resurgence of the “corpo chain” narrative.

In this context, it’s worth viewing transparency as a sort of spectrum. On one end are fully public ledgers where every transaction is visible. On the other end is total opacity where transactions are only visible to the direct participants. Traditional bank settlement systems function this way. Only the parties involved can view transaction context. An institutional blockchain would need to fall somewhere in the middle of this spectrum.

Keeta

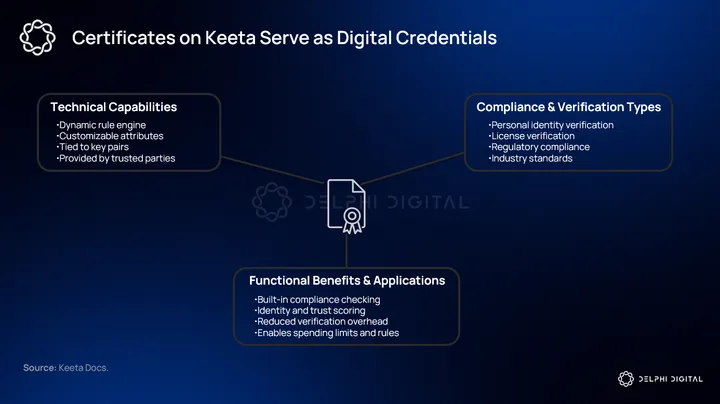

There are a few blockchains today that specifically focus on this domain. The first one that comes to mind is Keeta, with its onchain KYC and identity certificates. We recently covered Keeta at length in our full report. With onchain identity certificates, wallets are effectively tied to verifiable identity attestations without doxxing the underlying data. This is achieved via the X.509 standard, commonly used across many internet protocols.

These certificates function as cryptographically signed credentials which represent a range of verified attributes including KYC status, business licenses, and jurisdictional permissions. The point of these identity certificates is to act as a form of selective disclosure. This means users can prove specific attributes to counterparties without revealing unnecessary personal data. Certificates and profiles enable institutions to enforce compliance rules at the protocol level, while still maintaining pseudonymity in the public ledger.

Alongside identity certificates, Keeta also integrates a permission system tailored around asset issuance. The logic around this is that institutions require compliance rules around the assets and services they provide and who can actually interact with them. This could include rules like jurisdictional restrictions, KYC-gated transfers, transfer limits, and role-based permissions for custodians and issuers – all features that are already native to a traditional bank or FI.
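To make the idea of asset-level rules concrete, here is a minimal sketch of how an issuer-defined policy could gate a transfer. It is purely illustrative: the `Certificate` and `AssetPolicy` types, their field names, and the `can_transfer` function are hypothetical and do not represent Keeta’s actual API or data model.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Certificate:
    """Hypothetical identity attestation attached to a wallet."""
    kyc_verified: bool
    jurisdiction: str                      # e.g. "US", "DE"
    roles: frozenset = field(default_factory=frozenset)


@dataclass(frozen=True)
class AssetPolicy:
    """Hypothetical issuer-defined rules for a single asset."""
    require_kyc: bool
    blocked_jurisdictions: frozenset
    max_transfer: float                    # per-transfer limit, in asset units


def can_transfer(policy, sender, receiver, amount):
    """Return (allowed, reason) for a proposed transfer."""
    for party in (sender, receiver):
        if policy.require_kyc and not party.kyc_verified:
            return False, "KYC required"
        if party.jurisdiction in policy.blocked_jurisdictions:
            return False, "jurisdiction blocked"
    # In this sketch, wallets holding the "issuer" role are exempt from the limit.
    if amount > policy.max_transfer and "issuer" not in sender.roles:
        return False, "transfer limit exceeded"
    return True, "ok"


# Illustrative values only.
policy = AssetPolicy(require_kyc=True,
                     blocked_jurisdictions=frozenset({"XX"}),
                     max_transfer=1_000_000)
bank = Certificate(True, "US", frozenset({"custodian"}))
anon = Certificate(False, "US")

print(can_transfer(policy, bank, anon, 500))
print(can_transfer(policy, bank, bank, 500))
```

The point of the sketch is only that the checks run before settlement, against attributes the certificate attests to, rather than against raw personal data.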

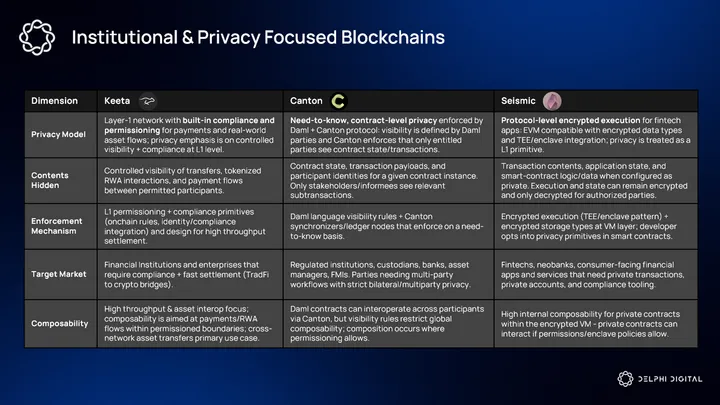

Canton Network


While Canton may seem like a newer L1, it has actually been live since mid-2024, with its whitepaper dating back to early 2020. Canton markets itself as an institutional-grade blockchain purpose-built for privacy and regulated entities. Its approach is focused on enabling each application provider to define its own rules for privacy, permissions, and governance.

Programmable privacy is built into every asset and piece of data via a smart contract language called Daml. Daml allows developers to specify access and authorization policies, supports interoperability with both applications and other systems, and provides concepts to capture rules that govern real-world business transactions.

Canton has already established a list of well-known institutional participants including Goldman Sachs, BNP Paribas, Deutsche Börse Group, and most recently Franklin Templeton.

Source: Coindesk

Seismic

Seismic takes a slightly different go-to-market approach, focusing primarily on fintechs. Like regulated institutions, fintechs have a strong interest in maintaining private transfers/payments and compliance integrations. Some of the fintech-specific use cases include checking accounts, savings, auto loans, brokerage, “buy now, pay later,” expense splitting, and even payroll.

Rather than using ZKPs, Seismic provides privacy via protocol-level encryption and secure hardware integrations. This means not just obfuscating balances/identities, but enabling encryption of application logic, state, and transaction data.

Fintech firms like Cred (private credit services) and Brookwell (stablecoin account platform) are already building on Seismic.

Brokerage Accounts Become Self-Custodied Wallets

This year, tokenized stocks, commodities, and other real-world assets (RWAs) began to act like true permissionless crypto products rather than siloed wrappers. For the first time, TradFi markets were truly accessible for all. And we’re only at the very beginning.

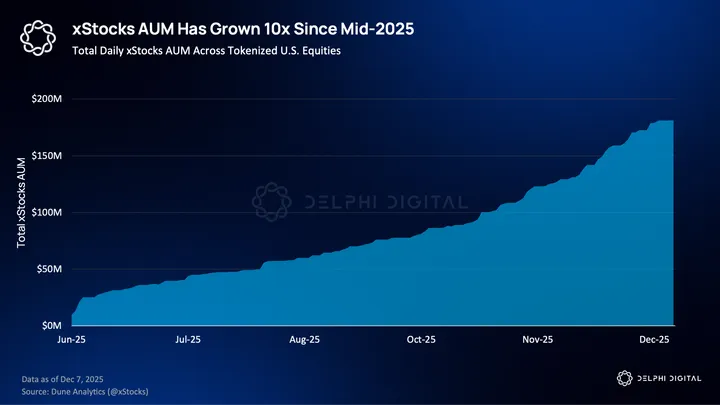

In particular, onchain equities saw their real breakout in 2025. Teams like Backed Finance, Dinari, and Securitize have long been working on tokenized stocks, but growth this year was exponential. These assets began to appear and move through channels crypto natives already used, supported by improved liquidity and much better distribution.

In January, there was only about $15 million worth of genuine tokenized stock onchain. Today, there’s more than half a billion dollars. Anyone around the world can hold and trade spot Tesla or Nvidia tokens in their own self-custodied wallet. No brokerage, no KYC.

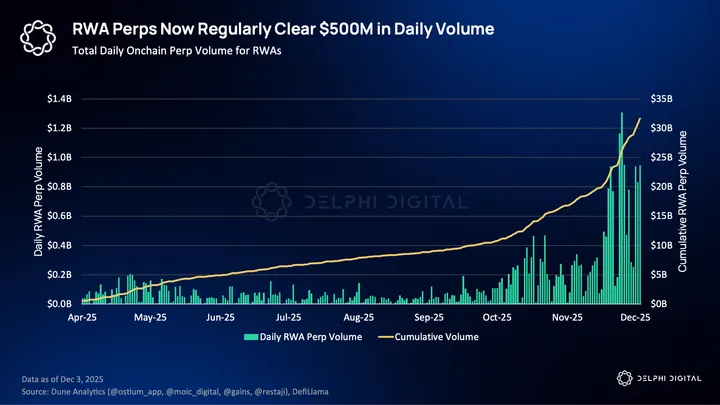

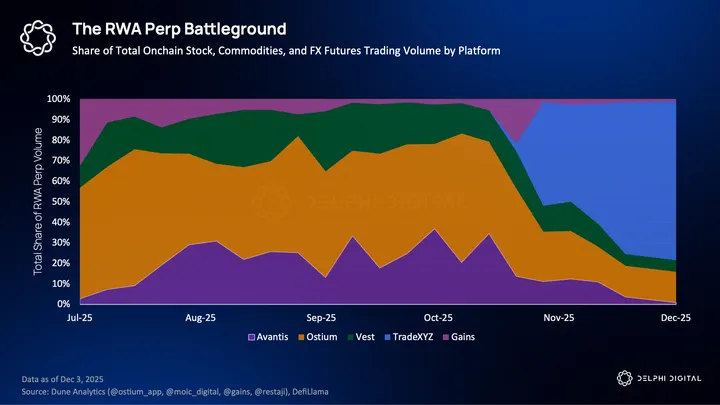

RWA perp futures have seen even faster adoption. Platforms like Hyperliquid, Ostium, and Vest now offer leveraged long/short exposure to stocks, indices, commodities, and FX. These products perfectly blend one of crypto’s core primitives with global markets that everyone wants to trade.

As a result, RWA perps have emerged as one of the primary formats through which traditional markets are achieving meaningful scale onchain.

What remains early is everything around these instruments. Liquidity is thin across the board. Tokenized RWAs lack much composability within DeFi. Equity perp exchanges are still finding the best product models.

Over the next year, competition moves from RWA listings alone to complete infrastructure. Winning projects will build the rails for liquidity, margin, custody, and composability needed to support these markets at scale.

Composable Stocks

Crypto is built around anonymous wallets, 24/7 trading, and composable “money Legos.” Until very recently, stocks did not fit into that stack. Earlier attempts to tokenize them lived on seemingly separate rails, KYC-gated and disconnected from where onchain activity was actually happening.

That changed this year. Products like xStocks (recently acquired by Kraken) plugged over 60 equities directly onto Solana, making GOOG tradable right alongside PUMP. A permissionless secondary market emerged, allowing anyone with a wallet to buy and hold stocks without opening a brokerage account.

As a result, xStocks moved through the same wallets and apps that already powered billions of dollars of crypto trading. Users can open their Jupiter app and instantly swap USDC for Tesla. RWAs no longer require their own dedicated platforms to trade and manage holdings.

The regulatory landscape is also becoming less restrictive. The SEC just closed its two-year investigation into Ondo with no action, removing one of the most visible overhangs for U.S. tokenization efforts and giving the space a clear runway.

The SEC has formally closed a confidential Biden-era investigation into Ondo — without any charges.

The inquiry began in 2024, focused on whether Ondo’s tokenization of certain real-world assets complied with federal securities laws as well as whether the ONDO token was a… pic.twitter.com/yV4xVX7Qrx

— Ondo Finance (@OndoFinance) December 8, 2025

This matters less for the future of spot trading alone and more for what it enables next.

Looking ahead to 2026, tokenized stocks are likely to continue growing exponentially as standalone products. Moves by incumbents like Robinhood to build their own L2 for RWAs only reflect how seriously traditional firms are taking this shift. But the more important shift is how RWAs begin to fit into the rest of the ecosystem.

Early infrastructure, like Kamino’s xStocks market, is small now but signals where the market is headed. Already, a limited range of tokenized stocks can be borrowed against and used within broader strategies, using the same primitives that grew DeFi from the ground up.

3/ Building on this structure, Kamino has become a primary venue for xStocks on Solana.

Users can acquire assets like METAx, GOOGLx, TSLAx, NVDAx through @kamino_swap, which provides RFQ quotes, alongside market-hours indicators and CEX price comparisons that clarify spreads and… pic.twitter.com/rKqtE6l8E7

— Kamino (@kamino) November 26, 2025

As RWAs onchain continue to expand, more integrations like this will surface across top application layers. Everyone and their “fat app thesis” is already fighting to control a user’s entire balance and activity. Exchanges and DeFi apps have a growing incentive to bring these massive assets into their existing UX, rather than risk missing out on a rapidly growing market.

This manifests as fewer standalone RWA protocols and more integrations with existing tools. Stocks, crypto, FX, and precious metals all start to sit in the same wallets and applications. From the user’s perspective, global markets are increasingly settling onchain and consolidating in a single location, even as custody remains completely self-controlled.

Equity Perps Are the Real Battleground

Equity perpetuals have proven to be one of the most effective ways for traditional markets to achieve scale onchain. That is no accident.

For anyone who has traded crypto perps, the equity perp is immediately familiar. There are no expiries or strikes to choose, nor Greeks to sort out. Exposure is linear to price, and the cost of leverage is reflected directly in a funding rate. For almost everybody, this is a much more intuitive way to trade stocks, indices, commodities, or macro views than other TradFi derivatives.
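The linear payoff and the funding cost can be written down in a few lines. The sketch below is a simplification under stated assumptions: funding is charged on mark-price notional at each funding timestamp, a positive rate means longs pay shorts (the common convention, assumed here), and all prices and rates are made-up illustrative numbers rather than data from any exchange.

```python
def perp_pnl(entry_price, mark_prices, funding_rates, size):
    """Return (price_pnl, funding_pnl) for a perp position.

    size > 0 is long and size < 0 is short, in units of the underlying.
    mark_prices[i] and funding_rates[i] are the mark price and funding
    rate at the i-th funding timestamp. A positive rate means longs pay.
    """
    if len(mark_prices) != len(funding_rates):
        raise ValueError("need one funding rate per mark price")
    if not mark_prices:
        return 0.0, 0.0
    # Exposure is linear: PnL is just size times the price change.
    price_pnl = size * (mark_prices[-1] - entry_price)
    # Funding paid is notional times the rate at each interval.
    funding_paid = sum(size * p * r for p, r in zip(mark_prices, funding_rates))
    return price_pnl, -funding_paid


# Hypothetical equity perp: long 10 units from 100, held over three intervals.
price_pnl, funding_pnl = perp_pnl(
    entry_price=100.0,
    mark_prices=[101.0, 102.0, 104.0],
    funding_rates=[0.0001, 0.0001, 0.0001],
    size=10,
)
print(f"price PnL: {price_pnl:.2f}, funding PnL: {funding_pnl:.4f}")
```

In this example the long gains 40 on price and pays roughly 0.31 in funding. There is no strike, expiry, or volatility input anywhere in the calculation, which is the whole appeal.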

This explains why equity perps have been adopted so much faster than other onchain RWA products, and why they are now the center of competition instead of the niche product they once were.

At a high level, a perp market doesn’t require much. It just needs a mark price, an oracle, and a few traders in the orderbook. But in practice, trying to bring trillion-dollar markets onchain introduces constraints that do not exist for crypto. Continuous pricing across non-market hours, sufficient liquidity for traders, and a stable funding rate mechanism must all work in concert.

With hundreds of billions of dollars in TradFi futures and options traded daily, the winners here are going to be big winners. This is why RWA and equity perps are where much of the competition will be as we move into next year.

Exchanges will be racing to expand coverage, listing more single-name stocks, indices, and FX pairs as demand continues to grow. Traders want the same universe of assets they already trade elsewhere, without compromise. But listings alone do not determine where liquidity ends up.

Some platforms will favor 24/7 trading, allowing price discovery to move fully onchain when traditional venues are closed. Others will limit activity during closed hours to contain risk. This is probably less important than it seems today, as TradFi exchanges and brokerages already plan to operate 24/5 or fully 24/7 by the end of next year.

Source: Barron’s

The major differentiator here is execution and microstructure. Rather than an onchain central limit order book (CLOB), which can sometimes be quite thin, some projects like Ostium are building out their own custom infrastructure to attract large players.

Here, trades are done via an RFQ-style process. Instead of hitting an orderbook, traders receive a quoted price from LPs that’s sourced from traditional markets and executed in a single clip. Execution is designed to match against resting orderbook liquidity in their TradFi counterpart, minimizing slippage and removing the need to work positions across fragmented liquidity.

For participants trading meaningful size right now, this format may be more comfortable than a 24/7 book that can thin out and wick on weekends.

Viewed this way, the onchain RFQ model begins to resemble something very familiar: CFDs. This is effectively how CFDs already work across global markets today, with tens of billions in daily volume outside the U.S.

But there is still no “correct” model for RWA perps. Different projects will continue experimenting with different architectures to determine which one scales best.

As more listings come in and product models improve, RWA perps become increasingly attractive to the traders already active in CFD markets. The experience is familiar, but settlement, margining, and custody live entirely on crypto rails. There are no brokerage accounts to manage, and no accounts to close if you’re too profitable. By the end of 2026, onchain RWA perps as a whole will take a meaningful share of the pie from offshore CFD brokers.

Equity perps will also begin to encroach on the massive, largely retail-driven options market. Much of today’s options volume, especially in short-dated contracts like 0DTEs, reflects a desire for simple, leveraged directional exposure rather than complex volatility trading. For those use cases, perps are a cleaner instrument.

As these continue to see strong adoption, it is natural for retail-focused TradFi exchanges to follow the flow. It would not be surprising to see Robinhood introduce an RWA-perp-like instrument in its app by the end of next year. At that point, equity perps begin to resemble a new default for how global markets are traded.

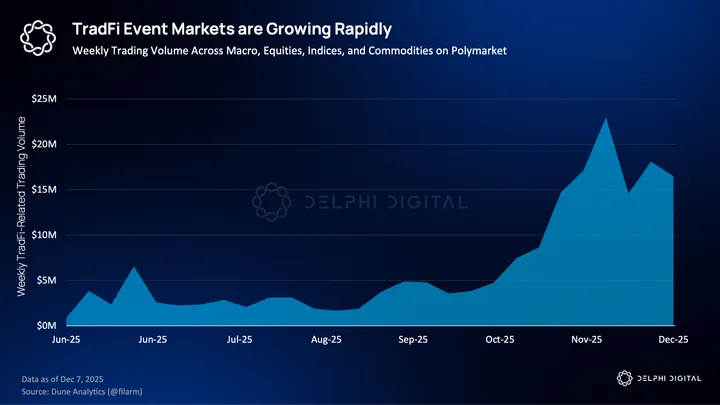

Prediction Markets Become a First-Class Derivative for TradFi

Prediction markets are starting to sit alongside traditional derivatives as a way not only to trade events that move markets but also to manage a more intelligent portfolio. Earnings beats, CPI surprises, and guidance changes don’t map cleanly onto existing TradFi products. You can approximate them with options or other trade setups, but event markets collapse that into a single yes-or-no outcome with continuously updating probabilities.

Earlier this year, Intercontinental Exchange (ICE), the owner of the NYSE and one of the most systemically important exchange operators, took a multi-billion-dollar strategic stake in Polymarket. This is probably one of the clearest signals that event markets are moving into the financial mainstream. ICE has already outlined plans to distribute Polymarket’s data to thousands of institutional clients and is exploring how to surface it within existing market infra.

Thomas Peterffy, founder and chairman of Interactive Brokers (IBKR), clearly echoed this idea at a recent financial conference. He framed prediction markets as a new live information layer for institutional portfolios, sharing that early demand on IBKR is concentrated in weather-related contracts for energy usage, logistics, and insurance risk.

Key takeaways on the institutionalization of Prediction Markets from @IBKR‘s session at the Goldman Sachs 2025 Financial Services Conference:

1. From Speculation to Risk Pricing: Surprisingly, the highest interest on IBKR isn’t sports or politics—it’s temperatures. The logic is… pic.twitter.com/mQdViTIjIz

— Daedalus Research (@DaedalusRsch) December 14, 2025

More importantly, Peterffy described a future in which portfolios can be continuously informed and updated by shifting probabilities from these markets, rather than by stale analysts’ estimates.

As tokenized equities and RWA rails mature, event markets move naturally into the unified onchain brokerage account.

A trader holding spot AAPL in their wallet could borrow against that position and use a small slice of collateral to hedge earnings risk through prediction markets. Or they could constantly update their holdings based on a quote, or combination of quotes, from a simple instrument (“Will Apple beat quarterly earnings?”).
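A rough sketch of the earnings hedge described above, under simplifying assumptions: the event contract is a binary that pays 1.0 per contract if the company misses, the stock makes one of two fixed moves, and financing costs on the borrowed collateral are ignored. The share count, price, moves, and contract price are all hypothetical.

```python
def hedged_outcomes(shares, spot, move_on_beat, move_on_miss, no_price, contracts):
    """P&L of a stock position plus 'NO' contracts on an earnings beat.

    Each NO contract costs `no_price` and pays 1.0 if the company misses.
    Returns (pnl_if_beat, pnl_if_miss).
    """
    cost = contracts * no_price
    beat = shares * spot * move_on_beat - cost
    miss = shares * spot * move_on_miss + contracts * 1.0 - cost
    return beat, miss


def contracts_to_flatten_miss(shares, spot, move_on_miss, no_price):
    """Contracts needed so that the miss scenario nets to zero."""
    loss = -shares * spot * move_on_miss      # positive when move_on_miss < 0
    return loss / (1.0 - no_price)            # net payout per contract on a miss


# Hypothetical position: 100 shares at $200, +4% on a beat, -6% on a miss,
# with the market pricing a miss at 30%.
n = contracts_to_flatten_miss(100, 200.0, -0.06, 0.30)
beat, miss = hedged_outcomes(100, 200.0, 0.04, -0.06, 0.30, n)
print(f"contracts: {n:.0f}, P&L if beat: {beat:.2f}, P&L if miss: {miss:.2f}")
```

With these made-up numbers, about 1,714 contracts (roughly $514 of premium) neutralize the miss scenario while leaving about $286 of upside on a beat. A real hedge would have to deal with uncertain move sizes and contract liquidity, which this sketch ignores.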

Looking ahead to 2026, the stage is set for huge growth across a few categories:

- Equity event markets (earnings beats/misses, revenue figures, guidance ranges)

- Macro prints (CPI, NFP, Fed rate decisions)

- Weather and climate markets (electricity usage, gas prices, insurance exposure)

- Cross-asset relative value markets (“First to $5K,” future market cap, comparative performance across assets)

This is the point where prediction markets move from a fringe product associated with sports betting to a serious institutional TradFi instrument.

InfraFI

I get it, it is yet another ‘Fi,’ but after observing crypto infra and DeFi cycles closely for the last three years, this one actually connects to real demand instead of reflexive onchain behavior. Hear me out.

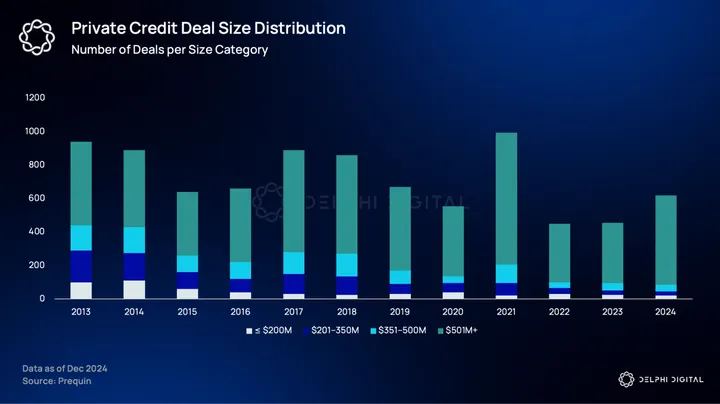

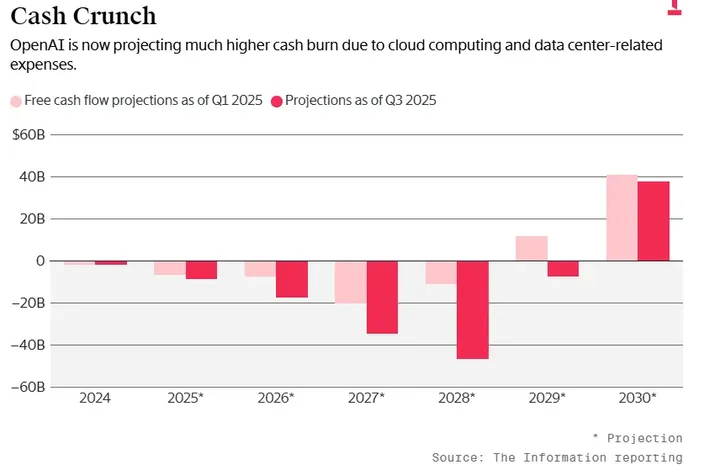

Capital Mismatch in AI Infrastructure

For most of the last decade, private credit has been one of the behemoths of global finance. As banks retreated after 2008, firms like KKR, Apollo, and Blackstone stepped in to finance the real economy. They took up underwriting cash-flowing assets at scale: factories, warehouses, aircraft, data centers. Long-lived projects. Predictable counterparties. Contracts that look the same in Texas, Frankfurt, or Singapore. This model works because the world it finances is slow, legible, and mostly static. AI is none of those things.

“Hold on, hold on, hold on. You’re selling GPUs to xAI via a SPV. That SPV is partially funding that purchase with equity from you?”

“We call that synthetic vendor financing.”

“That is fucking crazy.”

“No, it’s not. It’s awesome.” pic.twitter.com/ln4R13n8w9

— Buyback Capital (@Larryjamieson_) October 10, 2025

The current wave of AI infrastructure sits in an awkward middle ground. It is too CapEx-intensive to be funded like software, but too dynamic to fit neatly into traditional project finance. Hardware refresh cycles run in months, not decades. Demand spikes with model releases and fades just as quickly. Operators are often global from day one, but incorporated nowhere that Wall Street feels comfortable underwriting without weeks of legal work. For private credit committees, this is not an opportunity but friction.

Read more on the holes in the current AI funding scene on this killer blog post from UncoverAlpha https://www.uncoveralpha.com/p/too-much-ai-too-soon

This is why institutional capital in AI flows overwhelmingly to the very top of the stack. Private credit firms are focused on financing massive GPU clusters with offtake agreements from hyperscalers like Meta, Google, and Microsoft. These deals are large, slow, and defensible. They offer the best return on time and energy. A nine-figure facility with a single counterparty is simply more efficient than underwriting dozens of smaller operators with heterogeneous risks, even if those operators are economically viable.

There is nothing irrational about this. Private credit makes its money by being bespoke. Complex legal structures, tailored covenants, negotiated pricing, and custom risk allocation are just how they operate. The problem is that this approach does not scale down. Once deal size falls below a certain threshold, the cost of structuring overwhelms the return. The time and resources spent looking into these deals is just not worth the opportunity cost.

The long tail of AI companies sits precisely below this line. These operators do not lack demand or revenue. They lack access to financing that matches their cadence. When capital takes six months to arrive but hardware depreciates meaningfully over eighteen, the financing itself becomes a liability. When every deal requires setting up a unique workflow, speed becomes the scarcest resource.

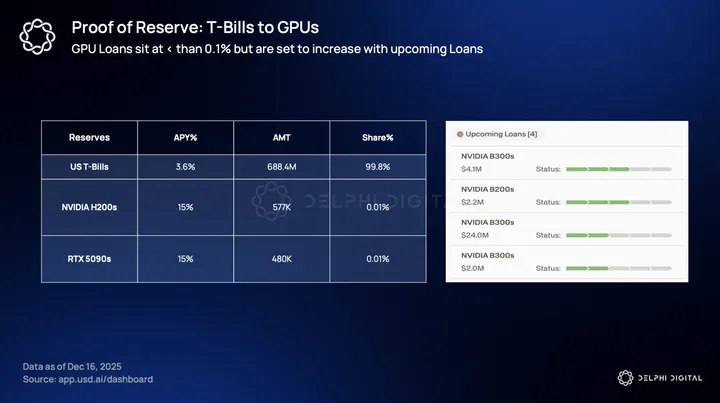

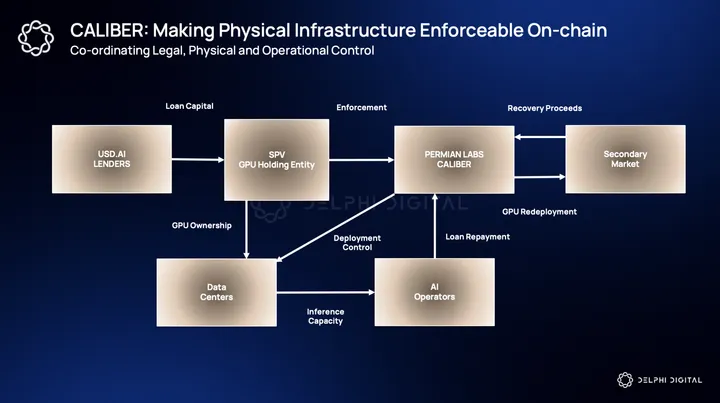

USD.AI becomes interesting not because it offers cheaper capital, but because it rejects this bespoke logic entirely. Instead of negotiating terms borrower by borrower, it standardizes them. Instead of tailoring structures to each counterparty, it publishes the structure upfront and applies it to each and every deal. For example, USD.AI follows a strict financing policy. It does not fund purchase-order loans or business loans. It only finances hardware after installation, which avoids the additional uncertainty and credit risk tied to the business itself.

(yes we are adding more loans)

one of them literally had their chips stuck at French customs

this is also why we dont finance the purchase orders loans or business loans and only after-install

the painful things we do to have proper proof of inventory & reserves

— David | www.usd.ai (@0xZergs) October 31, 2025

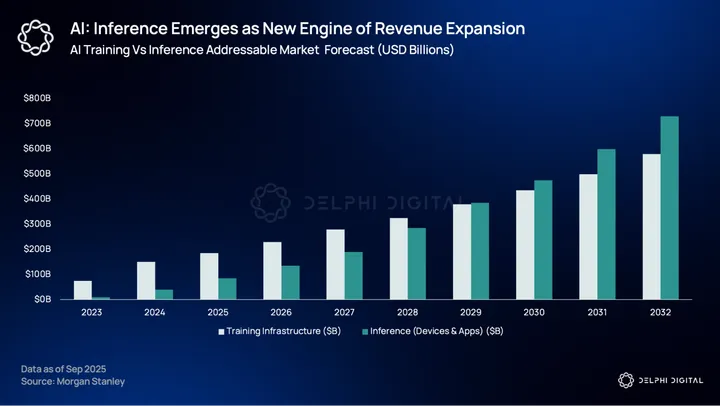

Inference Cash Flows Vs GPU LTV

GPUs are a financeable asset not because they have a high LTV (Life Time Value) but because they generate predictable inference cash flow. Confusing these two ideas is where most GPU-backed financing goes wrong. Even so, most of the deals financed so far have assumed a 5-7 year life span for GPUs, which is a gross overestimate.

UncoverAlpha’s post from earlier mentioned that NVIDIA has effectively moved to a 12-month product cycle, with each new generation delivering a 10-20x improvement in tokens-per-watt. This means the economic competitiveness of a GPU can degrade faster than its physical lifespan. So regardless of how much juice the older GPUs have left in them, they might still fail to meet the cost curves required to service debt.

Inference demand does create a second life for GPUs, but that life is shorter than most financing models assume. Under realistic utilization and pricing assumptions, the effective economic life of a GPU is closer to 12–24 months, not the 3–6 years still embedded in a large share of AI infrastructure debt. The gap between these assumptions is where most risk accumulates.

Under realistic operating assumptions of high utilization, declining rental rates, and fixed costs from power, colocation, insurance, and brokerage, the window in which inference revenues comfortably service leveraged debt compresses rapidly. The limiting factor is not whether GPUs generate revenue at all, but whether that revenue remains sufficient to amortize principal meaningfully before efficiency shocks arrive. Beyond roughly 12–24 months, debt service increasingly depends on optimistic utilization or refinancing rather than on operating cash flow alone.

This is where most risk accumulates. A large share of AI infrastructure debt is still structured around 3–6 year amortization schedules, inherited from legacy data center and server financing models. Those schedules assume gradual depreciation and stable pricing dynamics that no longer hold in inference-heavy compute markets. When the amortization schedule outlasts cash generation, collateral can remain operational while failing as credit support.

For GPUs to function as collateral, amortization must outrun obsolescence. Loan duration, repayment schedules, and recovery assumptions matter more than headline performance or replacement cost. Secondary inference markets are a necessary condition, but not a sufficient one. Redeployability only preserves value when demand is broad enough to absorb hardware at prices consistent with the capital structure.
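The race between amortization and obsolescence can be illustrated with a toy model. The sketch below makes strong assumptions that are not from any real deal: straight-line principal repayment, recovery value that falls by a constant 4% per month, and a loan sized at 70% of initial hardware value. It only asks in which month, if any, the loan balance first exceeds what the collateral could recover.

```python
def balance(principal, term_months, month):
    """Outstanding principal under straight-line amortization."""
    return max(principal * (1 - month / term_months), 0.0)


def collateral_value(initial_value, monthly_decay, month):
    """Recovery value assuming a constant monthly decay rate (hypothetical)."""
    return initial_value * (1 - monthly_decay) ** month


def first_underwater_month(principal, term_months, initial_value, monthly_decay):
    """First month in which the balance exceeds collateral value, else None."""
    for m in range(term_months + 1):
        owed = balance(principal, term_months, m)
        if owed > collateral_value(initial_value, monthly_decay, m):
            return m
    return None


# Same hardware, same loan size, two different amortization schedules.
short_term = first_underwater_month(70, 24, 100, 0.04)
long_term = first_underwater_month(70, 60, 100, 0.04)
print("24-month schedule underwater at month:", short_term)
print("60-month schedule underwater at month:", long_term)
```

Under these assumptions the 24-month loan stays covered for its entire life, while the 60-month loan goes underwater in month 17, long before the hardware stops running. The exact month depends entirely on the assumed decay rate. What carries over is that the loan term decides the outcome more than the hardware does.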

USD.AI’s model implicitly targets this window. It does not assume that GPUs will be productive for five or six years. It assumes that there is a finite inference phase where cash flows are predictable, workloads are fungible, and redeployment is possible if a borrower fails. Financing works only if loan duration, amortization, and depreciation are aligned tightly within this period. If they are not, collateral quality erodes quickly.

This is why GPUs are different from earlier attempts at “tech-backed” collateral. Servers, networking gear, and consumer hardware lacked a global, metered demand curve tied directly to economic output. Inference provides that curve, but only temporarily and only for certain classes of hardware. Collateral quality is not a property of the chip itself.

None of this makes GPUs safe collateral. It makes them legible collateral. Their risks can be modeled, their cash flows observed, and their failure modes anticipated. That legibility is what enables underwriting. The moment inference margins collapse, demand concentrates too narrowly, or hardware cycles compress further, the window closes.

This is the backdrop against which any GPU-backed financing model must be evaluated. Over the last two years, GPU-backed debt has quietly grown into a material corner of the AI financing stack. Estimates now put the market at $20–25 billion outstanding, spanning neoclouds, data center SPVs, and bespoke vendor-financed structures. The risk is not that AI demand disappears, but that debt has been written against optimistic assumptions about hardware lifetimes and utilization.

NVIDIA’s move to annual product cycles, combined with 10-20× gains in tokens-per-watt between generations, compresses the economically useful life of GPUs far faster than the 3-6 year amortization schedules many borrowers still model. As Jim Chanos (a famous short seller) recently warned, this creates a familiar dynamic in which assets remain operational but are no longer productive enough to service debt. When repayment depends on sustained subsidies, circular vendor financing, or continued capital inflows, defaults become a timing issue, not a tail risk. In that sense, the stress in AI credit markets is not cyclical and will surface first where leverage outruns cash flows.

Fragmentation of the GPU Market

The most common objection to using GPUs as collateral is not that they depreciate, but that the market around them will not stay stable. NVIDIA’s dominance, critics argue, is temporary. Google has TPUs. AMD is closing performance gaps. Custom inference chips and ASIC-like designs are proliferating. If compute fragments across architectures, the fear is that today’s collateral becomes tomorrow’s stranded asset.

This concern is valid, but incomplete. Fragmentation does not mean demand disappears. It means demand becomes more selective. The history of infrastructure markets suggests that dominant technologies rarely vanish overnight. They are slowly displaced at the margins, while retaining relevance in secondary use cases. CPUs did not disappear when GPUs rose. On-prem servers did not die when the cloud arrived. They were repriced and redeployed.

The same dynamic is likely to play out in AI compute. Specialized hardware will capture specific workloads where it offers clear advantages. Frontier training may migrate aggressively. But inference, especially at scale and at the margin, is less sensitive to architectural purity. What matters is cost per unit of output and operational reliability. As long as there is a large installed base of software built around CUDA-compatible GPUs, NVIDIA-class hardware will retain economic utility even as alternatives mature.

From USD.AI’s perspective, fragmentation is not an existential threat. It is a filter. The protocol does not need every form of compute to be financeable. It needs a subset of hardware that exhibits three properties: measurable cash flows, a secondary market for redeployment, and predictable depreciation. If alternative accelerators develop these characteristics, they can be financed. If they do not, they should not be onboarded.

In this sense, fragmentation strengthens the model’s discipline. It forces continuous reassessment of what qualifies as infrastructure. It also reduces reliance on a single vendor narrative. The risk is not that the market diversifies. The risk is assuming that every new chip deserves to be financed simply because it exists.

USD.AI’s bet is narrower than that. It is that some compute will remain boring, fungible, and productive enough to underwrite. Fragmentation does not invalidate that bet.

Liquidity, Amortization, and Exit Sequencing

USD.AI treats liquidity as a function of cash flow timing rather than a guarantee. The system is backed by two sources of liquidity: amortizing, GPU-secured loans and short-dated U.S. Treasury bills.

Treasury bills serve as the system’s liquid reserve. Capital held in T-Bills earns modest yield while remaining immediately redeemable. These reserves smooth the timing gap between loan deployment and repayment and reduce reliance on hardware cash flows to fund exits. They allow loans to be originated when underwriting is attractive, not when liquidity pressure demands it.

The remaining capital is deployed into amortizing loans secured by GPUs.

Let’s run with this example.

An AI operator running production inference requires $12 million to deploy GPUs. The hardware is expected to remain economically viable for inference for roughly 24 months, after which efficiency gains and power constraints make replacement likely. The loan is structured to amortize fully within that window.

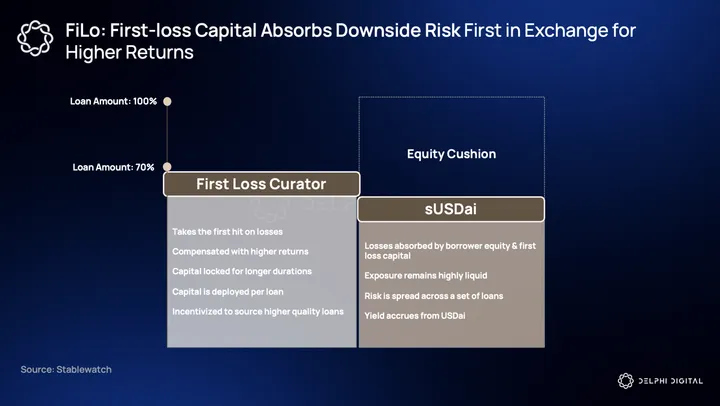

USD.AI provides a $12 million loan funded through two layers of capital. $10 million is senior capital from depositors. $2 million is first-loss capital from curators. Losses are absorbed in sequence, with first-loss capital bearing downside before senior capital. This structure aligns incentives around underwriting and deal selection.

The GPUs are owned by a bankruptcy-remote special-purpose vehicle, insured, and contractually redeployable in the event of default. This framework, implemented through CALIBER, ensures that collateral enforcement is independent of borrower solvency.

The loan amortizes over 24 months with fixed monthly payments of approximately $550,000. Inference workloads are expected to generate around $420,000 per month in gross revenue, with the remainder covered by operating reserves. Principal is returned monthly, creating a predictable inflow of cash to the system.
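The figures in this example can be sanity-checked with a short calculation. The interest rate is not stated, so the sketch below back-solves it from the $550,000 payment, assuming a standard level-payment (annuity) loan. That structure is an assumption made here for illustration, not something the example confirms.

```python
def implied_monthly_rate(principal, payment, n_months, tol=1e-12):
    """Solve the level-payment annuity equation for the monthly rate by bisection."""
    def payment_at(rate):
        return principal * rate / (1 - (1 + rate) ** -n_months)

    lo, hi = 1e-9, 1.0          # payment_at is increasing in rate on this range
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if payment_at(mid) < payment:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def outstanding_balance(principal, rate, payment, months_paid):
    """Principal remaining after `months_paid` level payments."""
    bal = principal
    for _ in range(months_paid):
        bal = bal * (1 + rate) - payment   # accrue interest, then apply payment
    return bal


PRINCIPAL, PAYMENT, TERM = 12_000_000, 550_000, 24
REVENUE = 420_000               # expected monthly gross inference revenue

r = implied_monthly_rate(PRINCIPAL, PAYMENT, TERM)
bal_9 = outstanding_balance(PRINCIPAL, r, PAYMENT, 9)
print(f"implied rate: {r:.3%} per month, about {12 * r:.1%} annualized")
print(f"balance after 9 payments: ${bal_9:,.0f}")
print(f"monthly draw on operating reserves: ${PAYMENT - REVENUE:,}")
```

Under the level-payment assumption, the implied rate works out to roughly 0.78% per month (about 9.3% annualized), and the balance after nine payments is close to $7.76 million. The gap between the payment and expected revenue is $130,000 per month, or about $3.1 million over the full term if revenue stays flat, which is what the operating reserves would need to cover.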

Depositor redemptions are funded from available liquidity and processed through a queue. As principal is repaid and reserves are drawn down, capital becomes available for exit. Depositors who prefer faster redemption can pay to move ahead in the queue, transferring value to those willing to wait.

Now consider a default.

Nine months into the loan, approximately $4.24 million of principal has been repaid, leaving $7.76 million outstanding. The borrower fails. The GPUs are repossessed and redeployed into secondary inference markets, recovering $6.2 million. The resulting $1.56 million shortfall is absorbed entirely by first-loss capital. Senior capital remains whole. Redemptions slow due to the loss of repayments but continue without forced asset sales.

This is the role of the first-loss layer. It does not eliminate risk. It confines losses to capital explicitly structured to bear them, while preserving orderly exits and enforceable collateral.
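The loss sequencing in the default scenario reduces to a simple waterfall. The sketch below uses the numbers from the example and assumes the full $2 million first-loss cushion is still in place at month nine, since the example does not say how principal repayments are split between the two layers.

```python
def allocate_shortfall(outstanding, recovered, first_loss_cushion):
    """Apply a default shortfall to first-loss capital, then to senior capital."""
    shortfall = max(outstanding - recovered, 0)
    first_loss_hit = min(shortfall, first_loss_cushion)
    senior_hit = shortfall - first_loss_hit
    return {
        "shortfall": shortfall,
        "first_loss_hit": first_loss_hit,
        "first_loss_remaining": first_loss_cushion - first_loss_hit,
        "senior_hit": senior_hit,
    }


OUTSTANDING = 7_760_000     # principal outstanding at default
RECOVERED = 6_200_000       # proceeds from redeploying the GPUs
FIRST_LOSS = 2_000_000      # curator capital, assumed fully intact

result = allocate_shortfall(OUTSTANDING, RECOVERED, FIRST_LOSS)
breakeven_recovery = OUTSTANDING - FIRST_LOSS
print(result)
print(f"senior capital is impaired only if recovery falls below ${breakeven_recovery:,}")
```

With these inputs the $1.56 million shortfall lands entirely on first-loss capital, leaving $440,000 of cushion. Senior depositors would only take a loss if recovery came in below $5.76 million, which is the number underwriting on the collateral has to defend.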

The Stress Testers

GPU-backed credit does not depend on AI demand continuing indefinitely. It depends on financing staying aligned with how inference cash flows actually show up.

Inference revenues arrive early in the life of hardware. If principal is reduced meaningfully during that period, efficiency gains and pricing pressure matter less. If it is not, the remaining balance becomes sensitive to forces outside the borrower’s control. GPUs can continue operating and still stop working as collateral. The distinction is subtle, but decisive.

Demand breadth matters just as much. Secondary markets preserve value only when redeployment is routine and pricing remains competitive. As inference demand concentrates or growth slows, recovery values become less predictable. Collateral does not disappear, but it becomes harder to price with confidence.

The final constraint is behavioral. Structures like this rely on discipline holding when capital is available. If underwriting stretches to maintain volume, or if liquidity is treated as an entitlement rather than an outcome of repayments, risk stops being priced at the margin and starts being managed after the fact.

Privacy Is Cool Again

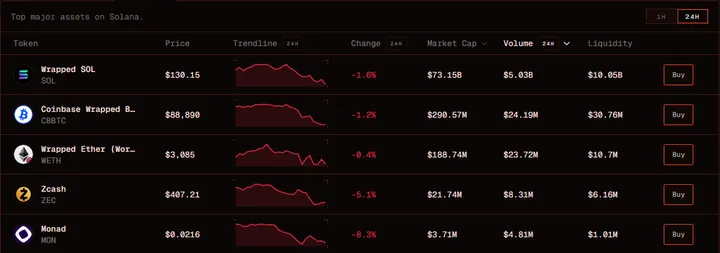

In last year’s Year Ahead report, we covered privacy tech (specifically ZK) quite extensively. But despite the technical progress in the space, this wasn’t reflected in the market until very recently, with the price performance of ZEC. And while I believe this is largely a case of “price go up = gud tech”, it’s brought plenty of attention back to the topic of privacy.

However, this extends far beyond finding the next privacy token to bid. We’re now at an inflection point where privacy as a default feature is non-negotiable. Whether the driver is compliance requirements for institutional adoption or overarching control and surveillance from governments, systems with embedded privacy will become far more sought-after than those without.

The Surveillance State

“Those who would give up essential Liberty, to purchase a little temporary Safety, deserve neither Liberty nor Safety”. ~Benjamin Franklin

Over the last couple of months, there’s been an alarming number of proposals and pieces of legislation coming out of the EU and European central banks with regard to privacy and money transfer. Now, while this may not matter to most outside of Western Europe, it certainly sets a precedent that privacy is never guaranteed if it’s left to a small handful of disillusioned bureaucrats and politicians or authoritarian government regimes.

Recently, the EU announced its plan to cap cash transactions at €10,000 and require identity checks on transactions above €3,000, in order to “stop money laundering”. The Bank of England (BOE) proposed a similar cap for individual stablecoin holdings, limited to just £10,000–£20,000 (~$13,320–$26,650) per person, citing “systemic risk”.

The Bank of England is proposing a cap on individual stablecoin holdings, limiting ownership to just £10,000–£20,000 per person in the name of “systemic risk.”

This is absurd, and we need to push back against this kind of regulation. Stablecoins issued onchain do not pose…

— Stani.eth (@StaniKulechov) September 15, 2025

The fact that the EU is pushing so hard to regulate cash transactions shows how desperate they are to maintain control, especially considering that the ECB plans to pilot its own digital euro beginning in 2027 with a holding limit of €3,000 per person. Think about that for a second – a €3,000 holding limit. China’s digital yuan already has programmable restrictions, and we see just how Orwellian their society has become.

In China, CBDC is linked to your digital ID.

This person gets caught with driving without a helmet. Police in control room immediately deducts 25 yuan (US3.6$) fine from his digital wallet, and he could lose 1 social credit point. https://t.co/VYDrCCiPx1 pic.twitter.com/3ICh21yC4Y

— Songpinganq (@songpinganq) November 26, 2025

I haven’t even mentioned the recent passing of the Chat Control Act (CSAR) which involves the mass surveillance of private messaging, or demands for implementing backdoors into privacy software like GrapheneOS.

GrapheneOS has shut down operations in France due to pressure from law enforcement and media, citing risks for privacy projects and demands for a backdoor. Servers are being moved to Toronto and Germany, and developers are barred from entering France https://t.co/36b1JKmZjO pic.twitter.com/bEX3CdroSz

— AlternativeTo (@AlternativeTo) November 26, 2025

And while the EU is the extreme case, it’s a reminder that privacy shouldn’t be taken for granted. Not too long ago, the Canadian government froze the bank accounts of those associated with the truckers’ rally in early 2022. Freedom of speech is synonymous with self-sovereign control of wealth. Express an opinion that the government doesn’t like, and you’re putting your livelihood at risk. It’s no coincidence that the EU is passing such overarching surveillance laws while pushing for strict verification on money transfers at the same time. It’s all about control.

The extreme scenario is a surveillance grid tied to a programmable, government-issued CBDC that can expire, be frozen, or restrict certain purchases. This may sound like a tin-foil hat theory, but it’s already happening in China, and the EU is following in its footsteps.

Our right to privacy is being encroached upon, our self-sovereignty to own and freely transmit money is being threatened, and it’s all being done under the guise of national security and public safety.

If you don’t actually have self-custody and the freedom to spend your money how you please, then do you really own your money?

Private Payments – The Real Unlock

A purely peer-to-peer version of digital cash was the original idea on which Bitcoin was built. Nearly two decades later, Bitcoin is used as a speculative trading asset or store of value (SoV), or is considered “digital gold”. Of course, there is a small minority that actually uses it to transact; but for the most part, Bitcoin has failed as a form of digital cash.

For an asset to be considered or function as cash, it needs to be universally (or at least widely) accepted, low friction, stable in price, and fungible. The problem is that Bitcoin lacks many of these, and it lacks privacy as well. It was never stable in price, the friction to send BTC has been considerably higher than on other chains today, it never had a privacy feature, and thus it was never universally accepted. Even existing alternatives like Zcash don’t fit this narrative. Let’s be realistic: how many Zcash holders are actually using it as digital cash versus holding it because they’re up 600%? It was a good trade, but no one’s using it to pay anyone.

Stablecoins have already won on that front. USD is familiar and stable. You know one dollar will always be one dollar (setting aside inflation and de-peg risk). Even with those caveats, holding and using a USD-denominated stablecoin is much more practical, which is why it has already seen such strong adoption over the last few years. This is exactly why a network or settlement layer that enables the private transfer of stablecoins will ultimately win. People want a stable, known form of cash, and they want to transact with it privately. Stablecoins are the purest form of what digital cash was always intended to be. The real exponential unlock is when we can transact using stablecoins in a fully private manner.

What you need for private stablecoins

(the tech is only part of the story)

– ERC20 version that shields balances, transfer amounts, and (optionally) wallet addresses

– FX trading venue with private swaps

– Blockchain intelligence provider with separate public / private tx…

— Lyron (@lyronctk) October 22, 2025

The irony is that physical cash solved all of this long ago. Cash has a feature where once it settles, it effectively vanishes with no trace other than the immediate transacting parties knowing about it. Why do you think the EU is hell-bent on limiting cash transactions and moving to a fully surveillant CBDC? It goes back to the classic adage of “criminals would prefer to use cash over crypto”. An ideal private settlement layer for stablecoins would restore this vanishing feature in a digital form.

But this shouldn’t be misrepresented as “dark money.” It should rather be viewed as contextual confidentiality, where counterparties can transact privately, but the necessary interacting institutions can gain visibility when appropriate.

Today, we can build fully private systems; the problem arises when we interact with legacy rails and try to spend using crypto. Once a wallet touches a KYC-ed off-ramp, that user is now technically doxed. The same goes for crypto cards/neobanks; in many cases, KYC is a requirement. This obstacle largely remains (at least on the consumer-facing side) as long as merchants don’t directly accept crypto.

Ideally, we would want to maintain full anonymity. But what if we could enable privacy and compliance simultaneously?

ZK-KYC & ZK AML as a Potential Solution

One possible solution we’ll use here as an example is ZK-KYC via membership proofs, together with ZK AML. The main primitive behind ZK-proven compliance is what’s known as a membership proof. Instead of sharing identity documents or revealing personal information, a user can prove that they belong to an approved set of entities without revealing which element in that set corresponds to them. Such a set could be a list of verified users, a licensed customer database, or a KYC registry.

When a user wants to transact, they generate a ZKP showing that their identity commitment is included in the registry. The proof reveals nothing else. This is functionally equivalent to performing KYC, but without creating honeypots of identity data and without broadcasting the identity-linked transaction publicly onchain.

Now, there are some caveats with this approach, the main one being scalability of the proof set. In a membership proof, the larger the set, the more your identity is diluted in a pool of potential decoys. This is actually what we want. The problem is that as the set grows, so does the length of the Merkle tree paths (logarithmically in the set size, for a balanced binary tree), which increases the proving time.
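To make the path-length point concrete, here is a minimal sketch of a plain Merkle inclusion check over a registry of identity commitments. It is not zero-knowledge: in a ZK-KYC system the same path check would run inside a proof circuit so that the leaf, index, and path stay hidden. The function names, the SHA-256 hash, and the placeholder commitments are assumptions for illustration and are not taken from any specific protocol.

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_levels(commitments: list[bytes]) -> list[list[bytes]]:
    """Return every tree level, leaves first, padded to a power of two."""
    level = [h(b"\x00" + c) for c in commitments]   # 0x00 prefix marks a leaf
    size = 1
    while size < len(level):
        size *= 2
    level += [h(b"\x02")] * (size - len(level))     # fixed padding leaves
    levels = [level]
    while len(level) > 1:
        # 0x01 prefix marks an interior node
        level = [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def prove(levels: list[list[bytes]], index: int) -> list[bytes]:
    """Collect the sibling hash at each level from leaf to root."""
    path = []
    for level in levels[:-1]:
        path.append(level[index ^ 1])
        index //= 2
    return path

def verify(root: bytes, commitment: bytes, index: int, path: list[bytes]) -> bool:
    """Recompute the root from a leaf and its sibling path."""
    node = h(b"\x00" + commitment)
    for sibling in path:
        if index % 2 == 0:
            node = h(b"\x01" + node + sibling)
        else:
            node = h(b"\x01" + sibling + node)
        index //= 2
    return node == root

# Hypothetical registries of identity commitments (placeholder bytes).
small = [f"user-{i}".encode() for i in range(8)]
large = [f"user-{i}".encode() for i in range(1024)]

small_levels, large_levels = build_levels(small), build_levels(large)
small_path, large_path = prove(small_levels, 5), prove(large_levels, 5)

print(len(small_path), len(large_path))  # path length grows with log2(set size)
print(verify(large_levels[-1][0], large[5], 5, large_path))
```

Growing the registry from 8 to 1,024 entries makes the anonymity set 128 times larger while the path only grows from 3 hashes to 10. The cost shows up in proving time because each extra level is another hash evaluated inside the circuit.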

ZK AML takes a slightly different approach, allowing users to prove facts about the transaction without revealing the transaction itself. This includes things like proving the recipient is not in a sanctions list without revealing the recipient, or proving the sender is not associated with flagged/blacklisted addresses, without revealing their entire transaction graph.

Unlike a membership proof, where the user is trying to prove that they belong to an approved set of entities, ZK AML takes the exact opposite approach, proving that neither they nor the recipient are part of a prohibited set.
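The source does not say how such an exclusion proof would be constructed. One common approach, sketched below in the clear, is a sorted Merkle tree: to show an address is not on the list, the prover exhibits two adjacent leaves that bracket it. In a ZK AML system this check would run inside a proof circuit so the address itself is never revealed. All names, the sentinel values, and the example addresses are hypothetical.

```python
import hashlib
from bisect import bisect_left

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_levels(items: list[bytes]) -> list[list[bytes]]:
    level = [h(b"\x00" + x) for x in items]
    size = 1
    while size < len(level):
        size *= 2
    level += [h(b"\x02")] * (size - len(level))     # padding leaves
    levels = [level]
    while len(level) > 1:
        level = [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels

def prove(levels, index):
    path = []
    for level in levels[:-1]:
        path.append(level[index ^ 1])
        index //= 2
    return path

def verify(root, item, index, path):
    node = h(b"\x00" + item)
    for sibling in path:
        node = h(b"\x01" + node + sibling) if index % 2 == 0 else h(b"\x01" + sibling + node)
        index //= 2
    return node == root

# Sentinels guarantee every real address has a neighbor on both sides.
LOW, HIGH = b"", b"\xff" * 33

def prove_absent(levels, sorted_items, target):
    """Return the two adjacent leaves that bracket target, or None if it is listed."""
    i = bisect_left(sorted_items, target)
    if sorted_items[i] == target:
        return None
    lo, hi = sorted_items[i - 1], sorted_items[i]
    return (i - 1, lo, prove(levels, i - 1), hi, prove(levels, i))

def verify_absent(root, target, proof):
    lo_index, lo, lo_path, hi, hi_path = proof
    return (lo < target < hi
            and verify(root, lo, lo_index, lo_path)
            and verify(root, hi, lo_index + 1, hi_path))   # must be the next leaf

# Hypothetical sanctions list and addresses.
sanctioned = sorted([LOW, HIGH, b"addr-bad-1", b"addr-bad-2", b"addr-bad-3"])
levels = build_levels(sanctioned)
root = levels[-1][0]

clean_proof = prove_absent(levels, sanctioned, b"addr-ok-9")
print(verify_absent(root, b"addr-ok-9", clean_proof))
print(prove_absent(levels, sanctioned, b"addr-bad-2"))
```

A listed address has no valid bracketing pair, so `prove_absent` returns `None` for it, and reusing someone else's proof for a listed address fails the ordering check.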

Embedded Privacy as the Default

This shouldn’t be a controversial take by now. Privacy should always be assumed as the default. But for some reason, when it comes to crypto, it’s usually seen as a nonstarter. Protocols usually launch with the privacy component intended as a future add-on rather than a default standard.

Also why privacy on a public chain is improbable. Doesn’t matter if a pool is private if every on-/off-ramp, vault, and card service isn’t.

And even when the individual systems are private, if they aren’t made with the same tech, the boundaries between them still leak all info.

— Lyron (@lyronctk) December 5, 2025

As privacy continues to erode, the demand for it naturally increases. It’s almost like a self-calibrating equilibrium. People will trade off privacy for convenience until it becomes uncomfortable to do so. In many cases, the demand for privacy returns once the discussion turns to payments and personal finance.

A merchant cannot run millions in daily stablecoin settlements if every competitor can parse their volumes. A global payroll platform cannot expose employee salaries. Even consumer payments become uncomfortable when the coffee shop can see your entire financial history. If Venmo’s default public feed comes off as invasive, why would any consumer be okay with sharing the rest of their financial profile?

The fact of the matter is that without privacy, stablecoins eventually hit an adoption ceiling. Without universal private settlement rails, it’s unlikely that stablecoins will cross the chasm into the mainstream, regardless of their recent success. Universal is the key term here. There are plenty of privacy-focused protocols, but nearly all of them are siloed environments or too niche for the general public to know or care about.

Over the last few years, we’ve made significant progress as it relates to ZK and other privacy tech. The next step would be to embed those in a way where, for example, sending a private payment would be indistinguishable from sending a normal payment. Users should not be required to know what a “shielded pool” or “viewing key” is. Instead, all the technical jargon should be abstracted behind a smooth UX. This means things like instant proofs, predictable fees, and automatic selective disclosure.

Looking forward, we can expect privacy to become a UX standard embedded in applications and at the infrastructure level. If users and institutions demand it, protocols will be required to uphold it as a default feature. We’re already seeing this today with protocols like Payy and Seismic.

Solana Upgrades & Progress

In the last Year Ahead Report, we mentioned how users were moving toward general-purpose chains with superior UX and throughput. This generally remains the case with Solana. However, with the advent of Hyperliquid, obvious concerns have arisen around maintaining that dominance, as Solana’s entire moat is centered around becoming the decentralized version of Nasdaq.

Solana core devs should see these are huge warning signals –

1. Phantom picking Hyperliquid

2. Hype REV flipping SOL REV

They need to ship more than they’ve ever shipped before

— StrategicHash (@StrategicHash) July 11, 2025

Back then, we raised this exact question of whether Solana could maintain its lead in an era where high-throughput chains became the standard. The answer was simple: increase bandwidth, reduce latency. And it has never been more apparent that now is the time to double down on this approach.

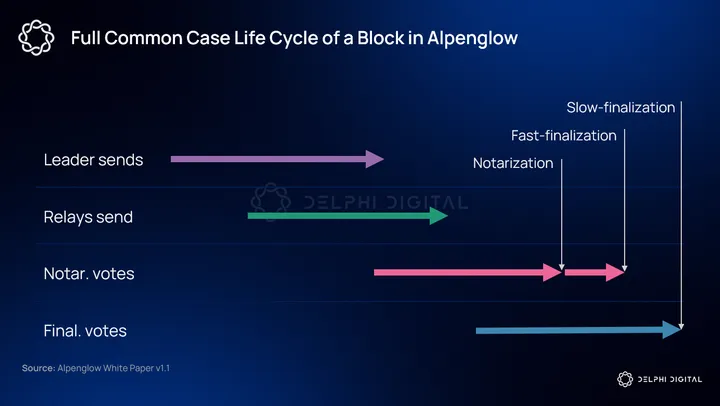

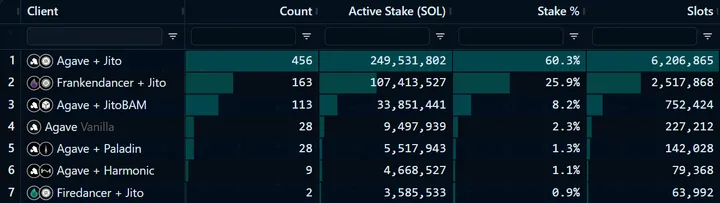

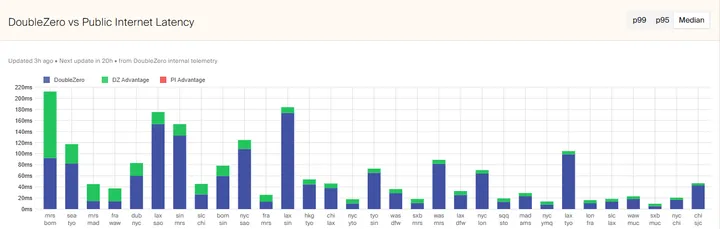

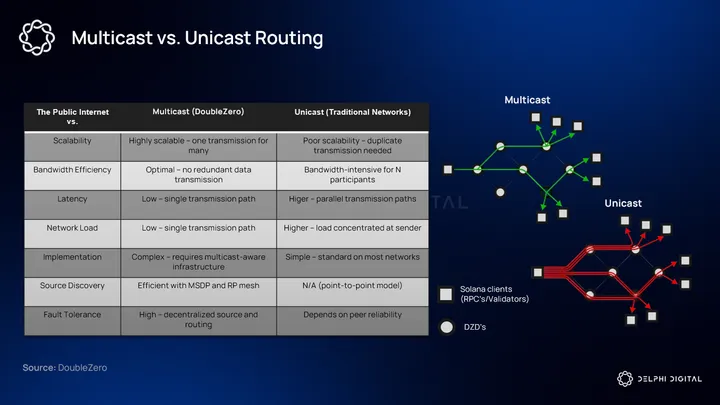

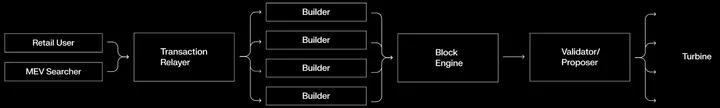

Solana’s year-ahead roadmap is about transforming it into an exchange-grade environment where a native on-chain CLOB can viably compete with CEX latency, liquidity depth, and fairness.